Marrying Vega and Zen: The AMD Ryzen 5 2400G Review

by Ian Cutress on February 12, 2018 9:00 AM EST

Benchmarking Performance: CPU Rendering Tests

Rendering tests are a long-time favorite of reviewers and benchmarkers, as the code used by rendering packages is usually highly optimized to squeeze out every last bit of performance. Sometimes rendering programs end up being heavily memory dependent as well: when you have that many threads flying about with a ton of data, low latency memory can be key to everything. Here we run a few of the usual rendering packages under Windows 10, as well as a few interesting new benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

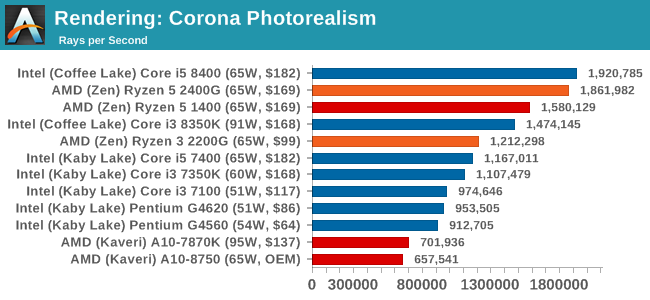

Corona 1.3

Corona is a standalone package designed to assist software like 3ds Max and Maya with photorealism via ray tracing. It's simple: shoot rays, get pixels. OK, it's more complicated than that, but the benchmark renders a fixed scene six times and reports results in terms of both time and rays per second. The official benchmark tables list user-submitted results in terms of time; however, I feel rays per second is a better metric (in general, scores where higher is better seem to be easier to explain anyway). Corona likes to pile on the threads, so the results end up strongly staggered by thread count.
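To see why the two metrics carry the same information, here is a minimal Python sketch that converts a render time into a throughput figure. It assumes every run traces the same fixed number of rays, which is a simplification; the ray count and the two timings below are hypothetical placeholders, not Corona output or measured results.

```python
def rays_per_second(total_rays: float, render_seconds: float) -> float:
    """Convert a fixed-scene render time into a throughput score (higher is better)."""
    if render_seconds <= 0:
        raise ValueError("render time must be positive")
    return total_rays / render_seconds


# Hypothetical numbers for illustration only; not measured results.
TOTAL_RAYS = 1.2e9  # assumed fixed ray budget for the scene
for label, seconds in [("chip A", 300.0), ("chip B", 150.0)]:
    print(f"{label}: {rays_per_second(TOTAL_RAYS, seconds):,.0f} rays/s")
```

Because throughput is simply the fixed ray count divided by time, halving the render time doubles the rays per second: the ranking of chips is unchanged, and only the direction of "better" flips.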

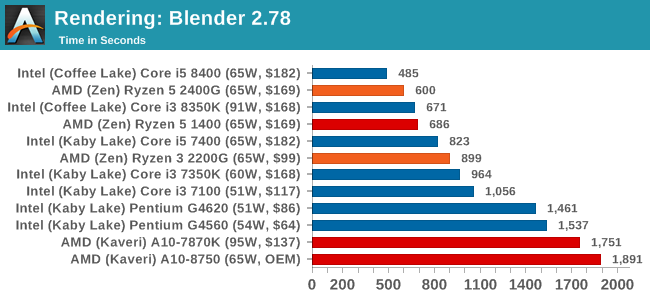

Blender 2.78

For a renderer that has been around for what seems like ages, Blender is still a highly popular tool. We managed to wrap up a standard workload into the February 5 nightly build of Blender, and we measure the time it takes to render the first frame of the scene. As one of the bigger open source tools out there, Blender sees both AMD and Intel working actively to help improve the codebase, for better or for worse on their own or each other's microarchitectures.
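A minimal sketch of the "time to render the first frame" approach, timing a command-line render from Python. This is not our actual test harness: `time_command` is a hypothetical helper, and the scene path in the comment is a placeholder. Blender's `-b` flag runs it in the background without the UI, and `-f 1` renders frame 1 of the .blend file given before it. The demo line times a trivial Python process instead, so the sketch runs without Blender installed.

```python
import subprocess
import sys
import time
from typing import Sequence


def time_command(cmd: Sequence[str]) -> float:
    """Run a command to completion and return its wall-clock time in seconds."""
    start = time.perf_counter()
    # check=True raises CalledProcessError if the command fails.
    subprocess.run(cmd, check=True, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
    return time.perf_counter() - start


# Hypothetical usage against a real install (scene path is an assumption):
#   time_command(["blender", "-b", "scene.blend", "-f", "1"])
# Stand-in command so the sketch runs anywhere:
print(f"demo run: {time_command([sys.executable, '-c', 'pass']):.3f} s")
```

Timing the whole process this way includes Blender's startup and scene loading on top of the render itself.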

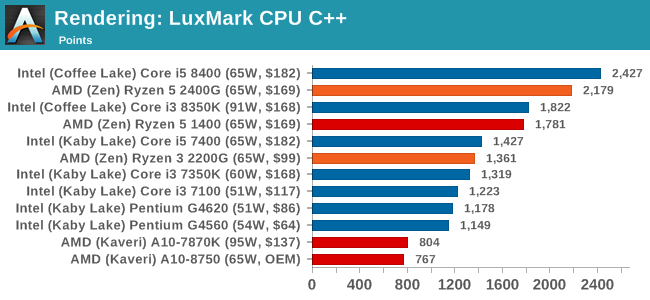

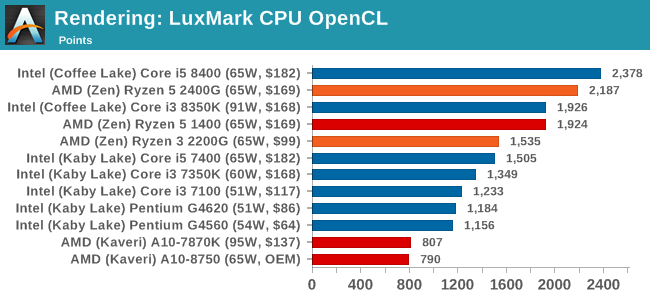

LuxMark v3.1

As a synthetic test, LuxMark might come across as a somewhat arbitrary renderer, given that it's mainly used to test GPUs, but it does offer both an OpenCL and a standard C++ mode. In this instance, aside from comparing cores and IPC within each coding mode, we also get to see the difference in performance moving from a C++ based code stack to an OpenCL one with a CPU as the main host.
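The C++ versus OpenCL comparison boils down to a percentage change between two scores on the same CPU. A minimal sketch follows; `percent_change` is a hypothetical helper, and the scores are placeholders standing in for real LuxMark results.

```python
def percent_change(baseline: float, new: float) -> float:
    """Percentage change from a baseline score to a new one (higher is better)."""
    if baseline <= 0:
        raise ValueError("baseline score must be positive")
    return (new - baseline) / baseline * 100.0


# Hypothetical scores for one CPU in each LuxMark mode; not measured data.
cpp_score, opencl_score = 2000.0, 2500.0
print(f"C++ to OpenCL: {percent_change(cpp_score, opencl_score):+.1f}%")
```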

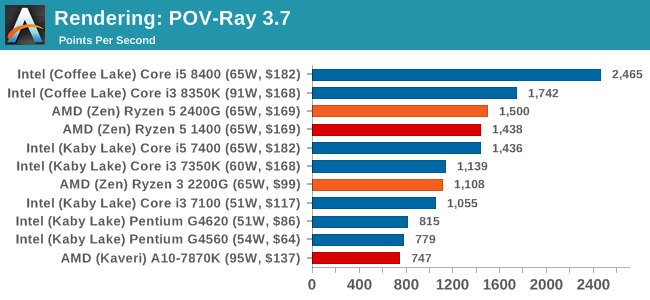

POV-Ray 3.7.1b4

Another regular benchmark in most suites, POV-Ray is a ray-tracer that has been around for many years. It just so happens that during the run-up to AMD's Ryzen launch, the code base became active again, with developers making changes to the code and pushing out updates. Our version and benchmarking started just before that was happening, but given time we will see where the POV-Ray code ends up and adjust in due course.

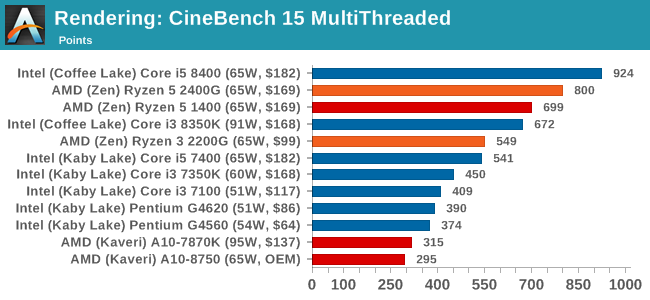

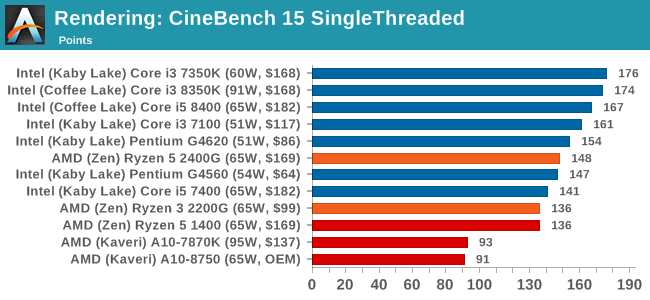

Cinebench R15

The latest version of Cinebench has also become one of those 'used everywhere' benchmarks, particularly as an indicator of single thread performance. High IPC and high frequency give performance in the single-threaded (ST) test, whereas good multi-core scaling and a high core count are what win out in the multi-threaded (MT) test.
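As a rough first-order model, and not how Cinebench itself computes its scores, single-thread performance scales with IPC times frequency, while multi-thread performance additionally multiplies by core count and some parallel efficiency. The sketch below encodes that assumption. The chip parameters and the 0.9 scaling factor are hypothetical, and it holds clocks constant between ST and MT, which real chips with lower all-core turbo do not.

```python
def st_score(ipc: float, freq_ghz: float) -> float:
    """First-order single-thread model: instructions per clock times clock rate."""
    return ipc * freq_ghz


def mt_score(ipc: float, freq_ghz: float, cores: int, scaling: float) -> float:
    """First-order multi-thread model.

    `scaling` is an assumed parallel efficiency between 0 and 1, where 1.0
    means each extra core adds a full core's worth of throughput.
    """
    return st_score(ipc, freq_ghz) * cores * scaling


# Hypothetical chips: (IPC relative to a baseline, clock in GHz, core count).
chips = {"quad-core, high clock": (1.0, 4.2, 4), "six-core, lower clock": (1.0, 3.6, 6)}
for name, (ipc, ghz, n) in chips.items():
    print(f"{name}: ST {st_score(ipc, ghz):.2f}, MT {mt_score(ipc, ghz, n, 0.9):.2f}")
```

Under these made-up numbers the higher-clocked quad-core wins the ST test while the six-core wins the MT test, which is the split the two Cinebench runs are meant to expose.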

Conclusions on Rendering: It is clear from these graphs that most rendering tools need full cores, rather than extra threads, to get the best performance. The exception is Cinebench.
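One way to put a number on "full cores rather than threads" is to compare the average gain per added hardware thread when the extra threads come from SMT versus from extra physical cores. The helper `gain_per_added_thread` and all scores below are hypothetical, chosen only to illustrate the calculation. Real chip pairs, such as a 4C/8T part against a 4C/4T part, usually differ in clocks as well, so the SMT effect is rarely isolated this cleanly.

```python
def gain_per_added_thread(base_score: float, base_threads: int,
                          new_score: float, new_threads: int) -> float:
    """Average fractional score gain contributed by each added hardware thread."""
    added = new_threads - base_threads
    if added <= 0:
        raise ValueError("new configuration must have more threads")
    if base_score <= 0:
        raise ValueError("base score must be positive")
    return (new_score - base_score) / base_score / added


# Hypothetical scores, normalized to a 4-core/4-thread baseline of 100.
baseline = 100.0
smt = gain_per_added_thread(baseline, 4, 125.0, 8)    # 4C/4T -> 4C/8T
cores = gain_per_added_thread(baseline, 4, 145.0, 6)  # 4C/4T -> 6C/6T
print(f"per SMT thread: {smt:.1%}, per extra core: {cores:.1%}")
```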

177 Comments


nevcairiel - Tuesday, February 13, 2018

Some more realistic gaming settings might be nice. No one is going to play on settings that result in ~20 fps, and the GPU/CPU scaling can tilt quite a bit if you reduce the settings. I can see why you might not like it, because it takes the focus away from the GPU a bit and makes comparisons against a dGPU harder (unless you run it on the exact same hardware, which might mean you have to re-run it every time), but this is a combined product, so testing both against other iGPU products would be useful info.

atatassault - Tuesday, February 13, 2018

20 FPS is playable. I have a 2-in-1 with a Skylake i3-6100U, and 20 FPS is what it gets in Skyrim. Any notion of things being "unplayable" under 30/60 FPS is like an audiophile saying songs are unlistenable on speakers less than $10,000.

lmcd - Tuesday, February 13, 2018

Any notion of things being "unplayable" under 30/60 FPS is like an audiophile saying songs are unlistenable on speakers less than $100.

Fixed it for you (FIFY).

nevcairiel - Thursday, February 15, 2018

I'd rather reduce settings a bit to go up in FPS than look at a 20 fps average. There are often many things one can turn off without a huge visual impact to achieve much better performance.

29a - Saturday, October 26, 2019

What a useless review. I came here to see if this thing can do some low end gaming and you didn't even test on 720p.

Gideon - Tuesday, February 13, 2018

Yes sorry, I didn't mean to nitpick. Being a web developer myself, dealing mostly with frontend code, I just wanted to mention that Speedometer is actually considered fairly representative by both Mozilla and Google (and true enough, the frameworks it uses are actual frontend JS frameworks rendering TodoMVC). If you are already aware of that, then that's excellent.

richardginn - Monday, February 12, 2018

An article looking at how memory speed affects FPS on the 2400G and 2200G is a must. I say you can game at 1080p with this, although it looks like for a bunch of games you will be on low settings.

stanleyipkiss - Monday, February 12, 2018

Check out Hardware Unboxed's review on YouTube. They did just that.

beginner99 - Tuesday, February 13, 2018

Yeah, this review should have used medium or low settings, something that is actually playable on the CPUs tested. 25 fps might work for Civ6 but not a shooter.

iter - Monday, February 12, 2018

Not too shabby: 2-3x the iGPU perf of Intel and comparable CPU perf in the same price range. And it will likely pull ahead even further in the coming weeks as faster memory becomes supported.