The AnandTech Coffee Lake Review: Initial Numbers on the Core i7-8700K and Core i5-8400

by Ian Cutress on October 5, 2017 9:00 AM EST- Posted in

- CPUs

- Intel

- Core i5

- Core i7

- Core i3

- 14nm

- Coffee Lake

- 14++

- Hex-Core

- Hyperthreading

Silicon and Process Nodes: 14++

Although Intel was somewhat reserved in our pre-briefing, initially labeling the process node for these chips simply as ‘14nm’, we can confirm that Intel’s newest ‘14++’ manufacturing process is being used for these 8th Generation processors. This is Intel’s third crack at a 14nm process, following Broadwell through Skylake (14), Kaby Lake (14+), and now Coffee Lake (14++).

With the 8th Generation of processors, Intel is moving away from having the generation number correlate with both the process node and the microarchitecture. As Intel’s process-shrink timelines have stretched out, the company has decided to use multiple process nodes and microarchitectures within a single generation of products, so that every update cycle pairs each market with the process node and microarchitecture Intel feels best suits it. Much of this comes down to product maturity, yields, and progress on the manufacturing side.

Intel's Core Architecture Cadence (8/20)

| Core Generation | Microarchitecture | Process Node | Release Year |
|---|---|---|---|
| 2nd | Sandy Bridge | 32nm | 2011 |
| 3rd | Ivy Bridge | 22nm | 2012 |
| 4th | Haswell | 22nm | 2013 |
| 5th | Broadwell | 14nm | 2014 |
| 6th | Skylake | 14nm | 2015 |
| 7th | Kaby Lake | 14nm+ | 2016 |
| 8th | Kaby Lake Refresh | 14nm+ | 2017 |
| 8th | Coffee Lake | 14nm++ | 2017 |
| 8th | Cannon Lake | 10nm | 2018? |
| 9th | Ice Lake? ... | 10nm+ | 2018? |
| Unknown | Cascade Lake (Server) | ? | ? |

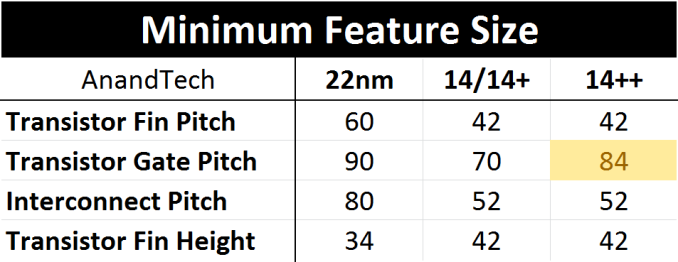

Kaby Lake was advertised as using a 14+ node with slightly relaxed manufacturing parameters and a new FinFET profile. This was to allow for higher frequencies and better overclocking, although nothing was fundamentally changed in the core manufacturing parameters. With Coffee Lake at least, the minimum gate pitch has increased from 70nm to 84nm, with all other features being equal.

Increased gate pitch moves transistors further apart, forcing a lower current density. This allows for higher-leakage transistors, meaning higher peak power and higher frequency at the expense of die area and idle power.

Normally Intel aims to improve its process every generation; however, this seems like a step ‘back’ in some metrics in order to gain performance. The truth of the matter is that back in 2015, we were expecting Intel to be selling 10nm processors en masse by now. As delays have crept into that timeline, the 14++ node is holding the line until 10nm is on track. Intel has already stated that 10+ is likely to be the first 10nm node on the desktop, which, given the track record of 14+ and 14++, might be a relaxed version of 10nm with some minor updates in order to hit performance/power/yield targets. Conceptually, Intel seems to be drifting towards separate low-power and high-performance process nodes, with the former coming first.

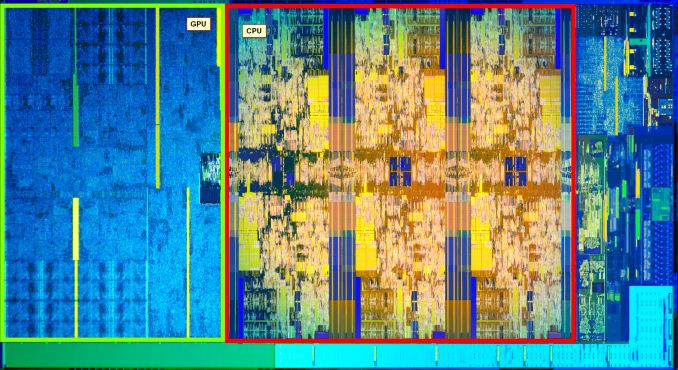

Of course, changing the gate pitch is expected to increase the die area. With thanks to HEKPC (via Videocardz), we can already see a six-core i7-8700K silicon die compared with a quad-core i7-7700K.

The die area of the Coffee Lake 6+2 design (six cores and GT2 graphics) sits at ~151 mm², compared to ~125 mm² for the Kaby Lake 4+2 processor: a 26 mm² increase. This increase is mainly due to the two extra cores; however, there is also a minor adjustment to the integrated graphics to support HDCP 2.2, not to mention any unpublished changes Intel has made to its designs between Kaby Lake and Coffee Lake.

The following calculations are built on assumptions and contain a margin of error.

With the silicon floor plan, we can calculate that the CPU cores (plus cache) account for 47.3% of the die, or 71.35 mm². Dividing by six gives 11.9 mm² per core, so two cores take 23.8 mm² of die area. Of the 26 mm² increase, then, 91.5% is CPU area, and the rest likely accounts for the change in gate pitch across the whole processor.
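The arithmetic in this paragraph can be reproduced with a short Python sketch. It takes the approximate figures from the text as inputs (the ~151 mm² and ~125 mm² dies and 71.35 mm² of cores plus cache) and, like the paragraph, assumes every core and its slice of cache occupies the same area. The function name `core_area_breakdown` is hypothetical, chosen for illustration only.

```python
# Figures taken from the text; both die areas are approximate measurements.
DIE_AREA_6P2 = 151.0      # mm^2, Coffee Lake 6+2 (i7-8700K)
DIE_AREA_4P2_KBL = 125.0  # mm^2, Kaby Lake 4+2 (i7-7700K)
CPU_AREA = 71.35          # mm^2, cores plus cache from the floor plan
CORES = 6


def core_area_breakdown(die_area, cpu_area, cores, baseline_area):
    """Return (cpu_share, per_core, two_core, share_of_increase)."""
    cpu_share = cpu_area / die_area       # fraction of the die used by cores + cache
    per_core = cpu_area / cores           # assumption: every core (+ cache slice) is the same size
    two_core = 2 * per_core               # area added by the two extra cores
    increase = die_area - baseline_area   # total growth over the Kaby Lake 4+2 die
    return cpu_share, per_core, two_core, two_core / increase


cpu_share, per_core, two_core, share = core_area_breakdown(
    DIE_AREA_6P2, CPU_AREA, CORES, DIE_AREA_4P2_KBL
)
print(f"CPU share of die:  {cpu_share:.1%}")     # 47.3%
print(f"Per core:          {per_core:.2f} mm^2")  # 11.89 mm^2
print(f"Two cores:         {two_core:.2f} mm^2")  # 23.78 mm^2
print(f"Share of increase: {share:.1%}")          # 91.5%
```

Small differences from the rounded figures in the prose (11.9 and 23.8 mm²) come only from rounding.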

The Coffee Lake 4+2 die would then be expected to measure ~127 mm², a 2 mm² increase over the equivalent Kaby Lake 4+2, although this is well within the margin of error for measuring these processors. We are expecting to see some overclockers delid the quad-core processors soon after launch.
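The projected quad-core figure follows from removing two cores' worth of area from the 6+2 die. This hypothetical sketch uses the rounded 11.9 mm² per-core estimate from the text and assumes nothing else on the floor plan changes, which is the same simplification behind the ~127 mm² estimate.

```python
DIE_AREA_6P2 = 151.0      # mm^2, Coffee Lake 6+2 (approximate, from the text)
DIE_AREA_4P2_KBL = 125.0  # mm^2, Kaby Lake 4+2 (approximate, from the text)
AREA_PER_CORE = 11.9      # mm^2 per core including its cache, estimated in the text


def project_die_area(die_area, area_per_core, cores_removed):
    """Estimate a cut-down die by removing whole cores.

    Assumes the rest of the floor plan (GPU, uncore, I/O) is unchanged.
    """
    return die_area - cores_removed * area_per_core


cfl_4p2 = project_die_area(DIE_AREA_6P2, AREA_PER_CORE, cores_removed=2)
print(f"Projected Coffee Lake 4+2: {cfl_4p2:.1f} mm^2")                       # 127.2 mm^2
print(f"Increase over Kaby Lake 4+2: {cfl_4p2 - DIE_AREA_4P2_KBL:.1f} mm^2")  # 2.2 mm^2
```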

In previous Intel silicon designs, when Intel was ramping up its integrated graphics, more than 50% of the die area was dedicated to graphics. In this 6+2 design, the GPU accounts for only 30.2% of the floor plan as provided, or 45.6 mm² of the full die.
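To set the GPU share alongside the core share, the sketch below splits the ~151 mm² die using the two floor-plan percentages quoted in the text (47.3% for cores plus cache, 30.2% for the GPU). The "everything else" bucket is simply the remainder and lumps together all non-core, non-GPU blocks; because the inputs are rounded floor-plan estimates, each result carries the same margin of error.

```python
DIE_AREA_6P2 = 151.0  # mm^2, approximate Coffee Lake 6+2 die size from the text
SHARES = {
    "CPU cores + cache": 0.473,  # rounded floor-plan share from the text
    "GPU (GT2)": 0.302,          # floor-plan share from the text
}


def area_breakdown(die_area, shares):
    """Convert floor-plan fractions into areas; the remainder is everything unlabeled."""
    areas = {name: die_area * frac for name, frac in shares.items()}
    areas["everything else"] = die_area * (1.0 - sum(shares.values()))
    return areas


for name, area in area_breakdown(DIE_AREA_6P2, SHARES).items():
    print(f"{name:>18}: {area:5.1f} mm^2 ({area / DIE_AREA_6P2:.1%})")
```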

Memory Support on Coffee Lake

With a new processor generation comes an update to memory support. There is always a small amount of confusion here about what Intel calls ‘official memory support’ and what the processors can actually run. Intel’s official memory support is effectively a guarantee that, in all circumstances and with all processors, this memory speed will work. However, motherboard manufacturers might list speeds over 50% higher in their specification sheets, which Intel technically counts as an overclock.

This is usually seen as Intel being conservative, leaving its processors plenty of headroom in order to avoid RMAs and maintain stability. In most cases this is a good thing: there are only a few niche scenarios where very high-speed memory equates to tangible performance gains,* but they do exist.

*Based on previous experience, but pending a memory scaling review

For our testing at least, our philosophy is to test at the CPU manufacturer’s recommended settings. If there is a performance gain to be had from slightly faster memory, then it pays dividends for the manufacturer to set that speed as its official memory support. This way, there is no argument about what the rated performance of the processor is.

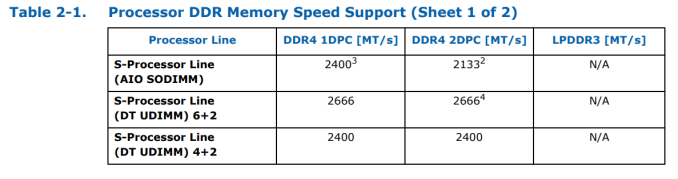

For the new generation, Intel is supporting DDR4-2666 for the six-core parts and DDR4-2400 for the quad-core parts, in both 1DPC (one DIMM per channel) and 2DPC modes. This keeps things relatively simple compared with AMD, whose memory support differs by DPC and type of memory.

Things stay simple until we get to AIO (all-in-one) designs using these processors, which typically require SODIMM memory. For these parts, both quad-core and hex-core, Intel supports DDR4-2400 at 1DPC and DDR4-2133 at 2DPC. LPDDR3 support is dropped entirely. The reduced memory frequency in an AIO environment with SODIMMs is because these motherboards typically route their traces as a chain between the memory slots, rather than using a T-Topology, which helps with timing synchronization. Intel has made T-Topology part of the specification for desktop motherboards, but not for AIO or integrated ones, which explains the difference in DRAM speed support.
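The official support matrix described in the two paragraphs above is small enough to capture as a lookup table. The sketch below is a hypothetical helper: the form-factor labels (`"desktop"`, `"aio_sodimm"`) and the function name are illustrative, not Intel's. It only encodes the speeds quoted in the text at JEDEC timings; anything faster would count as an overclock in Intel's terms.

```python
from typing import Literal

FormFactor = Literal["desktop", "aio_sodimm"]  # hypothetical labels

# (form factor, core count, DIMMs per channel) -> official DDR4 data rate in MT/s,
# as quoted in the text for Coffee Lake.
OFFICIAL_DDR4 = {
    ("desktop", 6, 1): 2666,
    ("desktop", 6, 2): 2666,
    ("desktop", 4, 1): 2400,
    ("desktop", 4, 2): 2400,
    ("aio_sodimm", 6, 1): 2400,
    ("aio_sodimm", 6, 2): 2133,
    ("aio_sodimm", 4, 1): 2400,
    ("aio_sodimm", 4, 2): 2133,
}


def official_ddr4_speed(form_factor: FormFactor, cores: int, dimms_per_channel: int) -> int:
    """Look up the official memory speed; raise if the text gives no figure."""
    try:
        return OFFICIAL_DDR4[(form_factor, cores, dimms_per_channel)]
    except KeyError:
        raise ValueError(
            f"no official figure for {form_factor}, {cores} cores, {dimms_per_channel}DPC"
        ) from None


print(official_ddr4_speed("desktop", 6, 2))     # 2666
print(official_ddr4_speed("aio_sodimm", 6, 2))  # 2133
```

A flat dictionary keeps every supported combination explicit, so an unsupported configuration fails loudly instead of silently falling back to a default speed.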

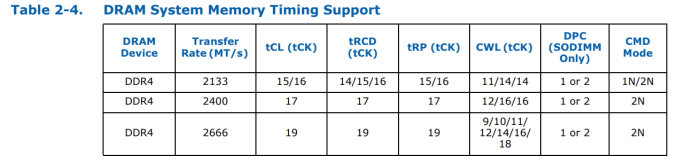

These supported frequencies follow JEDEC official sub-timings. Experienced system builders will be used to DDR4-2133 at a CAS Latency of 15, but as the speed of the modules increases, the latency (in clock cycles) increases to compensate:

Intel’s official sub-timing support at DDR4-2666 is 19-19-19. Outside of enterprise modules, such memory is hardly sold, because memory manufacturers seem able to produce DDR4-2666 16-17-17 modules fairly easily, and these processors are typically fine with those sub-timings. CPU manufacturers typically only state ‘supported frequency at JEDEC sub-timings’ and do not go into sub-timing discussions, because most users care more about the memory frequency. If time permits, it would be interesting to see just how much of a performance deficit the official JEDEC sub-timings incur compared with the memory that is actually on sale.
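The latency increase mentioned above is counted in clock cycles. Converting to nanoseconds shows how little the absolute CAS latency changes: DDR transfers data on both clock edges, so one cycle lasts 2000 / (data rate in MT/s) nanoseconds. The sketch below uses nominal data rates (2666 rather than the exact 2666.67 MT/s) and covers CAS latency only, not tRCD or tRP; the module labels are illustrative.

```python
def cas_latency_ns(data_rate_mts: float, cl_cycles: int) -> float:
    """First-word CAS latency in nanoseconds.

    DDR moves data on both clock edges, so the memory clock runs at
    data_rate / 2 MHz and one clock cycle lasts 2000 / data_rate ns.
    """
    return cl_cycles * 2000.0 / data_rate_mts


# (label, nominal data rate in MT/s, CAS latency in cycles), values from the text
MODULES = [
    ("DDR4-2133 CL15 (JEDEC)", 2133, 15),
    ("DDR4-2666 CL19 (JEDEC)", 2666, 19),
    ("DDR4-2666 CL16 (retail)", 2666, 16),
]

for label, rate, cl in MODULES:
    print(f"{label}: {cas_latency_ns(rate, cl):.2f} ns")
```

By this measure, the JEDEC DDR4-2666 CL19 timing (about 14.25 ns) lands slightly above DDR4-2133 CL15 (about 14.06 ns), while a retail CL16 kit at the same frequency is roughly 2 ns quicker to first word (about 12.00 ns).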

222 Comments


xchaotic - Monday, October 9, 2017

Well yeah, but even with non-HT i5 and i3, you still have plenty of cores to work with. Even if the OS (or a background task - say Windows Defender?) takes up a thread, you still have other cores for your game engine.

nierd - Monday, October 9, 2017

Do we? I've yet to see a good benchmark that measures task switching and multiple workloads - they measure 'program a' that is bad at using cores - and 'program b' that is good at using cores.

In today's reality - few people are going to need maximum single program performance. Outside of very specific types of workloads (render farming or complex simulations for science) please show me the person that is just focused on a single program. I want to see side by side how these chips square off when you have multiple competing workloads that force the scheduler to balance tasks and do multiple context shifting etc. We used to see benchmarks back in the day (single core days) where they'd do things like run a program designed to completely trash the predictive cache so we'd see 'worst case' performance, and things that would stress a CPU. Now we run a benchmark suite that shows you how fast handbrake runs *if it's the only thing you run*.

mapesdhs - Tuesday, October 10, 2017

I wonder if there's pressure never to test systems in that kind of real-world manner, perhaps the results would not be pretty. Not so much a damnation of the CPU, rather a reflection of the OS. :D Windows has never been that good at this sort of thing.

boeush - Monday, October 9, 2017

An *intelligent* OS thread scheduler would group low-demand/low-priority threads together, to multitask on one or two cores, while placing high-priority and high-CPU-utilization threads on respective dedicated cores. This would maximize performance and avoid trashing the cache, where and when it actually matters.

If Windows 10 makes consistent single-thread performance hard to obtain, then the testing is revealing a fundamental problem (really, a BUG) with the OS' scheduler - not a flaw in benchmarking methodology...

samer1970 - Monday, October 9, 2017

I fail to understand how you guys review a CPU meant for overclocking and only put non-OC results in your tables? If I wanted the i7 8700K without overclocking I would pick up the i7 8700 and save $200 on both cooling and a cheaper motherboard. And the i7 8700 can turbo all 6 cores to 4.3GHz just like the i7 8700K.

someonesomewherelse - Saturday, October 14, 2017

Classic Intel, can't they make a chipset/socket with extra power pins so it would last for at least a few CPU generations?

Gastec - Saturday, October 14, 2017

I'm getting lost in all these CPU releases this year, it feels like there is a new CPU coming out every 2 months. Don't get me wrong, I like to have many choices but this is pathetic really. Someone is really desperate for more money.

zodiacfml - Sunday, October 15, 2017

The i3!

lordken - Saturday, October 28, 2017

Can't you make bars for AMD CPUs red in graphs? It's crap to search for them if all lines are black (at least the 7700K was highlighted in some).

A bit disappointed, not a single word of Ryzen/AMD on the summary page, you compare only to Intel CPUs? How come?

why only 1400 in civ AI test and not any R7/5 CPUs?

Also I would expect you to hammer Intel a bit more on that not-so-same socket crap.

Ritska - Friday, November 3, 2017

Why is the 6800K faster than the 7700K and 8700K in gaming? Is it worth buying if I can get one for $300?