The AnandTech Coffee Lake Review: Initial Numbers on the Core i7-8700K and Core i5-8400

by Ian Cutress on October 5, 2017 9:00 AM EST. Posted in: CPUs, Intel, Core i5, Core i7, Core i3, 14nm, Coffee Lake, 14++, Hex-Core, Hyperthreading

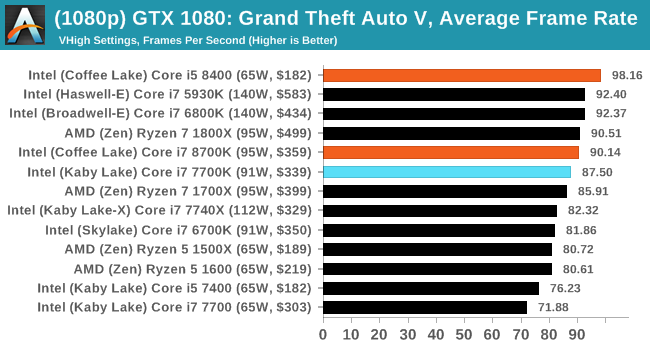

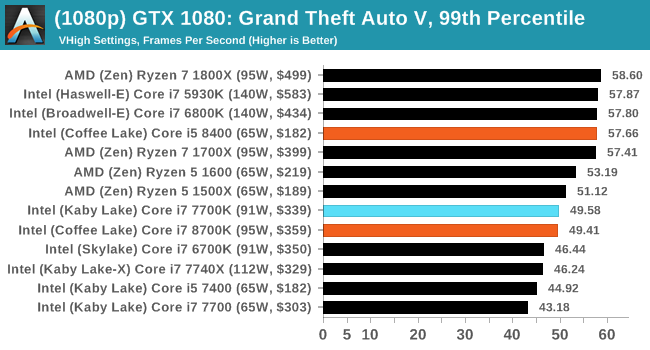

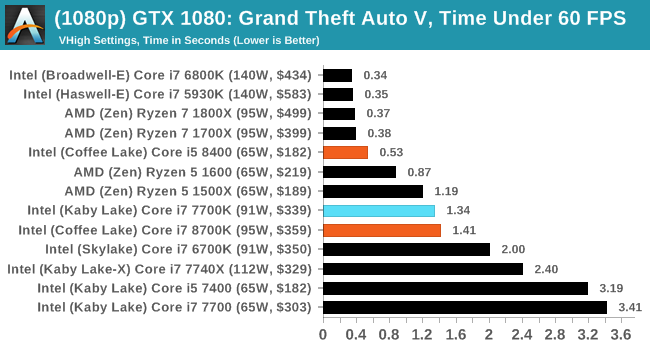

Grand Theft Auto V

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and pushes even the most powerful systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, cranking the settings up to maximum creates stunning visuals but hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet, an inner-city drive-by through several intersections, and finally ramming a tanker that explodes, causing other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully spits out frame time data.

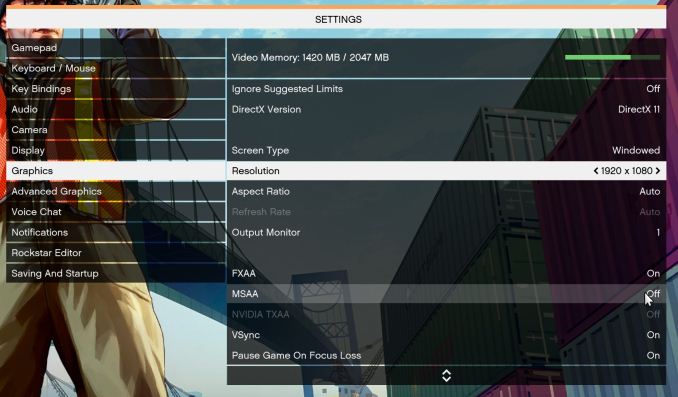

There are no presets for the graphics options in GTA. Instead, the user adjusts options such as population density and distance scaling on sliders, while others such as texture/shadow/shader/water quality range from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy option at the top which shows how much video memory the options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication if you have a low-end GPU with lots of GPU memory, like an R7 240 4GB).

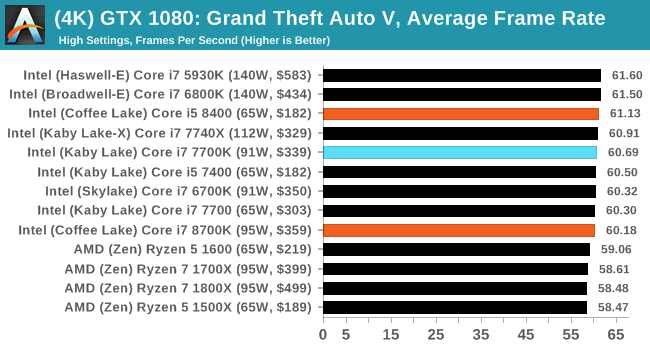

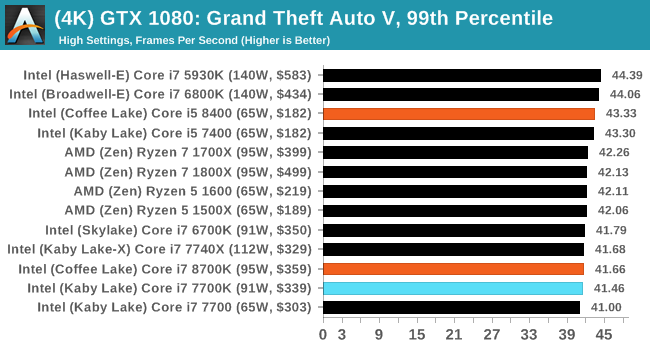

To that end, we run the benchmark at 1920x1080 using an average of Very High on the settings, and also at 4K using High on most of them. We take the average results of four runs, reporting frame rate averages, 99th percentiles, and our time under analysis.
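
As a rough illustration of how the three reported metrics could be derived from per-frame time data, here is a minimal Python sketch. It is not our actual tooling: the function names (`percentile`, `summarize_run`, `summarize_runs`), the 60 FPS threshold, the linear-interpolation percentile, and the reading of "time under" as the share of run time spent on frames slower than the threshold are all assumptions made for the example.

```python
from statistics import mean


def percentile(values, pct):
    """Return the pct-th percentile, interpolating linearly between ranks."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("need at least one value")
    rank = (len(ordered) - 1) * pct / 100.0
    lower = int(rank)
    upper = min(lower + 1, len(ordered) - 1)
    return ordered[lower] + (ordered[upper] - ordered[lower]) * (rank - lower)


def summarize_run(frame_times_ms, fps_threshold=60.0):
    """Summarize one benchmark run given its frame times in milliseconds."""
    total_ms = sum(frame_times_ms)
    # Average frame rate: frames rendered divided by elapsed seconds.
    avg_fps = 1000.0 * len(frame_times_ms) / total_ms
    # 99th percentile frame time, expressed as a frame rate.
    p99_fps = 1000.0 / percentile(frame_times_ms, 99)
    # Assumed "time under" definition: share of the run spent on frames
    # that took longer than the threshold frame time.
    limit_ms = 1000.0 / fps_threshold
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    return {
        "avg_fps": avg_fps,
        "p99_fps": p99_fps,
        "time_under_pct": 100.0 * slow_ms / total_ms,
    }


def summarize_runs(runs, fps_threshold=60.0):
    """Average each metric across several runs (four in this review)."""
    per_run = [summarize_run(run, fps_threshold) for run in runs]
    return {key: mean([r[key] for r in per_run]) for key in per_run[0]}
```

Calling `summarize_runs([run1, run2, run3, run4])` with four lists of frame times would return one averaged value per metric. Averaging per-run summaries, rather than pooling all frames into one list, keeps a single unusually long run from dominating the result, though either choice is defensible.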

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

222 Comments

xchaotic - Monday, October 9, 2017 - link

Well yeah, but even with non-HT i5 and i3, you still have plenty of cores to work with.

Even if the OS (or a background task - say Windows Defender?) takes up a thread, you still have other cores for your game engine.

nierd - Monday, October 9, 2017 - link

Do we? I've yet to see a good benchmark that measures task switching and multiple workloads - they measure 'program a' that is bad at using cores - and 'program b' that is good at using cores.

In today's reality - few people are going to need maximum single program performance. Outside of very specific types of workloads (render farming or complex simulations for science) please show me the person that is just focused on a single program. I want to see side by side how these chips square off when you have multiple competing workloads that force the scheduler to balance tasks and do multiple context shifting etc. We used to see benchmarks back in the day (single core days) where they'd do things like run a program designed to completely trash the predictive cache so we'd see 'worst case' performance, and things that would stress a cpu. Now we run a benchmark suite that shows you how fast handbrake runs *if it's the only thing you run*.

mapesdhs - Tuesday, October 10, 2017 - link

I wonder if there's pressure never to test systems in that kind of real-world manner, perhaps the results would not be pretty. Not so much a damnation of the CPU, rather a reflection of the OS. :D Windows has never been that good at this sort of thing.

boeush - Monday, October 9, 2017 - link

An *intelligent* OS thread scheduler would group low-demand/low-priority threads together, to multitask on one or two cores, while placing high-priority and high-CPU-utilization threads on respective dedicated cores. This would maximize performance and avoid trashing the cache, where and when it actually matters.

If Windows 10 makes consistent single-thread performance hard to obtain, then the testing is revealing a fundamental problem (really, a BUG) with the OS' scheduler - not a flaw in benchmarking methodology...

samer1970 - Monday, October 9, 2017 - link

I fail to understand how you guys review a CPU meant for overclocking and only put non OC results in your tables?

If I wanted the i7 8700K without overclocking I would pick up the i7 8700 and save $200 for both cooling and cheaper motherboard. And the i7 8700 can turbo all 6 cores to 4.3GHz just like the i7 8700K

someonesomewherelse - Saturday, October 14, 2017 - link

Classic Intel, can't they make a chipset/socket with extra power pins so it would last for at least a few cpu generations?

Gastec - Saturday, October 14, 2017 - link

I'm getting lost in all these CPU releases this year, it feels like there is a new CPU coming out every 2 months. Don't get me wrong, I like to have many choices but this is pathetic really. Someone is really desperate for more money.

zodiacfml - Sunday, October 15, 2017 - link

The i3!

lordken - Saturday, October 28, 2017 - link

can't you make bars for amd cpus red in graphs? It's crap to search for them if all lines are black (at least 7700k was highlighted in some)

a bit disappointed, not a single word of ryzen/amd on summary page, you compare only to intel cpus? how come?

why only 1400 in civ AI test and not any R7/5 CPUs?

Also I would expect you hammer down intel a bit more on that not-so-same socket crap.

Ritska - Friday, November 3, 2017 - link

Why is 6800k faster than 7700k and 8700k in gaming? Is it worth buying if I can get one for $300?