Memory Scaling on Ryzen 7 with Team Group's Night Hawk RGB

by Ian Cutress & Gavin Bonshor on September 27, 2017 11:05 AM EST

Gaming Performance

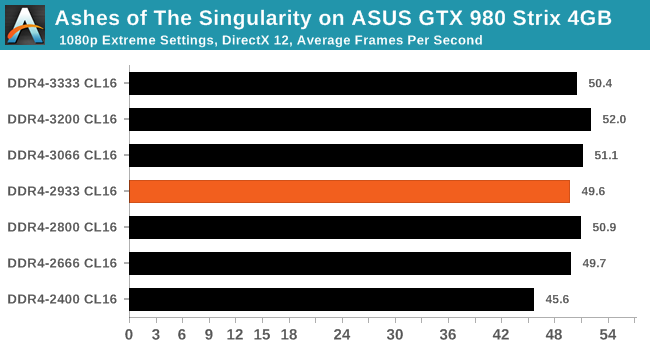

Ashes of the Singularity (DX12)

Seen as the holy child of DX12, Ashes of the Singularity (AoTS, or just Ashes) was the first title to actively explore as many DX12 features as it possibly could. Stardock and Oxide Games, the developers behind the game and the Nitrous engine that powers it, have ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

Performance in Ashes across our different memory settings was varied at best. The DDR4-2400 result is clearly the lowest, at around 45-46 FPS, while everything else rounds to 50 FPS or above. Depending on the configuration, this could be an 8-10% difference in frame rates simply by not selecting the slowest memory.
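As a rough check on that percentage, the uplift can be computed directly from the rounded figures quoted in the text. This is a minimal sketch: the function name `percent_uplift` is our own, and the 45, 46, and 50 FPS inputs are the approximate values mentioned above, not exact chart readings.

```python
def percent_uplift(baseline_fps: float, improved_fps: float) -> float:
    """Return the relative frame-rate gain over the baseline, in percent."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return (improved_fps - baseline_fps) / baseline_fps * 100.0


# Approximate figures from the text: DDR4-2400 lands near 45-46 FPS,
# while the other settings round to 50 FPS (taken here as a lower bound).
for baseline in (45.0, 46.0):
    gain = percent_uplift(baseline, 50.0)
    print(f"{baseline:.0f} FPS -> 50 FPS: {gain:.1f}% faster")
```

With these rounded inputs the gain works out to roughly 9-11%, in the same ballpark as the range quoted in the text; settings that score above 50 FPS would push it higher.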

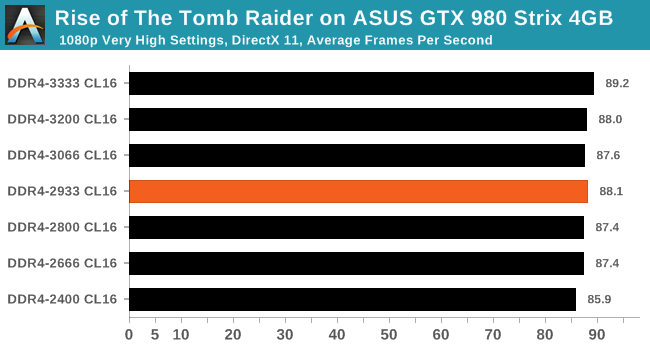

Rise Of The Tomb Raider (DX12)

One of the newest games in the gaming benchmark suite is Rise of the Tomb Raider (RoTR), developed by Crystal Dynamics, and the sequel to the popular Tomb Raider which was loved for its automated benchmark mode. But don’t let that fool you: the benchmark mode in RoTR is very much different this time around.

Visually, the previous Tomb Raider pushed realism to the limits with features such as TressFX, and the new RoTR goes one stage further when it comes to graphics fidelity. This leads to an interesting set of requirements in hardware: some sections of the game are typically GPU limited, whereas others with a lot of long-range physics can be CPU limited, depending on how the driver can translate the DirectX 12 workload.

We encountered insignificant performance differences in RoTR on the GTX 980. The 3.3 FPS increase in average frame rate from the slowest to the fastest memory does not exactly justify the price difference between DDR4-2400 and DDR4-3333 when using a GTX 980, not in this particular game at least.
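To put the size of the memory-speed step next to that small frame-rate gain, the theoretical peak bandwidth of each setting can be estimated from its transfer rate. This is a sketch under assumptions not stated in the text: a 64-bit (8-byte) DDR4 channel and a dual-channel configuration, as is usual on Ryzen 7 desktop platforms. The function name `peak_bandwidth_gbs` is hypothetical, and real-world throughput sits below these peak figures.

```python
def peak_bandwidth_gbs(transfer_rate_mts: float, channels: int = 2, bus_bytes: int = 8) -> float:
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes).

    transfer_rate_mts: data rate in mega-transfers per second (2400 for DDR4-2400).
    channels:          number of memory channels (assumed 2 here).
    bus_bytes:         bytes moved per transfer per channel (8 for a 64-bit channel).
    """
    if transfer_rate_mts <= 0 or channels <= 0 or bus_bytes <= 0:
        raise ValueError("all arguments must be positive")
    return transfer_rate_mts * 1e6 * bus_bytes * channels / 1e9


slow = peak_bandwidth_gbs(2400)  # DDR4-2400, assumed dual channel
fast = peak_bandwidth_gbs(3333)  # DDR4-3333, assumed dual channel
extra = (fast / slow - 1.0) * 100.0
print(f"DDR4-2400: {slow:.1f} GB/s, DDR4-3333: {fast:.1f} GB/s, {extra:.1f}% more peak bandwidth")
```

Under these assumptions the faster setting offers close to 39% more theoretical bandwidth, which makes a 3.3 FPS average gain look small by comparison.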

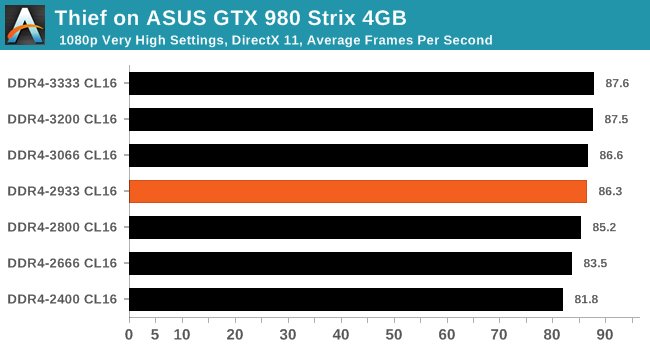

Thief

Thief has been a long-standing title in the hearts of PC gamers since the introduction of the very first iteration back in 1998 (Thief: The Dark Project). Thief is the latest reboot in the long-standing series and renowned publisher Square Enix took over the task from where Eidos Interactive left off back in 2004. The game itself uses the UE3 engine and is known for optimised and improved destructible environments, large crowd simulation and soft body dynamics.

For Thief, there are some small gains to be had from moving from DDR4-2400 to DDR4-2933, around 5% or so; after this, however, performance levels out.

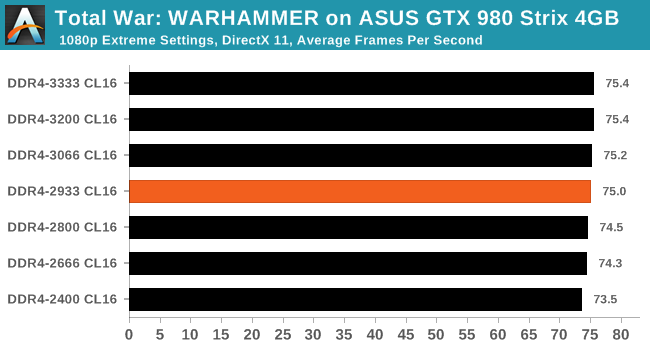

Total War: WARHAMMER

Not only is the Total War franchise one of the most popular real-time tactical strategy series of all time, but Sega has delved into multiple worlds such as the Roman Empire, the Napoleonic era, and even Attila the Hun. More recently the franchise has tackled the popular WARHAMMER series. The developers, Creative Assembly, have integrated DX12 into their latest RTS battle title, which aims to take advantage of the benefits that DX12 can provide. The game itself can come across as very CPU intensive, and is capable of pushing any top-end system to its limits.

Even though Total War: WARHAMMER is a very CPU-focused benchmark, memory had barely any effect on the results.

65 Comments

Arbie - Wednesday, September 27, 2017 - link

While you're at it, reinstall the spellchecker on his PC. Looks like the DRAM testing broke it.

lagittaja - Friday, September 29, 2017 - link

Ian, .vodka is right about this, you should take a closer look at the sub-timings. Maybe get in touch with The Stilt?

.vodka - Sunday, October 1, 2017 - link

As luck would have it, someone did an excellent piece on what this article tried to explore. https://www.youtube.com/watch?v=S6yp7Pi39Z8

Here's a proper look at how timings and memory speed improve Ryzen performance, on a 3.9GHz 1700x vs a 5GHz 7700k.

He's using a Vega (much faster than a GTX 980), comparing 2666C16 auto timings, The Stilt's timings for 3200C14 and 3466C14, and 3600C16 with auto subtimings. All B-die.

Screenshots of the results in five games: https://imgur.com/a/EapgO

Unsurprisingly, auto subtimings are a disaster with 2666C16 and 3600C16 performing mostly the same in these five games (what you've found in your article), and Ryzen's true performance is hidden in tight subtimings that you have to manually configure and test for. The results are more than worth it.

Please, have a look in this direction. Get a Crosshair VI Hero and some proper high speed B-die memory capable of those timing sets. Make a follow up article...

Hopefully future Ryzen iterations will not be as reliant on fast memory to perform like that.

Zeed - Thursday, September 28, 2017 - link

OO You were faster. Maybe they should test RYZEN memory kits like this one ?? https://www.overclockers.co.uk/team-group-dark-pro...

Or maybe Gskill Ryzen kit ??

peevee - Wednesday, September 27, 2017 - link

"he DDR4-2600 value can certainly be characterized as the lowest number near to 45-46% FPS"

Nonsense alert.

Ian Cutress - Wednesday, September 27, 2017 - link

My mistake, edited the sentence one way, then changed my mind and went another route and forgot to remove the %. Updated.

Ken_g6 - Wednesday, September 27, 2017 - link

And shouldn't that have been DDR4-2400?

Jacerie - Wednesday, September 27, 2017 - link

Why would you only use a Nvidia gfx card in the test bed if the Infinity Fabric is designed to integrate with AMD GPUs as well. Looks like you need to go back to the bench and run these tests again with AMD gfx to get the true results.

Dr. Swag - Wednesday, September 27, 2017 - link

Memory clock speed doesn't affect the IF clock speed on AMD GPUs

ZeDestructor - Wednesday, September 27, 2017 - link

GPUs don't talk to the CPU using IF, only PCIe. Well, on consumer desktop anyways - presumably AMD has some crazy IF-IF under testing internally to compete against NVLink, CAPI, OmniPath and InfiniBand