An AMD Threadripper X399 Motherboard Overview: A Quick Look at Seven Products

by Ian Cutress & Joe Shields on September 15, 2017 9:00 AM EST

Added 9/19: GIGABYTE X399 Designare-EX

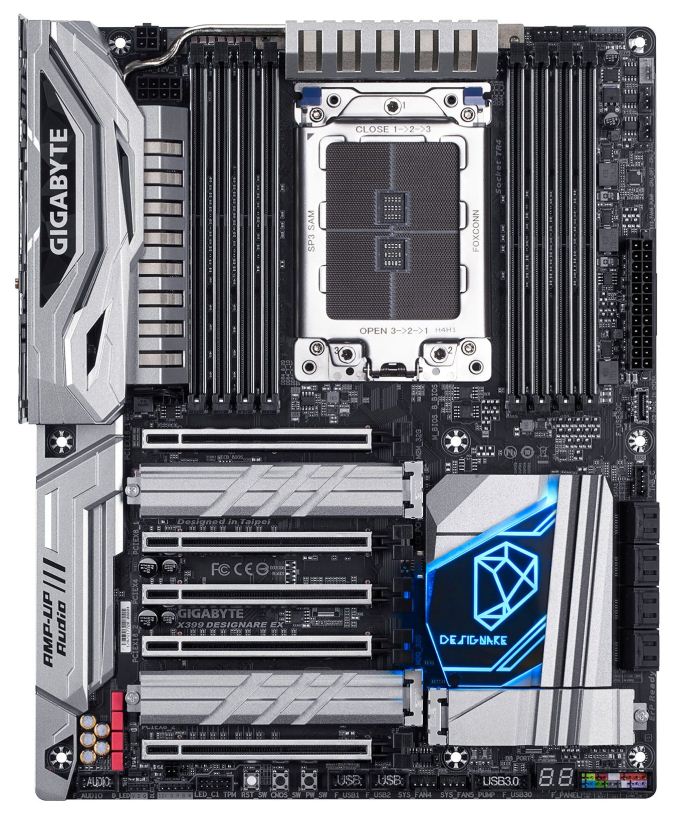

One of the first things to notice on this board is the lack of RGB LEDs compared with the AORUS Gaming 7. Where the Gaming 7 has RGB LEDs just about everywhere, the Designare EX has only a few under the PCH heatsink. Aside from that, the design aesthetics are remarkably similar, with only the color scheme changed from black heatsinks and shrouds (with the AORUS name) to all silver with the GIGABYTE name on the shrouds instead. The PCH heatsink is the same shape with a different accent plate for the Designare, marking a nod to GIGABYTE's target market for this product: design professionals. Also included is an integrated I/O shield, giving it a more high-end feel.

Outside of what has been listed above, the specifications for the Designare are very similar to the Gaming 7, as it uses the same base PCB. Keeping with the platform trend, the Designare EX supports quad channel memory at two DIMMs per channel, for eight slots in total supporting up to 128GB. What looks like an 8-phase VRM uses the same style of main heatsink, connected via a heat pipe to a secondary heatsink located behind the rear I/O. Being the same PCB, the power delivery is also listed as ‘server class’ like the Gaming 7, using fourth generation International Rectifier (IR) PWM controllers and third generation PowIRstage chokes. EPS power is found in its normal location in the top left corner of the board, with one 8-pin and one 4-pin.
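
As a rough illustration of the memory figures above, the short sketch below works out the slot count and the per-DIMM capacity implied by the 128GB ceiling, plus a theoretical peak bandwidth. The 64-bit (8-byte) channel width is the standard DDR4 figure rather than something stated for this board, the DDR4-3600 speed is taken from the spec table, and the helper name `peak_bandwidth_gbs` is hypothetical.

```python
# Illustrative arithmetic only; the per-DIMM capacity is derived from the
# quoted 128GB maximum, not taken from a GIGABYTE specification sheet.
CHANNELS = 4             # quad channel memory
DIMMS_PER_CHANNEL = 2    # two DIMMs per channel
MAX_CAPACITY_GB = 128    # quoted maximum capacity

dimm_slots = CHANNELS * DIMMS_PER_CHANNEL
gb_per_dimm = MAX_CAPACITY_GB // dimm_slots


def peak_bandwidth_gbs(transfer_rate_mts, channels=CHANNELS, bus_bytes=8):
    """Theoretical peak in GB/s: MT/s x bytes per transfer x channels.

    Assumes a 64-bit (8-byte) data bus per DDR4 channel.
    """
    return transfer_rate_mts * bus_bytes * channels / 1000


print(dimm_slots, gb_per_dimm)    # slot count and GB per DIMM
print(peak_bandwidth_gbs(3600))   # theoretical peak at DDR4-3600
```

This is an upper bound on paper; sustained bandwidth in practice will be lower and depends on the memory kit and how the channels are populated.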

In the top right corner of the board are five 4-pin fan headers along with an RGBW header for LEDs. Two other RGB headers are found across the bottom of the board, including another RGBW header. USB connectivity uses an onboard USB 3.1 (10Gbps) header from the chipset close to the eight SATA ports. There is a USB 3.0 header on the bottom of the board, two USB 2.0 headers near the power buttons, and a TPM header at the bottom of the board.

Like the AORUS Gaming 7, the Designare EX supports three M.2 drives. The two locations between the PCIe slots support drives up to 110mm long, while the third, below the PCH heatsink, can fit drives up to 80mm. All locations come with additional heatsinks to keep the drives underneath cool. The Designare EX uses the three M.2 slots instead of a separate U.2 connector. For other storage, GIGABYTE has equipped the board with eight SATA ports. The 5-pin Thunderbolt 3 header, required for add-in Thunderbolt 3 cards and unique among X399 boards to this GIGABYTE model, is located just above the SATA ports. We are asking GIGABYTE if they plan to bundle a Thunderbolt 3 add-in card with this model, and are awaiting a response.

The rear of the motherboard, like some other designs on the market, uses a backplate to assist with rigidity. The thinking here is that these motherboards are often used in systems with multiple heavy graphics cards or PCIe coprocessors, such that if a motherboard screw is incorrectly tightened, the motherboard might be required to take the load and eventually warp. With the backplate in place, that potential extra torque is distributed throughout the PCB, minimising any negative effects.

The PCIe slots are also the same as on the Gaming 7, with four of the five sourcing their lanes directly from the CPU. The slots used for GPUs are double-spaced and support an x16/x8/x16/x8 arrangement. The middle slot supports a PCIe 3.0 x4 connection fed from the chipset and can be used for additional add-in cards, such as a Thunderbolt 3 card.
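
To see how the x16/x8/x16/x8 arrangement fits into the CPU's lane budget, here is a minimal sketch of the arithmetic. It rests on assumptions not stated in this section: that Threadripper exposes 64 PCIe 3.0 lanes with 4 reserved for the chipset link, and that all three M.2 slots take their x4 links from the CPU. The function name `lanes_remaining` is hypothetical.

```python
# Assumptions (not from the article): the CPU exposes 64 PCIe 3.0 lanes,
# 4 of them are reserved for the link to the X399 chipset, and all three
# M.2 slots are wired to the CPU at x4 each.
CPU_LANES_TOTAL = 64
CHIPSET_LINK_LANES = 4

gpu_slots = [16, 8, 16, 8]   # x16/x8/x16/x8 arrangement from the text
m2_slots = [4, 4, 4]         # three M.2 slots at x4 each


def lanes_remaining(total, reserved, *consumers):
    """Return the CPU lanes left after the reserved link and all consumers."""
    used = sum(sum(group) for group in consumers)
    return total - reserved - used


usable = CPU_LANES_TOTAL - CHIPSET_LINK_LANES
left = lanes_remaining(CPU_LANES_TOTAL, CHIPSET_LINK_LANES, gpu_slots, m2_slots)
print(usable, sum(gpu_slots), sum(m2_slots), left)
```

Under those assumptions the four graphics slots and three M.2 slots would account for the whole usable budget, which is consistent with the fifth slot being fed from the chipset instead.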

Next to the PCIe slots is GIGABYTE’s audio solution, built around a Realtek ALC1220 codec with an EMI shield, PCB separation for the digital and analog audio signals, filter caps (both WIMA and Nichicon), and headphone jack detection. GIGABYTE also uses DAC-UP, which delivers a more consistent USB power supply for USB connected audio devices.

Rear IO connectivity on the Designare EX is also like the AORUS Gaming 7. The only difference is the additional Ethernet port, as this model uses dual Intel NICs. Because of the USB 3.0 support from the CPU, the rear IO has eight USB ports, in yellow, blue, and white. There are also two USB 3.1 (10 Gbps) ports from the chipset, one of which is USB Type-C. Network connectivity differs here, with the Designare EX using two Intel NICs (we imagine some mixture of I219V or I211AT) and doing away with the Rivet Networks Killer E2500 found on its little brother. Last is a set of audio jacks including SPDIF.

Pricing was not listed; however, if it is slated to be the flagship of the X399 lineup, pricing is expected to be higher than the already released X399 AORUS Gaming 7 at $389.99 on Amazon. GIGABYTE says the Designare EX will be available in mid-October.

| GIGABYTE X399 Designare EX | |
| --- | --- |
| Warranty Period | 3 Years |
| Product Page | N/A |
| Price | TBD |
| Size | ATX |
| CPU Interface | TR4 |
| Chipset | AMD X399 |
| Memory Slots (DDR4) | Eight DDR4; Supporting 128GB; Quad Channel; Support DDR4 3600+; Support for ECC UDIMM (in non-ECC mode) |
| Network Connectivity | 2 x Intel LAN; 1 x Intel 2x2 802.11ac |
| Onboard Audio | Realtek ALC1220 |
| PCIe Slots for Graphics (from CPU) | 2 x PCIe 3.0 x16 slots @ x16; 2 x PCIe 3.0 x16 slots @ x8 |
| PCIe Slots for Other (from Chipset) | 1 x PCIe 2.0 x16 slots @ x4 |
| Onboard SATA | 8 x Supporting RAID 0/1/10 |
| Onboard SATA Express | None |
| Onboard M.2 | 3 x PCIe 3.0 x4 - NVMe or SATA |
| Onboard U.2 | None |
| USB 3.1 | 1 x Type-C (ASMedia); 1 x Type-A (ASMedia) |
| USB 3.0 | 8 x Back Panel; 1 x Header |
| USB 2.0 | 2 x Headers |
| Power Connectors | 1 x 24-pin EATX; 1 x 8-pin ATX 12V; 1 x 4-pin ATX 12V |
| Fan Headers | 1 x CPU; 1 x Watercooling CPU; 4 x System Fan headers; 2 x System Fan/Water Pump headers |
| IO Panel | 1 x PS/2 keyboard/mouse port; 1 x USB 3.1 Type-C; 1 x USB 3.1 Type-A; 8 x USB 3.0; 2 x RJ-45 LAN Port; 1 x Optical S/PDIF out; 5 x Audio Jacks; Antenna connectors |

Additional News (9/20):

After speaking with GIGABYTE, it seems that Thunderbolt 3 support will perhaps still be in limbo:

Thunderbolt 3 certification requires a few things from the CPU side like graphical output which we haven't been able to do. We expect this will be developed upon through Raven Ridge and possibly get more groundwork down to activate TB3 on the X399 Designare EX.

The header will remain, though TB3 use / full TB3 enablement will be at a later date. It seems like GIGABYTE has taken note that users are interested in TB3 on AMD.

99 Comments


ddriver - Monday, September 18, 2017 - link

You should look up into it. A gas turbine is not a jet engine. It is actually more efficient, and it doesn't utilize jet propulsion. Gas turbines power certain tanks, ships and helicopters. They are also used in power plants.

thomasg - Sunday, September 17, 2017 - link

Honestly, could you just stop bringing your stupid gas turbine rant, since you don't really seem to grasp what efficiency is, and not even what power a typical engine has.

Gas turbines are very efficient in use at or around their designed typical load. They are not efficient under medium and low load scenarios, where they will drop below modern gasoline combustion engines.

Those come with 200 kW - in high-powered sports cars, or top-of-the-line luxury limousines.

A "entry level car" will be at max. 75 kW peak power; and guess what: most of the time they are used far below the maximum output.

Modern gasoline car engines typically reach 45% efficiency, which they achieve in their typical load scenarios, at less than 50% of their design load.

Modern gas turbines can reach up to 60% efficiency, which is great - but this is usage at their design load. At half load, the efficiency will drop below 30%. The majority of miles driven with cars are at below half load.

What we expect from car engines is efficiency at their usage, while having enough reserves for quick acceleration. Gas turbines cannot do this efficiently, and gas turbines are notoriously laggy in variable load.

However, they can be used effectively in fully-hybrid cars, where peak-load is achieved by battery-backed electric motors.

But since these engines are so expensive to produce, it is simply more cost-effective to use fully-electric cars for this.

ddriver - Monday, September 18, 2017 - link

Of course that a gas turbine consumer vehicle will also utilize a battery buffer. You basically charge it while stationary, drive on battery until power runs out, which is when the turbine is activated to supply power and charge. An all-electric drive will significantly simplify the design, the transmission, and will ensure maximum torque on any level of power.

You are way off, only F1 engines approach 50% of efficiency, but they only last like a few races, which is the cost of that efficiency. Totally impractical for consumer vehicles. The typical operational efficiency of consumer vehicles is as low as 20%. And they are also intrinsically limited in terms of torque delivery, which happens in a specific and rather narrow RPM range.

So, transitioning to gas turbine engines will not have a 100% but a 200% increase in efficiency. I guess little minds simply cannot appreciate then significance of that. Not to mention it will defacto force wide hybrid vehicle adoption, and a very overhead compared to internal combustion engines, as a gas turbine with the same power delivery will weight 1/4 of that, and will deliver 3 times as much energy from the same amount of fuel.

Gas turbine engines are also actually easier to make and maintain, they have far less moving parts. You seem to be confusing a regular gas turbine engine with the ultra-efficient one, which requires expenssive and time staking 5 axis machining of the components. Those exceed 70% efficiency, but if you aim for 60%, the manufacturing is much cheaper, easier and faster. Overall much more cost efficient.

Which is exactly why they are not being adopted. It will result in massive loss of revenue - cheaper engines, that need less of the expensive maintenance, less parts, and burn much less fuel. The priority of the industry is profit, and gas turbine vehicles will result in a massive dip in that aspect. Internal combustion engines are pathetic in terms of efficiency, but are very profit-effective.

ddriver - Monday, September 18, 2017 - link

"and a very LOW overhead compared to internal combustion engines"

vgray35@hotmail.com - Sunday, September 17, 2017 - link

Get a glue ddriver. This is not about power saved whose numbers are minuscule compared to the power o/p of an automobile engine - IT IS ALL ABOUT TEMPERATURE OF THE INTEGRATED CHIPS WHICH ARE LIMITED TO NOT MUCH MORE THAN 100 deg C, and the difficulty of providing sufficient air flow or liquid cooling necessary to remove that heat. Failure to remove the heat dramatically reduces life of the chips by as much as 60% - 70% life reduction. The problem is greatly curtailed by not using circuity designs that would generate ever larger amounts of waste heat. Please stick to subject matter of this posting, which is about reducing CPU and GPU and VRM operating temperatures, without using huge heat sinks and liquid cooling radiators. How does one reduce these temperatures? The first step is to eliminate >85% of the heat in the ATX PSU, motherboard VRMs, and GPU VRMs, to reduce total heat load on the cooling system. And that technology is already available as mentioned above. Please cease with the 100 kW rhetoric which is meaningless in context of this temperature problem (yes little k for kilo not capitol K). Let's talk about the excess temperature issue. Get it!

Thread Ripper is a HEDT platform and thus deserves a HEDT VRM solution, and not the same old worn out technologies that use air-gapped ferrite cored inductors, when resonance scaling permits increased resonant capacitance in exchange for much smaller resonant inductance using cheaper air-cored inductors. And to boot, a dramatic reduction is both size and cost. AMD should lead this charge and bring forth an appropriate reference VRM, using PWM-resonant switching and resonance scaling of the Cr/Lr resonant components. ARE YOU LISTENING AMD - LETS RETIRE THE BUCK CONVERTER ACROSS THE BOARD.

ddriver - Monday, September 18, 2017 - link

Getting glue. Now what?

Here is a clue - remove the stock heatsink, install better cooling. Takes like 5 minutes. Heat problem solved. Crude, but it delivers result.

The industry standards are so low, there is barely a product, regardless of its price range, that someone with basic engineering cannot tangibly improve in a few easy steps.

An example, I recently got a yoga 720 2in1. Opened it up, removed the cooling, put good TIM, reinstalled cooling, now I have a 5 minute 5$ improvement that gave me a 10% boost in performance, temperature and battery life. They are just lazy, and don't go even for the most obvious, easiest to implement improvements.

They DONT WANT IT TO RUN COOL. They deliberately engineer it to run at its limit, so close that often they actually mess it up. So that this device can fail, so you can get a new one. It is a time bomb, planned obsolescence, and you can bet your ass they would have done the same regardless of the power delivery circuit involved. It may actually be a far more delicate and harder to address time bomb than hot running VRMs. Which you can easily cool down by ordering a custom heatpipe solution, which will set you back like 50$. That's a rather quick and affordable way to solve your problem, compared to complaining about it in this cesspool of mediocrity ;)

vgray35@hotmail.com - Monday, September 18, 2017 - link

Sorry ddriver, but I disagree with all your perspectives on this matter. You are clearly not capable of addressing the technical issues of Power Supply design for efficiency, and cannot get to grips with electronic circuity (or do not want to) and how different designs compare. You appear only interested in hijacking the original subject matter for your own purposes. You never contributed a single element addressing the original purpose of this thread, and so you have lost your credibility as a serious participant in my book, and hence you and I done.

glennst43 - Friday, September 15, 2017 - link

Based on my experience with the Asus Zenith Extreme, you can expect a bumpy ride which should not be surprising with a new product. My last 3 systems were Intel X58, X79, and X99 boards purchased shortly after thier releases, and this platform (x399) has had the most issues. I expect that in a few months after some BIOS and driver updates, the experiecne will get much better. I suspect that the validation process is not as thorough as the Intel boards.

Here are a few issues that I have experienced as an early adopter:

System would not boot with 2 video cards (resolved with BIOS update)

The 10G Network card would randomly disconnect (resolved? with driver update)

System sometimes will not come back from sleep and requires a hard reset (no resolution yet)

USB devices disconnecting/reconnecting randomly (no resolution yet)

johnnycanadian - Friday, September 15, 2017 - link

I'm crossing my fingers I made the correct choice with MSI's x399 offering. I too have been burned by the ASUS early-adopter-penalty and although Gigabyte has been good to me in the past, the MSI offered everything I needed and then some (although I'm firmly in the "get rid of the tacky LED" camp). Everything is getting stuffed into a Cooler Master HAF XB II EVO (with no glass but with the mesh top panel). Even if it's not perfect it can't be worse than running Windows on Boot Camp with a "trash can" (aptly named) Mac "Pro".

arter97 - Friday, September 15, 2017 - link

ASUS PRIME X399-A : "In the ROG board this lead to a 40mm fan, which is not present here on the Prime."

This is wrong. I own one and the fan is present under the shroud.

You can even see it from the side shot of the motherboard.