Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

Intel Expanding the Chipset: 10 Gigabit Ethernet and QuickAssist Technology

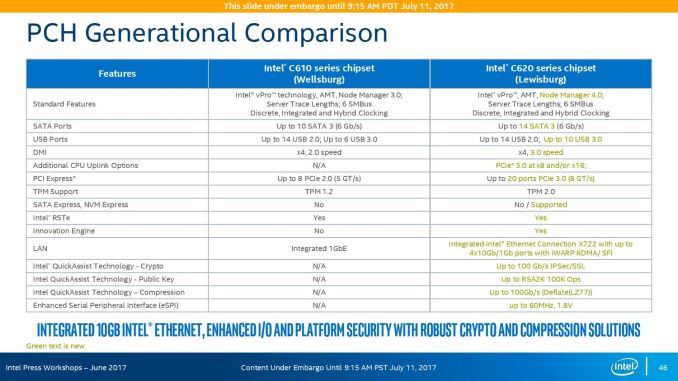

Intel refreshes its enterprise chipsets on an ultra-long cadence. In recent memory, Intel’s platform launches have been designed to support two generations of processors, and in that time there is typically no chipset update, leaving the platform controller hub’s functionality semi-static for about three years. Compare this to the consumer side, where new chipsets are launched with every new CPU generation, with bigger jumps coming every couple of years. With today’s launch, Intel is pushing the enterprise chipset in a new direction.

Previously, the point of the chipset was to provide some basic IO support in the form of SATA/SAS ports, some USB ports, and a few PCIe lanes for simple controllers like USB 3.0 or Gigabit Ethernet, or perhaps an x4 PCIe slot for a non-accelerator type card. The new chipsets, part of the C620 family codenamed Lewisburg, are designed to assist with networking and cryptography, and to act more like a PCIe switch with support for up to 20 PCIe 3.0 lanes.

The headline features that matter most are the upgrade of the DMI connection to the chipset from DMI 2.0 to DMI 3.0 to match the consumer platforms, the 20 PCIe 3.0 lanes from the chipset, and the new CPU Uplink feature.

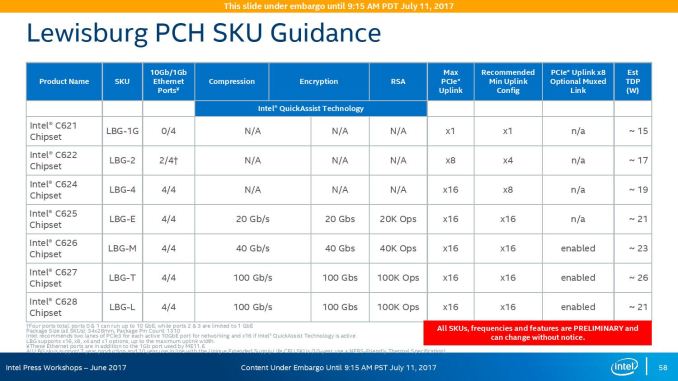
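To put the DMI upgrade in numbers, here is a rough back-of-the-envelope sketch. It assumes DMI 2.0 and DMI 3.0 behave like four-lane links using PCIe 2.0 and PCIe 3.0 signalling respectively (5 GT/s with 8b/10b encoding versus 8 GT/s with 128b/130b encoding). The article does not give these figures; they are assumptions of this example, and the helper name `link_gbps` is purely illustrative.

```python
def link_gbps(gt_per_s: float, payload_bits: int, coded_bits: int, lanes: int) -> float:
    """Bandwidth in Gb/s per direction after line encoding.

    Ignores packet and protocol overhead, so real throughput is lower.
    """
    return gt_per_s * payload_bits / coded_bits * lanes


# Assumption: DMI 2.0 ~ PCIe 2.0 x4 (5 GT/s per lane, 8b/10b encoding)
DMI2_GBPS = link_gbps(5.0, 8, 10, 4)

# Assumption: DMI 3.0 ~ PCIe 3.0 x4 (8 GT/s per lane, 128b/130b encoding)
DMI3_GBPS = link_gbps(8.0, 128, 130, 4)

for name, gbps in (("DMI 2.0", DMI2_GBPS), ("DMI 3.0", DMI3_GBPS)):
    # Divide by 8 to convert gigabits to gigabytes
    print(f"{name}: {gbps:.1f} Gb/s ({gbps / 8:.2f} GB/s)")

print(f"Speed-up: {DMI3_GBPS / DMI2_GBPS:.2f}x")
```

Under these assumptions the chipset link roughly doubles, from about 2 GB/s to a little under 4 GB/s in each direction. That is still shared by everything hanging off the chipset's own PCIe lanes.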

For the new generation of Lewisburg chipsets, if an OEM requires that a platform has access to a cryptography engine or 10 Gigabit Ethernet, then they can attach 8 or 16 lanes from the processor into the chipset via this CPU Uplink port. Depending on which model of chipset is being used, this can provide up to four 10 GbE ports with iWARP RDMA, or up to 100 Gb/s of IPSec/SSL QuickAssist support.
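As a rough sanity check on why the uplink comes in 8-lane and 16-lane flavors, the sketch below compares raw PCIe 3.0 link bandwidth against the traffic the chipset features could generate. It reads the QuickAssist figure as 100 gigabits per second and treats Ethernet and QuickAssist traffic as simply additive. Both are assumptions of this example, as are the helper names, and protocol overhead is ignored.

```python
# PCIe 3.0: 8 GT/s per lane with 128b/130b encoding, per direction
PCIE3_GBPS_PER_LANE = 8.0 * 128 / 130


def uplink_gbps(lanes: int) -> float:
    """Raw uplink bandwidth in Gb/s for a PCIe 3.0 link of the given width."""
    return lanes * PCIE3_GBPS_PER_LANE


def fits(lanes: int, ten_gbe_ports: int, qat_gbps: float) -> bool:
    """True if the worst-case demand fits in the uplink.

    Assumption: Ethernet and QuickAssist traffic add up, which is pessimistic
    if the crypto is applied in place to the network traffic.
    """
    demand = ten_gbe_ports * 10.0 + qat_gbps
    return demand <= uplink_gbps(lanes)


for lanes in (8, 16):
    print(f"x{lanes} uplink: {uplink_gbps(lanes):.0f} Gb/s")

print("4x 10GbE on x8:          ", fits(8, 4, 0.0))
print("100 Gb/s QAT on x8:      ", fits(8, 0, 100.0))
print("100 Gb/s QAT on x16:     ", fits(16, 0, 100.0))
print("4x 10GbE + QAT on x16:   ", fits(16, 4, 100.0))
```

Under these assumptions an x8 uplink (about 63 Gb/s) comfortably carries four 10 GbE ports, while the top QuickAssist rate needs the x16 uplink (about 126 Gb/s). The fully additive worst case of 140 Gb/s would not fit even in x16.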

Intel will offer seven different versions of the chipset, varying in 10G and QAT support, but also varying in TDP:

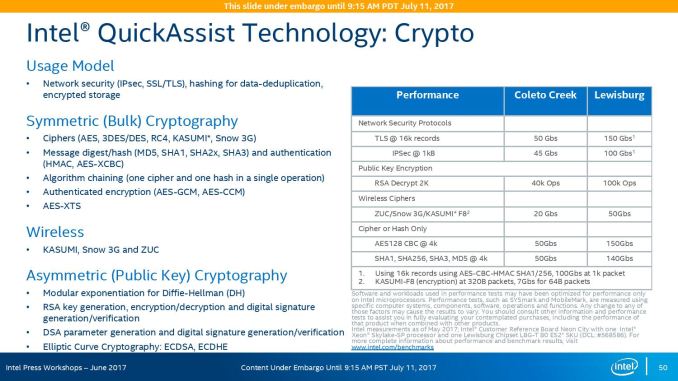

On the cryptography side, Intel has previously sold add-in PCIe cards for QuickAssist, but is now moving it onto the platform directly. With QuickAssist in the chipset, the work can be paired with the Ethernet traffic and done in-situ. Intel specifically points to bulk cryptography (150 Gb/s AES256/SHA256), public key encryption (100k ops of RSA2048) and compression (100+ Gb/s deflate).

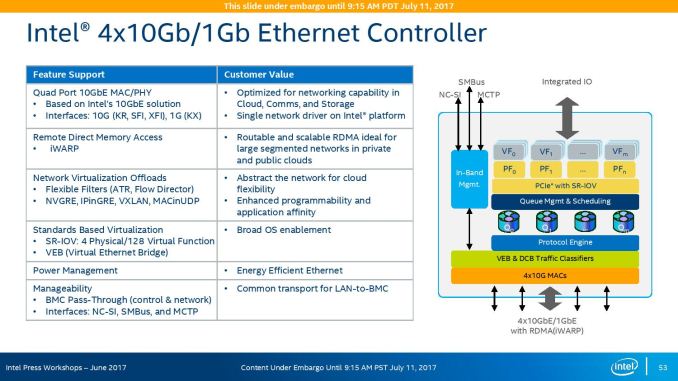

On the 10 GbE side, Intel has designed this to be paired with the X722 PHY, and it supports network virtualization, traffic shaping, and Intel’s Data Plane Development Kit for advanced packet forwarding.

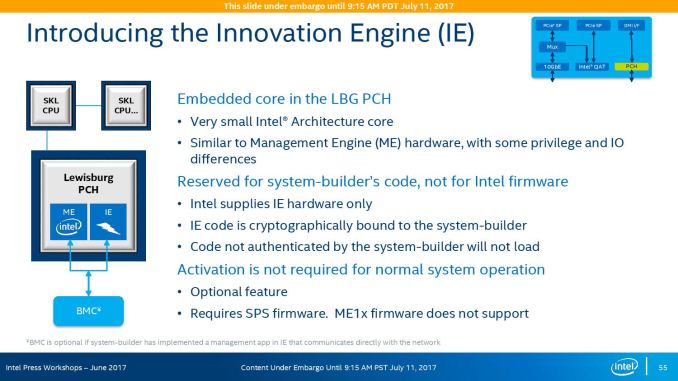

The chipset will also include a new feature called Intel’s Innovation Engine, which adds a small embedded core to the PCH that mirrors Intel’s Management Engine but is designed for system-builders and integrators. This allows specialist firmware to manage some of the capabilities of the system on top of Intel’s ME, and is essentially an Intel Quark x86 core with 1.4MB SRAM.

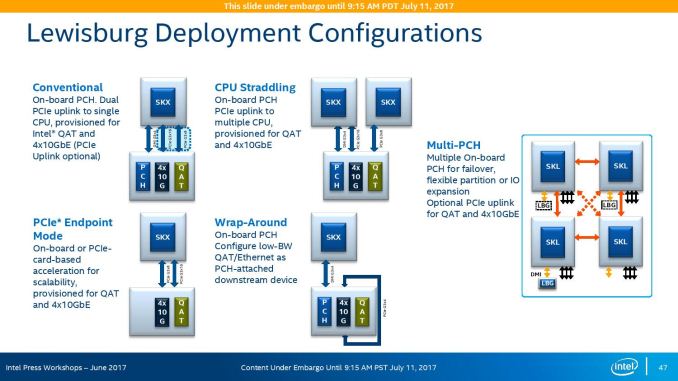

The chipsets are also designed to be supported between different CPUs within the same multi-processor system, or for a system to support multiple chipsets at once as needed.

219 Comments

CajunArson - Tuesday, July 11, 2017 - link

Would a high-end server that was built in 2014 necessarily update? Maybe not.

Should a high-end server with a brand new microarchitecture use the most recent version of the software if it has any expectation of seeing a real benefit? Absolutely.

If this was a GPU review and Anandtech used 2 year old drivers on a new GPU (assuming they even worked at all) we wouldn't even be having this conversation.

BrokenCrayons - Tuesday, July 11, 2017 - link

Home users playing video games are in a different environment than you find in a business datacenter. There's a lot less money to be lost when a driver update causes a performance regression or eliminates a feature. Conversely, needlessly updating software in the aforementioned datacenter can result in the loss of many millions if something goes wrong.

wallysb01 - Tuesday, July 11, 2017 - link

Conversely, having stuff working, but unnecessarily slowly, costs money as well. It's a balance, and if you're spending hundreds of thousands or even millions on a cluster/data center/what have you, you'd probably want to spend at least a little bit of time optimizing it, right?

Icehawk - Tuesday, July 11, 2017 - link

Most of the businesses I have worked for, ranging from 10 people to 50k, use severely outdated software and the barest minimum of patching. Optimization? HA!

For example I work for a manufacturer & retailer currently, our POS system was last patched in 2012 by the vendor and has been replaced by at least two versions newer. We have XP machines in each of our stores as that is the only OS that can run the software.

The above is very typical. The 50k company I worked for had software so old and deeply entrenched that modernizing it is virtually impossible. My current company is working on getting to a new product... that was new in 2012 and has also been replaced with a newer version. Whee!

Icehawk - Tuesday, July 11, 2017 - link

One other thing - maybe the big shops actually do test/size but none of the places I have worked at and have been involved in do any testing, benchmarking, etc. They just buy whatever their preferred vendor gives them that meets the budget and they *think* will work. My coworker is in charge (lol) of selecting servers for a new office... he has no clue what anything in this article is. He has never read a single review, overview, or test of a processor. I could keep going on like this :(

0ldman79 - Wednesday, July 12, 2017 - link

Icehawk's comments are so accurate it is scary.

I can't tell you how many businesses running custom *nix software running in a VM on a Windows server.

They're not all about speed. Reliability is the single most important factor, speed is somewhere down the line. The people that make those decisions and the people that drink coffee while they're waiting on the machines are very different.

Neither understand that it could all be done so much better and almost all of them are utterly terrified at the concept of speeding up the process if it means *any* changes are made.

JohanAnandtech - Friday, July 21, 2017 - link

We did test with NAMD 2.12 (Dec 2016).

sutamatamasu - Tuesday, July 11, 2017 - link

Glad, AMD make back again to this segment, now we can only see what can Raja to do for server market with Radeon instinct.

Kaotika - Tuesday, July 11, 2017 - link

So this confirms that the previous information regarding Skylake-X core configurations was wrong, and 12-core variant is in fact using HCC-core instead of LCC-core?

Ian Cutress - Tuesday, July 11, 2017 - link

We corrected that in our Skylake-X review.