Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

AMD's EPYC Server CPU

If you have read Ian's articles about Zen and EPYC in detail, you can skip this page. For those of you who need a refresher, let us quickly review what AMD is offering.

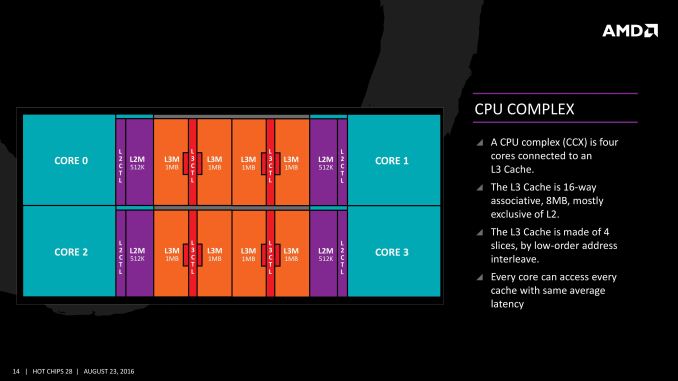

The basic building block of EPYC and Ryzen is the CPU Complex (CCX), which consists of 4 vastly improved "Zen" cores connected to an L3-cache. In a full configuration each core technically has its own 2 MB slice of L3, but access to the other 6 MB is nearly as fast. Within a CCX we measured 13 ns to access the first 2 MB, and 15 to 19 ns for the remaining 6 MB of the 8 MB L3-cache, a difference that's hardly noticeable in the grand scheme of things. The L3-cache acts as a mostly exclusive victim cache.
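To put the "hardly noticeable" claim into numbers, here is a minimal sketch that averages the latencies quoted above over the whole 8 MB L3. It assumes accesses are spread evenly across the cache, which is our simplification and not something we measured; the variable names are illustrative only.

```python
# Latencies quoted in the text (ns); the uniform-access weighting is an assumption.
LOCAL_SLICE_MB = 2        # the core's "own" part of the L3
REMOTE_SLICES_MB = 6      # the rest of the 8 MB L3 inside the same CCX
LOCAL_NS = 13.0
REMOTE_NS_LOW, REMOTE_NS_HIGH = 15.0, 19.0


def average_l3_latency(remote_ns: float) -> float:
    """Capacity-weighted mean latency, assuming evenly spread accesses."""
    total_mb = LOCAL_SLICE_MB + REMOTE_SLICES_MB
    return (LOCAL_SLICE_MB * LOCAL_NS + REMOTE_SLICES_MB * remote_ns) / total_mb


best_case_ns = average_l3_latency(REMOTE_NS_LOW)
worst_case_ns = average_l3_latency(REMOTE_NS_HIGH)
print(f"average L3 latency: {best_case_ns} to {worst_case_ns} ns")
```

Under that assumption the average lands only a few nanoseconds above the 13 ns local figure.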

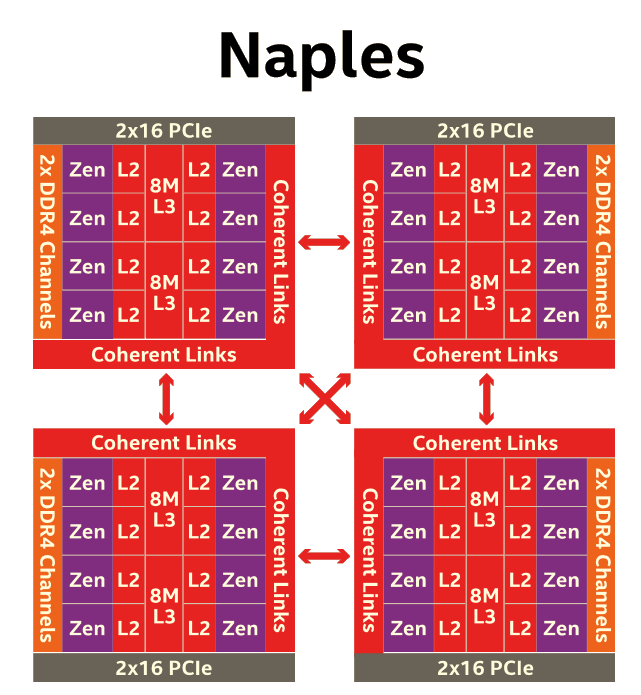

Two CCXes make up one Zeppelin die. A custom fabric, AMD's Infinity Fabric, ties together the two CCXes, the two 8 MB L3-caches, two DDR4 channels, and the integrated PCIe lanes. That topology is not without drawbacks though: it means that there are two separate 8 MB L3 caches instead of one single 16 MB LLC. This has all kinds of consequences. For example, the prefetchers of each core make sure that data of the L3 is brought into the L1 when it is needed. Meanwhile each CCX has its own separate (not inside the L3, so no capacity hit) and dedicated SRAM snoop directory (keeping track of 7 possible states). In other words, the local L3-cache communicates very quickly with everything inside the same CCX, but every data exchange between two CCXes comes with a tangible latency penalty.

Moving further up the chain, the complete EPYC chip is a Multi Chip Module (MCM) containing 4 Zeppelin dies.

AMD made sure that each die is only one hop away from each of the others, ensuring that the off-die latency is as low as reasonably possible.
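The building blocks described so far add up as follows. This is a minimal sketch using only the counts quoted above; the class and field names are ours and purely illustrative, and the die-to-die link count is simply what "one hop between every pair of dies" implies for four dies.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class EpycPackage:
    """Per-socket building blocks, using the counts quoted in the text."""
    cores_per_ccx: int = 4
    l3_mb_per_ccx: int = 8
    ccx_per_die: int = 2
    ddr4_channels_per_die: int = 2
    dies_per_package: int = 4

    @property
    def cores(self) -> int:
        return self.cores_per_ccx * self.ccx_per_die * self.dies_per_package

    @property
    def l3_mb(self) -> int:
        # Total capacity only: it is split into separate 8 MB caches, one per CCX.
        return self.l3_mb_per_ccx * self.ccx_per_die * self.dies_per_package

    @property
    def ddr4_channels(self) -> int:
        return self.ddr4_channels_per_die * self.dies_per_package

    @property
    def die_to_die_links(self) -> int:
        # One hop between every pair of dies implies a fully connected graph.
        return len(list(combinations(range(self.dies_per_package), 2)))


pkg = EpycPackage()
print(pkg.cores, pkg.l3_mb, pkg.ddr4_channels, pkg.die_to_die_links)
```

Keep in mind that the L3 total is a sum of eight independent 8 MB caches, not one shared pool.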

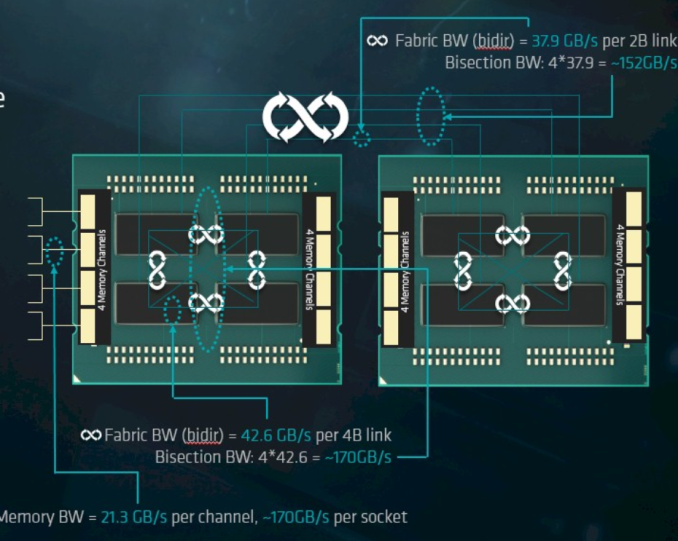

Meanwhile scaling things up to their logical conclusion, we have 2P configurations. A dual socket EPYC setup is in fact a "virtual octal socket" NUMA system.

AMD gave this "virtual octal socket" topology ample bandwidth to communicate. The two physical sockets are connected by four bidirectional interconnects, each consisting of 16 PCIe lanes. Each of these interconnect links operates at roughly 38 GB/s (or 19 GB/s in each direction).
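As a quick back-of-the-envelope check, the per-link figures above can be summed into a socket-to-socket total. This is a sketch of the arithmetic only: it treats the roughly 38 GB/s figure as exact and assumes all four links can be driven at once, so real-world throughput will be lower.

```python
# Figures quoted in the text; treating them as exact peak numbers is an assumption.
SOCKET_LINKS = 4
LANES_PER_LINK = 16
LINK_GBS_BIDIRECTIONAL = 38.0

link_gbs_per_direction = LINK_GBS_BIDIRECTIONAL / 2
total_gbs_bidirectional = SOCKET_LINKS * LINK_GBS_BIDIRECTIONAL
total_gbs_per_direction = SOCKET_LINKS * link_gbs_per_direction
total_lanes = SOCKET_LINKS * LANES_PER_LINK

print(f"{total_lanes} lanes between the sockets")
print(f"{total_gbs_per_direction} GB/s per direction, "
      f"{total_gbs_bidirectional} GB/s in both directions combined")
```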

So basically, AMD's topology is ideal for applications with many independently working threads such as small VMs, HPC applications, and so on. It is less suited for applications that require a lot of data synchronization such as transactional databases. In the latter case, the extra latency of exchanging data between dies and even between CCXes is going to have an impact relative to a traditional monolithic design.
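To make the locality tiers concrete, the sketch below classifies how "far apart" two cores are in a dual socket system. The linear core numbering is a hypothetical scheme chosen for illustration; real operating systems may enumerate cores differently, so treat `locate` and `sharing_level` as illustrative helpers, not as a description of any actual API.

```python
from typing import NamedTuple

# Counts from the text; the linear core numbering below is a hypothetical scheme.
CORES_PER_CCX = 4
CCX_PER_DIE = 2
DIES_PER_SOCKET = 4
SOCKETS = 2
TOTAL_CORES = CORES_PER_CCX * CCX_PER_DIE * DIES_PER_SOCKET * SOCKETS
NUMA_NODES = DIES_PER_SOCKET * SOCKETS  # one node per die: the "virtual octal socket"


class Location(NamedTuple):
    socket: int
    die: int
    ccx: int


def locate(core_id: int) -> Location:
    """Map a linear core id to (socket, die within socket, CCX within die)."""
    if not 0 <= core_id < TOTAL_CORES:
        raise ValueError(f"core id {core_id} out of range")
    ccx_global = core_id // CORES_PER_CCX
    die_global = ccx_global // CCX_PER_DIE
    return Location(
        socket=die_global // DIES_PER_SOCKET,
        die=die_global % DIES_PER_SOCKET,
        ccx=ccx_global % CCX_PER_DIE,
    )


def sharing_level(core_a: int, core_b: int) -> str:
    """Return the closest level of the hierarchy that two cores share."""
    a, b = locate(core_a), locate(core_b)
    if a.socket != b.socket:
        return "remote socket"
    if a.die != b.die:
        return "same socket, different die"
    if a.ccx != b.ccx:
        return "same die, different CCX"
    return "same CCX"


for pair in [(0, 3), (0, 4), (0, 8), (0, 32)]:
    print(pair, sharing_level(*pair))
```

Threads that synchronize heavily do best when pinned so that they share a CCX; every step down this list adds latency to each exchange of data.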

219 Comments


CajunArson - Tuesday, July 11, 2017 - link

Would a high-end server that was built in 2014 necessarily update? Maybe not.

Should a high-end server with a brand new microarchitecture use the most recent version of the software if it has any expectation of seeing a real benefit? Absolutely.

If this was a GPU review and Anandtech used 2 year old drivers on a new GPU (assuming they even worked at all) we wouldn't even be having this conversation.

BrokenCrayons - Tuesday, July 11, 2017 - link

Home users playing video games are in a different environment than you find in a business datacenter. There's a lot less money to be lost when a driver update causes a performance regression or eliminates a feature. Conversely, needlessly updating software in the aforementioned datacenter can result in the loss of many millions if something goes wrong.

wallysb01 - Tuesday, July 11, 2017 - link

Conversely, having stuff working, but unnecessarily slowly, costs money as well. It's a balance, and if you're spending hundreds of thousands or even millions on a cluster/data center/what have you, you'd probably want to spend at least a little bit of time optimizing it, right?

Icehawk - Tuesday, July 11, 2017 - link

Most of the businesses I have worked for, ranging from 10 people to 50k, use severely outdated software and the barest minimum of patching. Optimization? HA!

For example I work for a manufacturer & retailer currently, our POS system was last patched in 2012 by the vendor and has been replaced by at least two versions newer. We have XP machines in each of our stores as that is the only OS that can run the software.

The above is very typical. The 50k company I worked for had software so old and deeply entrenched that modernizing it is virtually impossible. My current company is working on getting to a new product... that was new in 2012 and has also been replaced with a newer version. Whee!

Icehawk - Tuesday, July 11, 2017 - link

One other thing - maybe the big shops actually do test/size but none of the places I have worked at and have been involved in do any testing, benchmarking, etc. They just buy whatever their preferred vendor gives them that meets the budget and they *think* will work. My coworker is in charge (lol) of selecting servers for a new office... he has no clue what anything in this article is. He has never read a single review, overview, or test of a processor. I could keep going on like this :(

0ldman79 - Wednesday, July 12, 2017 - link

Icehawk's comments are so accurate it is scary.

I can't tell you how many businesses are running custom *nix software in a VM on a Windows server.

They're not all about speed. Reliability is the single most important factor, speed is somewhere down the line. The people that make those decisions and the people that drink coffee while they're waiting on the machines are very different.

Neither understand that it could all be done so much better and almost all of them are utterly terrified at the concept of speeding up the process if it means *any* changes are made.

JohanAnandtech - Friday, July 21, 2017 - link

We did test with NAMD 2.12 (Dec 2016).

sutamatamasu - Tuesday, July 11, 2017 - link

Glad AMD is making it back into this segment. Now we can only wait and see what Raja can do for the server market with Radeon Instinct.

Kaotika - Tuesday, July 11, 2017 - link

So this confirms that the previous information regarding Skylake-X core configurations was wrong, and 12-core variant is in fact using HCC-core instead of LCC-core?

Ian Cutress - Tuesday, July 11, 2017 - link

We corrected that in our Skylake-X review.