Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

Testing Notes

For the EPYC launch, AMD sent us their best SKU: the EPYC 7601. Meanwhile, Intel gave us a choice between the top-bin Xeon 8180 and the Xeon 8176. Considering that the latter has a 165-173W TDP, similar to AMD's best EPYC, we felt that the Xeon 8176 was the best choice.

Unfortunately, our time testing the two platforms has been limited. In particular, we only received AMD's EPYC system last week, and the company did not put an embargo on the results. This means that we can release the data now, in time to compare it to the new Skylake-SP Xeons. However, it also means that we've only had a handful of days to work with the platform before writing all of this up for today's embargo. We're confident in the data, but we haven't had a chance to tease out the nuances of EPYC quite yet; that will have to wait for a future article.

Meanwhile, we should note that we've had to retire the bulk of our historical benchmark data, as we upgraded both our compiler and OS (see below). Due to this, we only had a very limited amount of time to run additional systems, and for that reason we've opted to include Intel's Xeon E5-2690. The Sandy Bridge-EP processor is about 5 years old, and for customers who aren't upgrading their servers every single generation, it's these servers that we believe are most likely to get upgraded in this round. So for server managers looking at finally buying into new hardware, you can get an idea of how much return on investment you would get.

Benchmark Configuration and Methodology

All of our testing was conducted on Ubuntu Server "Xenial" 16.04.2 LTS (Linux kernel 4.4.0, 64-bit). The compiler that ships with this distribution is GCC 5.4.0.
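Because the OS and compiler versions determine whether results are comparable, it can help to record them alongside every benchmark run. The following is a minimal sketch of how that might be done, not the tooling used for this article; the function name `describe_environment` is hypothetical, and it relies only on the Python standard library.

```python
import platform
import shutil
import subprocess


def describe_environment(compiler="gcc"):
    """Return a dict with the kernel release, CPU architecture and compiler banner.

    Hypothetical helper for illustration; "compiler" is None when the
    requested compiler cannot be found on PATH.
    """
    info = {
        "kernel": platform.release(),    # e.g. the running Linux kernel release
        "machine": platform.machine(),   # e.g. x86_64
        "compiler": None,
    }
    path = shutil.which(compiler)
    if path is not None:
        # Ask the compiler for its version banner and keep the first line only.
        result = subprocess.run(
            [path, "--version"],
            stdout=subprocess.PIPE,
            stderr=subprocess.STDOUT,
            universal_newlines=True,
        )
        lines = result.stdout.splitlines()
        info["compiler"] = lines[0] if lines else None
    return info


if __name__ == "__main__":
    for key, value in describe_environment().items():
        print("{}: {}".format(key, value))
```

Storing this dictionary next to each result file makes it easy to spot, later on, which numbers were produced before and after a compiler or kernel upgrade.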

You will notice that the DRAM capacity varies among our server configurations. The reason is that we had little time left before today's launch embargo. Removing any hardware is always a risk, so we decided to run our tests without significantly changing the internal hardware of the systems we received from AMD and Intel (SSDs were still replaced). As far as we know, all of our tests fit in 128 GB, so DRAM capacity should not have much influence on performance. But it will have an impact on total energy consumption, which we will discuss.
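The capacity argument can be checked with simple arithmetic. The sketch below uses the DIMM counts and sizes listed in the configuration tables of this section, plus the 128 GB working-set figure mentioned above; the dictionary labels and function names are our own and purely illustrative.

```python
# Working-set bound quoted in the text: all tests are believed to fit in 128 GB.
WORKING_SET_GB = 128

# (number of DIMMs, size per DIMM in GB), taken from the configuration tables.
SERVERS = {
    "Xeon Platinum 8176 (Purley)": (12, 32),
    "EPYC 7601": (16, 32),
    "Xeon E5 (S2600WT)": (16, 16),
    "Xeon E5-2690 (HP G8)": (16, 32),
}


def total_capacity_gb(dimms, size_gb):
    """Total installed DRAM in GB for a given DIMM population."""
    return dimms * size_gb


def capacities(servers):
    """Map each server label to its total DRAM capacity in GB."""
    return {name: total_capacity_gb(*cfg) for name, cfg in servers.items()}


def all_fit(servers, working_set_gb):
    """True when every server has at least working_set_gb of DRAM."""
    return all(cap >= working_set_gb for cap in capacities(servers).values())


if __name__ == "__main__":
    for name, cap in capacities(SERVERS).items():
        print("{}: {} GB".format(name, cap))
    print("All configurations hold the working set:", all_fit(SERVERS, WORKING_SET_GB))
```

Even the smallest configuration has twice the stated working set, which is why capacity differences are expected to show up in power draw rather than in performance.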

Last but not least, we want to note how the performance graphs have been color-coded. Orange is AMD's EPYC, dark blue is Intel's best (Skylake-SP), and light blue is the previous-generation Xeons (Xeon E5-v4). Gray has been used for the soon-to-be-replaced Xeon v1.

Intel's Xeon "Purley" Server – S2P2SY3Q (2U Chassis)

| Component | Specification |
| --- | --- |
| CPU | Two Intel Xeon Platinum 8176 (2.1 GHz, 28c, 38.5MB L3, 165W) |
| RAM | 384 GB (12x32 GB) Hynix DDR4-2666 |
| Internal Disks | SAMSUNG MZ7LM240 (bootdisk); Intel SSD3710 800 GB (data) |
| Motherboard | Intel S2600WF (Wolf Pass baseboard) |
| Chipset | Intel Wellsburg B0 |
| BIOS version | 9/02/2017 |
| PSU | 1100W PSU (80+ Platinum) |

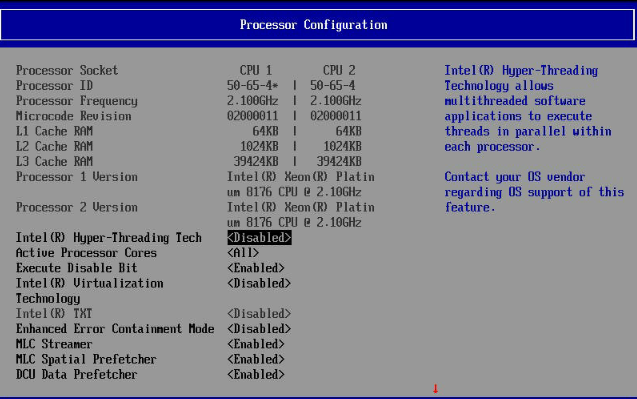

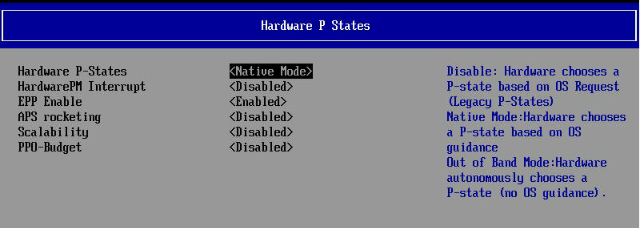

The typical BIOS settings can be seen below; we enabled Hyper-Threading and Intel virtualization.

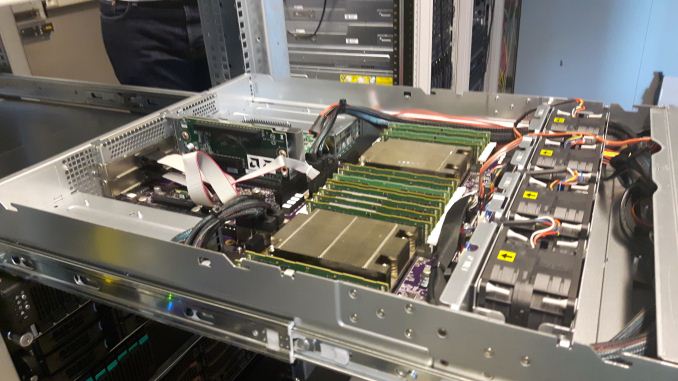

AMD EPYC 7601 – (2U Chassis)

Five years after our "Piledriver review", a new AMD server arrives in the Sizing Servers Lab.

| Component | Specification |
| --- | --- |
| CPU | Two EPYC 7601 (2.2 GHz, 32c, 8x8MB L3, 180W) |
| RAM | 512 GB (16x32 GB) Samsung DDR4-2666 @2400 |
| Internal Disks | SAMSUNG MZ7LM240 (bootdisk); Intel SSD3710 800 GB (data) |
| Motherboard | AMD Speedway |
| BIOS version | To check. |
| PSU | 1100W PSU (80+ Platinum) |

Intel's Xeon E5 Server – S2600WT (2U Chassis)

| Component | Specification |
| --- | --- |
| CPU | Two Intel Xeon processor E5-2699v4 (2.2 GHz, 22c, 55MB L3, 145W); Two Intel Xeon processor E5-2690v3 (2.3 GHz, 14c, 35MB L3, 120W) |
| RAM | 256 GB (16x16GB) Kingston DDR4-2400 |
| Internal Disks | SAMSUNG MZ7LM240 (bootdisk); Intel SSD3700 800 GB (data) |
| Motherboard | Intel Server Board Wildcat Pass |
| BIOS version | 1/28/2016 |
| PSU | Delta Electronics 750W DPS-750XB A (80+ Platinum) |

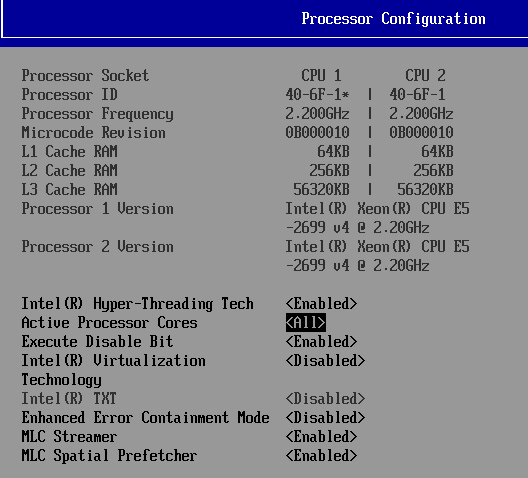

The typical BIOS settings can be seen below.

HP-G8 (2U Chassis) - Xeon E5-2690

| Component | Specification |
| --- | --- |
| CPU | Two Intel Xeon processor E5-2690 (2.9GHz, 8c, 20MB L3, 135W) |
| RAM | 512 GB (16x32GB) Samsung DDR3 LRDIMM 1866 MHz @ 1333 MHz |
| Internal Disks | SAMSUNG MZ7LM240 (bootdisk); Intel SSD3700 800 GB (data) |
| Motherboard | HP G8 |
| BIOS version | 9/23/2016 |
| PSU | HP 750W (Gold) |

Other Notes

Both servers are fed by a standard European 230V (16 Amps max.) power line. The room temperature is monitored and kept at 23°C by our Airwell CRACs.
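As a rough sanity check on the power feed described above, the capacity of a 230 V, 16 A line can be compared against the PSU nameplate ratings from the configuration tables. This is only a sketch under stated assumptions: it ignores power factor, nameplate ratings are an upper bound rather than measured draw, and counting one PSU per server is our assumption, not something the article states.

```python
LINE_VOLTAGE_V = 230       # European line voltage quoted in the text
LINE_MAX_CURRENT_A = 16    # maximum current quoted in the text

# PSU nameplate ratings in watts, from the configuration tables.
# Assumption: one PSU counted per server.
PSU_RATINGS_W = {
    "Xeon Platinum 8176 (Purley)": 1100,
    "EPYC 7601": 1100,
    "Xeon E5 (S2600WT)": 750,
    "Xeon E5-2690 (HP G8)": 750,
}


def line_capacity_w(voltage_v, current_a):
    """Upper bound on power a line can deliver, ignoring power factor."""
    return voltage_v * current_a


def headroom_w(capacity_w, loads_w):
    """Capacity left after subtracting the given loads (all in watts)."""
    return capacity_w - sum(loads_w)


if __name__ == "__main__":
    capacity = line_capacity_w(LINE_VOLTAGE_V, LINE_MAX_CURRENT_A)
    new_servers = [
        PSU_RATINGS_W["Xeon Platinum 8176 (Purley)"],
        PSU_RATINGS_W["EPYC 7601"],
    ]
    print("Line capacity: {} W".format(capacity))
    print("Headroom with both new servers at nameplate: {} W".format(
        headroom_w(capacity, new_servers)))
```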

219 Comments

CajunArson - Tuesday, July 11, 2017 - link

Would a high-end server that was built in 2014 necessarily update? Maybe not.

Should a high-end server with a brand new microarchitecture use the most recent version of the software if it has any expectation of seeing a real benefit? Absolutely.

If this was a GPU review and Anandtech used 2 year old drivers on a new GPU (assuming they even worked at all) we wouldn't even be having this conversation.

BrokenCrayons - Tuesday, July 11, 2017 - link

Home users playing video games are in a different environment than you find in a business datacenter. There's a lot less money to be lost when a driver update causes a performance regression or eliminates a feature. Conversely, needlessly updating software in the aforementioned datacenter can result in the loss of many millions if something goes wrong.

wallysb01 - Tuesday, July 11, 2017 - link

Conversely, having stuff working, but unnecessarily slowly, costs money as well. It's a balance, and if you're spending hundreds of thousands or even millions on a cluster/data center/what have you, you'd probably want to spend at least a little bit of time optimizing it, right?

Icehawk - Tuesday, July 11, 2017 - link

Most of the businesses I have worked for, ranging from 10 people to 50k, use severely outdated software and the barest minimum of patching. Optimization? HA!

For example I work for a manufacturer & retailer currently, our POS system was last patched in 2012 by the vendor and has been replaced by at least two versions newer. We have XP machines in each of our stores as that is the only OS that can run the software.

The above is very typical. The 50k company I worked for had software so old and deeply entrenched that modernizing it is virtually impossible. My current company is working on getting to a new product... that was new in 2012 and has also been replaced with a newer version. Whee!

Icehawk - Tuesday, July 11, 2017 - link

One other thing - maybe the big shops actually do test/size but none of the places I have worked at and have been involved in do any testing, benchmarking, etc. They just buy whatever their preferred vendor gives them that meets the budget and they *think* will work. My coworker is in charge (lol) of selecting servers for a new office... he has no clue what anything in this article is. He has never read a single review, overview, or test of a processor. I could keep going on like this :(

0ldman79 - Wednesday, July 12, 2017 - link

Icehawk's comments are so accurate it is scary.

I can't tell you how many businesses are running custom *nix software in a VM on a Windows server.

They're not all about speed. Reliability is the single most important factor, speed is somewhere down the line. The people that make those decisions and the people that drink coffee while they're waiting on the machines are very different.

Neither understand that it could all be done so much better and almost all of them are utterly terrified at the concept of speeding up the process if it means *any* changes are made.

JohanAnandtech - Friday, July 21, 2017 - link

We did test with NAMD 2.12 (Dec 2016).

sutamatamasu - Tuesday, July 11, 2017 - link

Glad AMD is back in this segment. Now we can only wait and see what Raja can do for the server market with Radeon Instinct.

Kaotika - Tuesday, July 11, 2017 - link

So this confirms that the previous information regarding Skylake-X core configurations was wrong, and the 12-core variant is in fact using HCC-core instead of LCC-core?

Ian Cutress - Tuesday, July 11, 2017 - link

We corrected that in our Skylake-X review.