Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST- Posted in

- CPUs

- AMD

- Intel

- Xeon

- Enterprise

- Skylake

- Zen

- Naples

- Skylake-SP

- EPYC

Memory Subsystem: Bandwidth

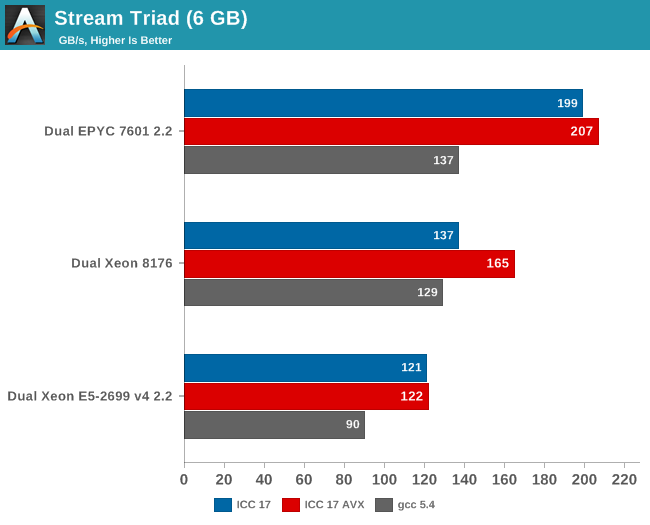

Measuring the full bandwidth potential with John McCalpin's Stream bandwidth benchmark is getting increasingly difficult on the latest CPUs, as core and memory channel counts have continued to grow. We compiled the stream 5.10 source code with the Intel compiler (icc) for linux version 17, or GCC 5.4, both 64-bit. The following compiler switches were used on icc:

icc -fast -qopenmp -parallel (-AVX) -DSTREAM_ARRAY_SIZE=800000000

Notice that we had to increase the array significantly, to a data size of around 6 GB. We compiled one version with AVX and one without.

The results are expressed in gigabytes per second.

Meanwhile the following compiler switches were used on gcc:

-Ofast -fopenmp -static -DSTREAM_ARRAY_SIZE=800000000

Notice that the DDR4 DRAM in the EPYC system ran at 2400 GT/s (8 channels), while the Intel system ran its DRAM at 2666 GT/s (6 channels). So the dual socket AMD system should theoretically get 307 GB per second (2.4 GT/s* 8 bytes per channel x 8 channels x 2 sockets). The Intel system has access to 256 GB per second (2.66 GT/s* 8 bytes per channel x 6 channels x 2 sockets).

AMD told me they do not fully trust the results from the binaries compiled with ICC (and who can blame them?). Their own fully customized stream binary achieved 250 GB/s. Intel claims 199 GB/s for an AVX-512 optimized binary (Xeon E5-2699 v4: 128 GB/s with DDR-2400). Those kind of bandwidth numbers are only available to specially tuned AVX HPC binaries.

Our numbers are much more realistic, and show that given enough threads, the 8 channels of DDR4 give the AMD EPYC server a 25% to 45% bandwidth advantage. This is less relevant in most server applications, but a nice bonus in many sparse matrix HPC applications.

Maximum bandwidth is one thing, but that bandwidth must be available as soon as possible. To better understand the memory subsystem, we pinned the stream threads to different cores with numactl.

| Pinned Memory Bandwidth (in MB/sec) | |||

| Mem Hierarchy |

AMD "Naples" EPYC 7601 DDR4-2400 |

Intel "Skylake-SP" Xeon 8176 DDR4-2666 |

Intel "Broadwell-EP" Xeon E5-2699v4 DDR4-2400 |

| 1 Thread | 27490 | 12224 | 18555 |

| 2 Threads, same core same socket |

27663 | 14313 | 19043 |

| 2 Threads, different cores same socket |

29836 | 24462 | 37279 |

| 2 Threads, different socket | 54997 | 24387 | 37333 |

| 4 threads on the first 4 cores same socket |

29201 | 47986 | 53983 |

| 8 threads on the first 8 cores same socket |

32703 | 77884 | 61450 |

| 8 threads on different dies (core 0,4,8,12...) same socket |

98747 | 77880 | 61504 |

The new Skylake-SP offers mediocre bandwidth to a single thread: only 12 GB/s is available despite the use of fast DDR-4 2666. The Broadwell-EP delivers 50% more bandwidth with slower DDR4-2400. It is clear that Skylake-SP needs more threads to get the most of its available memory bandwidth.

Meanwhile a single thread on a Naples core can get 27,5 GB/s if necessary. This is very promissing, as this means that a single-threaded phase in an HPC application will get abundant bandwidth and run as fast as possible. But the total bandwidth that one whole quad core CCX can command is only 30 GB/s.

Overall, memory bandwidth on Intel's Skylake-SP Xeon behaves more linearly than on AMD's EPYC. All off the Xeon's cores have access to all the memory channels, so bandwidth more directly increases with the number of threads.

219 Comments

View All Comments

msroadkill612 - Wednesday, July 12, 2017 - link

It looks interesting. Do u have a point?Are you saying they have a place in this epyc debate? using cheaper ddr3 ram on epyc?

yuhong - Friday, July 14, 2017 - link

"We were told from Intel that ‘only 0.5% of the market actually uses those quad ranked and LR DRAMs’, "intelemployee2012 - Wednesday, July 12, 2017 - link

what kind of a forum and website is this? we can't delete the account, cannot edit a comment for fixing typos, cannot edit username, cannot contact an admin if we need to report something. Will never use these websites from now on.Ryan Smith - Wednesday, July 12, 2017 - link

"what kind of a forum and website is this?"The basic kind. It's not meant to be a replacement for forums, but rather a way to comment on the article. Deleting/editing comments is specifically not supported to prevent people from pulling Reddit-style shenanigans. The idea is that you post once, and you post something meaningful.

As for any other issues you may have, you are welcome to contact me directly.

Ranger1065 - Thursday, July 13, 2017 - link

That's a relief :)iwod - Wednesday, July 12, 2017 - link

I cant believe what i just read. While I knew Zen was good for Desktop, i expected the battle to be in Intel's flavour on the Server since Intel has years to tune and work on those workload. But instead, we have a much CHEAPER AMD CPU that perform Better / Same or Slightly worst in several cases, using much LOWER Energy during workload, while using a not as advance 14nm node compared to Intel!And NO words on stability problems from running these test on AMD. This is like Athlon 64 all over again!

pSupaNova - Wednesday, July 12, 2017 - link

Yes it is.But this time much worse for Intel with their manufacturing lead shrinking along with their workforce.

Shankar1962 - Wednesday, July 12, 2017 - link

Competition has spoiled the naming convention Intels 14 === competetions 7 or 10Intel publicly challenged everyone to revisit the metrics and no one responded

Can we discuss the yield density and scaling metrics? Intel used to maintain 2year lead now grew that to 3-4year lead

Because its vertically integrated company it looks like Intel vs rest of the world and yet their revenue profits grow year over year

iwod - Thursday, July 13, 2017 - link

Grew to 3 - 4 years? Intel is shipping 10nm early next year in some laptop segment, TSMC is shipping 7nm Apple SoC in 200M yearly unit quantity starting next September.If anything the gap from 2 - 3 years is now shrink to 1 to 1.5 year.

Shankar1962 - Thursday, July 13, 2017 - link

Yeah 1-1.5 years if we cheat the metrics when comparison2-3years if we look at metrics accurately

A process node shrink is compared by metrics like yield cost scaling density etc

7nm 10nm etc is just a name