The AMD Zen and Ryzen 7 Review: A Deep Dive on 1800X, 1700X and 1700

by Ian Cutress on March 2, 2017 9:00 AM EST

Benchmarking Performance: CPU Rendering Tests

Rendering tests are a long-time favorite of reviewers and benchmarkers, as the code used by rendering packages is usually highly optimized to squeeze every little bit of performance out. Sometimes rendering programs end up being heavily memory dependent as well - when you have that many threads flying about with a ton of data, having low latency memory can be key to everything. Here we take a few of the usual rendering packages under Windows 10, as well as a few new interesting benchmarks.

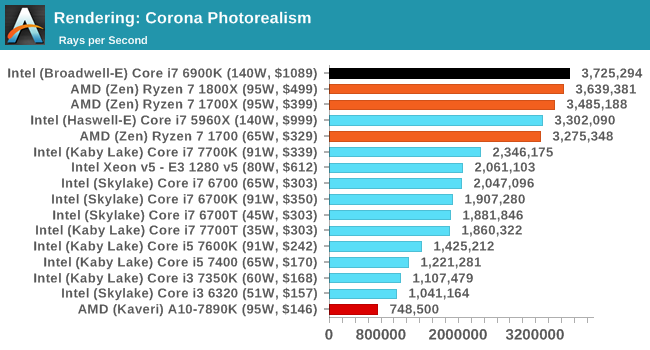

Corona 1.3

Corona is a standalone package designed to assist software like 3ds Max and Maya with photorealism via ray tracing. It's simple - shoot rays, get pixels. OK, it's more complicated than that, but the benchmark renders a fixed scene six times and offers results in terms of time and rays per second. The official benchmark tables list user-submitted results in terms of time, but I feel rays per second is a better metric (in general, scores where higher is better seem to be easier to explain anyway). Corona likes to pile on the threads, so the results end up being very staggered based on thread count.
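As a minimal sketch of how the two result formats relate, the snippet below converts a render time into rays per second for a fixed workload. The ray count and timings are made-up illustrative numbers, not Corona's actual figures or results from this review, and `rays_per_second` is a hypothetical helper rather than anything the benchmark exposes.

```python
def rays_per_second(total_rays: float, render_seconds: float) -> float:
    """Throughput for a fixed workload: higher is better."""
    if render_seconds <= 0:
        raise ValueError("render_seconds must be positive")
    return total_rays / render_seconds


# Hypothetical fixed scene: the ray count is an assumption for illustration.
TOTAL_RAYS = 4.8e8

# Two hypothetical machines rendering the same scene.
fast = rays_per_second(TOTAL_RAYS, 120.0)  # finishes in 120 seconds
slow = rays_per_second(TOTAL_RAYS, 240.0)  # finishes in 240 seconds

print(f"fast: {fast:,.0f} rays/s")
print(f"slow: {slow:,.0f} rays/s")
print(f"ratio: {fast / slow:.1f}x")
```

For a fixed scene, halving the time doubles the throughput, so both metrics rank systems the same way; the throughput form simply reads as "higher is better".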

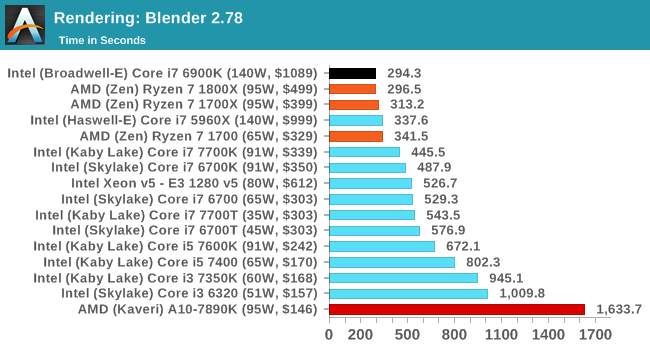

Blender 2.78

For a renderer that has been around for what seems like ages, Blender is still a highly popular tool. We managed to wrap up a standard workload into the February 5 nightly build of Blender and measure the time it takes to render the first frame of the scene. Because it is one of the bigger open source tools out there, both AMD and Intel work actively to help improve the codebase, for better or for worse on their own or each other's microarchitecture.

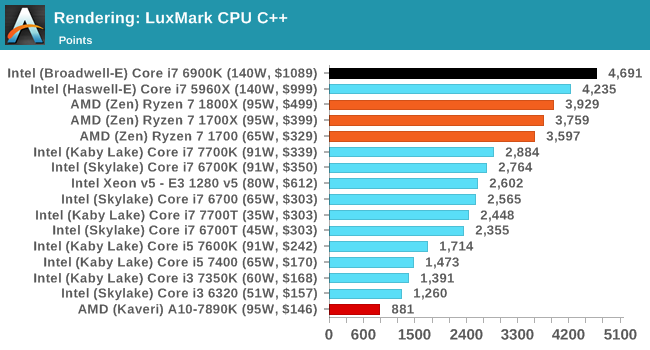

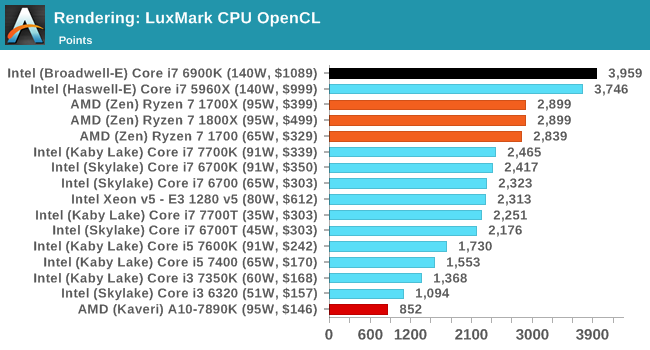

LuxMark

As a synthetic, LuxMark might come across as somewhat arbitrary as a renderer, given that it's mainly used to test GPUs, but it does offer both an OpenCL and a standard C++ mode. In this instance, aside from seeing the comparison in each coding mode for cores and IPC, we also get to see the difference in performance moving from a C++ based code-stack to an OpenCL one with a CPU as the main host.

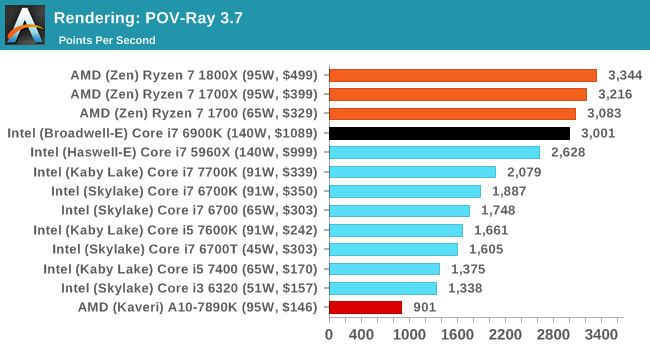

POV-Ray 3.7

A regular benchmark in most suites, POV-Ray is another ray tracer, and one that has been around for many years. It just so happens that during the run up to AMD's Ryzen launch, the code base became active again, with developers making changes to the code and pushing out updates. Our version and benchmarking started just before that happened, but given time we will see where the POV-Ray code ends up and adjust in due course.

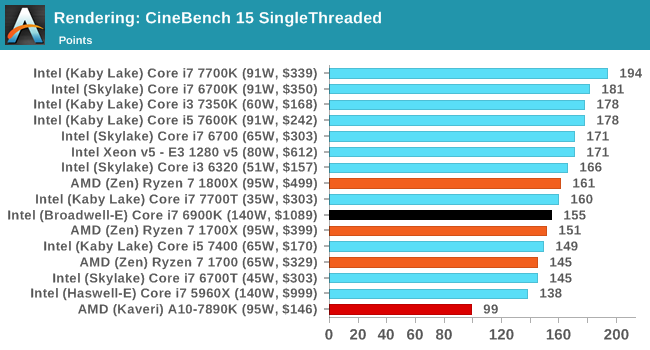

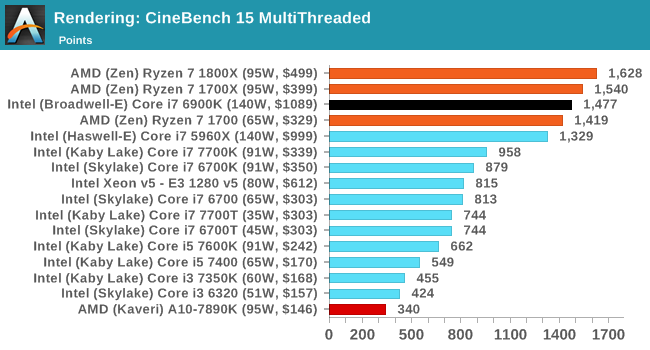

Cinebench R15

The latest version of Cinebench has also become one of those 'used everywhere' benchmarks, particularly as an indicator of single thread performance. High IPC and high frequency give performance in ST, whereas good scaling and many cores are what win out in the MT test.
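One rough way to put a number on "good scaling" is to divide the MT score by the ST score and compare that speedup against the thread count. The sketch below does this with hypothetical scores, not results from this review; `mt_scaling` is an illustrative helper, and normalizing by threads rather than physical cores is an assumption that understates efficiency on SMT parts.

```python
def mt_scaling(st_score: float, mt_score: float, threads: int) -> tuple[float, float]:
    """Return (speedup over one thread, efficiency per thread)."""
    if st_score <= 0 or threads <= 0:
        raise ValueError("st_score and threads must be positive")
    speedup = mt_score / st_score
    efficiency = speedup / threads
    return speedup, efficiency


# Hypothetical 8-core/16-thread part; both scores are made up for illustration.
speedup, efficiency = mt_scaling(st_score=150.0, mt_score=1500.0, threads=16)

print(f"speedup: {speedup:.1f}x")
print(f"per-thread efficiency: {efficiency:.1%}")
```

An efficiency well below 100% per thread is expected here, since two SMT threads share one core and multi-core clocks are usually lower than single-core boost.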

574 Comments

BurntMyBacon - Friday, March 3, 2017 - link

@Gothmoth: "gamer... as if the world is only full with idiotic people who waste their lives playing shooter or RPG´s."

PC Gaming happens to be one of the few growing areas in the PC market. Not everyone games, but for those that do, the 7700K is still worth considering. Dropping $500 on the 1800X may not be the best call for those that don't take advantage of the parallelism. Of course, the 1800X wasn't really meant for people who can't take advantage of the parallelism. AMD will have lower cost narrower processors to address that gap. I'm curious as to how the performance/price equation will stand once AMD releases their upper end 6c/12t and 4c/8t processors.

Beany2013 - Friday, March 3, 2017 - link

Sod the 1800X - I need a new VM server, and if I want all the threads (sixteen), I can either drop £450 on a Xeon E5 2620 at 2.1-3ghz (cheapest Intel 16 thread option I can find), or I can spend £100 less, and get a Ryzen 7 1700 (3.0-3.7ghz) and put that extra money towards more RAM so I can run more VMs and get more work done.

For those of us who aren't high end gamers - which is basically almost everyone, and a far more significant market - these chips may well give Intel a bloody nose in the workstation space; AMD have confirmed they'll use ECC RAM quite happily.

Photographers, videographers, CAD-CAM, developers etc are a bigger market in terms of raw units than high end gamers, and these chips look like being a pretty compelling option as it stands.

Steven R

Beany2013 - Friday, March 3, 2017 - link

(VM server for home, I should have noted - for work, I'll see how the Ryzen based opterons and supermicro mobos etc pan out - money is important in these factors, but I'm not a moron, and I'm not going to run production gear on gaming hardware, natch....)

BurntMyBacon - Friday, March 3, 2017 - link

@Beany2013: "I need a new VM server, and if I want all the threads (sixteen), I can either drop £450 on a Xeon E5 2620 at 2.1-3ghz (cheapest Intel 16 thread option I can find), or I can spend £100 less, and get a Ryzen 7 1700 (3.0-3.7ghz) and put that extra money towards more RAM so I can run more VMs and get more work done."

It is clear from this statement that you fall into the category of people that can take advantage of the parallelism. Therefore, my statement doesn't apply to the use case you presented in the slightest.

I don't disagree that the Ryzen 7 series has a lot to offer to a lot of people (myself included). If I were in the market today, I'd be looking long and hard at an R7 1700X. The minor drop in gaming performance is less significant to me than the increase in performance for many other tasks I use my computer for. I do a little bit of dabbling in a lot of different things (most of which benefit from high thread count). I have noticed that for the set of applications I have open simultaneously and the tasks I have running, my computer is more responsive with more cores or threads, but single threaded performance is still important to the individual tasks.

In my workflow: (i3 < i5/FX-8xxx < i7 <? R7)

My point was that there is in fact a not so insignificant market of people putting computers together for the primary purpose of gaming. This market appears, by all metrics, to be growing. For this market, Intel's i7-7700K or better yet i5-7600K are still viable options that provide better performance/price than AMD's current options. I'll repeat: "AMD will have lower cost narrower processors to address that gap. I'm curious as to how the performance/price equation will stand once AMD releases their upper end 6c/12t and 4c/8t processors."

Cooe - Sunday, February 28, 2021 - link

"or better yet i5-7600K"

Arguably the most short-sighted statement in this entire comments section lol. The 4c/4t i5's had roughly equal gaming performance to Ryzen at launch but with ZERO headroom left for the future. This is why the i5-7600K gets absolutely freaking ROFLSTOMPED by the R5 1600 in modern titles/game engines.

JMB1897 - Friday, March 3, 2017 - link

Compelling, but I don't think it's totally there yet. I'd be worried about the memory issues. Increased latency as you add more DIMMs and dual vs quad channel. I'd spend that extra 100 on a Xeon personally.

Sttm - Friday, March 3, 2017 - link

That's who buys off the shelf CPUs that cost $$$, Gamers. That's who AMD needs to please with their product. GAMERS. That's why AMD's stock has been tanking since Ryzen reviews went up, because GAMERS are the demographic that matters when it comes to performance CPU sales.

deltaFx2 - Saturday, March 4, 2017 - link

@Sttm: You have an inflated opinion of the impact of gamers. No, AMD's stock isn't tanking because of gamers. I suggest you also look at Nvidia's stock, which is well down from its high of ~120, to ~98. Wed-Friday, Nvidia dropped from 105 to 98, and it dipped below that to ~96 at one point. That's roughly 7-8%. The two stocks are often correlated on drops, with AMD amplifying nvidia's drop. Both do GPUs, see? Some people make tonnes of money shorting AMD (and in recent times have lost their shirt doing so).

Here's the truth: All Desktop, as per Lisa Su, is a 5 bn TAM market and gaming is part of this (let's say 50%). Nothing to scoff at, sure, but compared to laptop and server, it's a rounding error. There's NOTHING in these tests/reviews to suggest that AMD will suck in those markets; in fact, quite the opposite: power looks good, perf looks good. AMD's stock (long term) won't tank on the whims of gamers. They help get the mindshare, which is the only reason they're worth catering to (they tend to be a vocal, passionate, and sometimes irrational lot. You won't see datacenter gurus doing the stuff that gamers do. They certainly won't shoot each other over whose GPU is the best).

cmdrdredd - Saturday, March 4, 2017 - link

Believe it or not there are millions of people worldwide who pretty much use their PC for two things. The internet (web browsing, email etc) and gaming. You don't need 16 threads to check email and read forums either so gaming performance is going to be critical. It's not just the CPU performance, it's the entire platform that contributes to Gaming related performance.

sans - Thursday, March 2, 2017 - link

Yeah, stick with Intel because Intel is the standard and its products are the best for each respective market. AMD is a total failure.