The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Crysis 3

Still one of our most punishing benchmarks 3 years later, Crysis 3 needs no introduction. At its Very High settings, Crytek’s DX11 masterpiece still punishes even the best of video cards, never mind the rest. Along with its high performance requirements, Crysis 3 is a rather balanced game in terms of power consumption and vendor optimizations. As a result it gives us a good look at how our video cards stack up on average and, later on in this article, at how power consumption plays out.

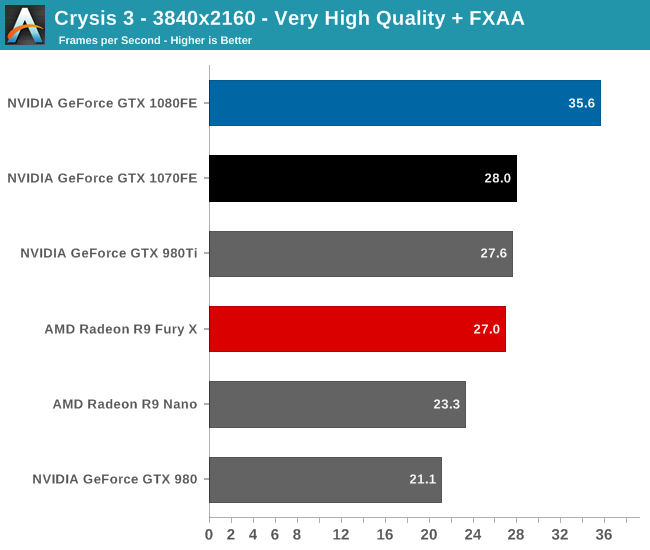

This being the first cycle we’ve used the Very High settings, it’s humorous to see a $700 video card getting 35fps on a 3-year-old game. Very High settings give Crysis 3 a level of visual quality many games still can’t match, but the tradeoff is that it obliterates most video cards. We’re probably still 3-4 years out from a video card that can run at 4K with 4x MSAA at 60fps, never mind accomplishing that without the MSAA.

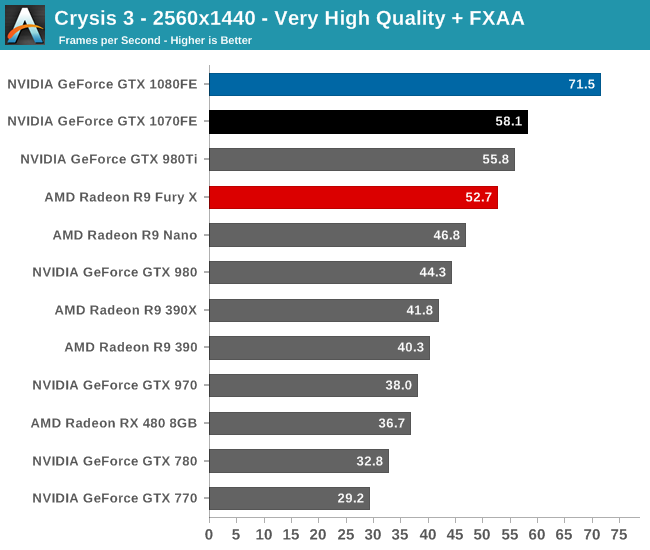

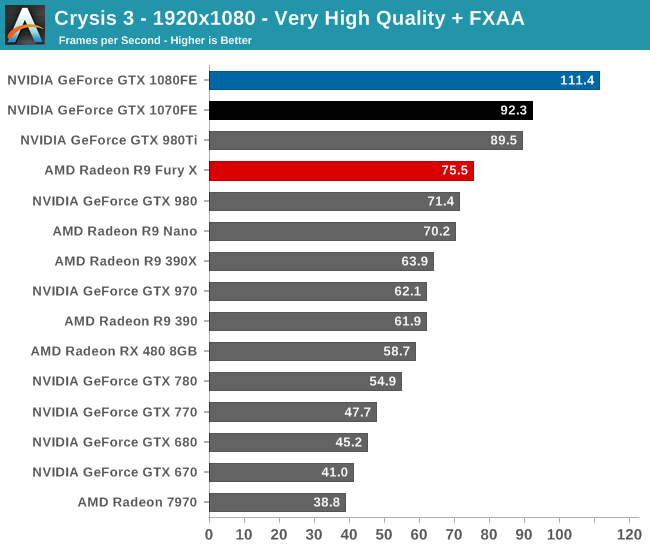

The GTX 1080 does, however, at least get the distinction of being the one and only card to crack 30fps at 4K. Though 30fps is not suggested for Crysis, the GTX 1080 can legitimately claim to be the only card that can handle the game at 4K with a playable framerate at this time. If we turn down the resolution, the GTX 1080 is also the only card to crack 60fps at 1440p. The GTX 1070 comes very close to that mark, though: at 58.1fps it is a small overclock away from 60fps.
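
To put a number on "a small overclock", the sketch below computes the relative gain needed to move from 58.1fps to 60fps. It assumes, optimistically, that frame rate scales linearly with GPU clock speed, which real games rarely do perfectly. The function name `required_speedup` is made up for this illustration.

```python
def required_speedup(current_fps: float, target_fps: float) -> float:
    """Fractional performance gain needed to go from current_fps to target_fps."""
    if current_fps <= 0:
        raise ValueError("current_fps must be positive")
    return target_fps / current_fps - 1.0


# 58.1fps is the GTX 1070's 1440p result quoted above.
gain = required_speedup(58.1, 60.0)
print(f"Gain needed to reach 60fps: {gain:.1%}")
```

Under that linear-scaling assumption the required gain is only a few percent, which is consistent with the "small overclock" description.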

Looking at the generational comparisons, the GTX 1080 and GTX 1070 lead their predecessors by a bit less than usual, at 62% and 51% respectively. The GTX 1080/1070 gap, on the other hand, is pretty typical, with the GTX 1080 leading by 27% at 4K, 23% at 1440p, and 21% at 1080p.
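
As a rough illustration of how these percentage leads translate into frame rates, the sketch below combines two figures from the text: the GTX 1070's 58.1fps at 1440p and the GTX 1080's 23% lead at that resolution. The result is only an estimate, since the published percentages are rounded and the charts hold the actual measurements. The helper names are hypothetical.

```python
def implied_fps(baseline_fps: float, lead_pct: float) -> float:
    """Frame rate of a card that leads the baseline by lead_pct percent."""
    return baseline_fps * (1.0 + lead_pct / 100.0)


def deficit_pct(lead_pct: float) -> float:
    """How far the slower card trails, in percent of the faster card's result."""
    return (1.0 - 1.0 / (1.0 + lead_pct / 100.0)) * 100.0


# Inputs taken from the text: 58.1fps (GTX 1070, 1440p) and a 23% lead.
print(f"Estimated GTX 1080 at 1440p: {implied_fps(58.1, 23):.1f}fps")
print(f"GTX 1070 deficit at 1440p: {deficit_pct(23):.1f}%")
```

Note that a 23% lead for the faster card is not a 23% deficit for the slower one: the two percentages use different baselines.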

200 Comments

Robalov - Tuesday, July 26, 2016 - link

Feels like it took 2 years longer than normal for this review :D

extide - Wednesday, July 27, 2016 - link

The Venn diagram is wrong -- for GP104 it says 1:64 speed for FP16 -- it is actually 1:1 for FP16 (i.e. same speed as FP32). (NOTE: GP100 has 2:1 FP16 -- meaning FP16 is twice as fast as FP32.)

extide - Wednesday, July 27, 2016 - link

EDIT: I might be incorrect about this actually, as I have seen information claiming both .. weird.

mxthunder - Friday, July 29, 2016 - link

It's really driving me nuts that a 780 was used instead of a 780 Ti.

yhselp - Monday, August 8, 2016 - link

Have I understood correctly that Pascal offers a 20% increase in memory bandwidth from delta color compression over Maxwell? As in a total average of 45% over Kepler just from color compression?

flexy - Sunday, September 4, 2016 - link

Sorry, late comment. I just read about GPU Boost 3.0 and this is AWESOME. What they did is expose what previously was only doable with BIOS modding, e.g. assigning the CLK bins different voltages. The problem with overclocking Kepler/Maxwell was NOT so much that you got stuck with the "lowest" overclock as the article says, but that you were simply adding a FIXED amount of clocks across the entire range of clocks, as you would do with Afterburner etc., where you simply add, say, +120 to the core. What happened here is that you may be "stable" at the max overclock (CLK bin), but since you added more CLKs to EVERY clock bin, the assigned voltages (in the BIOS) for each bin might not be sufficient. Say you have CLK bin 63, which is set to 1304MHz in a stock BIOS. Now you use Afterburner and add 150MHz, and all of a sudden this bin amounts to 1454MHz BUT STILL at the same voltage as before, which is too low for 1454MHz. You had to manually edit the table in the BIOS to shift clocks around, especially since not all Maxwell cards allowed adding voltage via software.

Ether.86 - Tuesday, November 1, 2016 - link

Astonishing review. That's the way Anandtech should be, not like the mobile section which sucks...

Warsun - Tuesday, January 17, 2017 - link

Yeah, looking at the bottom here. The GTX 1070 is on the same level as a single 480 4GB card. So that graph is wrong. http://www.hwcompare.com/30889/geforce-gtx-1070-vs...

Remember this is from GPU-Z based on hardware specs. No amount of configuration in the drivers changes this. They either screwed up or I am calling shenanigans.

marceloamaral - Thursday, April 13, 2017 - link

Nice Ryan Smith! But, my question is, is it truly possible to share the GPU with different workloads in the P100? I've read in the NVIDIA manual that "The GPU has a time sliced scheduler to schedule work from work queues belonging to different CUDA contexts. Work launched to the compute engine from work queues belonging to different CUDA contexts cannot execute concurrently."