Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM EST

The Performance Impact of Asynchronous Shading

Finally, let’s take a look at Ashes’ latest addition to its stable of DX12 headlining features: asynchronous shading/compute. While earlier betas of the game implemented a very limited form of async shading, this latest beta contains a newer, more complex implementation of the technology, inspired in part by Oxide’s experiences with multi-GPU. As a result, async shading will potentially have a greater impact on performance than in earlier betas.

Update 02/24: NVIDIA sent a note over this afternoon letting us know that asynchronous shading is not enabled in their current drivers, hence the performance we are seeing here. Unfortunately they are not providing an ETA for when this feature will be enabled.

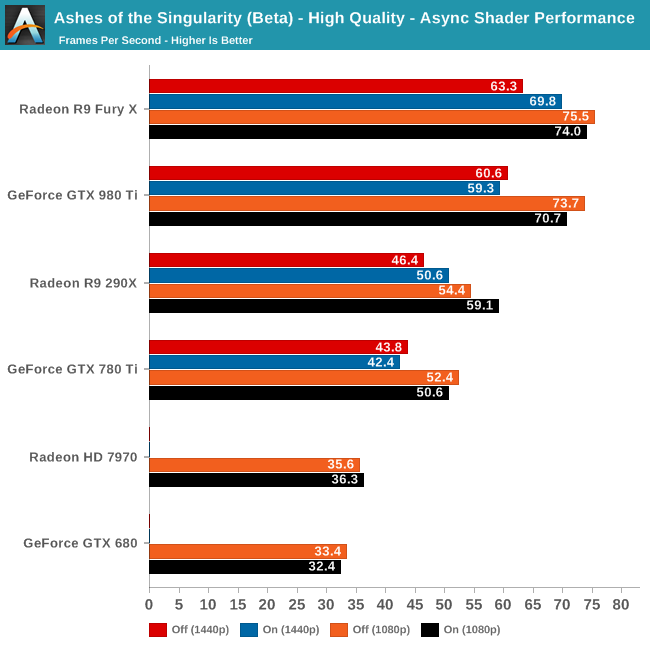

Since async shading is turned on by default in Ashes, what we’re essentially doing here is measuring the penalty for turning it off. Not unlike the DirectX 12 vs. DirectX 11 situation – and possibly even contributing to it – what we find depends heavily on the GPU vendor.

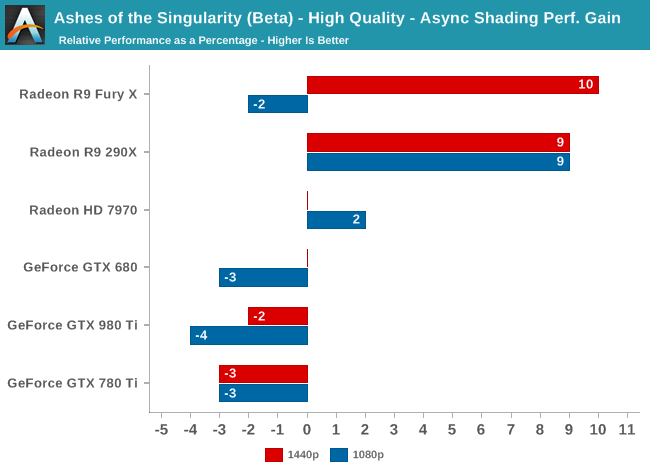

All NVIDIA cards suffer a minor regression in performance with async shading turned on. At a maximum of -4% it’s really not enough to justify disabling async shading, but at the same time it means that async shading is not providing NVIDIA with any benefit. With RTG cards on the other hand it’s almost always beneficial, with the benefit increasing with the overall performance of the card. In the case of the Fury X this means a 10% gain at 1440p, and though not plotted here, a similar gain at 4K.
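For readers who want to reproduce this kind of comparison, the percentages quoted here are simply the relative change in average frame rate between the async-off and async-on runs. Below is a minimal sketch of that arithmetic. The function name `async_delta_percent` and the frame rates are hypothetical placeholders for illustration, not values taken from our charts.

```python
def async_delta_percent(fps_async_on: float, fps_async_off: float) -> float:
    """Percent change in average frame rate from enabling async shading.

    Positive means async shading helped; negative means it cost performance.
    """
    if fps_async_off <= 0:
        raise ValueError("fps_async_off must be positive")
    return (fps_async_on - fps_async_off) / fps_async_off * 100.0


# Hypothetical average frame rates as (async on, async off).
# These are illustrative placeholders, NOT measured results.
samples = {
    "card_a": (55.0, 50.0),
    "card_b": (48.0, 50.0),
}

for name, (fps_on, fps_off) in samples.items():
    print(f"{name}: {async_delta_percent(fps_on, fps_off):+.1f}%")
```

Using the async-off run as the baseline matches how the results are framed in the text: a card that goes from 50 to 55 frames per second gains 10%, while one that drops from 50 to 48 loses 4%.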

These findings do go hand-in-hand with some of the basic performance goals of async shading, primarily that async shading can improve GPU utilization. With 4096 stream processors the Fury X has the most ALUs of any card on these charts, and given its performance in other games, the numbers we see here lend credence to the theory that RTG isn’t always able to reach full utilization of those ALUs, particularly on Ashes. If so, async shading could be a big benefit going forward.

As for the NVIDIA cards, that’s a harder read. Is it that NVIDIA already has good ALU utilization? Or is it that their architectures can’t do enough with asynchronous execution to offset the scheduling penalty for using it? Either way, when it comes to Ashes NVIDIA isn’t gaining anything from async shading at this time.

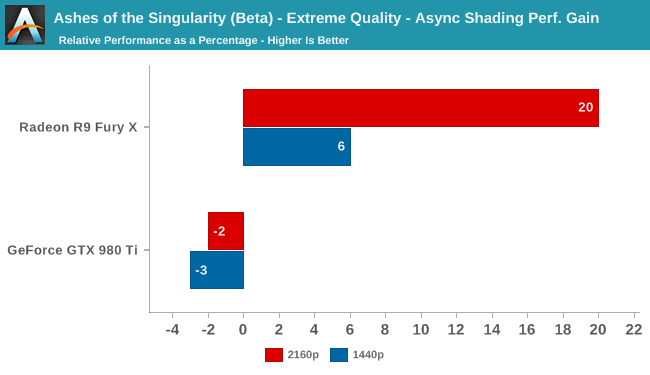

Meanwhile pushing our fastest GPUs to their limit at Extreme quality only widens the gap. At 4K the Fury X picks up nearly 20% from async shading – though a much smaller 6% at 1440p – while the GTX 980 Ti continues to lose a couple of percent from enabling it. This outcome is somewhat surprising since at 4K we’d already expect the Fury X to be rather taxed, but clearly there’s quite a bit of shader headroom left unused.

153 Comments

rarson - Wednesday, February 24, 2016 - link

Yeah, because people who bought a 980 Ti are already looking to replace them...

Aspiring Techie - Wednesday, February 24, 2016 - link

I'm pretty sure that Nvidia's Pascal cards will be optimized for DX12. Still, this gives AMD a slight advantage, which they need pretty badly now.

testbug00 - Wednesday, February 24, 2016 - link

*laughs*

Pascal is more of the same as Maxwell when it comes to gaming.

Mondozai - Thursday, February 25, 2016 - link

Pascal is heavily compute-oriented, which will affect how the gaming lineup arch will be built. Do your homework.

testbug00 - Thursday, February 25, 2016 - link

Sorry, Maxwell already can support packed FP16 operations at 2x the rate of FP32 with X1.

The rat of compute will be pretty much exclusive to GP100. Like how Kepler had a gaming line and GK110 for compute.

MattKa - Thursday, February 25, 2016 - link

*laughs*

I'd like to borrow your crystal ball...

You lying sack of shit. Stop making things up you retarded ass face.

testbug00 - Thursday, February 25, 2016 - link

What does Pascal have over Maxwell according to Nvidia again? Bolted on FP64 units?

CiccioB - Sunday, February 28, 2016 - link

I have not read anything about Pascal from nvidia outside the FP16 capabilities that are HPC oriented (deep learning).

Where have you read anything about how Pascal cores/SMX/cache and memory controller are organized? Are they still using crossbar or they finally passed to a ring bus? Are caches bigger or faster? What are the ratio of cores/ROPs/TMUs? How much bandwidth for each core? How much has the compressed memory technology improved? Have cores doubled the ALUs or they have made more independent core? How much independent? Is the HW scheduler now able to preempt the graphics thread or it still can't? How many threads can it support? Is the Voxel support better and able to be used heavily in scenes to make global illumination quality difference?

I have not read anything about this points. Have you some more info about them?

Because what I could see is that at a first glance even Maxwell was not really different than Kepler. But in reality the performance were quite different in many ways.

I think you really do not know anything about what you are talking about.

You are just expressing your desire and hopes like any other fanboy as a mirror of the frustration you have suffered all these years with the less capable AMD architecture you have been using up to now. You just hope nvidia has stopped and AMD finally made a step forward. It may be you are right. But you can't say now, nor I would going telling such stupid thing you were saying without anything as a fact.

anubis44 - Thursday, February 25, 2016 - link

I think nVidia's been caught with their pants down, and Pascal doesn't have hardware schedulers to perform async compute, either. It may be that AMD has seriously beaten them this time.

anubis44 - Thursday, February 25, 2016 - link

nVidia wasn't expecting AMD to force Microsoft's hand and release DX12 so soon. I have a feeling Pascal, like Maxwell, doesn't have hardware schedulers, either. It's beginning to look like nVidia's been check-mated by AMD here.