Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM EST

The Performance Impact of Asynchronous Shading

Finally, let’s take a look at Ashes’ latest addition to its stable of DX12 headlining features: asynchronous shading/compute. While earlier betas of the game implemented a very limited form of async shading, this latest beta contains a newer, more complex implementation of the technology, inspired in part by Oxide’s experiences with multi-GPU. As a result, async shading will potentially have a greater impact on performance than in earlier betas.

Update 02/24: NVIDIA sent a note over this afternoon letting us know that asynchronous shading is not enabled in their current drivers, hence the performance we are seeing here. Unfortunately they are not providing an ETA for when this feature will be enabled.

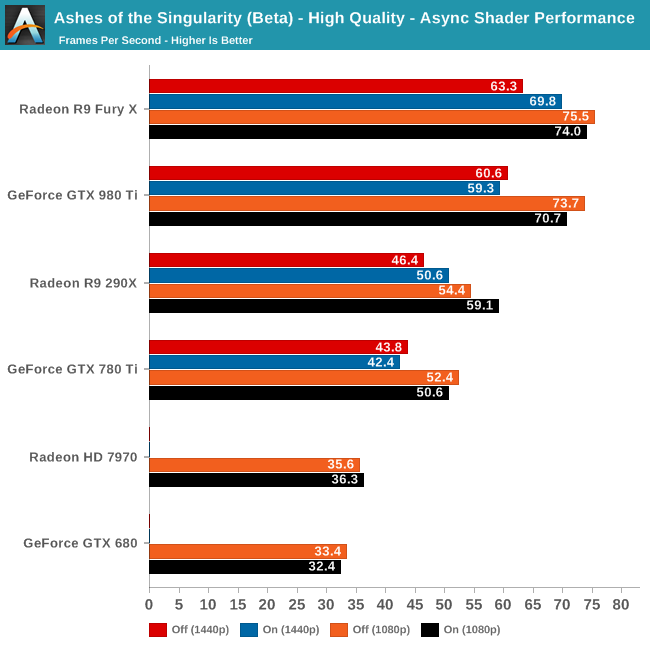

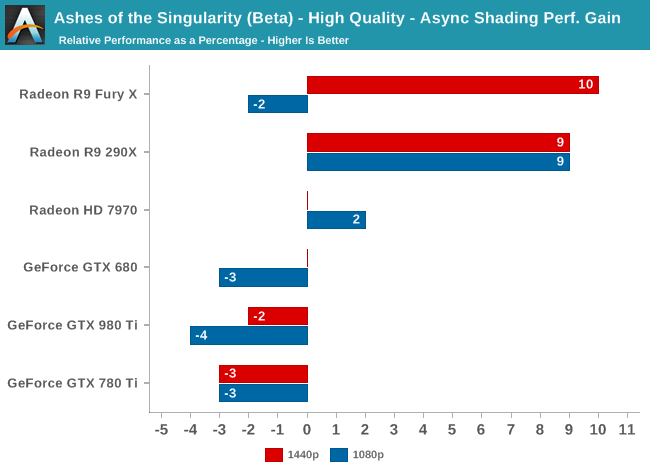

Since async shading is turned on by default in Ashes, what we’re essentially doing here is measuring the penalty for turning it off. Not unlike the DirectX 12 vs. DirectX 11 situation – and possibly even contributing to it – what we find depends heavily on the GPU vendor.

All NVIDIA cards suffer a minor regression in performance with async shading turned on. At a maximum of -4% it’s really not enough to justify disabling async shading, but at the same time it means that async shading is not providing NVIDIA with any benefit. With RTG cards on the other hand it’s almost always beneficial, with the benefit increasing with the overall performance of the card. In the case of the Fury X this means a 10% gain at 1440p, and though not plotted here, a similar gain at 4K.
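The percentages quoted here are relative changes in average frame rate between a run with async shading on and a run with it off. A minimal sketch of that arithmetic follows; the frame rates are made up to show the sign convention and are not the measured figures from the charts, and the function name `async_delta_pct` is our own.

```python
def async_delta_pct(fps_async_on: float, fps_async_off: float) -> float:
    """Percent change in frame rate from enabling async shading.

    Positive means async shading helps; negative means it costs performance.
    """
    if fps_async_off <= 0:
        raise ValueError("fps_async_off must be positive")
    return (fps_async_on - fps_async_off) / fps_async_off * 100.0


# Hypothetical frame rates, chosen only to illustrate a gain and a regression.
examples = {
    "card that gains": (55.0, 50.0),
    "card that regresses": (48.0, 50.0),
}

for label, (fps_on, fps_off) in examples.items():
    print(f"{label}: {async_delta_pct(fps_on, fps_off):+.1f}%")
```

Using the async-off run as the baseline matches how the results are described in the text: a negative number is a regression from turning the feature on.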

These findings do go hand-in-hand with some of the basic performance goals of async shading, primarily that async shading can improve GPU utilization. At 4096 stream processors the Fury X has the most ALUs out of any card on these charts, and given its performance in other games, the numbers we see here lend credence to the theory that RTG isn’t always able to reach full utilization of those ALUs, particularly on Ashes. If so, async shading could be a big benefit going forward.

As for the NVIDIA cards, that’s a harder read. Is it that NVIDIA already has good ALU utilization? Or is it that their architectures can’t do enough with asynchronous execution to offset the scheduling penalty for using it? Either way, when it comes to Ashes NVIDIA isn’t gaining anything from async shading at this time.
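One way to frame these two possibilities is a toy model in which async shading can only fill ALU time that the graphics workload leaves idle, and enabling it carries some scheduling cost. The sketch below is purely illustrative: the utilization, fill, and overhead values are invented rather than measured from any GPU, and the model ignores everything else about real hardware.

```python
def async_speedup_pct(base_utilization: float,
                      fill_fraction: float,
                      scheduling_overhead: float) -> float:
    """Toy estimate of the throughput change from enabling async shading.

    base_utilization:    fraction of ALU time busy without async, in (0, 1]
    fill_fraction:       share of the idle time that async work fills, in [0, 1]
    scheduling_overhead: fraction of throughput lost to scheduling, in [0, 1)
    """
    if not 0.0 < base_utilization <= 1.0:
        raise ValueError("base_utilization must be in (0, 1]")
    idle = 1.0 - base_utilization
    effective = (base_utilization + idle * fill_fraction) * (1.0 - scheduling_overhead)
    return (effective / base_utilization - 1.0) * 100.0


# Hypothetical scenarios; none of these numbers come from real hardware.
scenarios = {
    "already well utilized": (0.95, 0.5, 0.03),
    "lots of idle ALU time": (0.80, 0.5, 0.03),
    "cannot overlap work":   (0.90, 0.0, 0.03),
}

for label, params in scenarios.items():
    print(f"{label}: {async_speedup_pct(*params):+.1f}%")
```

In this model a small regression shows up either when utilization is already high or when little idle time can actually be filled, which is why the benchmark numbers alone cannot distinguish the two explanations.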

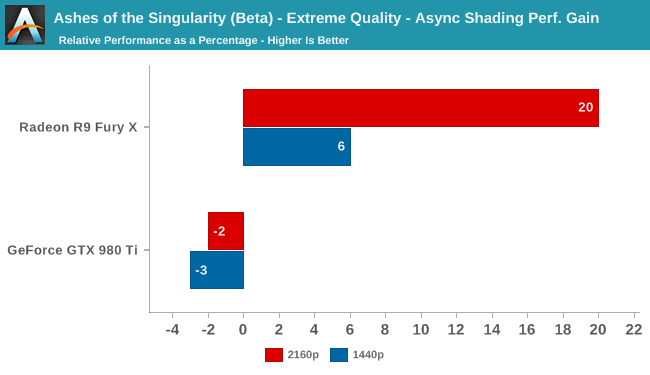

Meanwhile pushing our fastest GPUs to their limit at Extreme quality only widens the gap. At 4K the Fury X picks up nearly 20% from async shading – though a much smaller 6% at 1440p – while the GTX 980 Ti continues to lose a couple of percent from enabling it. This outcome is somewhat surprising since at 4K we’d already expect the Fury X to be rather taxed, but clearly there’s quite a bit of shader headroom left unused.

153 Comments


Friendly0Fire - Wednesday, February 24, 2016 - link

You don't have enough data to know this.

Once the second generation of DX12 cards come out, then you can analyze the jumps and get a better idea. Ideally you'd wait for three generations of post-DX12 GPUs to get the full picture. As it is, all we know is that AMD's DX12 driver is better than their DX11 driver... which ain't saying much.

The_Countess - Thursday, February 25, 2016 - link

except we have 3 generations of DX12 cards already on AMD's side, starting with the hd7970, which still holds its own quite well.

and we've had multiple DX12 and vulkan benchmarks already and in every one of them the 290 and 390 in particular beat the crap out of nvidia's direct competition. in fact they often beat or match the card above them as well

as for drivers. AMD's dx11 drivers are fine. they just didn't invest bucketloads of money in game specific optimizations like nvidia did, but instead focused on fixing the need for those optimizations in the first place. nvidia's investment doesn't offer long term benefits (a few months, then people move on to the next game) and that level of optimization in the drivers is impossible and even unwanted in low level API's.

basically nvidia will be losing its main competitive advantage this year.

hero4hire - Friday, February 26, 2016 - link

I think what he meant was we don't have enough test cases to conclude mature dx12 performance. The odds are pointing to AMD having faster gpus for dx12. But until multiple games are out, and preferably one or two "dx12" noted driver, we're speculating. I thought this was clear from the article?

It's a stretch calling 3 generations of dx12 released cards too. I guess if we add up draft revisions there are 50 generations of AC wireless.

You could state that because AMDs arch is targeting dx12, it looks to give an across the board performance win in dx12 next gen games. But again we only have 1 beta game as a test case. Just wait and it will be a fact or not. No need to backfill the why

CiccioB - Sunday, February 28, 2016 - link

Right, they didn't invest bunchload in optimizing current game, they just paid a single company to make a benchmark game using their most strong point in DX12, super mega threaded (useless) engine. Not different than nvidia using super mega geometry (uselessly) complex scenes helped by tessellation.

Perfect marketing: most return with less investments.

Unfortunately a single game with a bunchload of ASYN compute thread added just for the joy of it is not a complete DX12 trend: what about games that are going to support Voxel global illumination that AMD HW cannot handle?

We'll see where the game engine will point to. And if this is another faux-fire that AMD has started up these years seeing they are in big trouble.

BTW: it is stupid to say that 390 "beat up the crap out of anything else" that is using a different API. All you could see is that a beefed up GPU like Hawaii consuming 80+W with respect to the competition manages finally to pass it as it should have done at day one. But this was only because of the use of a different API with different capacities that the other GPU could not benefit from.

You can't say it is better if with current standard API (DX11) that beefed up GPU can't really do better.

If you are so excited by the fact that a GPU 33% bigger than another is able to get almost 20% more in performance with a future API and best conditions at the moment a complete new architecture is going to be launched by both red and green teams, well, you really demonstrate how biased you are. Whoever has bought a 290 (then 390) card back in the old days during all these months has been biting dust (and losing Watts) and the small boost at the end of these cards life is really a shallow thing to be excited for.

lilmoe - Wednesday, February 24, 2016 - link

I like what AMD has done with "future proofing" their cards and drivers for DirectX12. But people buy graphics cards to play games TODAY. I'd rather get a graphics card with solid performance in what we have now rather than get one and sit down playing the waiting game.

1) It's not like NVidia's DX12 performance is "awful", you'll still get to play future games with relatively good performance.

2) The games you play now won't be obsolete for years.

3) I agree with what others have said; AOS is just one game. We DON'T know if NVidia cards won't get any performance gains from DX12 under other games/engines.

ppi - Wednesday, February 24, 2016 - link

You do not buy a new gfx card to play games TODAY, but for playing TOMORROW, next month, quarter and then for a few years (few being ~two), until the performance in new games regresses to the point when you bite the bullet and buy a new one.

Most people do not have unlimited budget to upgrade every six months when a new card claims performance crown.

Friendly0Fire - Wednesday, February 24, 2016 - link

It's unlikely that the gaming market will be flooded by DX12 games within six months. It's unlikely to happen within a few years, even. Look at how slow DX10 adoption was.

anubis44 - Thursday, February 25, 2016 - link

I think you're quite wrong about this. Windows 10 adoption is spreading like wildfire in comparison to Windows XP --> Vista. DX10 wasn't available as a free upgrade to Vista the way DX12 is in Windows 10.

Despoiler - Thursday, February 25, 2016 - link

Just about every title announced for 2016 is DX12 and some are DX12 only. There are many already released games that have DX12 upgrades in the works.

Space Jam - Wednesday, February 24, 2016 - link

Nvidia leading is always irrelevant. Get with the program :p

Nvidia's GPUs lead for two years? Doesn't matter, AMD based on future performance.

DX11 the only real titles in play? Doesn't matter, the minuscule DX12/Vulkan sample size says buy AMD!