Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM EST

The Performance Impact of Asynchronous Shading

Finally, let’s take a look at the latest addition to Ashes’ stable of headlining DX12 features: asynchronous shading/compute. While earlier betas of the game implemented a very limited form of async shading, this latest beta contains a newer, more complex implementation of the technology, inspired in part by Oxide’s experiences with multi-GPU. As a result, async shading will potentially have a greater impact on performance than in earlier betas.

Update 02/24: NVIDIA sent a note over this afternoon letting us know that asynchronous shading is not enabled in their current drivers, hence the performance we are seeing here. Unfortunately, they are not providing an ETA for when this feature will be enabled.

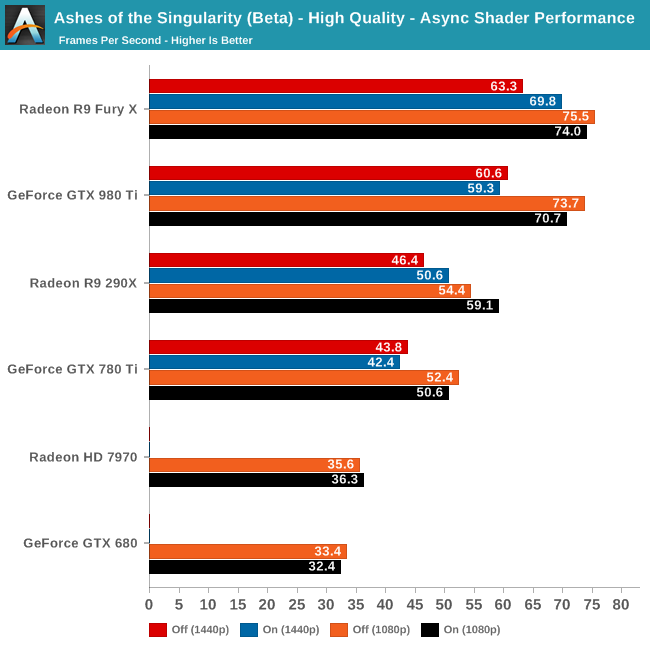

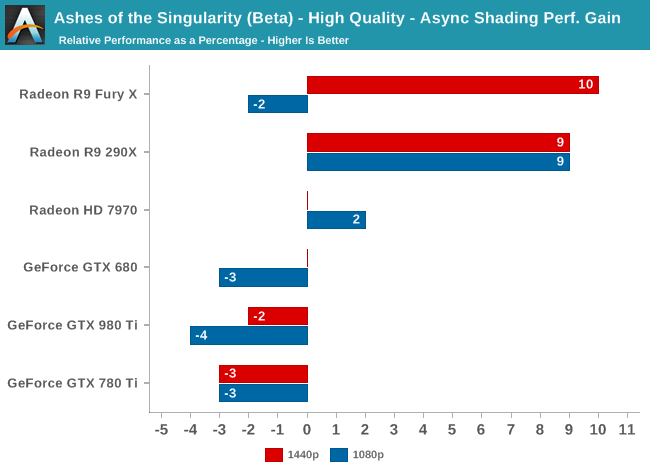

Since async shading is turned on by default in Ashes, what we’re essentially doing here is measuring the penalty for turning it off. Not unlike the DirectX 12 vs. DirectX 11 situation – and possibly even contributing to it – what we find depends heavily on the GPU vendor.

All NVIDIA cards suffer a minor regression in performance with async shading turned on. At worst it is -4%, which is really not enough to justify disabling async shading, but at the same time it means that async shading is not providing NVIDIA with any benefit. With RTG (AMD’s Radeon Technologies Group) cards, on the other hand, it’s almost always beneficial, with the benefit increasing with the overall performance of the card. In the case of the Fury X this means a 10% gain at 1440p and, though not plotted here, a similar gain at 4K.
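The percentages quoted here compare each card’s frame rate with async shading on against the same card with it off. A minimal Python sketch of that arithmetic, assuming the change is expressed relative to the async-off run (the function name and the frame rates below are hypothetical illustrations, not measured results):

```python
def async_gain_percent(fps_async_off: float, fps_async_on: float) -> float:
    """Percent change in average frame rate from enabling async shading,
    relative to the async-off run (an assumed baseline).
    Negative values indicate a regression."""
    if fps_async_off <= 0:
        raise ValueError("async-off frame rate must be positive")
    return (fps_async_on - fps_async_off) * 100 / fps_async_off


# Hypothetical frame rates, for illustration only:
print(async_gain_percent(50.0, 55.0))  # 10.0  (a gain, like the Fury X case)
print(async_gain_percent(50.0, 48.0))  # -4.0  (a regression, like the worst NVIDIA case)
```

Because the baseline is the async-off run, the same absolute frame-rate difference yields a slightly different percentage than it would if the async-on result were used as the reference instead.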

These findings do go hand-in-hand with some of the basic performance goals of async shading, primarily that async shading can improve GPU utilization. At 4096 stream processors, the Fury X has the most ALUs of any card on these charts, and given its performance in other games, the numbers we see here lend credence to the theory that RTG isn’t always able to reach full utilization of those ALUs, particularly on Ashes. If so, async shading could be a big benefit going forward.

As for the NVIDIA cards, that’s a harder read. Is it that NVIDIA already has good ALU utilization? Or is it that their architectures can’t do enough with asynchronous execution to offset the scheduling penalty for using it? Either way, when it comes to Ashes NVIDIA isn’t gaining anything from async shading at this time.
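To make the utilization argument concrete, here is a deliberately simplified toy model in Python; it is not how any real GPU, driver, or Ashes itself schedules work. It assumes a hypothetical GPU with a fixed number of ALU lanes per time step, a graphics pass that may leave some lanes idle, and a batch of compute work. With async off, compute runs after graphics; with async on, compute fills the idle lanes, at the cost of an assumed fixed scheduling overhead. All names and numbers are illustrative.

```python
def frame_steps(graphics_load, compute_work, capacity, async_on, async_overhead=0):
    """Toy model: time steps needed to finish one frame.

    graphics_load  -- ALU lanes the graphics work occupies in each step (hypothetical)
    compute_work   -- total lane-steps of compute work in the frame
    capacity       -- ALU lanes available per step
    async_on       -- if True, compute work fills lanes left idle by graphics
    async_overhead -- assumed extra steps charged for scheduling when async is on
    """
    remaining = compute_work
    steps = len(graphics_load)
    if async_on:
        steps += async_overhead
        for used in graphics_load:
            idle = capacity - used
            # Compute work soaks up whatever lanes graphics left idle this step.
            remaining -= min(idle, remaining)
    # Any compute work still left runs afterwards at full width (ceiling division).
    steps += (remaining + capacity - 1) // capacity
    return steps


def utilization(graphics_load, compute_work, capacity, steps):
    """Fraction of available lane-steps that did useful work."""
    return (sum(graphics_load) + compute_work) / (steps * capacity)


if __name__ == "__main__":
    lanes = 64
    underfed = [40] * 4    # graphics leaves 24 lanes idle each step
    saturated = [64] * 4   # graphics already fills every lane
    for name, load in (("underfed", underfed), ("saturated", saturated)):
        off = frame_steps(load, 96, lanes, async_on=False)
        on = frame_steps(load, 96, lanes, async_on=True, async_overhead=1)
        print(f"{name}: off={off} steps ({utilization(load, 96, lanes, off):.0%}), "
              f"on={on} steps ({utilization(load, 96, lanes, on):.0%})")
```

In this toy model, when graphics leaves lanes idle, turning async on finishes the frame in 5 steps instead of 6 even after paying the overhead; when graphics already saturates the ALUs there is nothing to fill, so async on takes 7 steps instead of 6. That mirrors the two possibilities raised above (already-high utilization, or a scheduling cost that outweighs the gain) without telling us which one actually applies to NVIDIA’s hardware.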

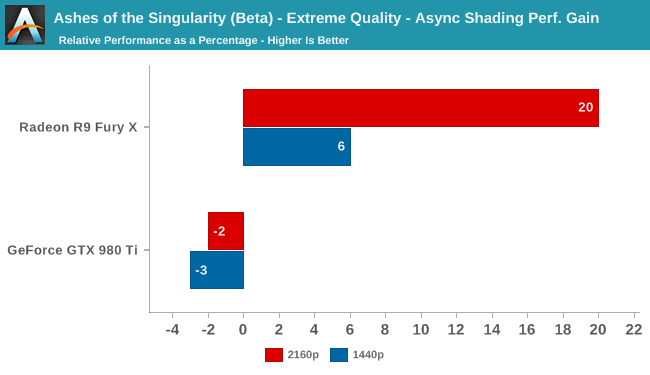

Meanwhile, pushing our fastest GPUs to their limit at Extreme quality only widens the gap. At 4K the Fury X picks up nearly 20% from async shading (though a much smaller 6% at 1440p), while the GTX 980 Ti continues to lose a couple of percent from enabling it. This outcome is somewhat surprising, since at 4K we’d already expect the Fury X to be rather taxed, but clearly there’s quite a bit of shader headroom left unused.

153 Comments


dustwalker13 - Thursday, February 25, 2016

or ... not to put too fine a point on it, Nvidia's program and strategy to optimize games for their cards (aka in some instances actively sabotaging the competition's performance by using specialized operations that run great on Nvidia's hardware but very poorly on others') has led to near-perfect usage of DX11 for them while AMD was struggling along. On Ashes, where there is no such interference, AMD seems to be able to utilize the strong points of its architecture (which seems better suited to DX12), while Nvidia has had no chance to "optimize" the competition out of the top spot ... too bad spaceships do not have hair ... ;P

prtskg - Thursday, February 25, 2016

Lol! Spaceships don't have hair. I'd have upvoted your comment if there were such an option.

HalloweenJack - Thursday, February 25, 2016

Waiting for Nvidia to "fix" async, just as they promised DX12 drivers for Fermi 4 months ago...

Harry Lloyd - Thursday, February 25, 2016

Well, AMD has had bad DX11 performance for years; they clearly focused their architecture on Mantle/DX12 because they knew they would be producing GPUs for consoles. That will finally pay off this year.

NVIDIA focused on DX11, giving them a big advantage for four years, and now they have to catch up, if not with Pascal, then with Volta next year.

doggface - Thursday, February 25, 2016

Personally, as the owner of an nVidia card, I have to say bravo, AMD. Those are some impressive gains, and I look forward to the coming DX12 GPU wars, from which we will all benefit.

minijedimaster - Thursday, February 25, 2016

Exactly. Also, as a current Nvidia card owner, I don't feel the need to rush to a Windows 10 upgrade. It seems I have several months or more before I'll be looking into it. In the meantime, DX11 will do just fine for me.

mayankleoboy1 - Thursday, February 25, 2016

AMD released the 16.2 Crimson Edition drivers with more performance for AotS. Will you be re-benchmarking the game?

Link: http://support.amd.com/en-us/kb-articles/Pages/AMD...

albert89 - Thursday, February 25, 2016

The reason Nvidia is losing ground to AMD is that their GPUs are predominantly serial (DX11), while AMD's, as it is turning out, are parallel (DX12) and have been for a number of years. Not only that, but AMD is on its 3rd generation of parallel architecture.

watzupken - Thursday, February 25, 2016

Not sure if it's possible to retest this with a Tonga card with 4GB of VRAM, i.e. an R9 380X or 380? Just a little curious why it seems to be lagging behind by quite a fair bit.

Anyway, it's good to see the investment in DX12 paying off for AMD. At least owners of older AMD cards can get a performance boost when DX12 becomes more popular this year and next. Not too sure about Nvidia cards, but they seem to be very focused on optimizing for DX11 with their current-gen cards, and that certainly seems to be the right thing for them, since they are still doing very well.

silverblue - Friday, February 26, 2016

Tonga has more ACEs than Tahiti, so this could be one of those circumstances where, given more memory, Tonga actually beats out the 7970/280X. However, according to AT's own article on the subject (http://www.anandtech.com/show/9124/amd-dives-deep-...), AMD admits the extra ACEs are likely overkill, though to be fair, I think with DX12 and VR we're about to find out.