The IBM POWER8 Review: Challenging the Intel Xeon

by Johan De Gelas on November 6, 2015 8:00 AM EST- Posted in

- IT Computing

- CPUs

- Enterprise

- Enterprise CPUs

- IBM

- POWER

- POWER8

The L4-cache and Memory Subsystem

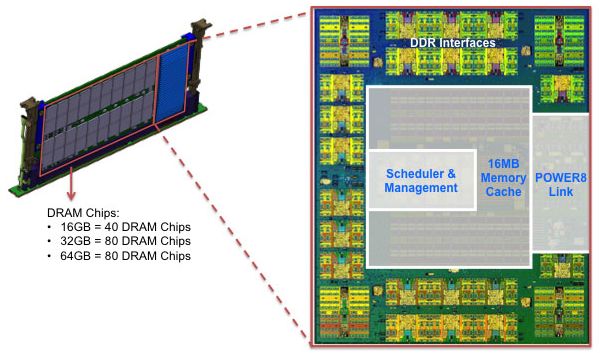

Each POWER8 memory controller has access to four "Custom DIMMs" or CDIMMs. Each CDIMMs is in fact a "Centaur" chip and 40 to 80 DRAM chips. The Centaur chip contains the DDR3 interfaces, the memory management logic and a 16 MB L4-cache.

The 16 MB L4-cache is eDRAM technology like the on-die L3-cache. Let us see how the CDIMMs look in reality.

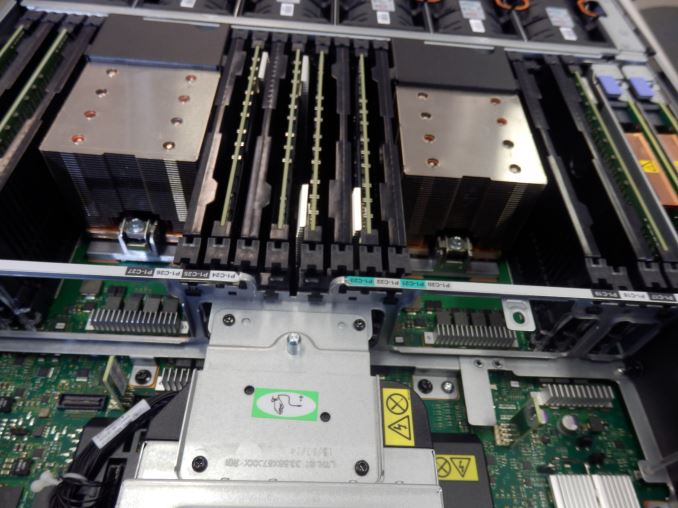

Considering that 4Gb DRAM chips were available in mid 2013, the 1600 MHz 2Gb DRAM chips used here look a bit outdated. Otherwise the (much) more expensive 64GB CDIMMs use the current 4Gb DRAM chips. The S822L has 16 slots and can thus use up to 1TB (64GB x 16) in DIMMs.

Considering that many Xeon E5 servers are limited to 768 GB, 1 TB is more than competitive. Some Xeon E5 servers can reach 1.5 TB with 64 GB LR-DIMMs but not every server supports this rather expensive memory technology. It is very easy to service the CDIMMs: a gentle push on the two sides will allow you to slide them out. The black pieces of plastic between the CDIMMS are just place-holders that protect the underlying memory slots. For our testing we had CDIMMs installed in 8 of our system's 16 slots.

The Centaur chip acts as a 16MB L4-cache to save memory accesses and thus energy, but it needs quite a bit of power (10-20 W) itself and as a result is covered by heatsink. CDIMMs have ECC enabled (8+1 for ECC) and have also an extra spare DRAM chip. As result, a CDIMM has 10 DRAM chips while offering capacity of 8 chips.

That makes the DRAM subsystem of the S822L much more similar to the E7 memory subsystem with the "Scalable memory interconnect" and "Jordan Creek" memory buffer technology than to the typical Xeon E5 servers.

146 Comments

View All Comments

Der2 - Friday, November 6, 2015 - link

Life's good when you got power.BlueBlazer - Friday, November 6, 2015 - link

Aye, the power bills will skyrocket.Brutalizer - Friday, November 13, 2015 - link

It is confusing that sometimes you are benchmarking cores, and sometimes cpus. The question here is "which is the fastest cpu, x86 or POWER8" - and then you should bench cpu vs cpu. Not core vs core. If a core is faster than another core says nothing, you also need to know how many cores there are. Maybe one cpu has 2 cores, and the other has 1.000 cores. So when you tell which core is fastest, you give incomplete information so I still have to check up how many cores and then I can conclude which cpu is fastest. Or can I? There are scaling issues, just because one benchmark runs well on one core, does not mean it runs equally well when run on 18 cores. This means I can not extrapolate from one core to the entire cpu. So I still am not sure which cpu is fastest as you give me information about core performance. Next time, if you want to talk about which cpu is faster, please benchmark the entire cpu. Not core, as you are not talking about which core is faster.Here are 20+ world records by SPARC M7 cpu. It is typically 2-3x faster than POWER8 and Intel Xeon, all the way up to >10x faster. For instance, M7 achieves 87% higher saps than E5-2699v3.

https://blogs.oracle.com/BestPerf/

The big difference between POWER and SPARC vs x86, is scalability and RAS. When I say scalability, I talk about scale-up business Enterprise servers with as many as 16- or even 32-sockets, running business software such as SAP or big databases, that require one large single server. SGI UV2000 that scales to 10.000s of cores can only run scale-out HPC number crunching workloads, in effect, it is a cluster. There are no customers that have ever run SGI UV2000 using enterprise business workloads, such as SAP. There are no SAP benchmarks nor database benchmarks on SGI UV2000, because they can only be used as clusters. The UV2000 are exclusively used for number crunching HPC workloads, according to SGI. If you dont agree, I invite you to post SAP benchmarks with SGI UV2000. You wont find any. The thing is, you can not use a small cluster with 10.000 cores and replace a big 16- or 32-socket Unix server running SAP. Scale-out clusters can not run SAP, only scale-up servers can. There does not exist any scale-out clustered SAP benchmarks. All the highest SAP benchmarks are done by single large scale-up servers having 16- or 32-sockets. There are no 1.000-socket clustered servers on the SAP benchmark list.

x86 is low end, and have for decades stopped at maximum 8-sockets (when we talk about scale-up business servers), and just recently we see 16- and 32- sockets scale-up business x86 servers on the market (HP Kraken, and SGI UV300H) but they are brand new, so performance is quite bad. It takes a couple of generations until SGI and HP have learned and refined so they can ramp up performance for scale-up servers. Also, Windows and Linux has only scaled to 8-sockets and not above, so they need a major rewrite to be able to handle 16-sockets and a few TB of RAM. AIX and Solaris has scaled to 32-sockets and above for decades, were recently rewritten to handle 10s of TB of RAM. There is no way Windows and Linux can handle that much RAM efficiently as they have only scaled to 8-sockets until now. Unix servers scale way beyond 8-sockets, and perform very well doing so. x86 does not.

The other big difference apart from scalability is RAS. For instance, for SPARC and POWER you can hot swap everything, motherboards, cpu, RAM, etc. Just like Mainframes. x86 can not. Some SPARC cpus can replay instructions if something went wrong. x86 can not.

For x86 you typically use scale-out clusters: many cheap 1-2 socket small x86 servers in a huge cluster just like Google or Facebook. When they crash, you just swap them out for another cheap server. For Unix you typically use them as a single scale-up server with 16- or 32-sockets or even 64-sockets (Fujitsu M10-4S) running business software such as SAP, they have the RAS so they do not crash.

zeeBomb - Friday, November 6, 2015 - link

New author? Niiiice!Ryan Smith - Friday, November 6, 2015 - link

Ouch! Poor Johan.=(Johan is in fact the longest-serving AT editor. He's been here almost 11 years, just a bit longer than I have.

hans_ober - Friday, November 6, 2015 - link

@Johan you need to post this stuff more often, people are forgetting you :)JohanAnandtech - Friday, November 6, 2015 - link

well he started with "niiiiice". Could have been much worse. Hi zeeBomb, I am Johan, 43 years old and already 17 years active as a hardware editor. ;-)JanSolo242 - Friday, November 6, 2015 - link

Reading Johan reminds me of the days of AcesHardware.com.joegee - Friday, November 6, 2015 - link

The good old days! I remember so many of the great discussions/arguments we had. We had an Intel guy, an AMD guy, and Charlie Demerjian. Johan was there. Mike Magee would stop in. So would Chris Tom, and Kyle Bennett. It was an awesome collection of people, and the discussions were FULL of real, technical points. I always feel grateful when I think back to Ace's. It was quite a place.JohanAnandtech - Saturday, November 7, 2015 - link

And many more: Paul Demone, Paul Hsieh (K7-architect), Gabriele svelto ... Great to see that people remember. :-)