The AMD Radeon R9 Fury Review, Feat. Sapphire & ASUS

by Ryan Smith on July 10, 2015 9:00 AM EST

GRID Autosport

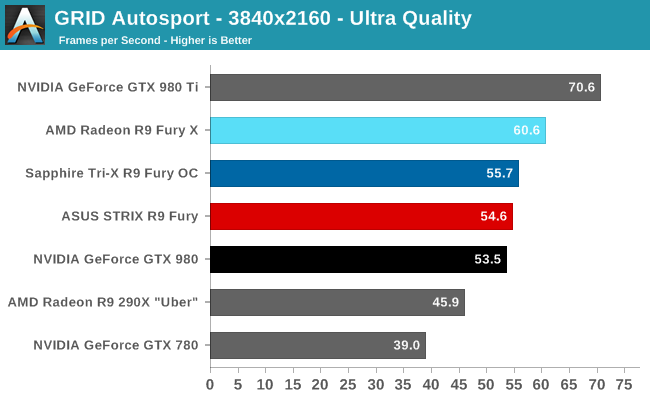

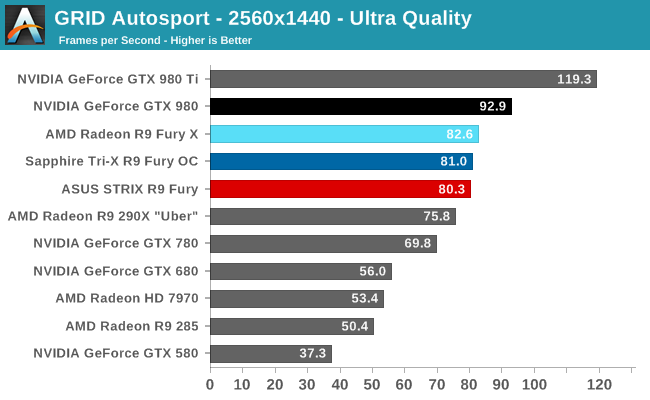

For the racing game in our benchmark suite we have Codemasters’ GRID Autosport. Codemasters continues to set the bar for graphical fidelity in racing games, delivering realistic-looking environments layered with additional graphical effects. Based on their in-house EGO engine, GRID Autosport includes a DirectCompute-based advanced lighting system in its highest quality settings, which incurs a significant performance penalty on lower-end cards but does a good job of emulating more realistic lighting within the game world.

In our R9 Fury X review, we pointed out how AMD is CPU limited in this game below 4K, and while the R9 Fury’s lower performance mitigates that to a certain extent, it doesn’t change the fact that AMD is still CPU limited here. The end result is that at 4K the R9 Fury is only 2% ahead of the GTX 980, less than it needs to be to justify the price premium, and at 1440p it’s fully CPU limited and trailing the GTX 980 by 14%.
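As a rough illustration of how lead and deficit percentages like these are derived from average frame rates, here is a minimal Python sketch. The function name `percent_delta` is hypothetical, and the frame rates are placeholder values chosen only to reproduce the 2% and 14% figures; they are not the measured results from our charts.

```python
def percent_delta(card_fps: float, baseline_fps: float) -> float:
    """Return how far card_fps is ahead of (+) or behind (-) baseline_fps, in percent."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return (card_fps / baseline_fps - 1.0) * 100.0


# Placeholder frame rates (assumptions for illustration, NOT measured data).
lead_at_4k = percent_delta(51.0, 50.0)        # card slightly ahead of the baseline
deficit_at_1440p = percent_delta(86.0, 100.0)  # card behind the baseline

print(f"4K: {lead_at_4k:+.1f}%")
print(f"1440p: {deficit_at_1440p:+.1f}%")
```

Note that the baseline matters: a card trailing by 14% means the baseline card is about 16% faster, not 14%, so it pays to be explicit about which card is in the denominator.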

On an absolute basis AMD isn’t faring too poorly here, but AMD will need to continue dealing with and resolving CPU bottlenecks on DX11 titles if they want the R9 Fury to stay ahead of NVIDIA, as DX11 games are not going away quite yet.

288 Comments

Shadow7037932 - Friday, July 10, 2015

Yes! Been waiting for this review for a while.

Drumsticks - Friday, July 10, 2015

Indeed! Good that it came out so early too :D

I'm curious @anandtech in general, given the likely newer state of the Fury/X's drivers, do you think that the performance deltas between each Fury card and the respective NVIDIA card will swing further toward or into AMD's favor as they solidify their drivers?

Samus - Friday, July 10, 2015

So basically if you have $500 to spend on a video card, get the Fury, if you have $600, get the 980 Ti. Unless you want something liquid cooled/quiet, then the Fury X could be an attractive albeit slower option.

Driver optimizations will only make the Fury better in the long run as well, since the 980 Ti (Maxwell 2) drivers are already well optimized as it is a pretty mature architecture.

I find it astonishing you can hack off 15% of a card's resources and only lose 6% performance. AMD clearly has a very good (but power hungry) architecture here.

witeken - Friday, July 10, 2015

No, not at all. You must look at it the other way around: Fury X has 15% more resources, but is <<15% faster.

0razor1 - Friday, July 10, 2015

Smart, you :) :D This thing is clearly not balanced. That's all there is to it. I'd say X for the WC at $100 more makes prime logic.

thomascheng - Saturday, July 11, 2015

Balance is not very conclusive. There are games that take advantage of the higher resources and blow past the 980 Ti, and there are games that don't and are therefore slower. Most likely due to developers not having access to Fury and its resources before. I would say no game uses that many shading units, and you won't see a benefit until games do. The same with HBM.

FlushedBubblyJock - Wednesday, July 15, 2015

What a pathetic excuse, apologists for amd are so sad.

AMD got it wrong, and the proof is already evident.

No, NONE OF US can expect anandtech to be honest about that, nor its myriad of amd fanboys,

but we can all be absolutely certain that if it was nVidia who had done it, a full 2 pages would be dedicated to their massive mistake.

I've seen it a dozen times here over ten years.

When will you excuse lie artists ever face reality and stop insulting everyone else with AMD marketing wet dreams coming out of your keyboards ?

Will you ever ?

redraider89 - Monday, July 20, 2015

And you are not an nvidia fanboy are you? Hypocrite.

redraider89 - Monday, July 20, 2015

Typical fanboy, ignore the points and go straight to name calling. No, you are the one people should be sad about, delusional that they are not a fanboy when they are.

redraider89 - Monday, July 20, 2015

Proof that intel and nvidia wackos are the worst type of people, arrogant, snide, insulting, childish. You are the poster boy for an intel/nvidia sophomoric fanboy.