Intel & Cray Land Contract for 2 Dept. of Energy Supercomputers

by Ryan Smith on April 9, 2015 5:30 PM EST

Late last year the United States Department of Energy kicked off the awards phase of its CORAL supercomputer upgrade project, which will see three of the DoE’s biggest national laboratories receive new supercomputers for their ongoing research work. The first two supercomputers, Summit and Sierra, were awarded to the IBM/NVIDIA duo for Oak Ridge National Laboratory and Lawrence Livermore National Laboratory respectively. Following up on that, the final part of the CORAL program is being awarded today, with Intel and Cray receiving orders to build two new supercomputers for Argonne National Laboratory.

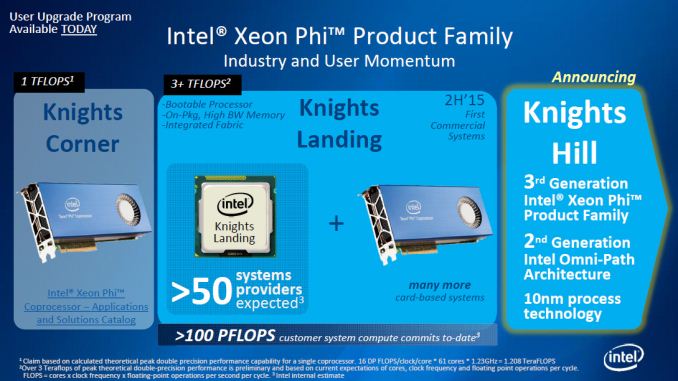

The flagship of the two computers is Aurora, a next-generation Cray “Shasta” supercomputer scheduled for delivery in 2018. Designed to deliver 180 PetaFLOPS of peak compute performance, Aurora will heavily leverage Intel’s suite of HPC technologies. Primarily powered by a future version of Intel’s Xeon Phi accelerators, likely the 10nm-fabbed Knights Hill, Aurora will combine the Xeon Phi with Intel’s Xeon CPUs (Update: Intel has clarified that the Xeons are for management purposes only), an unnamed Intel-developed non-volatile memory solution, and Intel’s high-speed, silicon photonics-driven Omni-Path interconnect technology. Going forward, Intel is calling this setup its HPC scalable system framework.

At 180 PFLOPS, Aurora will be in the running for the title of world’s fastest supercomputer. Whether it actually takes the crown will depend on where exactly ORNL’s Summit ends up: Summit is spec’d for between 150 PFLOPS and 300 PFLOPS, and Aurora exceeds only the lower bound of that range. All told, this makes Aurora 18 times faster than its predecessor, the 10 PFLOPS Mira supercomputer. Meanwhile Aurora’s peak power consumption of 13MW is 2.7 times Mira’s, which works out to an overall increase in energy efficiency of 6.67x (18 / 2.7).
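As a quick check of these ratios, here is a minimal Python sketch that recomputes them from the article’s own figures: 180 PFLOPS for Aurora, 10 PFLOPS for Mira, and the stated 2.7x peak-power ratio. The function names are illustrative, and the 2.7x input is the article’s rounded ratio rather than a measured power figure.

```python
# Figures quoted in the article; POWER_RATIO is the article's (rounded) ratio.
AURORA_PFLOPS = 180.0
MIRA_PFLOPS = 10.0
POWER_RATIO = 2.7  # Aurora's 13MW peak power relative to Mira's


def speedup(new_pflops: float, old_pflops: float) -> float:
    """Ratio of peak (Rpeak) performance between two systems."""
    return new_pflops / old_pflops


def efficiency_gain(perf_ratio: float, power_ratio: float) -> float:
    """Performance-per-watt improvement: performance ratio divided by power ratio."""
    return perf_ratio / power_ratio


perf_ratio = speedup(AURORA_PFLOPS, MIRA_PFLOPS)
gain = efficiency_gain(perf_ratio, POWER_RATIO)

print(f"Speedup over Mira: {perf_ratio:.0f}x")
print(f"Energy efficiency gain: {gain:.2f}x")
```

This prints an 18x speedup and a 6.67x efficiency gain, the same figures quoted for Aurora versus Mira. Because the power ratio is itself rounded, the efficiency figure should be read as approximate.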

**US Department of Energy CORAL Supercomputers**

| | Aurora | Theta | Summit | Sierra |
|---|---|---|---|---|
| CPU Architecture | Intel Xeon (Management Only) | Intel Xeon (Management Only) | IBM POWER9 | IBM POWER9 |
| Accelerator Architecture | Intel Xeon Phi (Knights Hill?) | Intel Xeon Phi (Knights Landing) | NVIDIA Volta | NVIDIA Volta |
| Performance (Rpeak) | 180 PFLOPS | 8.5 PFLOPS | 150-300 PFLOPS | 100+ PFLOPS |
| Power Consumption | 13MW | 1.7MW | ~10MW | N/A |
| Nodes | N/A | N/A | 3,400 | N/A |
| Laboratory | Argonne | Argonne | Oak Ridge | Lawrence Livermore |
| Vendor | Intel + Cray | Intel + Cray | IBM | IBM |

The second supercomputer is Theta, a much smaller system intended to serve as an early production system for Argonne; it is scheduled for delivery in 2016. Theta is essentially a system one generation earlier than Aurora, meant for further development: it is based on a Cray XC design and integrates Intel Xeon processors along with Knights Landing Xeon Phi processors. Theta is scheduled to deliver a peak performance of 8.5 PFLOPS while consuming 1.7MW of power.
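To put Theta’s numbers alongside the other CORAL systems, the short Python sketch below converts the peak-performance and power figures from the table into GFLOPS per watt, using the identity that 1 PFLOPS per MW equals 1 GFLOPS per watt. Sierra is left out because no power figure is listed, and Summit’s ~10MW is approximate, so its result is a range rather than a precise number. The `SYSTEMS` layout and `gflops_per_watt` name are illustrative choices, not anything from Intel or the DoE.

```python
from typing import Dict, Tuple

# (low PFLOPS, high PFLOPS, MW) taken from the CORAL table;
# systems with a single performance spec use low == high.
SYSTEMS: Dict[str, Tuple[float, float, float]] = {
    "Aurora": (180.0, 180.0, 13.0),
    "Theta": (8.5, 8.5, 1.7),
    "Summit": (150.0, 300.0, 10.0),  # power is approximate (~10MW)
}


def gflops_per_watt(pflops: float, megawatts: float) -> float:
    """1 PFLOPS / 1 MW = 1e15 / 1e6 FLOPS/W = 1e9 FLOPS/W = 1 GFLOPS/W."""
    return pflops / megawatts


for name, (low, high, mw) in SYSTEMS.items():
    low_eff = gflops_per_watt(low, mw)
    high_eff = gflops_per_watt(high, mw)
    if low == high:
        print(f"{name}: {low_eff:.1f} GFLOPS/W")
    else:
        print(f"{name}: {low_eff:.1f}-{high_eff:.1f} GFLOPS/W")
```

On these figures Theta delivers about 5 GFLOPS/W against roughly 13.8 for Aurora, while Summit’s spec range works out to 15-30 GFLOPS/W. These are peak (Rpeak) numbers, not sustained efficiency.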

The combined value of the contract for the two systems is over $200 million, the bulk of which is for the Aurora supercomputer. Interestingly, the prime contractor for these machines is not system builder Cray but Intel, with Cray serving as a subcontractor for system integration and manufacturing. According to Intel, this is the first time in nearly two decades that it has been awarded the prime contractor role for a supercomputer, its last such venture being ASCI Red in 1996. Aurora in turn marks the latest in a number of Xeon Phi supercomputer design wins for Intel, joining existing wins such as the Cori and Trinity supercomputers. Meanwhile, for partner Cray this is also the first design win for its Shasta family of designs.

Finally, Argonne and Intel have released a bit of information on what Aurora will be used for. Fields planned for research on Aurora include battery and solar panel improvements, wind turbine design and placement, improving engine noise and efficiency, and biofuel research, including more effective disease control for biofuel crops.

Source: Intel

35 Comments


JoJoman88 - Thursday, April 9, 2015

But can it play GTA V at 4K 60Hz?

testbug00 - Friday, April 10, 2015

Unless GTA V has been made to run on the Phi architecture or on a Core architecture without a GPU, no.

Whether it can run at 60Hz depends on what screen(s) the supercomputer is plugged into. If that screen is 60Hz and GTA V can run, it will be running at 60Hz.

tuxRoller - Thursday, April 9, 2015

Well, I guess this means we won't be seeing 1 exaflop by 2020.

Still, pretty impressive efficiency from IBM/NVIDIA.

Morawka - Thursday, April 9, 2015

Mostly NVIDIA there, but something's got to push those cores, I suppose.

Morawka - Thursday, April 9, 2015

Maybe Intel will take the hint and support NVLink, or come up with something comparable with a lot of bandwidth for the CPU-to-GPU interconnect.

testbug00 - Friday, April 10, 2015

You make it sound like they're not developing something like that. PCIe 4.0 will offer just under 16GBps per x8 link (16GT/s per lane). NVLink is about 20GBps per link (8 lanes at 20Gbps each).

Now, NVLink products should ship at least 3-4 months before PCIe 4.0. Even so, at worst Intel should end up with 75-80% of the bandwidth. I would guess Intel has something at least on par with NVLink in production, however.

Knights Landing can be socketed and used as the main system CPU, btw.

Kevin G - Friday, April 10, 2015

Intel seems to be content with using QPI for internal chip-to-chip communication and Omni-Path for external node-to-node communication. Considering that these supercomputers will be using silicon photonics at some point, it wouldn't surprise me if, long term, Intel merged QPI and Omni-Path into a single physical optical link with support for the higher layers of both.

At the moment, IBM seems content with electrical links for internal usage, but they have already used massive optical switches in POWER7 systems to provide a single system image for the cluster (though the entire system was not coherent, it did have a flat memory address space throughout). If IBM wants to go optical for inter-chip communication, NVIDIA will also have to step up.

testbug00 - Friday, April 10, 2015

Dunno what Knights Landing uses, but I'm pretty sure it doesn't use QPI. Happy to be wrong.

IntelUser2000 - Saturday, April 11, 2015

One article says they decided not to make a dual-socket version, which means only single-socket configurations are available.

That means either a standalone socketed version or a card version. Neither needs QPI. Of course, there's also a third version that adds an external Omni-Path interconnect.

extide - Monday, April 13, 2015

Knights Landing can use either PCIe or QPI, depending on the form factor (card vs. standalone chip).