GIGABYTE Server Releases ARM Solutions using AppliedMicro and Annapurna Labs SoCs

by Ian Cutress on March 24, 2015 12:51 PM EST

As Johan points out in his deep dive of ARM in the server market, given a focused strategy, new ARM solutions have the potential to disrupt some very typical x86 applications. Turning the ARM instruction sets or the ARM architecture into silicon is one part of the equation; producing something more tangible around that silicon has been the quest of a few solution providers. As one would expect, these solutions typically focus on the enterprise, and it will take time for them to filter down, depending on use case and application. It would seem that today, for the opening of the WHD.global event in Germany, GIGABYTE’s server arm is launching a couple of ‘Server on a Chip’ solutions.

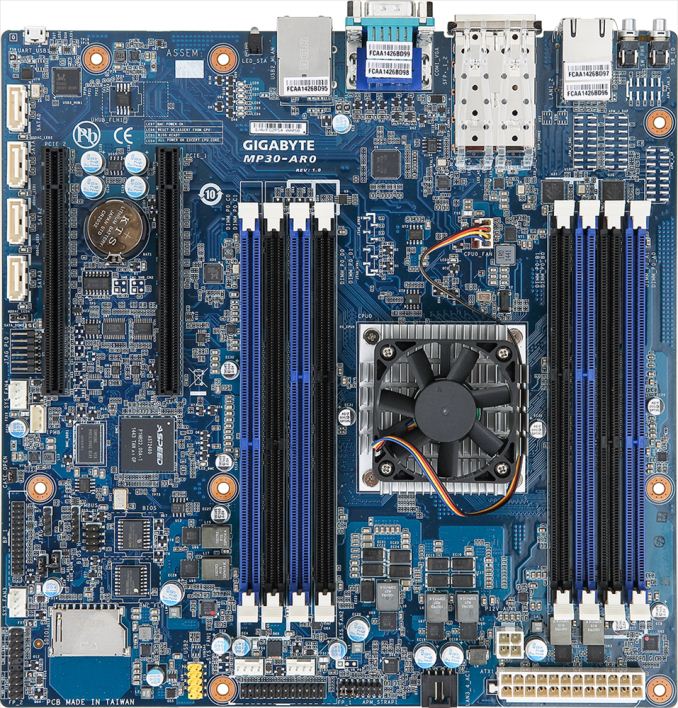

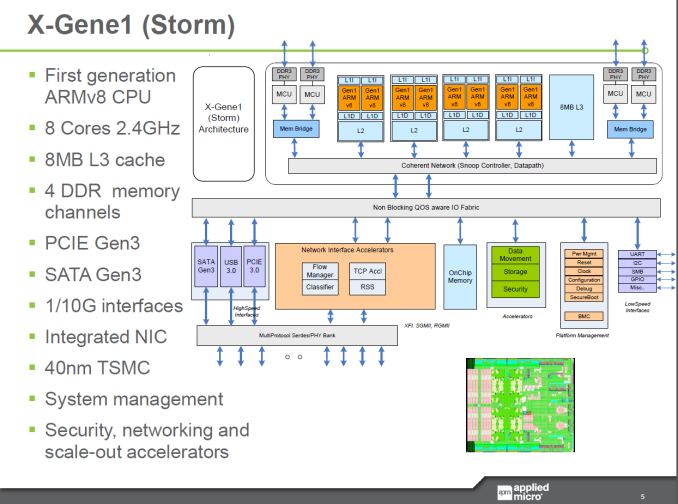

First up is a model built around the AppliedMicro X-Gene, which implements the ARMv8-A architecture. The MP30-AR0 uses the 45W X-Gene1, a 40nm eight-core solution running at 2.4 GHz, with each pair of cores sharing an L2 cache and an overarching 8MB L3 cache.

The system uses quad-channel DDR3 with ECC, with two DIMMs per channel for a total of 128GB. Two 10GbE SFP+ ports are supplied, along with two RJ-45 1 GbE ports from a Marvell 88E1512 controller. Four SATA 6 Gbps ports are part of the SoC, along with two PCIe 3.0 x8 slots in an x16 form factor. There is no USB 3.0, but USB 2.0 is included, as is a VGA output for the AST2400 server control IC, which means another control network port on the rear IO.
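As a quick sanity check on the memory figures above, the short sketch below works out the slot count and the module size needed to reach the quoted maximum. The channel count, DIMMs per channel and 128GB total come from the specifications above; the assumption that the maximum is reached with identical modules in every slot, and the function name `dimm_layout`, are ours.

```python
# Figures quoted for the MP30-AR0: quad-channel DDR3, two DIMMs per channel, 128GB total.
CHANNELS = 4
DIMMS_PER_CHANNEL = 2
TOTAL_GB = 128


def dimm_layout(channels, dimms_per_channel, total_gb):
    """Return (slot_count, gb_per_dimm), assuming identical modules in every slot."""
    slots = channels * dimms_per_channel
    per_dimm, remainder = divmod(total_gb, slots)
    if remainder:
        raise ValueError("total capacity does not divide evenly across the slots")
    return slots, per_dimm


slots, per_dimm_gb = dimm_layout(CHANNELS, DIMMS_PER_CHANNEL, TOTAL_GB)
print(f"{slots} DIMM slots, {per_dimm_gb}GB per module for {TOTAL_GB}GB total")
```

In other words, hitting the 128GB ceiling would require a 16GB module in each of the eight slots.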

The MP30-AR0 is compliant with the ARM Server Base System Architecture (SBSA) and Server Base Boot Requirements (SBBR) standards, and is designed for cloud and scale-out computing. While GIGABYTE’s server division has been hard at work enabling their products to be sold at retail, the ARM-based platforms will most likely be a distributor/B2B-only offering, at least for now. This motherboard/SoC system will also be available in a 1U rackmount server (the R120-P30) with four hot-swappable bays and a single PCIe riser card, as shown above.

On the storage side, GIGABYTE Server is releasing the D120-S3G, a rackmount powered by the Annapurna Labs Alpine AL5140, which translates to a 1.7 GHz quad-core Cortex-A15 solution with a 10W TDP, using the ARMv7 instruction set.

This system seems aimed more at cold storage, offering support for 16 SATA 6 Gbps drives with both RAID 5 and RAID 6 supported. The motherboard has only one memory slot, but two gigabit Ethernet ports are flanked by two integrated 10GbE SFP+ ports as well. An AST2400 supplies the network control, and GIGABYTE is stating support for LTS Linux Kernel 3.10 and Ubuntu 14.04. If the product page is anything to go by, this is still technically a work in progress as they have not officially announced any other connectivity.
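To put the RAID options in perspective, here is a rough sketch of the usable capacity of a fully populated chassis. It assumes all 16 bays form a single array of identical drives, that RAID 5 gives up one drive's worth of space to parity and RAID 6 gives up two, and it uses a hypothetical 6TB drive size, since GIGABYTE has not stated which drives will ship. The function name is ours as well.

```python
# Drives' worth of capacity consumed by parity in a single array.
PARITY_DRIVES = {5: 1, 6: 2}
# Conventional minimum drive counts for each level.
MIN_DRIVES = {5: 3, 6: 4}


def usable_capacity_tb(drive_count, drive_tb, raid_level):
    """Usable capacity of one array of identical drives, ignoring filesystem overhead."""
    if raid_level not in PARITY_DRIVES:
        raise ValueError("only RAID 5 and RAID 6 are modelled here")
    if drive_count < MIN_DRIVES[raid_level]:
        raise ValueError("not enough drives for this RAID level")
    return (drive_count - PARITY_DRIVES[raid_level]) * drive_tb


BAYS = 16        # from the D120-S3G specification
DRIVE_TB = 6.0   # hypothetical drive size, not a GIGABYTE figure

for level in (5, 6):
    print(f"RAID {level}: {usable_capacity_tb(BAYS, DRIVE_TB, level):.0f}TB usable")
```

Under those assumptions RAID 6 costs one extra drive of capacity over RAID 5 in exchange for surviving a second drive failure, which tends to matter more as arrays get wider.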

No word on release dates or pricing, although demonstrations at events often mean that products might go on sale within the next couple of months.

16 Comments

ant6n - Tuesday, March 24, 2015 - link

I wonder whether it's possible to run this with a geforce or radeon in ubuntu.

Soulkeeper - Tuesday, March 24, 2015 - link

with some effort, any distro targeting arm could work. although the games/binaries you run will need to support arm

I'm actually pretty impressed by this, even tho it's only 40nm

geekfool - Tuesday, March 24, 2015 - link

you're probably better off waiting for the "Cavium" Support for NVIDIA GPU Accelerators in their 64-bit ARMv8-A ThunderX Processor Family if you want to use a GPU as a co-processor etc.

Gigaplex - Tuesday, March 24, 2015 - link

Does NVIDIA even have a GeForce driver blob for ARM?

Samus - Tuesday, March 24, 2015 - link

You would think they would, being an ARM licensee and being on their 5th gen Tegra SoC... But we are talking two different divisions that rarely cross paths within NVidia.

Azurael - Wednesday, March 25, 2015 - link

I would imagine it would work so long as the card is supported by Nouveau. I've run newer Nvidia (and AMD, for that matter) cards in PPC64 machines. Obviously you don't get the full functionality of the binary blobs, but especially in the case of Radeons, the binary drivers are an unstable POS even on a machine on which they are 'supposed' to work so it's no great loss.

dragonsqrrl - Tuesday, March 24, 2015 - link

What are those WD drives in the server O_O? They look like Black's, but that lineup currently tops out at 4TB. Actually I can't find a 6.3TB drive in any of WD's lineups. Unannounced product leak?

WithoutWeakness - Tuesday, March 24, 2015 - link

They're likely Ae series drives. An appliance like this would likely use Re, Se, or Ae series drives rather than WD Blacks or other consumer-oriented drives as they're better suited and designed for large arrays like this in large enclosures. The Ae series is designed for low power consumption and long-term "archive" storage. Plus I checked the product page and they have a photo of a bunch of drives labeled 6.3TB in a server that looks very similar to the one above: http://www.wdc.com/en/products/products.aspx?id=13...

dragonsqrrl - Tuesday, March 24, 2015 - link

Ya you're right. I just looked at the Black, Re, and Se lineups and assumed that nothing else that might go into a large array server existed. I guess the Black's wouldn't work for that either. I haven't even heard of the Ae until now. It's interesting that it's the only lineup with a 6.3TB capacity.

DanNeely - Tuesday, March 24, 2015 - link

Yup. FYI. There're two major technical reasons why consumer grade drives don't belong in large storage servers. The first is firmware that keeps trying to read a bad sector for so long before giving up that raid controllers will decide the entire drive has failed. When it's your only copy of data, 10 seconds of hail mary attempts before giving up is a good thing since occasionally it will manage to get a good read eventually (and then remap the sector out of use); in a raid array you want a rapid failure so that redundancy features can quickly get the data from an alternate location. The other issue is vibration tolerance; server grade drives are built to handle vibration loads that end up rapidly killing cheaper consumer drives. Lastly, while not really a technical issue, drives marketed at the enterprise will have better warranties: Longer lasting, faster replacement after a failure, (for SSDs: a higher guaranteed write limit) etc.