NVIDIA Tegra X1 Preview & Architecture Analysis

by Joshua Ho & Ryan Smith on January 5, 2015 1:00 AM EST

GPU Performance Benchmarks

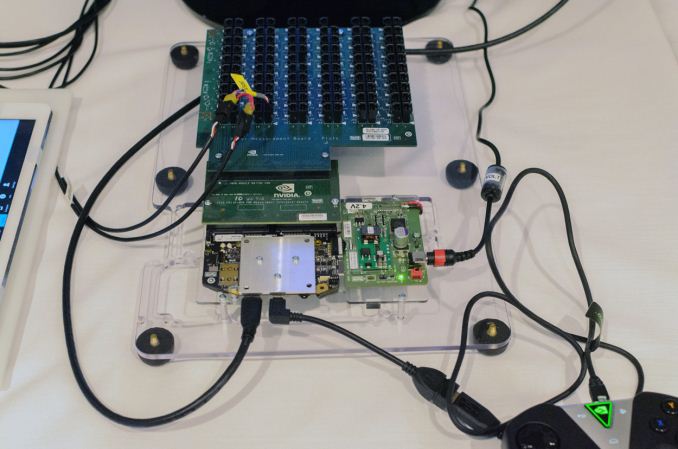

As part of today’s announcement of the Tegra X1, NVIDIA also gave us a short opportunity to benchmark the X1 reference platform under controlled circumstances. In this case NVIDIA had several reference platforms plugged in and running, pre-loaded with various benchmark applications. The reference platforms themselves had a simple heatspreader mounted on them, intended to replicate the ~5W heat dissipation capabilities of a tablet.

The purpose of this demonstration was twofold: first, to show that X1 was up and running and capable of NVIDIA’s promised features; second, to showcase the platform’s strong GPU performance. NVIDIA also had an iPad Air 2 on hand for power testing, running Apple’s latest and greatest SoC, the A8X. NVIDIA has made it clear that they consider Apple the SoC manufacturer to beat right now, as A8X’s PowerVR GX6850 GPU is the fastest among currently shipping SoCs.

It goes without saying that the results should be taken with an appropriate grain of salt until we can get Tegra X1 back to our labs. However, we witnessed all of the testing first-hand, and as best as we can tell NVIDIA’s tests were sincere.

NVIDIA Tegra X1 Controlled Benchmarks

| Benchmark | A8X (AT) | K1 (AT) | X1 (NV) |
| --- | --- | --- | --- |
| BaseMark X 1.1 Dunes (Offscreen) | 40.2fps | 36.3fps | 56.9fps |
| 3DMark 1.2 Unlimited (Graphics Score) | 31781 | 36688 | 58448 |
| GFXBench 3.0 Manhattan 1080p (Offscreen) | 32.6fps | 31.7fps | 63.6fps |

For benchmarking, NVIDIA had BaseMark X 1.1, 3DMark Unlimited 1.2 and GFXBench 3.0 up and running. Our X1 numbers (NV) come from the benchmarks we ran as part of NVIDIA’s controlled test, while the A8X and K1 numbers (AT) come from our own Mobile Bench.

NVIDIA’s stated goal with X1 is to (roughly) double K1’s GPU performance. While these controlled benchmarks mostly don’t get quite that far, X1 is still a significant improvement over K1. NVIDIA does meet its goal under Manhattan, where performance almost exactly doubles, while 3DMark and BaseMark X improve by 59% and 57% respectively.
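The relative gains can be recomputed directly from the benchmark table. The sketch below is only an arithmetic check, not a tool NVIDIA or AnandTech used; the dictionary layout and function names are hypothetical. Keep in mind that the X1 figures come from NVIDIA's controlled test while the A8X and K1 figures come from Mobile Bench, so the cross-platform ratios inherit that caveat.

```python
# Benchmark results from the table: (A8X, K1, X1).
# Units differ per row (fps or score), but ratios within a row are unitless.
RESULTS = {
    "BaseMark X 1.1 Dunes (fps)": (40.2, 36.3, 56.9),
    "3DMark 1.2 Unlimited (graphics score)": (31781, 36688, 58448),
    "GFXBench 3.0 Manhattan 1080p (fps)": (32.6, 31.7, 63.6),
}


def gain_pct(new: float, old: float) -> float:
    """Percentage improvement of `new` over `old` (100 means doubled)."""
    return (new / old - 1.0) * 100.0


def summarize(results):
    """Return {benchmark: (X1 gain over K1 in %, X1 gain over A8X in %)}."""
    return {
        name: (gain_pct(x1, k1), gain_pct(x1, a8x))
        for name, (a8x, k1, x1) in results.items()
    }


if __name__ == "__main__":
    for name, (vs_k1, vs_a8x) in summarize(RESULTS).items():
        # Rounded to the nearest whole percent for display.
        print(f"{name}: +{vs_k1:.0f}% vs K1, +{vs_a8x:.0f}% vs A8X")
```

Unrounded, BaseMark X comes out at about 56.7% over K1, and Manhattan at just over 100%.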

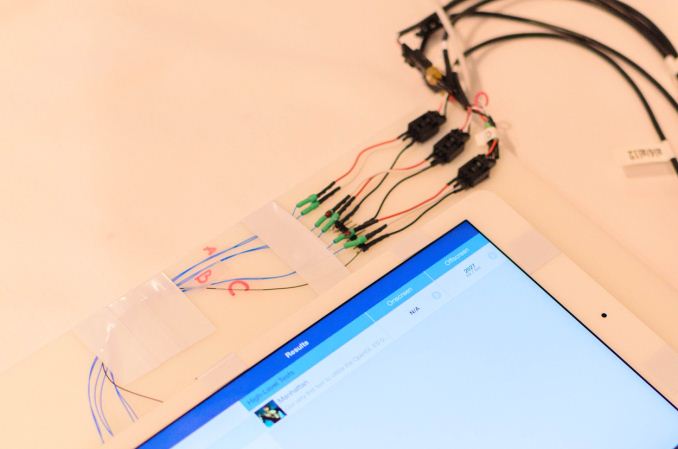

Finally, for power testing NVIDIA had an X1 reference platform and an iPad Air 2 rigged to measure power consumption on the devices’ respective GPU power rails. The purpose of this test was to show that, thanks to X1’s energy optimizations, X1 can deliver the same GPU performance as the A8X while drawing significantly less power; in other words, that X1’s GPU is more efficient than A8X’s GX6850. To be clear, these are GPU-only power measurements, not total platform power measurements, so they don’t account for CPU differences (e.g. A57 versus Enhanced Cyclone) or the power impact of LPDDR4.
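NVIDIA did not detail how its measurement tools compute their averages. As a generic illustration only, average rail power is the energy drawn over a run divided by the run's duration, where instantaneous power is rail voltage times rail current. The sketch below assumes a list of timestamped voltage/current readings; the `RailSample` type and the sample values are hypothetical, and because it only looks at one rail it shares the GPU-only caveat above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class RailSample:
    """Hypothetical reading from a GPU power rail (not NVIDIA's tool format)."""
    t_s: float    # timestamp, seconds
    volts: float  # rail voltage, V
    amps: float   # rail current, A


def average_power_w(samples: list[RailSample]) -> float:
    """Time-weighted average power in watts.

    Integrates instantaneous power P = V * I with the trapezoidal rule to get
    energy in joules, then divides by the elapsed time.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    energy_j = 0.0
    for prev, cur in zip(samples, samples[1:]):
        dt = cur.t_s - prev.t_s
        if dt <= 0:
            raise ValueError("timestamps must be strictly increasing")
        p_prev = prev.volts * prev.amps
        p_cur = cur.volts * cur.amps
        energy_j += 0.5 * (p_prev + p_cur) * dt
    return energy_j / (samples[-1].t_s - samples[0].t_s)


if __name__ == "__main__":
    # Made-up readings: power ramps from 1.0 W to 2.0 W and back over 2 s.
    readings = [
        RailSample(0.0, 1.0, 1.0),
        RailSample(1.0, 1.0, 2.0),
        RailSample(2.0, 1.0, 1.0),
    ]
    print(f"{average_power_w(readings):.2f} W")  # prints "1.50 W"
```

Weighting by time rather than taking a plain mean of the readings keeps irregular sampling intervals from skewing the result.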

Top: Tegra X1 Reference Platform. Bottom: iPad Air 2

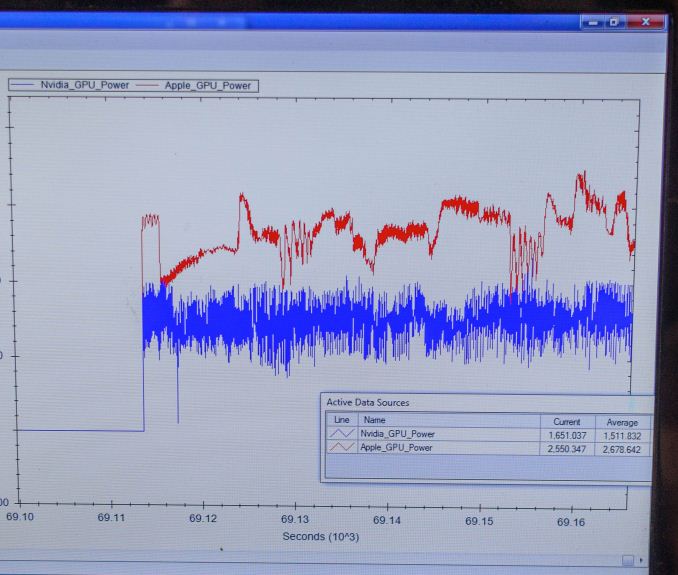

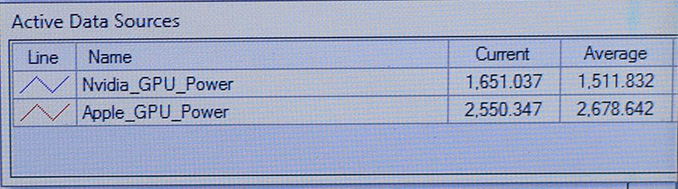

For power testing, NVIDIA ran Manhattan 1080p (offscreen) with X1’s GPU underclocked to match the A8X’s performance at roughly 33fps. Pictured below are the average GPU power consumption figures (in watts) for the X1 and A8X respectively.

NVIDIA’s tools show the X1’s GPU averaging 1.51W over the Manhattan run, while the A8X’s GPU averages 2.67W: over a watt more for otherwise equal performance. This test is especially notable since both SoCs are manufactured on the same TSMC 20nm SoC process, which means that any power differences between the two GPUs come down to design efficiency rather than a manufacturing advantage.
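Because both GPUs were held to the same frame rate, the efficiency comparison reduces to a ratio of the two power figures. The sketch below works through that arithmetic with the reported 1.51W and 2.67W numbers; the function name is hypothetical, and 33fps is only the approximate matched frame rate, which is why the code shows that the perf-per-watt ratio does not depend on it.

```python
from __future__ import annotations


def iso_performance_comparison(fps: float, power_a_w: float, power_b_w: float) -> dict[str, float]:
    """Compare two GPUs running the same workload at the same frame rate.

    With fps matched, (fps / power_a_w) / (fps / power_b_w) simplifies to
    power_b_w / power_a_w, so the exact fps value cancels out of the ratio.
    """
    fps_per_watt_a = fps / power_a_w
    fps_per_watt_b = fps / power_b_w
    return {
        "extra_watts_b": power_b_w - power_a_w,           # absolute power gap
        "power_saving_a": 1.0 - power_a_w / power_b_w,    # fraction saved by A
        "perf_per_watt_ratio": fps_per_watt_a / fps_per_watt_b,
        "fps_per_watt_a": fps_per_watt_a,
        "fps_per_watt_b": fps_per_watt_b,
    }


if __name__ == "__main__":
    # A = Tegra X1 GPU, B = A8X GPU; GPU-rail power only, ~33fps Manhattan 1080p.
    r = iso_performance_comparison(fps=33.0, power_a_w=1.51, power_b_w=2.67)
    print(f"A8X GPU draws {r['extra_watts_b']:.2f} W more")
    print(f"X1 GPU uses {r['power_saving_a']:.0%} less power")
    print(f"X1 perf/W advantage: {r['perf_per_watt_ratio']:.2f}x")
```

On these figures the X1 GPU uses roughly 43% less power, or about 1.77x the performance per watt, for the GPU rail alone.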

There are a number of other variables we’ll ultimately need to take into account here, including clockspeeds, relative die area of the GPU, and total platform power consumption. But assuming NVIDIA’s numbers hold up in final devices, X1’s GPU is looking very good out of the gate – at least when tuned for power over performance.

194 Comments


Mayuyu - Monday, January 5, 2015

Apple should start licensing Nvidia GPUs instead of Imagination GPUs for next-generation iDevices.

twotwotwo - Monday, January 5, 2015

It might be hard (or impossible) for them to do that without breaking compatibility with existing iOS games written around the PowerVR's quirks.

Krysto - Monday, January 5, 2015

The OpenGL stuff shouldn't be "impossible". Even the texture compression. I think developers can deal with that. Where Apple really shot itself in the foot is with the launch of the Metal API, though. Now they're stuck with Imagination for at least a few more years until they make it more abstract to work with multiple GPU architectures and not so..."metal". Or they can wait for OpenGL NG to appear, which will probably take just as much time.

techconc - Monday, January 5, 2015

How exactly did Apple "shoot itself in the foot" with Metal? They have a solution right now for mobile apps that rivals what is possible on other platforms. All the major game engines have already migrated to Metal. nVidia can show these generic OpenGL benchmarks all they want, but in practice, graphics-intensive apps on the A7 and A8 series chips are seeing far greater efficiency and performance improvements.

OpenGL NG sounds great in concept, but it takes forever for a consortium like Khronos to develop new standards and just as long for them to eventually be adopted. This is years away from becoming a reality. Yet Apple gets all of the benefits of that right now. From my perspective, this gives Apple a strong competitive advantage.

akdj - Sunday, January 11, 2015

Well said, techconc.

Not sure if you're into development, SoC design or just a 'user', Krysto...but BOTH Apple's 'Metal' and language 'Swift' were/are HUGE leaps forward to 'cut' the peanut butter layer on the GPU that is OpenGL ES...so developers have 'direct' access to the 'metal' AKA GPU portion of the SoC. It's an amazing feat in 'software engineering' that took a huge load off the 'hardware engineering' side of the house....specifically because of this!

I own a Note 4 for my business

I own a 6+ as a personal driver.

The former: a quad-core, 2.7GHz processor with the Adreno 420 and 3GB of 'shared' SoC RAM.

The latter: a dual-core, 1.5GHz processor with IT's solution for graphics and 1GB of 'shared' SoC RAM.

I love them both for different reasons, BUT play Asphalt 8 on both. Then tell me 'more muscle, power, RAM, cores or core speed' are the reasons I'm playing a more fluid game on iOS vs Android.

I'm ambidextrous and enjoy using both. Same in the office or home environment. OS X is primary, but I've always had a Windows box since the big 'switch' a decade ago.

Point being, software is damn near as important as, and sometimes MORE important than, hardware to the end user's experience. No one outside of us dorks, geeks, and pocket-protector-wearing Homers has a clue what FinFET, latency, core clock speed, or hell....cores for that matter MEAN! They couldn't tell ya if they're rocking 1, 2, 3 GB of RAM or NO RAM, lol.

The ultimate end experience is designed and defined by the software and hardware working in synergy WITH a development community willing to step up and develop a million optimized apps for your system. If it's running iOS or Android, you're in luck. Windows is a bit tougher to 'win' with. And even if this SoC does indeed have the power/TDP numbers they're bragging about, Apple's never been one to change supply chains.

There's a reason Tim is CEO, & that's the biggest. When you're dropping 100,000 products a year, you HAVE to have suppliers that can fulfill your orders and needs.

adriaaaaan - Thursday, January 15, 2015

Are you honestly expecting a phone with a weaker GPU pushing 50% more pixels to outperform the other? Of course the iPhone is smoother in games; it's lower res than the Note 4.

Maleficum - Wednesday, January 21, 2015

Oh yeah, the Note 4 has to push more pixels than the 6+. However, a resolution that high is simply not necessary in the first place, and more importantly, over 30% of the pixel data the SoC has to process is nullified by the PenTile AMOLED. What a waste!

Maxjonny55 - Saturday, June 20, 2015

Metal has made it easier to access the GPU, and the reason Apple has done this is the lack of power in their CPUs compared to Android devices. Yes, sure, GPUs can run apps with extra power, but so what? OpenGL has always been doing that! More developers will know Java and OpenGL, and it will be easier for development, as every hardware vendor apart from Apple will optimise hardware for it.

I would not want to compare Asphalt 8 between devices, as horsepower and muscle have nothing to do with it; it's lazy work on the part of game creators.

Providing access to Metal will make a difference to apps, no doubt, but only to some apps, not all. OpenGL provides access to the GPU; I'm not sure why it took Apple so long. I have a Nexus 9 and an iPad Air 2, and apart from Apple hype I can't see what the Air 2 has to offer in performance! The Nexus 9's single core outperforms the Air 2, and so does the one-year-older GPU.

Wolfpup - Wednesday, September 30, 2015

"Lack of power"? Apple's CPUs blow away any other ARM CPUs.

Maleficum - Wednesday, January 21, 2015

OpenGL is FAR out of date. It has way too many performance bottlenecks due to its aged design, and doesn't scale very well with modern GPU/CPU architectures.

Both MS and Apple recognized this, et voilà: Metal and the upcoming DX12 are their answers.

Pity that Android can't keep up with this, stuck with the opensource mess.