ARM Challenging Intel in the Server Market: An Overview

by Johan De Gelas on December 16, 2014 10:00 AM EST

The Current Intel offerings

Before we can discuss the ARM server SoCs, we want to look at what they are up against: the current low-end Xeons. We have described the midrange Xeon E5s in great detail in earlier articles.

The Xeon E3-12xx v3 is nothing more than a Core i5/i7 "Haswell" dressed up as a server CPU: a quad-core die, 8MB L3 cache, and two DDR3 memory channels. You pay a small premium, a few tens of dollars, for ECC and VT-d support. Motherboards for the Xeon E3 are also only a few tens of dollars more expensive than a typical desktop board, with prices falling between those of LGA-1150 and LGA-2011 enthusiast boards. The main advantage is remote management courtesy of a BMC, usually an Aspeed AST chip.

For enthusiasts who are considering a Xeon E3, the server chip also has disadvantages compared to its desktop siblings. First of all, the boards consume quite a bit more power in sleep state: 4-6W instead of the typical <1W of desktop boards. The reason is that server boards come with a BMC and are expected to run 24/7 rather than sleep, so less effort goes into reducing sleep-mode power usage: for example, the voltage regulators are chosen for longevity rather than low idle power. These boards are also much pickier when it comes to DIMMs and expansion cards, meaning that users have to check the hardware compatibility list for the motherboard itself.

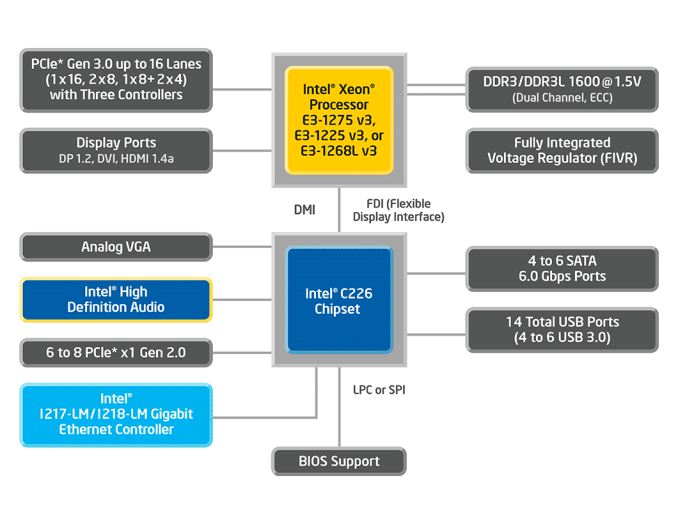

Back in the server world, the main advantage of the Xeon E3 is its single-threaded performance. The Xeon E3-1280 v3 runs the Haswell cores at a 3.6GHz base clock and can boost to 4GHz. There are also affordable LP (Low Power) 25W TDP versions available, e.g. the Xeon E3-1230L v3 (1.8GHz up to 2.8GHz) and E3-1240L v3 (2GHz up to 3GHz). These chips seemed to be in very limited supply when they were announced and were very hard to find last year. Luckily, they have been available in greater quantities since Q2 2014. It is also worth noting that the Xeon E3 needs a C220 chipset (C222/224/226) for SATA, USB, and Ethernet, which adds 0.7W (idle) to 4.1W (TDP).

The weak points are the limited number of memory channels (and thus bandwidth), the fact that a Xeon E3 server is limited to eight threads, and the very limited (for a server) 32GB RAM capacity (4 slots x 8GB DIMMs). Intelligent Memory, or I'M, is one of the vendors trying to change this. Unfortunately, their 16GB DIMMs will only work with the Atom C2000, leading to the weird situation that the Atom C2000 supports more memory than the more powerful Xeon E3. We'll show you our test results of what this means soon.
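The capacity arithmetic above can be sketched in a few lines of Python. The four slots and 8GB DIMMs for the Xeon E3 come from the text; the four-slot count for an Atom C2000 board is an assumption made purely for illustration, and the function name is hypothetical.

```python
# Maximum RAM capacity = number of DIMM slots x largest supported DIMM size.
def max_capacity_gb(slots: int, dimm_gb: int) -> int:
    """Return the maximum memory capacity in GB for a board."""
    return slots * dimm_gb

# Xeon E3: 4 slots with 8GB DIMMs (figures from the text).
xeon_e3_gb = max_capacity_gb(slots=4, dimm_gb=8)

# Atom C2000 with 16GB DIMMs. The slot count of 4 is an ASSUMPTION,
# not a figure stated in the article.
atom_c2000_gb = max_capacity_gb(slots=4, dimm_gb=16)

print(f"Xeon E3: {xeon_e3_gb}GB")
print(f"Atom C2000 (assumed 4 slots): {atom_c2000_gb}GB")
```

Under that assumption, the larger DIMMs alone double the ceiling, which is why the DIMM size limit matters more here than the slot count.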

The Atom C2000 is Intel's server SoC with a power envelope ranging from 6W (dual-core at 1.7GHz) to 20W (octal-core at 2.4GHz). USB 2.0, Ethernet, SATA3, SATA2 and the rest (IO APIC, UART, LPC) are all integrated on the die, together with four pairs of Silvermont cores, each pair sharing 1MB of L2 cache. The Silvermont architecture should process about 50% more instructions per clock cycle than previous Atoms due to improved branch prediction, the loop stream detector (like the LSD in Sandy Bridge) and out-of-order execution. However, the Atom microarchitecture is still a lot simpler than Haswell.

Silvermont has much smaller buffers (for example, the load buffer only has 10 entries, where Haswell has 72!), no memory disambiguation, it executes x86 instructions (and not RISC-like micro-ops), and it can process at most two integer and two floating point instructions, with a maximum of two instructions per cycle sustained. The Haswell architecture can process and sustain up to five instructions per cycle with "ideal" software. AES-NI and SSE 4.2 instructions are available with the C2000, but AVX instructions are not.
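To put the sustained-width figures in perspective, here is a rough back-of-the-envelope sketch in Python. It multiplies the sustained instructions per cycle quoted above by the clock speeds and core counts mentioned earlier (octal-core Atom C2000 at 2.4GHz, quad-core Xeon E3-1280 v3 at its 3.6GHz base clock). The `Chip` class and its method names are hypothetical, and the results are theoretical upper bounds only: real code sustains far fewer instructions per cycle on both architectures.

```python
from dataclasses import dataclass

@dataclass
class Chip:
    name: str
    cores: int
    clock_ghz: float    # clock speed in GHz
    sustained_ipc: int  # maximum sustained instructions per cycle

    def per_core_peak(self) -> float:
        """Theoretical peak, in billions of instructions per second per core."""
        return self.clock_ghz * self.sustained_ipc

    def chip_peak(self) -> float:
        """Theoretical peak for the whole chip, all cores busy."""
        return self.cores * self.per_core_peak()

# Figures taken from the article text; turbo clocks are ignored.
atom = Chip("Atom C2000 (octal-core)", cores=8, clock_ghz=2.4, sustained_ipc=2)
xeon = Chip("Xeon E3-1280 v3", cores=4, clock_ghz=3.6, sustained_ipc=5)

for chip in (atom, xeon):
    print(f"{chip.name}: {chip.per_core_peak():.1f} G instr/s per core, "
          f"{chip.chip_peak():.1f} G instr/s per chip")
```

Even with twice as many cores, the Atom's theoretical ceiling stays well below the Xeon's in this sketch, and the per-core gap is wider still.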

The advantages of the Atom C2000 are the low power and high integration -- no additional chip is required. The disadvantages are the relatively low single-threaded performance and the fact that the power management is not as advanced as that of the Haswell architecture. Intel also wants a lot of money for this SoC: up to $171 for the Atom C2750. The combination of an Atom C2000 and the FCBGA11 motherboard can quickly surpass $300, which is pretty high compared to the Xeon E3.
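The integration argument can be illustrated with a quick sum of the TDP figures quoted in this article: a 25W low-power Xeon E3 plus the C220 chipset at 4.1W, versus the 20W top of the Atom C2000 envelope with no extra chip. This is only a sketch with a hypothetical helper name; TDP is a thermal design figure, not measured power draw, and the rest of the platform (BMC, memory, voltage regulators) is ignored.

```python
def platform_tdp_w(cpu_tdp_w: float, chipset_tdp_w: float = 0.0) -> float:
    """Sum of CPU/SoC TDP and any separate chipset TDP, in watts."""
    return cpu_tdp_w + chipset_tdp_w

# Low-power Xeon E3 (25W TDP) plus the C220 chipset (4.1W TDP).
xeon_e3_lp_w = platform_tdp_w(cpu_tdp_w=25.0, chipset_tdp_w=4.1)

# Atom C2000 at the top of its envelope (20W); the I/O is on the die.
atom_c2000_w = platform_tdp_w(cpu_tdp_w=20.0)

print(f"Xeon E3 LP + C220: {xeon_e3_lp_w:.1f}W")
print(f"Atom C2000 SoC:    {atom_c2000_w:.1f}W")
```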

78 Comments

hojnikb - Tuesday, December 16, 2014 - link

Wow, i have never seen a motherboard that simple :)

CajunArson - Tuesday, December 16, 2014 - link

OK you devote another huge block of text to the typical x86 complexity myth* followed by: Oh, but the ARM chips are superior because they have special-purpose processors that overcome their complete lack of performance (both raw & performance per watt).

Uhm... WTF?? I need to have a proprietary, poorly documented add-on processor to make my software work well now? How is that a "standard"? How is requiring a proprietary add-on processor that's not part of any standard and requires boatloads of software cruft working in a "reduced instruction set architecture" exactly?

I might as well take the AVX instruction set for modern x86... which is leagues ahead of anything that ARM has available, and say that x86 is now a "RISC" architecture because the AVX part of x86 is just as clean or cleaner than anything ARM has. I'll just conveniently forget about the rest of x86 just like the ARM guys conveniently forget about all the non-standard "application accelerators" that are required to actually make their chips compete with last-year's Atoms.

* Maybe in a micro-controller setting where you are using a PIC or Arduino the x86 decoding is a real issue, but in a server? Please. Considering the only hard numbers you have show a 2013-model Atom beating a 2015-model ARM server processor, you'll have to try harder.

hlmcompany - Tuesday, December 16, 2014 - link

The article describes ARM chips as becoming more competitive, but still lagging behind...not that they're superior.

Kevin G - Tuesday, December 16, 2014 - link

The coprocessor idea is something that stems from mainframe philosophy. Historically things like IO requests and encryption were always handled by coprocessors in this market.

The reason coprocessors faded away outside of the mainframe market is that it was generally cheaper to do a software implementation. Now with power consumption being more critical than ever, coprocessors are seen as a means to lower overall platform power while increasing performance.

Philosophically, there is nothing that would prevent the x86 line from doing so and for the exact same reasons. In fact with PCIe based storage and NVMe on the horizon in servers, I can see Intel incorporating a coprocessor to do parity calculations for RAID 5/6 in their SoCs.

kepstin - Tuesday, December 16, 2014 - link

Intel has already added some instructions in avx and avx2 that vastly improve the performance of software raid5 and 6; the Haswell chip in my laptop has the Linux software raid implementation claiming 24GiB/s raid5 with avx, and 23GiB/s raid6 with avx2 (per core).

MrSpadge - Tuesday, December 16, 2014 - link

Of course additional power draw for more complex instruction decoding matters in servers: today they are driven by power-efficiency! The transistors may not matter as much, but in a multi-core environment they add up. Using the quoted statement from AMD of "only 10% more transistors" means one could place 11 RISC cores in the same area for the same cost as 10 otherwise identical x86 cores. Johan said it perfectly with "the ISA is not a game changer, but it matters".

And you completely misunderstood him regarding the accelerators. Intel is producing "CPUs for everyone" and hence only providing few accelerators or special instructions. In the ARM ecosystem it's obvious that vendors are searching niches and are willing to provide custom solutions for it - hence the chance is far higher that they provide some accelerator which might be game-changing for some applications.

This doesn't mean the architecture has to rely entirely on them, neither does it mean they have to be undocumented. The accelerators do not even have to be faster than software solutions, as long as they're easy enough to work with and provide significant power savings. Intel is doing just that with special-purpose hardware in their own GPUs.

And don't act as if much would have changed in the Atom space ever since 22 nm Silvermont cores appeared. It doesn't matter if it's from 2013 or 2015 - it's all just the same core.

OreoCookie - Tuesday, December 16, 2014 - link

What's with all the unnecessary piss and vinegar?

All CPU vendors rely increasingly on specialized silicon; newer Intel CPUs feature special crypto instructions (AES-NI) and Quick Sync, for instance. Adding special purpose hardware to augment the system (in the past usually done for performance reasons) is quite old, just think of hardware RAID cards and video »accelerators« (which are now called GPUs). The reason that Intel doesn't add more and more of these is that they build general purpose CPUs which are not optimized for a specific workload (the article gives a few examples). In other environments (servers, mobile) the workload is much more clearly defined, and you can indeed take advantage of accelerators.

The biggest advantage of ARM cpus is flexibility -- the ARM ecosystem is built on the idea to tailor silicon to your demands. This is also a substantial reason why Intel's efforts in the mobile market have been lackluster. Recently, Synology announced a new professional NAS (the DS2015xs) which was ARM-based rather than Intel-based. Despite its slower CPU cores, the throughput of this thing is massive -- in part, because it sports two (!) 10 GBit ethernet ports out of the box. Vendors are looking for niches where ARM-based servers could gain a foothold, so they are trying a lot of things and see what sticks.

goop666666 - Saturday, December 20, 2014 - link

LOL! Most of the comments here like this one seem to be written by people who think computers should all be like gaming machines or something.

Here's a tip: no-one cares about "complexity," "standardization," "RISC," or anything else you mention. All they care about in the target market for ARM server chips is price, performance and power, and I mean ALL THREE.

On this Intel cannot compete. They sell wildly overpriced legacy hardware propped up by massive R&D expenditures and they're wedded to that model. The rest of the industry is wedded to the new and cheap model. Just like how the industry moved to mobile devices and Intel stood still, this change will also wash over Intel while they sit still in denial.

There's a reason why Intel stock has gone no-where for years.

nlasky - Monday, December 22, 2014 - link

Jan 8, 2010, Intel stock price $20.83. Dec 19, 2010, Intel stock price $36.37. If by gone no-where for years you mean increased by 70% I guess you would be correct. Intel can't compete because they are wedded to their model? They have a profit margin of 20% and an operating margin of 27%. They could easily cut prices to compete with any ARM offerings. Servers have been around forever, unlike the mobile computing platform. Intel has an even larger stranglehold on this industry than ARM has in the mobile space. Here's a tip - stop spewing a bunch of uninformed nonsense just to make an argument.

nlasky - Monday, December 22, 2014 - link

*Dec 19, 2014