Intel Xeon E5 Version 3: Up to 18 Haswell EP Cores

by Johan De Gelas on September 8, 2014 12:30 PM EST

Capacity: the New Arms Race

Some of the hottest software trends of today are Big Data and In Memory Business Analytics. Both applications benefit from fast processors, but even more importantly they are virtually insatiable when it comes to RAM capacity. Another important area that's much closer to the daily work of many IT professionals is virtualization. As heavier applications are being virtualized, the typical amount of memory allocated to virtual machines has increased rapidly. As announced at the latest VMworld, vSphere 6 will support virtual machines that allocate up to 4TB (!!) of memory. The days when virtual machines were limited to only a fraction of the resources of "native" operating systems are behind us.

With the above developments, support for and development of high capacity DIMMs is crucial. Intel has been steadily improving the support for LRDIMMs (here's some additional information on LRDIMMs). The first Xeon E5-2600 had support for LRDIMMs but it only delivered higher capacity at the expense of lower bandwidth and higher latency. The memory controller of the Xeon E5-2600 v2 had several improvements specifically for LRDIMMs and as a result the latency and throughput tax was greatly reduced.

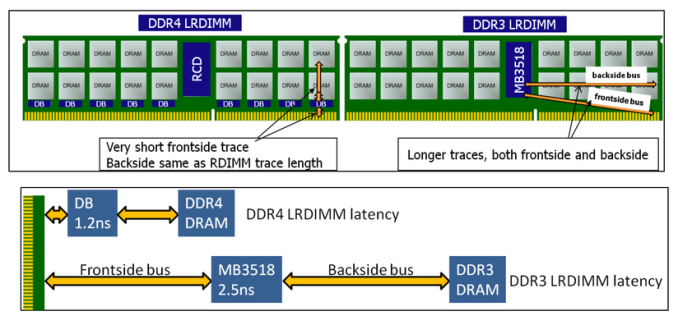

The advent of DDR4 has given the engineers of IDT the opportunity to give LRDIMMs a performance advantage instead of a disadvantage. By introducing data buffers close to the DRAM chips, they managed to reduce the I/O trace lengths tremendously. See the figure below.

DDR4 and DDR3 LRDIMMs compared, image courtesy of IDT.

The latency overhead of the extra buffering is thus significantly lower on DDR4 LRDIMMs. In other words, compared to Registered DDR4 running at the same speed with 1 DPC (1 DIMM per channel), the latency overhead will be small. As soon as you start to use more DIMMs per channel, LRDIMMs actually offer lower latency as they can run at higher speeds.

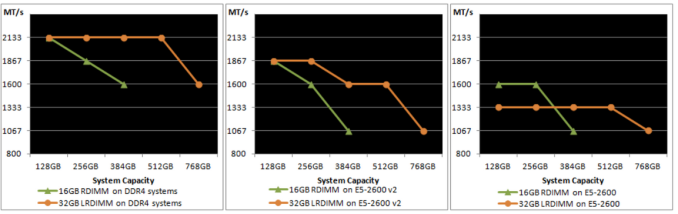

Below you can see the evolution of LRDIMM support over the three generations of Xeon E5s. On the far right is the speed of DIMMs on Sandy Bridge EP, in the middle is Ivy Bridge EP, and on the left is the speed of DIMMs on the new Haswell EP Xeon.

On Sandy Bridge EP (Xeon E5-2600), LRDIMMs were only clocked faster at three DPC. On Ivy Bridge EP (Xeon E5-2600 v2), the LRDIMMs were faster at two and three DPC. And on Haswell EP (Xeon E5-2600 v3), the speed gap at two and three DPC has widened while the latency tax (not seen in the picture) has been reduced.

Samsung LRDIMM on top, RDIMM below. Notice the data buffers on the LRDIMM

Several sources tell us that LRDIMMs will be about 20%-25% more expensive. Our task then is to help you decide whether or not the investment is worth it. In this review, we will show some preliminary results.

The latency penalty has been reduced, but what about capacity? As you can see by the 4G marking in the photo above, the DIMMs used in our current servers are still using the mature 4Gbit DRAM chip technology. So currently, the Xeon E5-2600 v3 platform is limited to 384GB of registered DDR4 or 768GB of LRDIMMs. Quad-ranked RDIMMs, which were expensive, slow, and could only be used at 2DPC, are dead. The current 64GB LRDIMMs can be used at 3DPC, but they are octal-ranked (!) modules using quad-die packages. As a result they are slow at 3DPC and power hungry.

But the future looks bright. At the end of this year, dual-ranked modules, such as the ones you can see above, will use 8Gbit DRAM chips. This results in 64GB LRDIMMs and 32GB RDIMMs. That means the Xeon E5 platform will soon be able to address up to 1.5TB of physical RAM. In the second half of 2015, 128GB LRDIMMs should be available too, allowing up to 3TB of RAM.
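The capacity figures above come down to simple slot arithmetic. As a rough sketch, assuming a dual-socket server with four memory channels per socket and three DIMMs per channel (24 slots in total), the totals can be reproduced as follows. The topology constants and the 16GB and 32GB sizes for today's 4Gbit-based modules are our assumptions, inferred from the totals quoted above rather than stated outright.

```python
# Assumed platform topology (not spelled out in the text): a dual-socket
# Xeon E5-2600 v3 server, 4 memory channels per socket, 3 DIMMs per channel.
SOCKETS = 2
CHANNELS_PER_SOCKET = 4
DIMMS_PER_CHANNEL = 3


def max_capacity_gb(dimm_size_gb: int) -> int:
    """Return total RAM in GB when every slot holds a DIMM of the given size."""
    slots = SOCKETS * CHANNELS_PER_SOCKET * DIMMS_PER_CHANNEL  # 24 slots
    return slots * dimm_size_gb


# Per-DIMM sizes in GB. The two 4Gbit entries are inferred from the
# 384GB and 768GB limits; the others are the sizes named in the article.
scenarios = {
    "RDIMM, 4Gbit chips (assumed 16GB)": 16,
    "LRDIMM, 4Gbit chips (assumed 32GB)": 32,
    "RDIMM, 8Gbit chips": 32,
    "LRDIMM, 8Gbit chips": 64,
    "LRDIMM, second half of 2015": 128,
}

for label, size_gb in scenarios.items():
    total = max_capacity_gb(size_gb)
    print(f"{label}: {total} GB ({total / 1024:g} TB)")
```

Under those assumptions, the 16GB and 32GB modules give the 384GB and 768GB limits, while 64GB and 128GB LRDIMMs give 1.5TB and 3TB respectively.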

85 Comments

coburn_c - Monday, September 8, 2014 - link

MY God - It's full of transistors!

Samus - Monday, September 8, 2014 - link

I wish there were socket 1150 Xeon's in this class. If I could replace my quad core with an Octacore...

wireframed - Saturday, September 20, 2014 - link

If you can afford an 8-core CPU, I'm sure you can afford a S2011 board - it's like 15% of the price of the CPU, so the cost relative to the rest of the platform is negligible. :)

Also, s1150 is dual-channel only. With that many cores, you'll want more bandwidth.

peevee - Wednesday, March 25, 2015 - link

For many, if not most workloads it will be faster to run 4 fast (4GHz) cores on 4 fast memory channels (DDR4-2400+) than 8 slow (2-3GHz) cores on 2 memory channels. Of course, if your workload consists of a lot of trigonometry (sine/cosine etc), or thread worksets completely fit into 2nd level cache (only 256k!), you may benefit from 8/2 config. But if you have one of those, I am eager to hear what it is.

tech6 - Monday, September 8, 2014 - link

The 18 core SKU is great news for those trying to increase data center density. It should allow VM hosts with 512Gb+ of memory to operate efficiently even under demanding workloads. Given the new DDR4 memory bandwidth gains I wonder if the 18 core dual socket SKUs will make quad socket servers a niche product?

Kevin G - Monday, September 8, 2014 - link

In fairness, quad socket was already a niche market.

That and there will be quad socket version of these chips: E5-4600v3's.

wallysb01 - Monday, September 8, 2014 - link

My lord. My thought is that this really shows that v3 isn’t the slouch many thought it would be. An added 2 cores over v2 in the same price range and turbo boosting that appears to be functioning a little better, plus the clock for clock improvements and move to DDR4 make for a nice step up when all combined.

I’m surprised Intel went with an 18 core monster, but holy S&%T, if they can squeeze it in and make it function, why not.

Samus - Monday, September 8, 2014 - link

I feel for AMD, this just shows how far ahead Intel is :\

Thermogenic - Monday, September 8, 2014 - link

Intel isn't just ahead - they've already won.

olderkid - Monday, September 8, 2014 - link

AMD saw Intel behind them and they wondered how Intel fell so far back. But really Intel was just lapping them.