Samsung Galaxy Tab Pro 8.4 and 10.1 Review

by Jarred Walton on March 22, 2014 9:30 PM EST

Performance Benchmarks

In the world of laptops where I come from, we’re fast reaching the point – if not well beyond it – where talking about raw performance only matters to a small subset of users. Everything with Core i3 and above is generally “fast enough” that users don’t really notice or care. For tablets, the difference in speed between a budget and a premium device is far more dramatic. I’ve included numbers from the Dell Venue 8, which I’ll be providing a short review of in the near future. While the price isn’t bad, the two Samsung tablets feel substantially snappier – as they should. We’ll start with the CPU/system benchmarks and then move to the GPU/Graphics tests.

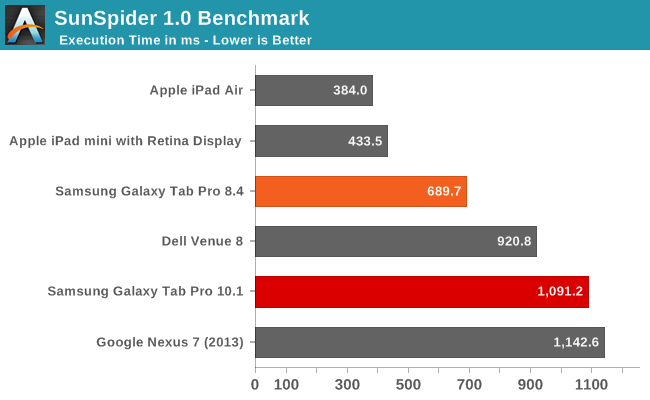

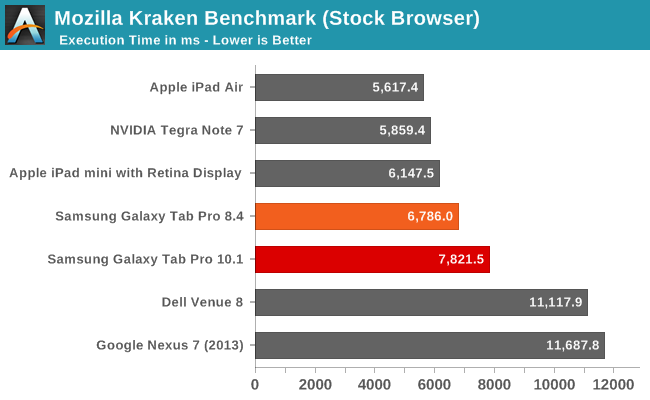

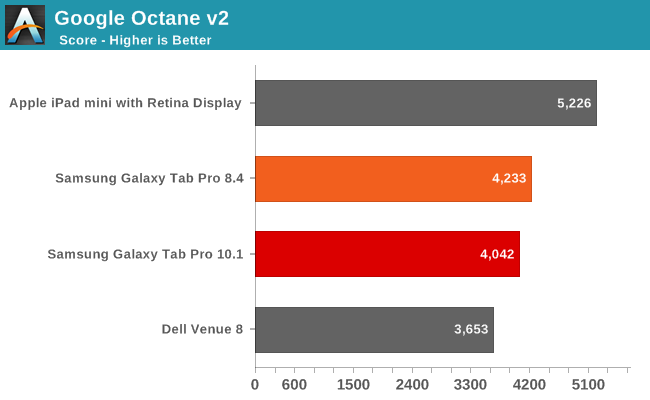

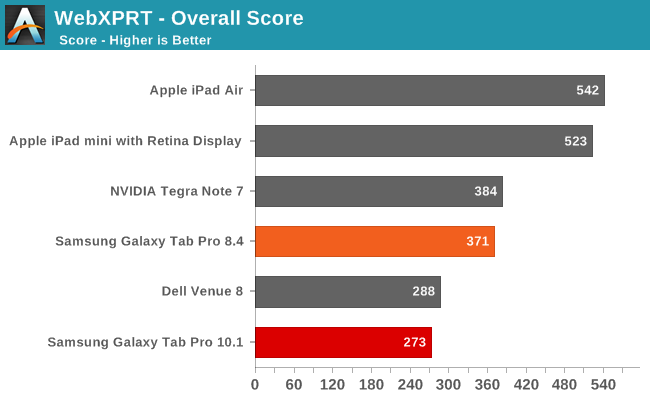

In terms of CPU speed, the Apple A7 chips still take the lead in all of our tests, though not always by a large margin. We’re also using different browsers (Chrome vs. Safari), and JavaScript benchmarks aren’t always the greatest way of comparing CPU performance. I ran additional benchmarks on the two Samsung tablets to see if I could learn anything more; the table below lists the results for Andebench, Basemark OS II, and Geekbench 3.

CPU/System Benchmarks of Samsung Galaxy Tab Pro

| Benchmark | Subtest | Tab Pro 10.1 | Tab Pro 8.4 |
| --- | --- | --- | --- |
| Andebench | Native | 13804 | 17533 |
| Andebench | Java | 708 | 790 |
| Basemark OS II | Overall | 865 | 1062 |
| Basemark OS II | System | 1542 | 1529 |
| Basemark OS II | Memory | 419 | 503 |
| Basemark OS II | Graphics | 1032 | 2555 |
| Basemark OS II | Web | 838 | 724 |
| Geekbench 3 | Single | 942 | 910 |
| Geekbench 3 | Multi | 2692 | 2847 |
| Geekbench 3 | Integer Single | 1028 | 967 |
| Geekbench 3 | Integer Multi | 3135 | 3343 |
| Geekbench 3 | FP Single | 897 | 848 |
| Geekbench 3 | FP Multi | 3106 | 3080 |
| Geekbench 3 | Memory Single | 860 | 922 |
| Geekbench 3 | Memory Multi | 982 | 1391 |

Interestingly, the Samsung Exynos 5 Octa 5420 just seems to come up short versus the Snapdragon 800 in most of these tests. However, there’s a bit more going on than you might expect. We checked for cheating in the benchmarks, and found no evidence that either of these tablets was doing anything unusual in terms of boosting clock speeds. What we did find is that the Pro 10.1 is frequently not running at maximum frequency – or anywhere near it – in quite a few of our CPU tests.

Specifically, Sunspider, Kraken, and Andebench had the cores topping out at 1.1-1.3GHz, while the Pro 8.4 would typically hit its maximum 2.3GHz clock speed. The result, as you might imagine, is that the 8.4 ends up being faster, sometimes by a sizeable margin. Basemark OS II, Geekbench 3, and AnTuTu on the other hand didn’t show any such odd behavior: the Pro 10.1 often hit 1.8-1.9GHz (on one or more cores) during testing, and when that happens it often ends up slightly faster than the Pro 8.4.
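To put rough numbers on that split, here is a minimal sketch that computes the Pro 8.4's lead over the Pro 10.1 for a handful of rows from the table above. The scores are copied from the table; the `lead_pct` helper and the selection of rows are assumptions made purely for illustration.

```python
# Scores copied from the CPU/system table: (Tab Pro 10.1, Tab Pro 8.4).
scores = {
    "Andebench Native": (13804, 17533),
    "Andebench Java": (708, 790),
    "Basemark OS II System": (1542, 1529),
    "Geekbench 3 Single": (942, 910),
    "Geekbench 3 Multi": (2692, 2847),
}


def lead_pct(pro_10_1, pro_8_4):
    """Percent by which the Pro 8.4 leads (positive) or trails (negative) the Pro 10.1."""
    return (pro_8_4 / pro_10_1 - 1.0) * 100.0


# Hypothetical helper output: one signed percentage per benchmark row.
leads = {name: lead_pct(*pair) for name, pair in scores.items()}

for name, pct in leads.items():
    print(f"{name:24s} {pct:+6.1f}%")
```

Andebench, one of the tests where the 10.1 stayed at 1.1-1.3GHz, shows the largest gap in favor of the 8.4, while the single-threaded Geekbench 3 score tips slightly toward the 10.1.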

Which benchmark results are more "valid"? Well, that's a different subject, but as we're comparing Samsung tablets running more or less the same build of Android, we can reasonably compare the two and say that the 8.4 has better overall performance. Updated drivers or tweaking of the power governor on the 10.1 might change things down the road, but we can only test what exists right now.

Overall, both systems are sufficiently fast for a modern premium tablet, so I wouldn’t worry too much about whether or not you’re getting maximum clock speeds in a few benchmarks – in normal use you likely won’t notice one way or the other. But we’re only talking about the CPU performance when we say you won’t see the difference; let’s move over to the graphics benchmarks to see how the Galaxy Pro tablets fare.

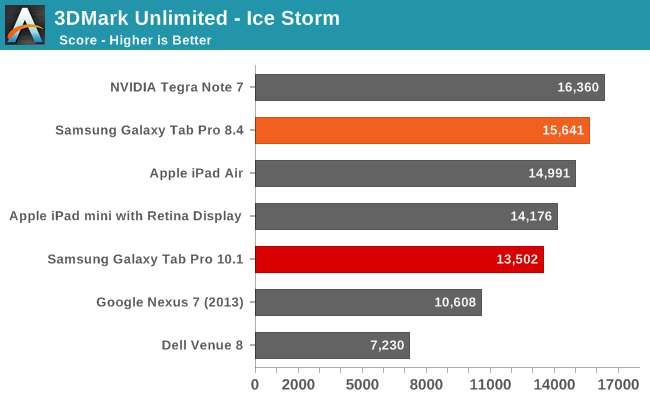

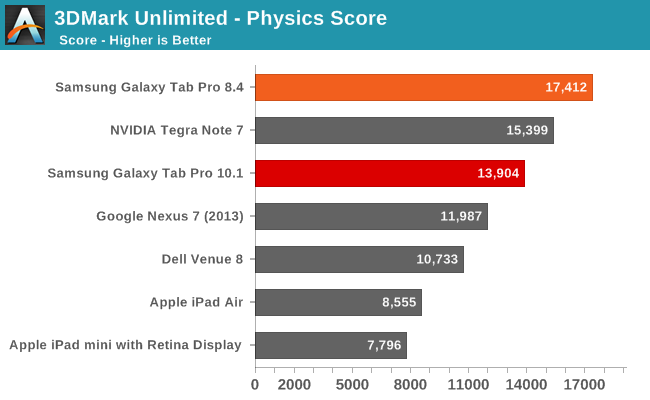

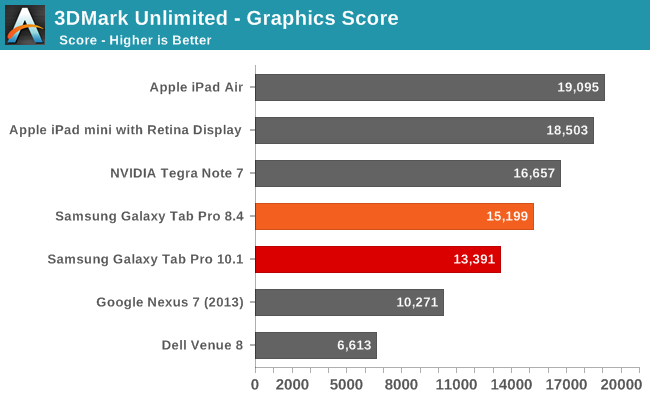

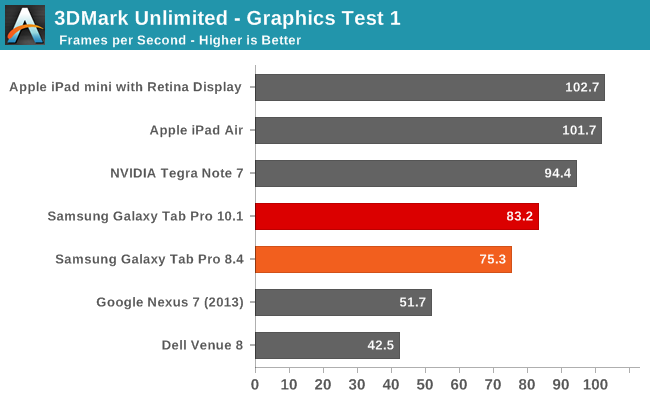

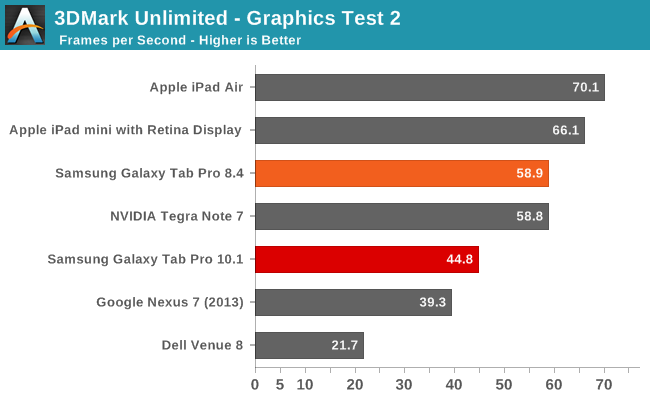

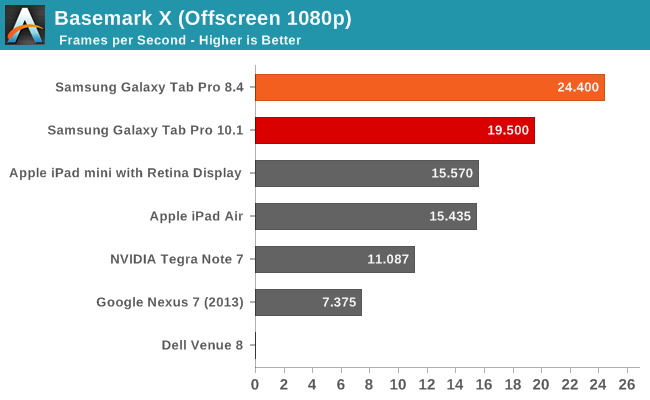

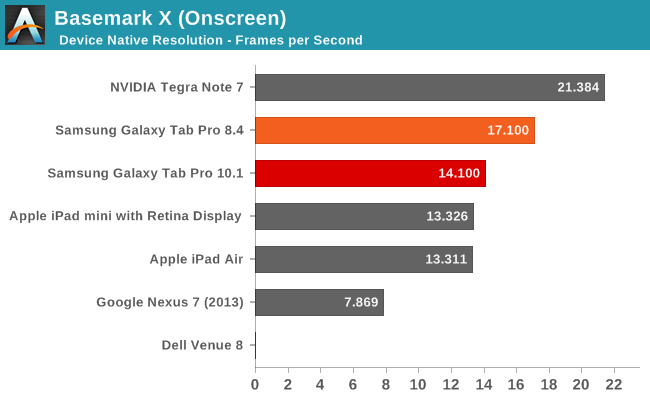

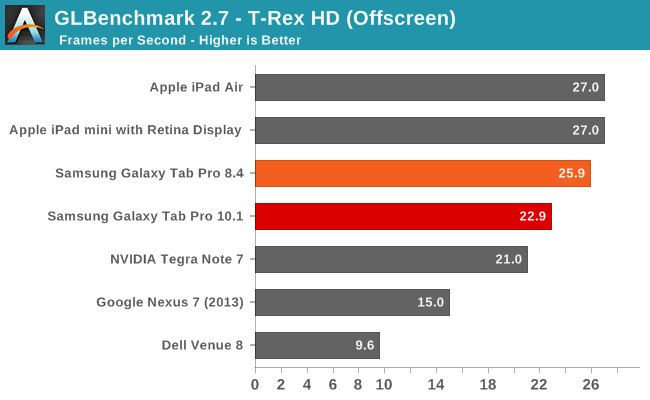

Graphics Performance

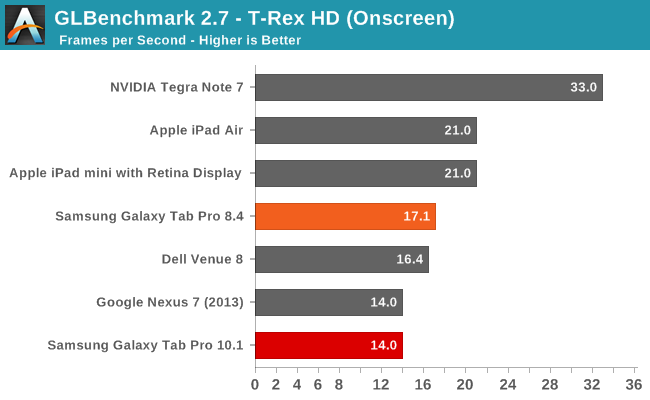

Outside of the 3DMark Unlimited Graphics Test 1 result, the Pro 8.4 sweeps the tables against its big brother. Playing Angry Birds Go! and other reasonably demanding 3D titles confirms the above results in my experience – Adreno 330 beats the Mali-T628; end of discussion. I also had a few quirks crop up with the Pro 10.1 graphics: Plants vs. Zombies 2 at one point stopped rendering fonts properly, for example. A reboot fixed the problem, but I may have seen one or two other rendering glitches during testing. I have some additional GPU results from GFXBench 3.0 if you’re interested:

Graphics Benchmarks of Samsung Galaxy Tab Pro

| Benchmark | Subtest | Tab Pro 10.1 | Tab Pro 8.4 |
| --- | --- | --- | --- |
| GFXBench 3.0 Onscreen | Manhattan (FPS) | 2.9 | 5.8 |
| GFXBench 3.0 Onscreen | T-Rex (FPS) | 14 | 17.1 |
| GFXBench 3.0 Onscreen | ALU (FPS) | 13 | 59.8 |
| GFXBench 3.0 Onscreen | Alpha Blending (MB/s) | 3295 | 6847 |
| GFXBench 3.0 Onscreen | Fill (MTex/s) | 1956 | 3926 |
| GFXBench 3.0 Offscreen | Manhattan (FPS) | 5.5 | 10.8 |
| GFXBench 3.0 Offscreen | T-Rex (FPS) | 22.9 | 25.9 |
| GFXBench 3.0 Offscreen | ALU (FPS) | 25.6 | 138 |
| GFXBench 3.0 Offscreen | Alpha Blending (MB/s) | 3093 | 7263 |
| GFXBench 3.0 Offscreen | Fill (MTex/s) | 1956 | 3780 |

The new Manhattan benchmark was one of the other tests where the Pro 10.1 didn’t seem to render things properly, and even then the Pro 8.4 ends up with nearly twice the frame rates. The ALU, Alpha Blending, and Fill rate scores might explain some of what’s going on, where in some cases the Pro 8.4 is more than four times as fast. Regardless, if you want maximum frame rates, I’d suggest getting the Pro 8.4 over the 10.1.
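For anyone who wants to check the "nearly twice" and "more than four times" figures, the sketch below divides the Pro 8.4 result by the Pro 10.1 result for each GFXBench 3.0 row. The numbers are copied from the table above; the `speedup` dictionary and the row labels are just illustrative names.

```python
# GFXBench 3.0 results copied from the table: (Tab Pro 10.1, Tab Pro 8.4).
gfxbench = {
    "Onscreen Manhattan (FPS)": (2.9, 5.8),
    "Onscreen T-Rex (FPS)": (14.0, 17.1),
    "Onscreen ALU (FPS)": (13.0, 59.8),
    "Onscreen Alpha Blending (MB/s)": (3295, 6847),
    "Onscreen Fill (MTex/s)": (1956, 3926),
    "Offscreen Manhattan (FPS)": (5.5, 10.8),
    "Offscreen T-Rex (FPS)": (22.9, 25.9),
    "Offscreen ALU (FPS)": (25.6, 138.0),
    "Offscreen Alpha Blending (MB/s)": (3093, 7263),
    "Offscreen Fill (MTex/s)": (1956, 3780),
}

# How many times faster the Pro 8.4 is than the Pro 10.1 on each row.
speedup = {name: pro_8_4 / pro_10_1 for name, (pro_10_1, pro_8_4) in gfxbench.items()}

# Print the rows from largest to smallest advantage.
for name, factor in sorted(speedup.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:32s} {factor:4.2f}x")
```

The two ALU rows are the only ones above 4x, the Manhattan rows land at roughly 2x, and T-Rex shows the smallest gap.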

125 Comments


SilthDraeth - Saturday, March 22, 2014

On my note phone if I want to take a screenshot, I hold the power button and the Samsung Home button. Give that a try. Or, on my wife's note 10.1 first edition, it has a dedicated screenshot softkey that appears where your normal android home keys, etc appear.

FwFred - Sunday, March 23, 2014

LOL... 'Pro'. Surface Pro 2 just fell off the chair laughing.

garret_merrill - Friday, October 3, 2014

Really good tablets, although I would seriously consider the Note Pro series too (highly ranked by a number of sources, see http://www.consumertop.com/best-tablets/). But either way, the Samsung tablets deliver fantastic quality.

Brian Z - Saturday, March 22, 2014

Antutu? Really... Maybe somebody kidnapped Anand and Brian. Frigging Antutu

grahaman27 - Saturday, March 22, 2014

Better than just posting the browser speed tests for CPU, and draw final thoughts from that, which they have gotten in a habit of doing.

JarredWalton - Sunday, March 23, 2014

What's wrong with running one more benchmark and listing results for it? Sheesh... most of the time people complain about not having enough data, and now someone is upset for me running AnTuTu. Yes, I know companies have "cheated" on it in the past, but the latest revision seems about as valid in its reported scores as any of the other benchmarks. Now if it wouldn't crash half the time, that would be great. :-\

Egg - Sunday, March 23, 2014

You do realize that Brian has, for all intents and purposes, publicly cursed AnTuTu and mocked the journalists who used it?

JarredWalton - Sunday, March 23, 2014

The big problem is people that *only* (or primarily) use AnTuTu and rely on it as a major source of performance data. I'm not comparing AnTuTu scores with tons of devices; what I've done is provide Samsung Galaxy Tab Pro 8.4 vs. 10.1 scores, mostly to show what happens when the CPU in the 10.1 hits 1.8-1.9 GHz. It's not "cheating" to do that either -- it's just that the JavaScript tests mostly don't go above 1.2-1.3GHz for whatever reason. Octane and many other benchmarks hit higher clocks, but Sunspider and Kraken specifically do not. It's probably an architectural+governor thing, where the active threads bounce around the cores of the Exynos enough that they don't trigger higher clocks.

Don't worry -- we're not suddenly changing stances on Geekbench, AnTuTu, etc. but given the odd clocks I was seeing with the 10.1 I wanted to check a few more data points. Hopefully that clarifies things? It was Brian after all that used AnTuTu to test for cheating (among other things).

Wilco1 - Sunday, March 23, 2014

The reason for the CPU clock staying low is because the subtests in Sunspider and AnTuTu only take a few milliseconds (Anand showed this in graphs a while back). That means there is not enough time to boost the frequency to the maximum (this takes some time). Longer running benchmarks like Geekbench are fine. I wouldn't be surprised if the governor will soon start to recognize these microbenchmarks by their repeated bursty behaviour rather than by their name...

Of course the AnTuTu and Javascript benchmarks suffer from many other issues, such as not using geomean to calculate the average (making it easy to cheat by speeding up just one subtest) and using tiny unrepresentative micro benchmarks far simpler than even Dhrystone.

Also it would be nice to see a bit more detail about the first fully working big/little octa core with GTS enabled. Previously AnandTech has been quite negative about the power consumption of Cortex-A15, and now it looks the 5420 beats Krait on power efficiency while having identical performance...
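The averaging point in the comment above can be illustrated with a small sketch. The subtest scores are hypothetical values normalized to a reference device (1.0 means parity), not results from AnTuTu or any JavaScript suite, and the helper names are made up for the example. It compares how far a 10x gain in a single subtest moves an arithmetic mean versus a geometric mean.

```python
import math


def arithmetic_mean(values):
    """Plain average of the subtest scores."""
    return sum(values) / len(values)


def geometric_mean(values):
    """n-th root of the product, computed via logs for numerical stability."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Hypothetical normalized subtest scores (1.0 = same speed as the reference device).
honest = [1.0, 1.0, 1.0, 1.0]
one_inflated = [1.0, 1.0, 1.0, 10.0]  # a single subtest sped up 10x

for label, run in (("honest", honest), ("one subtest inflated", one_inflated)):
    print(f"{label:22s} arithmetic={arithmetic_mean(run):.2f} "
          f"geometric={geometric_mean(run):.2f}")
```

With four subtests, the inflated run's arithmetic mean more than triples, while its geometric mean rises by only the fourth root of 10, which is why a geometric mean is harder to game through one subtest.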

virtual void - Monday, March 24, 2014

You cannot disregard the result produced by something just because the load generated by the benchmark comes in very short bursts; that is the _typical_ workload faced by these kinds of devices.

The results in Geekbench give you some hint of how the device would handle HPC workloads, but they give you very limited information about how well it handles mobile apps. Another problem with Geekbench is that it runs almost entirely out of L1$. 97% of the memory accesses were reported as L1 hits on a Sandy Bridge CPU (32kB L1D$). Not even mobile apps have such a small working set.

big.LITTLE is always at a disadvantage vs one single core in bursty workloads as the frequency transition latency is relatively high when switching CPU-cores. Low P-state switching time probably explains why BayTrail "feels" a lot faster than what the benchmarks like Geekbench suggest. BayTrail has a P-state latency of 10µs while ARM SoCs (without big.LITTLE) seem to lie between 0.1ms - 1ms (according to the Linux device-tree information).
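As a rough way to see why those latency figures matter for bursty loads, here is a toy calculation, not a model of any real governor. It assumes a hypothetical 5 ms burst (in the "few milliseconds" range mentioned earlier in the thread) and asks what share of that burst elapses before a single frequency switch completes, using the 10µs and 0.1ms-1ms latencies quoted above. The function name and the burst length are assumptions.

```python
def stalled_fraction(burst_s, latency_s):
    """Share of a burst that elapses before one frequency switch completes (capped at 1)."""
    return min(latency_s / burst_s, 1.0)


BURST_S = 5e-3  # hypothetical burst length: 5 ms

# P-state latencies as quoted in the comment above, in seconds.
latencies_s = {
    "Bay Trail (10 us)": 10e-6,
    "ARM SoC, low end (0.1 ms)": 0.1e-3,
    "ARM SoC, high end (1 ms)": 1e-3,
}

fractions = {name: stalled_fraction(BURST_S, lat) for name, lat in latencies_s.items()}

for name, frac in fractions.items():
    print(f"{name:28s} {frac:6.1%} of the burst")
```

Under these assumptions the switch costs a fraction of a percent of the burst at 10µs but a fifth of it at 1ms; a real governor also has to notice the load first, which this sketch ignores.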