10Gbit Ethernet: Killing Another Bottleneck?

by Johan De Gelas on March 8, 2010 12:00 PM EST - Posted in IT Computing

Network Performance in ESX 4.0 Update 1

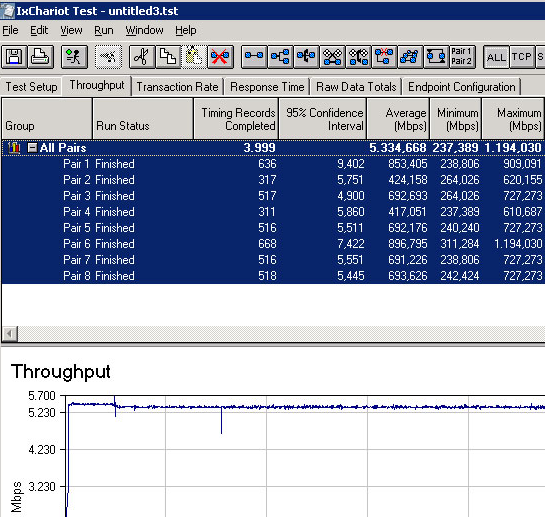

We used up to eight VMs, each assigned an “endpoint” in the Ixia IxChariot network test. This way we could measure the total network throughput achievable with one, four, or eight VMs. With ESX NetQueue enabled, the cards should be able to leverage their separate queues and the hardware Layer 2 “switch”.

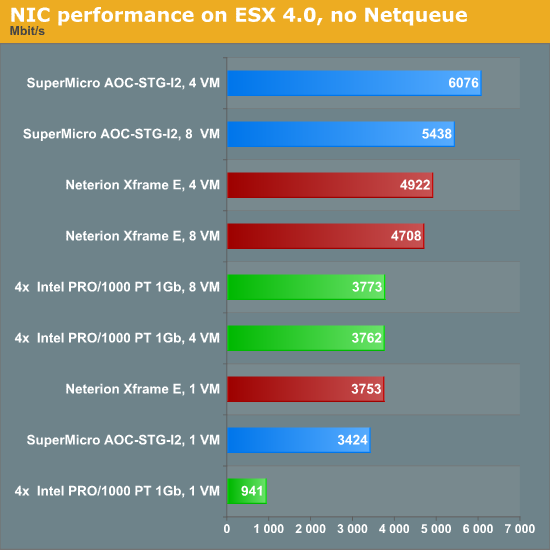

First, we test with NetQueue disabled, so each 10G card behaves like a card with only one Rx/Tx queue. To make the comparison more interesting, we added two dual-port gigabit NICs to the benchmark mix. Teamed NICs are currently by far the most cost-effective way to increase network bandwidth.

The 10G cards show their potential. With four VMs, they are able to achieve 5 to 6Gbit/s. There is clearly a queue bottleneck: both 10G cards perform worse with eight VMs. Notice also that 4x1Gbit does very well. This combination has more queues and can cope well with the different network streams. Out of a maximum line speed of 4Gbit/s, we achieve almost 3.8Gbit/s with four and eight VMs. Now let's look at CPU load.

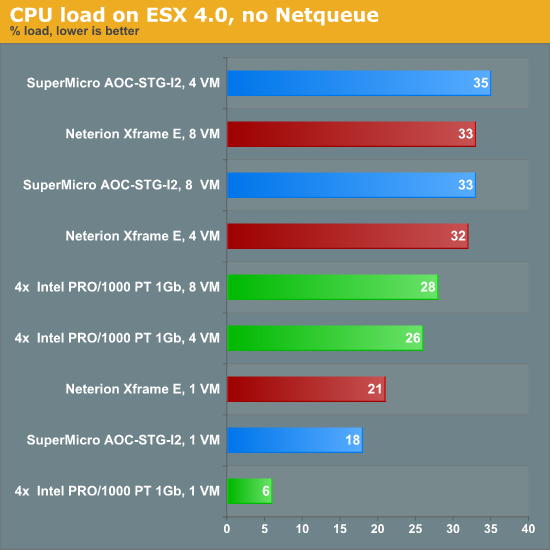

Once you need more than 1Gbit/s, you should pay attention to the CPU load. Four gigabit ports or one 10G port require 25 to 35% utilization of eight 2.9GHz Opteron cores. That means that you would need two or three cores dedicated just to keeping the network pipes filled. Let us see if NetQueue can do some magic.
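As a quick sanity check of the core estimate above, the utilization figures can be converted into "core equivalents". This is only a back-of-the-envelope sketch: the helper name `cores_consumed` is hypothetical, and it assumes the reported percentage is averaged evenly over all eight cores.

```python
def cores_consumed(utilization_pct: float, total_cores: int) -> float:
    """Convert an overall CPU utilization percentage into core equivalents.

    Assumes the percentage is an average across all cores (an assumption,
    not something the benchmark tooling is known to guarantee).
    """
    return utilization_pct / 100.0 * total_cores


if __name__ == "__main__":
    # 25% and 35% are the bounds quoted in the text for eight Opteron cores.
    for pct in (25, 35):
        print(f"{pct}% of 8 cores = {cores_consumed(pct, 8):.1f} core equivalents")
```

The range works out to roughly two to just under three cores, which is where the "two or three cores" figure comes from.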

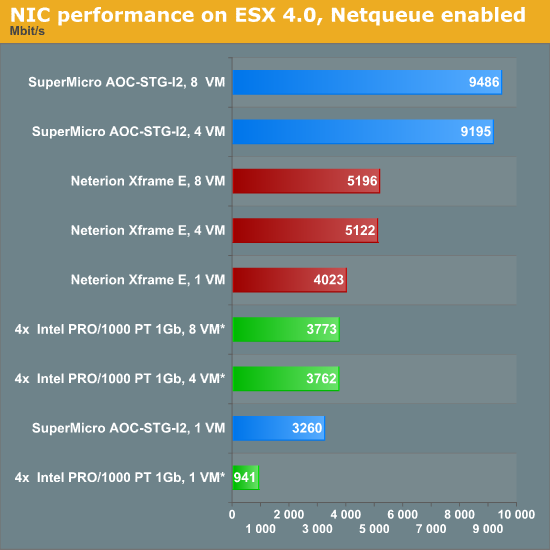

The performance of the Neterion card improves a bit, but the gain is not really impressive (+8% in the best case). The Intel 82598EB chip on the Supermicro 10G NIC now achieves 9.5Gbit/s with eight VMs, very close to the theoretical maximum. The 4x1Gbit/s NIC numbers are repeated in this graph for reference (no NetQueue was available).

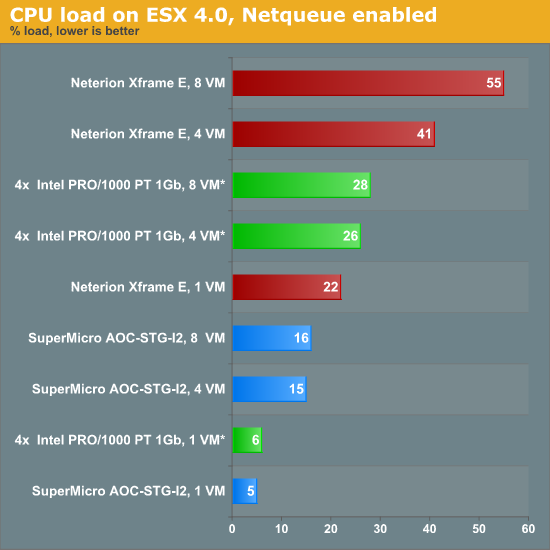

So how much CPU power did these huge network streams absorb?

The Neterion driver does not seem to be optimized for ESX 4. Using NetQueue should lower CPU load, not increase it. The Supermicro/Intel 10G combination shows the way. It delivers twice as much bandwidth at half the CPU load compared to the two dual-port gigabit NICs.
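To put the "twice the bandwidth at half the CPU load" comparison into a single number, one can divide the bandwidth ratio by the CPU load ratio. The sketch below uses only the rounded ratios stated in the text; the function name `relative_efficiency` is hypothetical, and the result is a rough indicator rather than a measured value.

```python
def relative_efficiency(bandwidth_ratio: float, cpu_load_ratio: float) -> float:
    """Return how much more bandwidth per unit of CPU load one setup delivers.

    bandwidth_ratio: throughput of setup A divided by throughput of setup B.
    cpu_load_ratio:  CPU load of setup A divided by CPU load of setup B.
    """
    if cpu_load_ratio <= 0:
        raise ValueError("cpu_load_ratio must be positive")
    return bandwidth_ratio / cpu_load_ratio


if __name__ == "__main__":
    # Ratios taken from the text: 2x the bandwidth at 0.5x the CPU load.
    gain = relative_efficiency(bandwidth_ratio=2.0, cpu_load_ratio=0.5)
    print(f"Bandwidth per unit of CPU load: {gain:.0f}x")
```

By that rough measure, the Supermicro/Intel 10G card moves about four times as much data per CPU cycle spent as the teamed gigabit NICs.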

49 Comments


fredsky - Tuesday, March 9, 2010 - link

we do use 10GbE at work, and i spent a long time finding the right solution

- CX4 is outdated, huge cable, short length, power hungry

- XFP is also outdated and fiber only

- SFP+ is THE thing to get. very low power, and can be used with copper twinax AS WELL as fiber. you can get a 7m twinax cable for 150$.

and the BEST cards available are Myricom: very powerful for a decent price.

DanLikesTech - Tuesday, March 29, 2011 - link

CX4 is old? outdated? I just connected two VM host servers using CX4 at 20Gb (40Gb aggregate bandwidth).

And it cost me $150. $50 for each card and $50 for the cable.

DanLikesTech - Tuesday, March 29, 2011 - link

And not to mention the low latency of InfiniBand compared to 10GbE.

http://www.clustermonkey.net/content/view/222/1/

thehevy - Tuesday, March 9, 2010 - link

Great post. Here is a link to a white paper that I wrote to provide some best practice guidance when using 10G and VMware vSphere 4.

Simplify VMware vSphere* 4 Networking with Intel® Ethernet 10 Gigabit Server Adapters white paper -- http://download.intel.com/support/network/sb/10gbe...

More white papers and details on Intel Ethernet products can be found at www.intel.com/go/ethernet

Brian Johnson, Product Marketing Engineer, 10GbE Silicon, LAN Access Division

Intel Corporation

Linkedin: www.linkedin.com/in/thehevy

twitter: http://twitter.com/thehevy

emusln - Tuesday, March 9, 2010 - link

Be aware that VMDq is not SR-IOV. Yes, VMDq and NetQueue are methods for splitting the data stream across different interrupts and CPUs, but they still go through the hypervisor and vSwitch from the one PCI device/function. With SR-IOV, the VM is directly connected to a virtual PCI function hosted on the SR-IOV capable device. The hypervisor is needed to set up the connection, then gets out of the way. This allows the NIC device, with a little help from an IOMMU, to DMA directly into the VM's memory, rather than jumping through hypervisor buffers. Intel supports this in their 82599 follow-on to the 82598 that you tested.

megakilo - Tuesday, March 9, 2010 - link

Johan,

Regarding the 10Gb performance on native Linux, I have tested Intel 10Gb (the 82598 chipset) on RHEL 5.4 with iperf/netperf. It runs at 9.x Gb/s with a single-port NIC and about 16Gb/s with a dual-port NIC. I just have a little doubt about the Ixia IxChariot benchmark since I'm not familiar with it.

-Steven

megakilo - Tuesday, March 9, 2010 - link

BTW, in order to reach 9+ Gb/s, iperf/netperf have to run multiple threads (about 2-4 threads) and use a large TCP window size (I used 512KB).

JohanAnandtech - Tuesday, March 9, 2010 - link

Thanks. Good feedback! We'll try this out ourselves.

sht - Wednesday, March 10, 2010 - link

I was surprised by the poor native Linux results as well. I got > 9 Gbit/s with Broadcom NetXtreme using nuttcp. I don't recall whether multiple threads were required to achieve those numbers. I don't think they were, but perhaps using a newer kernel helped; the Linux networking stack has improved substantially since 2.6.18.

themelon - Tuesday, March 9, 2010 - link

Did I miss where you mention this or did you completely leave it out of the article?

Intel has had VMDq in Gig-E for at least 3-4 years in the 82575/82576 chips. Basically, anything using the igb driver instead of the e1000g driver.