Better Image Quality: CSAA & TMAA

NVIDIA’s next big trick for image quality is a revision of Coverage Sampling Anti-Aliasing. CSAA, originally introduced with G80, is a lightweight way of better determining how much of a pixel a polygon actually covers. By merely testing polygon coverage and storing the results, the ROP gains more information without the expense of fetching and storing the additional color and Z data that a regular MSAA sample requires. The quality improvement isn’t as pronounced as simply using more multisamples, but coverage samples are much, much cheaper.

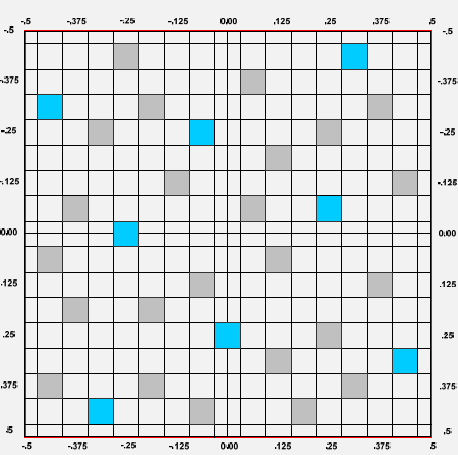

32x CSAA sampling pattern
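To make the cost argument concrete, here is a minimal Python sketch that estimates how much of a pixel one straight polygon edge covers, using a regular grid of sample points. The grid is an assumption for illustration only (NVIDIA's actual sample positions, like the 32x pattern above, are irregular), and `grid_samples` and `coverage` are hypothetical helper names. In this model each sample contributes only an inside/outside bit, which is all a coverage sample has to record; an MSAA sample would also carry color and Z.

```python
from fractions import Fraction


def grid_samples(cols: int, rows: int) -> list[tuple[float, float]]:
    """Sample points on a regular cols x rows grid inside a unit pixel.

    Illustrative only: real MSAA/CSAA sample patterns are irregular.
    """
    return [((i + 0.5) / cols, (j + 0.5) / rows)
            for j in range(rows) for i in range(cols)]


def coverage(samples: list[tuple[float, float]], a: float, b: float, c: float) -> Fraction:
    """Fraction of samples on the covered side of one polygon edge,
    modeled as the half-plane a*x + b*y + c >= 0. Each sample yields only
    an inside/outside bit; no color or Z is fetched or stored."""
    inside = sum(1 for x, y in samples if a * x + b * y + c >= 0)
    return Fraction(inside, len(samples))


# A vertical edge at x = 0.4: the polygon truly covers 3/5 of the pixel.
edge = (1.0, 0.0, -0.4)
print(coverage(grid_samples(4, 2), *edge))  # 8 samples  -> 1/2
print(coverage(grid_samples(8, 4), *edge))  # 32 samples -> 5/8
```

With 8 points the estimate for this 60% edge is 1/2; with 32 points it is 5/8, much closer to the true value, while in this model the extra 24 points cost only one bit each.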

For the G80 and GT200, CSAA could only test polygon edges. That’s great for resolving aliasing at polygon edges, but it doesn’t solve other kinds of aliasing. In particular, GF100 will be waging a war on billboards – flat geometry that uses textures with transparency to simulate what would otherwise require complex geometry. Fences, leaves, and patches of grass in fields are three very common uses of billboards, as they are “minor” visual effects that would be very expensive to do with real geometry, and would benefit little from the quality improvement.

Since billboards are faking geometry, regular MSAA techniques do not remove the aliasing within the billboard. To resolve that, DX10 introduced alpha to coverage functionality, which lets MSAA anti-alias the fake geometry by using the alpha mask as a coverage mask for the MSAA process. The end result is that the GPU creates varying levels of transparency around the fake geometry, so that it blends better with its surroundings.
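In Direct3D 10 the feature is switched on through the `AlphaToCoverageEnable` member of `D3D10_BLEND_DESC`. What happens per pixel can be sketched in a few lines of Python. This is a simplified model, not NVIDIA's implementation: it assumes the number of covered samples is just alpha scaled by the sample count and rounded, that the lowest-numbered samples are the ones set (real hardware typically dithers the pattern), and that color is a single grayscale channel. `alpha_to_coverage` and `resolve` are hypothetical names.

```python
def alpha_to_coverage(alpha: float, num_samples: int) -> list[bool]:
    """Convert a texel's alpha into a per-pixel coverage mask.

    Simplified model (assumption): covered count = alpha * N rounded half-up,
    and the lowest-numbered samples are the ones set. Real hardware usually
    dithers which samples are set.
    """
    alpha = min(max(alpha, 0.0), 1.0)          # clamp to [0, 1]
    covered = int(alpha * num_samples + 0.5)   # round half up
    return [i < covered for i in range(num_samples)]


def resolve(mask: list[bool], surface: float, background: float) -> float:
    """Box-filter resolve: covered samples take the billboard's value,
    uncovered ones keep the background, then everything is averaged."""
    covered = sum(mask)
    return (covered * surface + (len(mask) - covered) * background) / len(mask)


mask = alpha_to_coverage(0.3, 8)
print(mask)                                        # two samples covered
print(resolve(mask, surface=1.0, background=0.0))  # 0.25
```

An alpha of 0.3 with 8 samples lights 2 samples, so the resolved pixel ends up 25% billboard and 75% background: the requested 30% simply isn't representable at this sample count.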

It’s a great technique, but the G80 and GT200 didn’t implement it all that well. To determine the level of transparency to use on an alpha to coverage sampled pixel, the anti-aliasing hardware on those GPUs used MSAA samples to test the coverage. With at most 8 samples (8xQ MSAA mode), the hardware could only compute 9 levels of transparency (0 through 8 covered samples), which isn’t nearly enough to establish a smooth gradient. So while alpha to coverage allowed for some anti-aliasing of billboards, the results weren’t great. The only way to achieve really good results was to super-sample billboards through Transparency Super-Sample Anti-Aliasing, which was ridiculously expensive given that billboards, when used, usually cover most of the screen.

For GF100, NVIDIA has made two tweaks to CSAA. First, additional CSAA modes have been unlocked – GF100 can do up to 24 coverage samples per pixel as opposed to 16. Second, the CSAA hardware can now participate in alpha to coverage testing, a natural extension of CSAA’s coverage testing capabilities. With this ability, CSAA can test the coverage of the fake geometry in a billboard along with the MSAA samples, allowing the anti-aliasing hardware to use up to 32 samples per pixel (8 MSAA plus 24 CSAA). This gives the hardware the ability to compute 33 levels of transparency, which, while not perfect, allows for much smoother gradients.
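The arithmetic behind 9 versus 33 levels is easy to check. The following Python sketch, again assuming a simple round-to-nearest model of alpha to coverage, quantizes a smooth alpha ramp from 0 to 1 with 8 samples (the GT200 8xQ case) and with 32 samples (GF100's 8 MSAA plus 24 CSAA case), then counts the distinct opacities that survive and the worst-case error. The function names are hypothetical.

```python
def transparency_levels(num_samples: int) -> int:
    """N samples can express N + 1 opacities: 0/N, 1/N, ..., N/N."""
    return num_samples + 1


def quantize(alpha: float, num_samples: int) -> float:
    """Snap alpha to the nearest opacity representable with N samples
    (round half up; an assumption about how coverage is assigned)."""
    return int(alpha * num_samples + 0.5) / num_samples


def worst_error(num_samples: int, steps: int = 1000) -> float:
    """Largest quantization error along a smooth 0..1 alpha ramp."""
    return max(abs(quantize(i / steps, num_samples) - i / steps)
               for i in range(steps + 1))


ramp = [i / 1000 for i in range(1001)]
for n in (8, 32):  # 8xQ-style MSAA only vs. 8 MSAA + 24 CSAA
    distinct = len({quantize(a, n) for a in ramp})
    print(f"{n} samples: {transparency_levels(n)} levels possible, "
          f"{distinct} seen on the ramp, worst error {worst_error(n):.4f}")
```

With N samples the step between adjacent levels is 1/N, so the worst-case error is at most 1/(2N): 1/16 for 8 samples versus 1/64 for 32. That is consistent with grass that is still somewhat banded on GF100, but far less so.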

The example NVIDIA has given us for this is a pair of screenshots taken from a field in Age of Conan, a DX10 game. The first screenshot is from a GT200-based video card running the game with NVIDIA’s 16xQ anti-aliasing mode, which is composed of 8 MSAA samples and 8 CSAA samples. Since the GT200 can’t do alpha to coverage testing using the CSAA samples, the resulting grass blades are only blended with 9 levels of transparency based on the 8 MSAA samples, giving them a dithered look.

Age of Conan grass, GT200 16x AA

The second screenshot is from GF100 running in NVIDIA’s new 32x anti-aliasing mode, which is composed of 8 MSAA samples and 24 CSAA samples. Here both the CSAA and MSAA samples can be used for alpha to coverage, giving the hardware 32 samples from which to compute 33 levels of transparency. The result is that the blades of grass are still somewhat banded, but overall much smoother than what the GT200 produced. Bear in mind that since 8x MSAA is faster on GF100 than it was on GT200, and CSAA has very little overhead in comparison (NVIDIA estimates 32x has 93% of the performance of 8xQ), the entire process should be faster on GF100 even at the same clock speeds as GT200. Image quality improves, and performance should improve along with it.

Age of Conan grass, GF100 32x AA

The ability to use CSAA on billboards left us with a question however: isn’t this what Transparency Anti-Aliasing was for? The answer as it turns out is both yes and no.

Transparency Anti-Aliasing was introduced on the G70 (GeForce 7800 GTX) and was intended to help remove aliasing on billboards, exactly what NVIDIA is doing today with MSAA. The difference is that while DX10 has alpha to coverage, DX9 does not – and DX9 was all there was when G70 was released. Transparency Multi-Sample Anti-Aliasing (TMAA) as implemented today is effectively a shader replacement routine to make up for what DX9 lacks. With it, DX9 games can have alpha to coverage testing done on their billboards in spite of DX9 not having this feature, allowing for image quality improvements in games still using DX9. Under DX10, TMAA is superseded by alpha to coverage in the API, but TMAA is still alive and well thanks to the many older games that use DX9 and the many games yet to come that will still use it.

Because TMAA is functionally just enabling alpha to coverage on DX9 games, all of the changes we just mentioned to the CSAA hardware filter down to TMAA. This is excellent news, as TMAA has delivered lackluster results in the past – it was better than nothing, but only Transparency Super-Sample Anti-Aliasing (TSAA) really fixed billboard aliasing, and only at a high cost. Ultimately this means that a number of cases in the past where only TSAA was suitable are suddenly opened up to using the much faster TMAA, in essence making good billboard anti-aliasing finally affordable on newer DX9 games on NVIDIA hardware.

As a consequence of this change, TMAA’s tendency to make fake geometry on billboards pop in and out of existence is also solved. Here we have a set of screenshots from Left 4 Dead 2 showcasing this in action. GF100 with TMAA generates softer edges on the vertical bars in this picture, and those softer edges are what eliminate the popping seen on GT200.

Left 4 Dead 2: TMAA on GT200

Left 4 Dead 2: TMAA on GF100
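Why finer coverage stops this kind of popping can be illustrated with a toy 1-D model in Python: a bar 0.1 pixel wide slides across a pixel whose samples sit in evenly spaced columns. The even spacing, the bar width, and the helper names `bar_coverage` and `coverage_over_motion` are all assumptions for illustration; real sample patterns are 2-D and irregular, and this is not how NVIDIA implements TMAA.

```python
def bar_coverage(bar_left: float, width: float, num_samples: int) -> float:
    """Fraction of samples inside a thin vertical bar [bar_left, bar_left + width).

    Toy 1-D model (assumption): sample x positions are evenly spaced columns.
    """
    xs = [(i + 0.5) / num_samples for i in range(num_samples)]
    return sum(bar_left <= x < bar_left + width for x in xs) / num_samples


def coverage_over_motion(width: float, num_samples: int, steps: int = 200) -> list[float]:
    """Bar coverage as the bar slides from the pixel's left edge to its right edge."""
    return [bar_coverage(k * (1.0 - width) / steps, width, num_samples)
            for k in range(steps + 1)]


for n in (8, 32):
    trace = coverage_over_motion(width=0.1, num_samples=n)
    vanished = sum(c == 0.0 for c in trace)
    print(f"{n} samples: coverage {min(trace):.4f}..{max(trace):.4f}, "
          f"bar invisible at {vanished} of {len(trace)} positions")
```

With 8 columns spaced 1/8 pixel apart, the bar regularly slips between samples and its coverage drops to zero, so it vanishes and reappears as it moves. With 32 columns it always hits 3 or 4 samples, so it merely shimmers between 3/32 and 4/32 coverage instead of popping.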



x86 64 - Sunday, January 31, 2010

If we don't know these basic things then we don't know much.

1. Die size

2. What cards will be made from the GF100

3. Clock speeds

4. Power usage (we only know that it’s more than GT200)

5. Pricing

6. Performance

Seems a pretty comprehensive list of important info to me.

nyran125 - Saturday, January 30, 2010

You guys that buy a brand new graphics card every single year are crazy. I'm still running an 8800 GTS 512MB with no issues in any games whatsoever. DX10 was a waste of money and everyone's time. I'm going to upgrade to the highest end of the GF100s, but that's from an 8800 GTS 512MB, so the upgrade is significant. But from a high-end ATI card to GF100?!?! What was the friggin' point in even getting a 200 series card?! Games are only just catching up to the 9000 series now.

Olen Ahkcre - Friday, January 22, 2010

I'll wait till they (TSMC) start using a 28nm (from the planned 40nm) fabrication process on Fermi... The drop in size, power consumption and price, and the rise in clock speed, will probably make it worth the wait. It'll be a nice addition to the GTX 295 I currently have. (Yeah, going SLI and PhysX.)

Zingam - Wednesday, January 20, 2010

Big deal... Until the next generation of consoles, no games will take any advantage of these new techs. So why bother?

zblackrider - Wednesday, January 20, 2010

Why am I flooded with memories of the 20th Anniversary Macintosh?

Zool - Wednesday, January 20, 2010

Tessellation is quite a resource hog on shaders. If you increase polygons tenfold (quite easy even with a basic tessellation factor), the displacement map shaders need to calculate ten times more normals, which of course results in much more detailed displacement. The main advantage of tessellation is that it doesn't need space in video memory, and the read (write?) bandwidth is on chip, but it actually acts as if you had increased the polygons in the game. Lighting, shadows and other geometry-based effects should act as they do on high-polygon models too, I think (at least in Unigine Heaven you have shadows after tessellation where before you didn't have a single shadow). Only the last stage of tessellation, the domain shader, produces actual vertices. The real question would be how well this single(?) domain shader in the Radeons keeps up with the 16 PolyMorph engines (each with its own tessellation engine) in GT300.

That's 1(?) domain shader for 32 stream processors in GT300 (and much closer), against 1(?) for 320 5D units in the Radeon.

If you have too many shader programs that need the new vertex coordinates, the Radeon could end up being really bottlenecked.

Just my thoughts.

Zool - Wednesday, January 20, 2010

Of course ATI's tessellation engine and NVIDIA's tessellation engines can be completely different fixed units. ATI's tessellation engine is surely more robust than a single tessellation engine in NVIDIA's 16 PolyMorph engines, as it's designed to serve all of the shaders.

nubie - Tuesday, January 19, 2010

They have been sitting on the technology since before the release of SLI. In fact, SLI didn't even have 2-monitor support until recently, when it should have had 4-monitor support all along.

nVidia clearly didn't want to expend the resources on making the software for it until it was forced to, as it now is by AMD heavily advertising their version.

If you look at some of their professional offerings with 4-monitor output, it is clear that they have the technology. I am just glad they have acknowledged that it is a desirable feature.

I certainly hope the mainstream cards get 3-monitor output; it will be nice to drive 3 displays. 3 projectors is an excellent application, not only for high-def movies filmed in wider than 16:9 formats, but for games as well. With projectors you don't get the monitor bezel in the way.

Enthusiast multi-monitor gaming goes back to the Quake II days, glad to see that the mainstream has finally caught up (I am sure the geeks have been pushing for it from inside the companies.)

wwwcd - Tuesday, January 19, 2010

Maybe I'll live to see whether NVIDIA still beats AMD/ATI with an offering that leads in price/performance, or even in raw performance regardless of price! :)

AnnonymousCoward - Tuesday, January 19, 2010

They should make a GT10000, in which the entire 300mm wafer is 1 die. 300B transistors. Unfortunately you have to mount the final thing to the outside of your case, and it runs off a 240V line.