Server Guide part 2: Affordable and Manageable Storage

by Johan De Gelas on October 18, 2006 6:15 PM EST - Posted in IT Computing

Parallel SCSI in trouble

Parallel SCSI ran into the same wall as P-ATA, which was limited to 133 MB/s: all kinds of skew and crosstalk problems kept it from using a 160 MHz clock. That 160 MHz clock was necessary to reach the 640 MB/s planned for the next evolution of parallel SCSI, SCSI-640. As a result, SCSI-640 died a silent death.
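The relation between clock rate and peak bandwidth can be sketched in a few lines. This is a minimal illustration, assuming a 16-bit wide bus that transfers data on both clock edges (the usual description of wide Ultra320 SCSI); the function name and parameters are ours, not part of any SCSI tool or API.

```python
def parallel_scsi_mb_per_s(clock_mhz: float,
                           bus_width_bits: int = 16,
                           transfers_per_clock: int = 2) -> float:
    """Peak bandwidth in MB/s of a parallel bus.

    Assumptions (not from the article): 16-bit data path and two
    transfers per clock cycle (data latched on both clock edges).
    """
    bytes_per_transfer = bus_width_bits / 8
    return clock_mhz * transfers_per_clock * bytes_per_transfer


# 80 MHz is the clock implied for SCSI-320 under these assumptions.
print(parallel_scsi_mb_per_s(80))    # SCSI-320
print(parallel_scsi_mb_per_s(160))   # SCSI-640, the clock that proved unreachable
```

Under these assumptions, doubling the bandwidth means doubling the clock on sixteen parallel data lines at once, which is exactly where skew and crosstalk become hard to control.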

As you can use up to 14 disk drives on one shared SCSI bus, and with the fastest SCSI hard disks reaching up to 100 MB/s, the 320 MB/s transfer rate was starting to become a bottleneck in many fileserver-related applications. By design, SCSI is not very efficient when it comes to raw bandwidth. Tests show that 2 Gb/s (200 MB/s) FC offers more raw bandwidth than SCSI-320. Of course, fourteen SCSI disks still do not need to transfer several hundred megabytes per second when running a transactional OLTP database workload, but maximum wire speed wasn't the only concern with parallel SCSI.
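To make the bottleneck concrete, here is a rough sketch using only the figures quoted above. It assumes every disk streams sequentially at its peak rate at the same time, which is a worst case for the bus and not typical of OLTP workloads; the function names are illustrative.

```python
import math


def oversubscription(disks: int, disk_mb_per_s: float, bus_mb_per_s: float) -> float:
    """Ratio of aggregate peak disk throughput to shared bus bandwidth."""
    return disks * disk_mb_per_s / bus_mb_per_s


def disks_to_saturate(disk_mb_per_s: float, bus_mb_per_s: float) -> int:
    """Smallest number of streaming disks whose combined rate fills the bus."""
    return math.ceil(bus_mb_per_s / disk_mb_per_s)


# Figures from the text: 14 disks, 100 MB/s per disk, 320 MB/s shared bus.
print(oversubscription(14, 100, 320))   # how far a full bus exceeds 320 MB/s
print(disks_to_saturate(100, 320))      # disks needed to hit the limit
```

With these numbers, a fully populated bus is oversubscribed more than four times over, and four streaming disks are already enough to saturate it.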

Because SCSI-320 was still backwards compatible with the early SCSI versions, commands were sent at a "back to the eighties" pace: 5 MB/s. This slow rate of sending commands wastes up to 30% of the performance of the SCSI bus. Another big problem was that you could only attach 14 devices to one host bus adapter, which limited the possibilities for expanding your storage in directly attached storage configurations.

The SAS/SATA revolution

SAS is much more than a serialized version of SCSI-320. Instead of writing a - probably boring - essay about the new features in the SAS protocol, let us see what new functionality is available by just looking at real SAS implementations and products. First, we take a look at the LSI Logic SAS3442X-R.

LSI Logic SAS3442X-R: an 8-port 3 Gb/s SAS PCI-X HBA

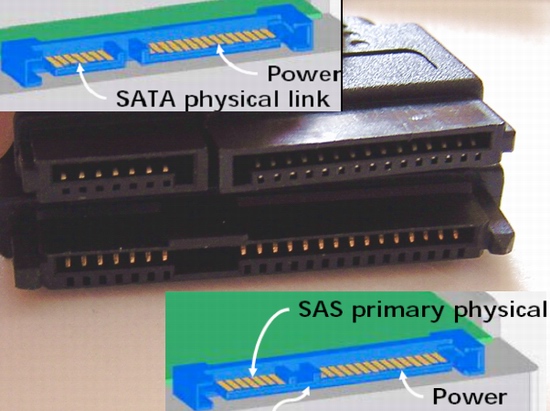

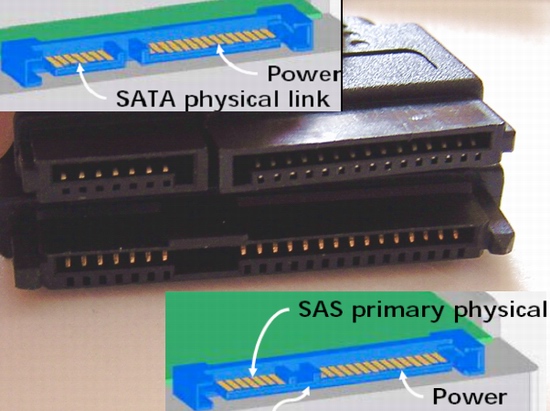

The first thing that you will notice is that you can attach two cables to the internal SAS connector: one for SATA and one for SAS drives. The male connector on our SAS HBA card can connect to the "female" connectors of both SATA and SAS cables, which are slightly different. As you can see below, it is not possible to use the SAS male connector of the disk (the lower drawing in blue and green) with SATA cables. Basically, a SAS HBA supports both SAS and SATA drives, whereas a SATA HBA only supports SATA drives.

The connector on top lets us connect a SATA drive internally to our HBA, and the connector at the bottom lets us connect SAS drives.

SAS is just like TCP/IP or Fibre Channel in that it's a layered protocol. There are three transport protocols that use the same underlying SAS physical and link-layer protocols:

- The Serial SCSI Protocol (SSP), which transports the SCSI commands over the link layer (similar to the Fibre Channel Protocol)

- The Serial ATA Tunnelling Protocol (STP), which transports the SATA frames to the SATA drives

- The Serial Management Protocol (SMP), the protocol which makes it possible to use expanders and to get diagnostic information (does the disk work, is it plugged in?)

The Serial Management Protocol supports expanders, which give SAS a very high degree of flexibility and scalability. The "Port layer" allows wide ports, which enable much more bandwidth than would have been possible with SCSI-320 or SCSI-640. Notice that the Serial SCSI Protocol (SSP) and the Serial ATA Tunnelling Protocol (STP) use the same link and physical layers. This allows SATA and SAS drives on the same expander. Let us put the theory into practice...
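A back-of-the-envelope sketch shows why wide ports matter. It assumes 8b/10b line encoding (10 line bits per data byte), counts one direction only, and ignores protocol overhead; the four-lane port is an illustrative configuration, not a measured result for the card above, and the function names are ours.

```python
def sas_lane_mb_per_s(line_rate_gbps: float) -> float:
    """Payload bandwidth of one SAS lane in MB/s, one direction.

    Assumption: 8b/10b encoding, so each data byte costs 10 line bits.
    """
    return line_rate_gbps * 1000 / 10


def wide_port_mb_per_s(lanes: int, line_rate_gbps: float = 3.0) -> float:
    """Aggregate bandwidth of a wide port that bundles several lanes."""
    return lanes * sas_lane_mb_per_s(line_rate_gbps)


print(sas_lane_mb_per_s(3.0))        # a single 3 Gb/s lane
print(wide_port_mb_per_s(4))         # hypothetical 4-lane wide port
print(wide_port_mb_per_s(4) / 320)   # relative to the shared SCSI-320 bus
```

Under these assumptions, a single lane is already close to the whole SCSI-320 bus, and each lane added to a wide port adds its full bandwidth instead of sharing one bus among all devices.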

21 Comments

dickrpm - Saturday, October 21, 2006

I have a big pile of "Storage Servers" in my basement that function as an audio, video and data server. I have used PATA, SATA and SCSI 320 (in that order) to achieve necessary reliability. Put another way, when I started using enterprise class hardware, I quit having to worry (as much) about data loss.

ATWindsor - Friday, October 20, 2006

What happens if you encounter an unrecoverable read error when you rebuild a RAID-5 array (after a disk has failed)? Is the whole array unusable, or do you only lose the file using the sector which can't be read?

AtW

nah - Friday, October 20, 2006

Actually the cost of the original RAMAC was USD 35,000 per year to lease; IBM did not sell them outright in those days, and the size was roughly 4.9 MB.

yyrkoon - Friday, October 20, 2006

It's nice to see that someone finally did an article that had information about SATA port multipliers (these devices have been around for around 2 years, and no one seems to know about them), but since I have no direct hands-on experience, I feel the article concerning these was a bit skimpy.

Also, while I see you're talking about iSCSI (I think some call it SCSI over IP?) in the comments section here, I'm a bit interested as to why I didn't see it mentioned in the article.

I plan on getting my own SATA port multiplier eventually, and I have a pretty good idea how well they would work under the right circumstances, with the right hardware, but since I do not work in a data center (or some such profession), the likelihood of me getting my hands on a SAS, iSCSI, FC, etc. rack/system is low. What I'm trying to say here is that I think you guys could go a good bit deeper into detail with each technology, and let each reader decide if the cost of product x is worth it for whatever they want to do. In the last several months (close to two years) I've been doing a lot of research in this area, and still find some of these technologies a bit confusing. iSCSI for example: the only documentation I could find on the subject (around 6 months ago) was some sort of technical document, written by Microsoft, that I had a very hard time digesting. Since then, I've only seen (going from memory) white papers from companies like HP pushing their own specific products, and I don't care about their product in particular, I care about the technology, and COULD be interested in building my own 'system' some day.

What I am about to say next, I do not mean as an insult in ANY shape or form; however, I think when you guys write articles on such subjects, you NEED to go into more detail. Motherboards are one thing, hard drives, whatever, but when you get into technology that isn't very common (outside of enterprise solutions) such as SAS, iSCSI, etc., I think you're actually doing your readers a disservice by showing a flow chart or two, and briefly describing the technology. NAS, SAN, etc. have all been done to death, but I think if you look around, you will find that a good article on AT LEAST iSCSI, how it works, and how to implement it, would be very hard to find (without buying a prebuilt solution from a company). Anyhow (again) I think I've beat this horse to death, you get my drift by now I'm sure ;)

photoguy99 - Thursday, October 19, 2006

Great article, well worth it for AT to have this content.

Can't wait for part 2.

ceefka - Thursday, October 19, 2006

Can we also expect a breakdown and benchmarking on network storage solutions for the home and small office?

LoneWolf15 - Thursday, October 19, 2006

Great article. It addressed points that I not only didn't think of, but that were far more useful to me than just baseline performance.

It seems to me that for the moderately-sized business (or "enterprise-on-a-budget" role, such as K-12 education), enterprise-level SATA such as Caviar RE drives in RAID-5, plus solid server backups (which should be done anyway), makes more sense cost-wise than SAS. Sure, the risk of error is a bit higher, but that is why no systems/network administrator in their right mind would rely on RAID-5 alone to keep data secure.

I hope that Anandtech will do a similarly comprehensive article about backup for large storage someday, including multiple methods and software options. All this storage is great, but once you have it, data integrity (especially now that server backups can be hundreds of gigabytes or more) cannot be stressed enough.

P.S. It's one of the reasons I can't wait until we have enough storage that I can enable Shadow Copy on our Win2k3 boxes. Just one more method on top of the existing structure.

Olaf van der Spek - Thursday, October 19, 2006

Why does this simple (non-mechanical) operation take so long?

Fantec - Thursday, October 19, 2006

Working for an ISP, we started to use PATA/SATA a few years ago. We still use SCSI, FC & PATA/SATA depending on our needs. SATA is the first choice when we may have redundant data (and, in this case, disks are set up in JBOD (standalone) for performance reasons). At the other end, FC is only used for NFS filers (mostly used for mail storage, where the average file size is a few KB).

Between the two, we look at the needed storage size & IO load to make up our mind. Even for huge IO loads, but only when the requested block size is big enough, SATA behaves quite well.

Nonetheless, something bugs me in your article about the Seagate test. I manage a cluster of servers whose total throughput is around 110 TB a day (using around 2400 SATA disks). With Seagate's figure (an Unrecoverable Error every 12.5 terabytes written or read), I would get around 10 Unrecoverable Errors every day. Which, as far as I know, is far from what I actually see (a very few per week/month).