AMD & ATI: The Acquisition from all Points of View

by Anand Lal Shimpi on August 1, 2006 10:26 PM EST. Posted in: CPUs

Our Thoughts: The GPU Side

The AMD/ATI acquisition doesn’t make a whole lot of sense on the discrete graphics side if you assume that PC graphics will continue to evolve with the CPU and the GPU kept separate. If you look at things from another angle, one that isn’t too far-fetched we might add, the acquisition is extremely important.

Some game developers have been predicting for quite some time that CPUs and GPUs are on a collision course and will eventually merge into a single device. The idea is that GPUs strive, with each generation, to become more general purpose and more programmable; in essence, with each GPU generation ATI and NVIDIA take one more step toward becoming CPU manufacturers. Obviously the GPU is still geared towards running 3D games rather than Microsoft Word, but at some point the GPU may become general purpose enough that it starts encroaching on the territory of the CPU makers or, better yet, general purpose enough that AMD and Intel want to make their own.

It’s tough to say if and when this convergence between the CPU and GPU would happen, but if it did and you were in ATI’s position, you’d probably want to be allied with a CPU maker in order to have some hope of staying alive. The 3D revolution killed off basically all giants in the graphics industry and spawned new ones, two of which we’re talking about today. What ATI is hoping to gain from this acquisition is protection from being killed off if the CPU and GPU do go through a merger of sorts.

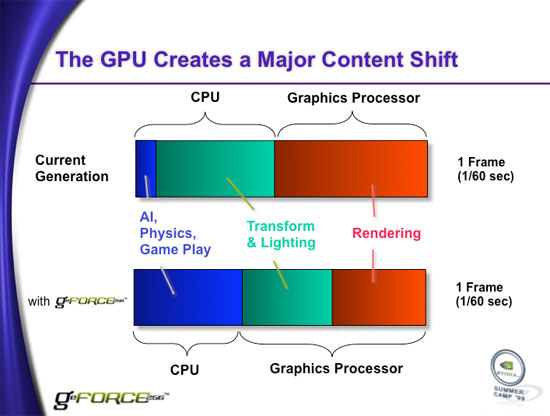

The NVIDIA GeForce 256 was NVIDIA's first "GPU", offloading T&L from the CPU. Who knows what the term GPU will mean in 5 years? Will it be fully contained within today's CPUs?

ATI and NVIDIA both seem to believe that within the next 2 - 3 years, Intel will release its own GPU, and a far more serious one than its current mediocre integrated graphics. Since Intel technically has the largest share of the graphics market thanks to its integrated graphics, it wouldn’t be too difficult for it to take a large chunk of the rest of the market -- assuming Intel can produce a good GPU. Furthermore, if GPUs do become general purpose enough that Intel can leverage much of its expertise in designing general purpose processors, then the possibility of Intel producing a good GPU isn’t too far-fetched.

If you talk to Intel, it's business as usual. GPU design isn’t really a top priority and on the surface everything appears to be the same. However, a lot can happen in two years -- two years ago NetBurst was still the design of the future from Intel. Only time will tell if the doomsday scenario that the GPU makers are talking about will come true.

61 Comments

sykemyke - Wednesday, August 9, 2006

Hey, why don't we just put an FPGA block on the CPU? This way, programmers could create really new assembly commands, like 3DES or something.

unclebud - Monday, August 7, 2006

it was good to just read any sort of article from the site owner. was feeling that the reviews section had just fallen into the depths of fanboyism, so it was good just to hear somebody at least sometimes impartial THINKING out loud rather than just showing off.

what's really interesting to me is that the whole article mimics what was written in the latest (i think) issue of cpu from selfsame author.

good issue incidentally. will buy it from wal-mart hopefully tomorrow (they have 10% off magazines)

cheers, and keep representing -- i still have the 440bx benchmarks/reviews filed away in a notebook

jp327 - Sunday, August 6, 2006

I'm not a gamer so I usually don't follow the video segment, but looking at the Torrenza slide on page 2 (this article), I can't help but see the similarity between what AMD forecasts and the PS3's Cell architecture:

http://www.anandtech.com/showdoc.aspx?i=2379&p... cell

http://www.anandtech.com/showdoc.aspx?i=2768&p... K8L

Doesn't AMD have a co-op of some sort w/ IBM?

RSMemphis - Sunday, August 6, 2006

I thought you guys already knew this, but apparently not. Most likely, there will be no Fab 30; it will be re-equipped to be Fab 38, 300 mm with 65 nm features.

Considering all the aging Fabs out there, it makes sense to have the 90 nm parts externally manufactured.

xsilver - Saturday, August 5, 2006

of the 5.4b of ATI's purchase price, is most of that due to intellectual property? i mean as you state, ATI has no fabs.

and then regarding the future of GPU's, with CPU's now becoming more and more multithreaded, couldnt it be fathomable that some of the work be moved back to the cpu in order to fill that workload?

unless of course gpus are also going multithreaded soon? (on die, not just SLI)

eugine MW - Saturday, August 5, 2006

I had to register just to say well written article. It has provided me with much more information regarding the merger than any other website. Greatly written.

MadBoris - Thursday, August 3, 2006

How is the GPU on a CPU even considered a good idea by anyone? GPU bandwidth + CPU bandwidth = how the hell are mobo buses and chipsets going to handle all that competing bandwidth from one socket? Either way there is a crazy amount of conflicting bandwidth from one socket, and I doubt it can be done without serious thrashing penalties.

When I want to upgrade my video card, I have to buy some $800 CPU/GPU combo. :O

Call me crazy, but that sounds like an April fools joke. But who's kidding who?

It's doom and gloom for PC gaming, and AMD just made it worse.

JarredWalton - Thursday, August 3, 2006

Considering that we have the potential for dual socket motherboards with a GPU in the second socket, or a "mostly GPU CPU" in the second socket, GPU on CPU isn't terrible. Look at Montecito: 1.7 billion transistors on a CPU. A couple more process transitions and that figure will be common for the desktop CPUs.

What do you do with another 1.4 billion transistors if you don't put it into a massive L2/L3 cache? Hmmm... A GPU with fast access to the CPU, maybe multiple FSBs so the GPU can still get lots of bandwidth, throw on a physics processor, whatever else you want....

Short term, GPU + CPU in a package will be just a step up from current IGPs, but long term it has a lot of potential.

dev0lution - Thursday, August 3, 2006

1. There was no mention of the channel in this article, which is the vehicle by which most of these products make it to market. Intel and Nvidia have a leg up on any newly formed ATI/AMD entity, in that they make sure their partners make money and are doing more and more to reward them for supporting their platforms. AMD has been somewhat confused lately, trying to keep their promises to their partners on the one hand while trying to meet sales goals on the other.

2. Intel and Nvidia could ramp up their partnership a whole lot quicker than AMD/ATI can (no pesky merger and integrating cultures to worry about), so now you have Nvidia with a long term, very gradual share shift on the AMD side with a quicker ramp up on the Intel side of things to replace ATI's share. Intel and Nvidia in the short term end up doing pretty well, with plenty of time to develop next gen platforms to compete with whatever the long term AMD/ATI roadmap looks like.

3. AMD/ATI got more publicity and PR over this whole deal than they probably could have gotten with their annual marketing budgets combined. Everyone inside and outside the tech world has been talking about this merger, which isn't a bad way to get brand recognition for no additional investment.

s1wheel4 - Wednesday, August 2, 2006

This will be the end of AMD and ATI as we know them today, and the end of both in the high end enthusiast market. Once merged, the new company will be nothing more than a mediocre company that lags behind Intel and NVIDIA in performance.