Supermicro Ultra SYS-120U-TNR Review: Testing Dual 10nm Ice Lake Xeon in 1U

by Dr. Ian Cutress on July 22, 2021 9:00 AM EST

With the launch of Intel’s Ice Lake Xeon Scalable platform comes a new socket and a range of features that vendors like Supermicro have to design for. The server and enterprise market is so vast that every design can come in a range of configurations and settings, but one of the key elements is balancing compute density with memory and accelerator support. The SYS-120U-TNR we are testing today is a dense system with lots of trimmings, all within a 1U chassis, which Supermicro is aiming at virtualization workloads, HPC, Cloud, Software Defined Storage, and 5G. This system can be equipped with up to 80 cores, 12 TB of memory, and four PCIe 4.0 accelerators, making it a high-end solution from Supermicro.

Servers: General Purpose or Hyper Focused?

Because the server and enterprise market is both expansive and highly optimized, vendors like Supermicro have to decide how to partition their server and enterprise offerings. Smaller vendors might choose to target one particular customer, or go for a general purpose design, whereas the larger vendors can have a wide portfolio of systems for different verticals. Supermicro falls into this latter category, designing targeted systems with large customers, but also enabling ‘standard’ systems that can do a bit of everything but still offer good total cost of ownership (TCO) over the lifetime of the system.

Server size compared to a standard 2.5-inch SATA SSD

When considering a ‘standard’ enterprise system, in the past we have typically observed a dual socket design in a 2U (3.5-inch, 8.9cm height) chassis, which allows for a sufficient cooling design along with a number of add-in accelerators such as GPUs or enhanced networking, or space on the front panel for storage or additional cooling. The system we’re testing today, the SYS-120U-TNR, certainly fits this ‘standard’ definition, although Supermicro takes the additional step of optimizing for density by cramming everything into a 1U chassis.

With only 1.75 inches (4.4cm) of vertical clearance on offer, cooling becomes a priority, which means substantial heatsinks and fast-moving airflow backed by 8 powerful double-thick 40mm fans, which run at up to 30k RPM with PWM control. The SYS-120U-TNR we’re testing supports 2 Ice Lake Xeon processors at up to 40 cores and 270 W each, as well as add-in accelerators (one dual slot full height + two single slot full height), and comes equipped with dual 1200W Titanium or dual 800W Titanium power supplies, indicating that it is ready should a customer want to fill it with plenty of hardware. As you can see in the image above and on the right of the image below, Supermicro uses plastic baffles to ensure that airflow through the heatsink and memory is as laminar as possible.

LGA-4189 Socket with 1U Heatsink and 16 DDR4 slots

Even with the 1U form factor, Supermicro has enabled full memory support for Ice Lake Xeon, giving each processor sixteen DDR4-3200 memory slots (32 in total), capable of supporting up to 12 TB of memory with Intel’s Optane DCPMM 200-series.
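As a quick sanity check on those capacity figures, the arithmetic can be sketched as below. The module counts and sizes are the ones listed in the specification table further down; the helper name `total_tb` is our own and purely illustrative.

```python
# Capacity arithmetic for the two maximum memory configurations quoted for this system.
GB_PER_TB = 1024

def total_tb(modules):
    """Sum a list of (count, size_in_GB) pairs and return the total in TB."""
    return sum(count * size_gb for count, size_gb in modules) / GB_PER_TB

# DRAM only: all 32 slots filled with 256 GB LRDIMMs.
dram_only_tb = total_tb([(32, 256)])

# Optane mix: 16 x 512 GB Optane modules plus 16 x 256 GB LRDIMMs.
optane_mix_tb = total_tb([(16, 512), (16, 256)])

print(f"DRAM only:  {dram_only_tb:.0f} TB")
print(f"Optane mix: {optane_mix_tb:.0f} TB")
```

The 12 TB headline figure therefore depends on half of the slots holding Optane modules; with DRAM alone, the ceiling works out to 8 TB.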

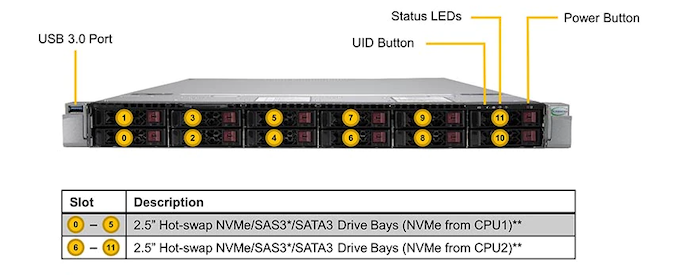

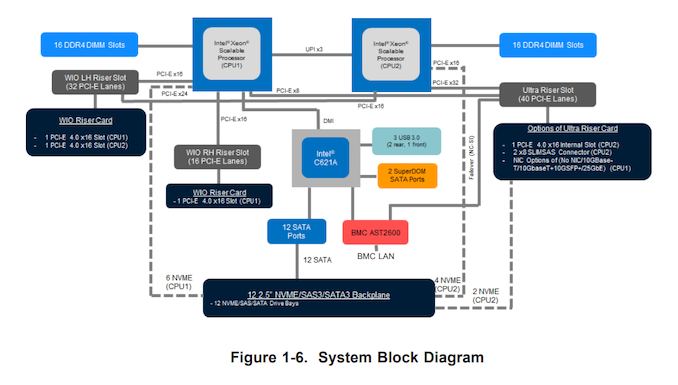

At the front are 12 2.5-inch SATA/NVMe PCIe 4.0 x4 hot swappable drive bays, with six apiece coming from each processor. If we start looking into where all the PCIe lanes from each processor go, it gets a bit confusing very quickly:

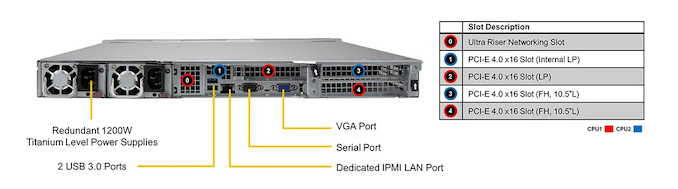
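To get a feel for why the lane map fills up so quickly, here is a rough per-socket budget for the front bays alone. It is a back-of-the-envelope sketch, not Supermicro's actual routing: the figure of 64 PCIe 4.0 lanes per Ice Lake Xeon socket is an assumption brought in from Intel's platform specifications, and all of the variable names are ours.

```python
# Rough PCIe lane budget per socket if every front bay is populated with NVMe.
LANES_PER_CPU = 64   # assumption: PCIe 4.0 lanes available per Ice Lake Xeon socket
BAYS_PER_CPU = 6     # from the text: six front drive bays hang off each processor
LANES_PER_BAY = 4    # each bay is PCIe 4.0 x4

# Lanes consumed by the front bays on one socket.
bay_lanes = BAYS_PER_CPU * LANES_PER_BAY

# Lanes left on that socket for x16 slots, the Ultra riser, and anything else.
remaining = LANES_PER_CPU - bay_lanes

print(f"Front bays use {bay_lanes} lanes per socket")
print(f"{remaining} lanes per socket remain for slots and risers")
```

Under those assumptions, a fully populated NVMe front panel takes more than a third of each processor's lanes before any accelerator or network card is considered.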

By default the system comes without network connectivity, with only a BMC connection for admin control. Adding networking requires an Ultra add-in riser card for dual 10GBase-T (X710-AT2), or dual 10GBase-T plus dual 10GbE SFP+ (X710-TM4). With the PCIe connectors, any other networking option can be configured, but Supermicro also lists the complete no-NIC option for air-gapped systems. The system also has three USB 3.0 ports (2 rear, 1 front), a rear VGA output, a rear COM port, and two SuperDOM ports internally.

Admin control comes from the Aspeed AST2600 which supports IPMI v2.0, Redfish API, Intel Node Manager, Supermicro’s Update Manager, and Supermicro’s SuperDoctor 5 monitoring interface.
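For readers who have not used Redfish, the sketch below shows the general shape of the interface: a REST API that returns JSON, with the list of managed systems exposed at the standard `/redfish/v1/Systems` path. This is an illustration only. The hostname `bmc.example.com` is hypothetical, the sample payload is hand-written rather than captured from this server, and the two helper functions are our own names.

```python
import json

def systems_url(bmc_host):
    """Build the standard Redfish URL for the ComputerSystem collection."""
    return f"https://{bmc_host}/redfish/v1/Systems"

def member_paths(collection):
    """Extract the @odata.id of every member in a Redfish collection."""
    return [member["@odata.id"] for member in collection.get("Members", [])]

# Hand-written sample payload for illustration; not captured from a real BMC.
sample = json.loads("""
{
  "Members": [{"@odata.id": "/redfish/v1/Systems/1"}],
  "Members@odata.count": 1
}
""")

print(systems_url("bmc.example.com"))  # hypothetical BMC hostname
print(member_paths(sample))
```

In practice, the URL would be fetched with an authenticated HTTPS GET against the BMC's dedicated LAN port, and each returned path could then be queried in turn for details such as power state or health.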

The configuration Supermicro sent to us for review contains the following:

- Supermicro SYS-120U-TNR

- Dual Intel Xeon Gold 6330 CPUs (2x28-core, 2.5-3.1 GHz, 2x205W, 2x$1894)

- 512 GB of DDR4-3200 ECC RDIMMs (16 x 32 GB)

- Dual Kioxia CD6-R 1.92TB PCIe 4.0 x4 NVMe U.2

- Dual 10GBase-T via X710-AT2
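A few totals for this review configuration follow directly from the list above, sketched here for convenience. The figure of eight memory channels per Ice Lake Xeon socket is an assumption taken from Intel's platform specifications rather than from the list, and the variable names are ours.

```python
# Totals derived from the review configuration listed above.
SOCKETS = 2
CORES_PER_CPU = 28           # Xeon Gold 6330
CPU_LIST_PRICE_USD = 1894    # per CPU, as quoted above
DIMM_COUNT = 16
DIMM_SIZE_GB = 32
CHANNELS_PER_SOCKET = 8      # assumption: Ice Lake Xeon memory channels per socket

total_cores = SOCKETS * CORES_PER_CPU
total_memory_gb = DIMM_COUNT * DIMM_SIZE_GB
memory_per_core_gb = total_memory_gb / total_cores
cpu_list_cost = SOCKETS * CPU_LIST_PRICE_USD
dimms_per_channel = DIMM_COUNT / (SOCKETS * CHANNELS_PER_SOCKET)

print(f"Cores: {total_cores}")
print(f"Memory: {total_memory_gb} GB ({memory_per_core_gb:.1f} GB per core)")
print(f"CPU list cost: ${cpu_list_cost}")
print(f"DIMMs per channel: {dimms_per_channel:.0f}")
```

If the eight-channel assumption holds, sixteen DIMMs populate every memory channel exactly once, leaving the second slot on each channel free.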

Full support for the system includes:

| Supermicro SYS-120U-TNR | |
|---|---|
| AnandTech | Info |
| Motherboard | Super X12DPU-6 |
| CPUs | Dual Socket P+ (LGA-4189); supports 3rd Gen Ice Lake Xeon; up to 270W TDP, 40C/80T; 7+1 phase design per socket |
| DRAM | 32 DDR4-3200 ECC slots; supports RDIMM, LRDIMM; up to 8 TB (32 x 256 GB LRDIMM); up to 12 TB (16 x 512 GB Optane + 16 x 256 GB LRDIMM) |
| Storage | 12 x SATA front panel; optional PCIe 4.0 x4 NVMe cabling |
| PCIe | PCIe 4.0 x16 Low Profile; PCIe 4.0 x16 Low Profile (Internal); 2 x PCIe 4.0 x16 Full Height (10.5-inch length); Ultra Riser for Networking |
| Networking | None by default; optional X710-AT2 dual 10GBase-T; optional X710-TM4 dual 10GBase-T + SFP+ |
| IO | RJ45 BMC via Aspeed AST2600; 3 USB 3.0 Ports (2 rear, 1 front); VGA BMC; 1 x COM; 2 x SuperDOM |
| Fans | 8 x 40mm double thick, 30k RPM with control; 2 shrouds, 1 per CPU socket+DRAM |
| Power | 1200W Titanium Redundant, Max 100A |
| Chassis | CSE-119UH3TS-R1K22P-T |
| Management Software | IPMI 2.0 via Aspeed AST2600; Supermicro OOB License included; Redfish API; Intel Node Manager; KVM with Dedicated LAN; SUM; NMI; Watch Dog; SuperDoctor 5; ACPI Power Management |
| Optional | 2x M.2 RAID Carrier; Broadcom Cache Vaults; Intel VROC RAID Key; RAID Cards + Cabling; Hardware-based TPM; Ultra Riser Cards |
| Note | Sold as assembled system to resellers (2 CPU, 4xDDR, 1xStorage, 1xNIC) |

We reached out to Supermicro for some insight into how this system might be configured for the different verticals.

| Supermicro Ultra-E SYS-120U-TNR Configuration Variants | | | | |
|---|---|---|---|---|
| AnandTech | CPU | Memory | Storage | Add-In |
| Virtualization | ++ | ++ | | |
| HPC | ++ | + | | |
| Cloud Computing | handles all mainstream configs | | | |
| High-End Enterprise | ++ | ++ | ++ | ++ |
| Software Defined Storage | + or 2U | | | |
| Application aaS | + | + | + | + |
| 5G/Telco | Ultra-E Short-Depth Version | | | |

Read on for our benchmark results.

53 Comments


hetzbh - Thursday, July 22, 2021

So ... 70% of the sales win are due to AVX 512? Better hope that Intel finds another strategy as AMD EPYC Genoa adds AVX 512 support as well..

Kamen Rider Blade - Thursday, July 22, 2021

I concur, don't forget that they're getting Stacked L3 3D V-Cache.

The Vorlon - Saturday, July 24, 2021

Zen with 3D cache vs Sapphire Rapids with HBM should be interesting.

Early returns from Alder Lake (same Golden Cove core as Sapphire) suggest Intel will have a +/- 25% IPC lead, but AMD will retain a large power consumption advantage - it is hard to project how much of this IPC Intel will need to give back to keep reasonable thermals. HBM should outperform 3D cache by a good margin, but given what we (think) we know about yields AMD may still have a lead in core counts...

Fun times ahead!

Jorgp2 - Thursday, July 22, 2021

Lol, no.

mode_13h - Thursday, July 22, 2021

I wonder how much of this is customers simply buying into the performance story of AVX-512 and purchasing the promise vs. actually having AVX-512 workloads where it proves worthwhile.

zepi - Thursday, July 22, 2021

There are multiple reasons to buy Intel.

One often critical one is availability / lead time. Second one is that some software is not supported on AMD or you need to pay additional HW validation bills and subject yourself to intricate details of tuning your network stack / firmwares / network cards and whatnot when you run a bit more special software.

mode_13h - Thursday, July 22, 2021

> There are multiple reasons to buy Intel.

Sure, but I'm not asking about that. I'm asking about the specific claim quoted in the article and mentioned by @hetzbh.

edzieba - Thursday, July 22, 2021

"I wonder how much of this is customers simply buying into the performance story of AVX-512 and purchasing the promise vs. actually having AVX-512 workloads where it proves worthwhile."

Very little: in this realm you don't buy hardware based on specs and review benchmarks, you have samples in house running your ACTUAL workload to determine real performance before rolling it out at scale.

mode_13h - Thursday, July 22, 2021

And you know this from experience, or are you just speculating? And if the former, were you on the sales side or a volume purchaser?

domih - Thursday, July 22, 2021

I know that by experience. I spent two months testing 3 different racks of 20 units (two major brands and a "no brand" OEM) to advise a large purchasing customer on which ones to select. The final advice is not reduced to a Yes or No but rather the pros and cons of each system. The best benchmark results are not the only factor: software compatibility, manuals, professional services, training programs, existing relations with vendor, other support and pricing (final deal) count a lot too. In the end, using a comprehensive check list, you may select not the fastest system or even a system that has bugs (but with workarounds) due to significant difference in pricing or other arrangements. Utterly different from the DIY market. Analogy: if you're going to buy 100 x 18-wheel trucks, you're going to spend quite some time evaluating the possible candidates and it will take time. Utterly different than going to a car dealer and buying a car on the spot.