Marvell Announces OCTEON 10 DPU Family: First to 5nm with N2 CPUs

by Andrei Frumusanu on June 28, 2021 6:30 AM EST

It’s been a little over a year since we covered Marvell’s OCTEON TX2 infrastructure processors, and since then, the ecosystem has been evolving in an extremely fast manner – both within Marvell and outside. Today, we’re covering the new generation OCTEON 10 family of DPUs, a whole new family of SoCs, built upon TSMC’s 5nm process node and also for the featuring for the first time Arm’s new Neoverse N2 processors.

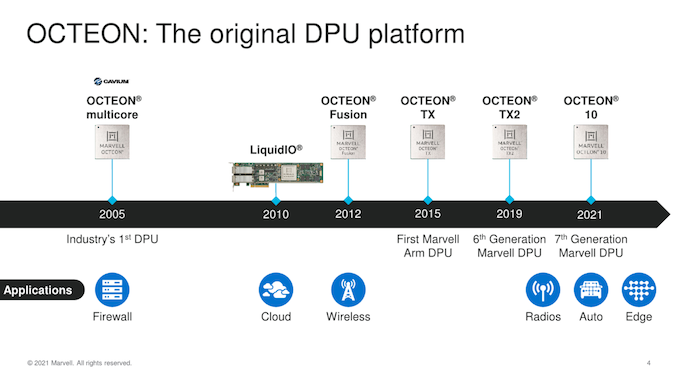

Starting off with a bit of history and nomenclature, Marvell is adopting the “DPU” term for this class of chip and accelerator type. The previous generation OCTEON TX and OCTEON TX2 already were DPUs in everything but name, previously simply being referenced as “infrastructure processors”. With the recent industry rising popularity of the term as well as competitor solutions being propped up, it seems we’re seeing the DPU term now being widely accepted nomenclature for this type of versatile chip design, defined by the fact that it’s an entity that helps process and move data while it travels through the network.

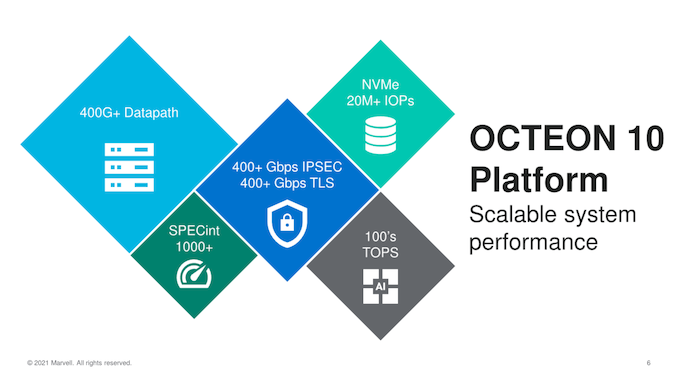

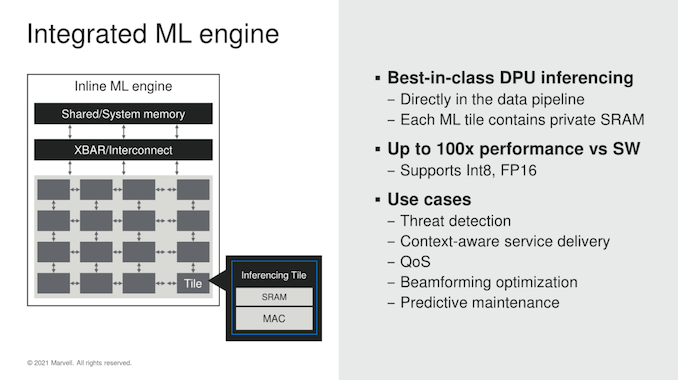

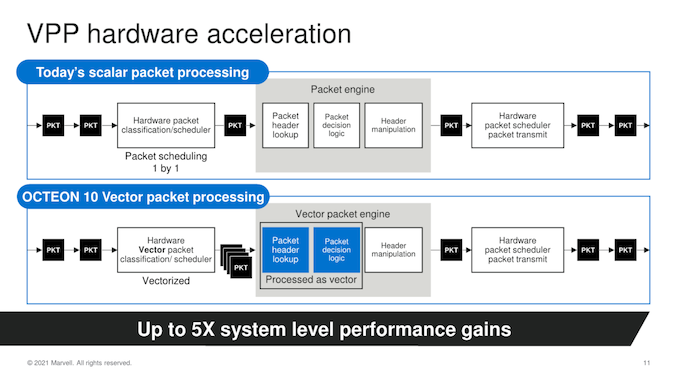

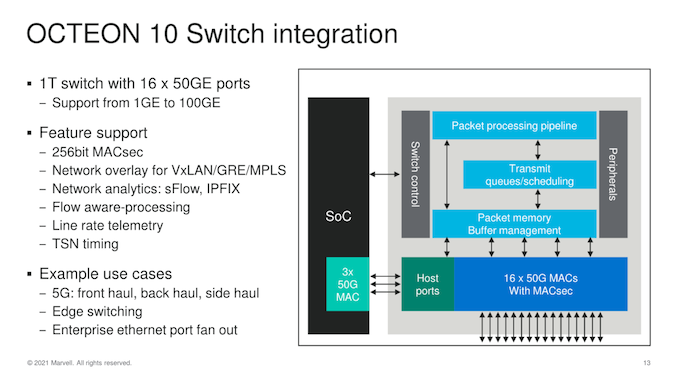

Starting with an overview, the new OCTEON 10 generally features the same versatile array of building blocks we’ve seen in the previous generation, this time upgraded to the new state of the art IP blocks, and also introducing some new features such as integrated machine learning inference engine, new inline and crypto processors as well as vector packet processors, all able to operated in a virtualised manner.

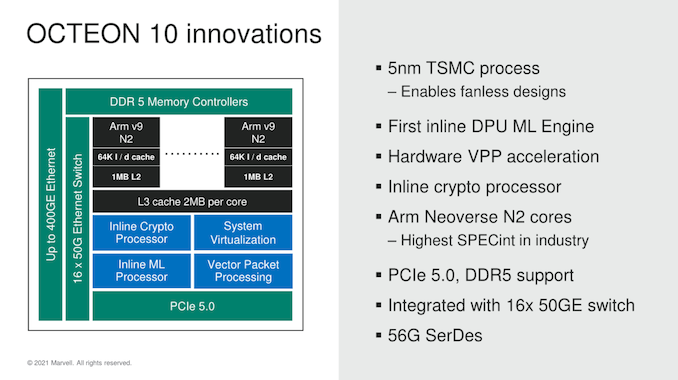

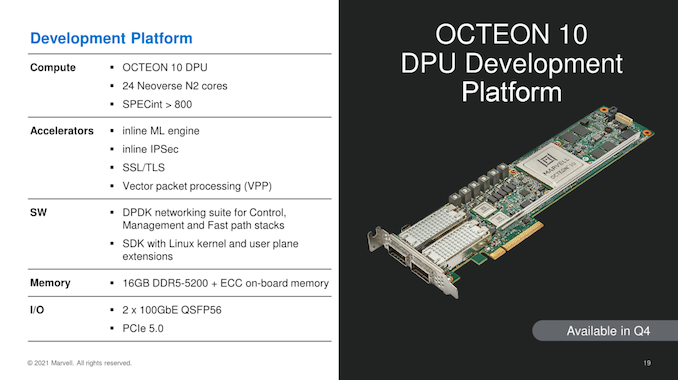

This is also Marvell’s first TSMC N5P silicon design, actually the first DPU of its kind on the new process, and also the first publicly announced Neoverse N2 implementation, featuring the newest PCIe 5.0 I/O capabilities as well as DDR5 support.

Starting off with what Marvell views as an important addition to the DPU, is a new in-house ML engine. Marvell had stated that the design for the IP had actually been originally created for a dedicated inference accelerator, and actually had been completed last year, but with Marvell opting to not bring it to market due to the extremely crowded competitive landscape. Instead, Marvell has opted to integrate the ML accelerator into their OCTEON DPU chips. Marvell here states that having the inference accelerator on the same monolithic silicon chip, directly integrated into the data pipeline is extremely important in achieving the low latency for higher throughput processing required for these kinds of data stream use-cases.

Essentially Marvell here is offering a competitor solution to Nvidia’s next-gen BlueField-3 DPU in terms of AI processing capabilities well ahead in terms of product generation, as the first OCTEON 10 solutions are expected to be sampling by end of this year while Nvidia projected BF3 to be arriving in 2022.

Also, a new capability of the new OCTEON 10 family is the introduction of vector packet processing engines, which are able to vastly augment the packet processing throughput by a factor of 5x compared to the current generation scalar processing engines.

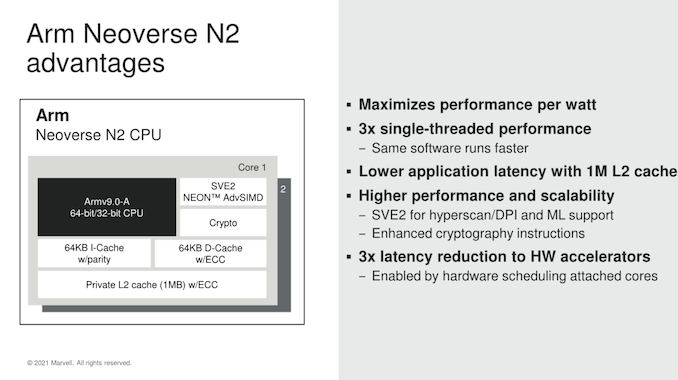

As noted, the new OCTEON 10 DPU family is the first publicly announced silicon design featuring Arm’s newest Neoverse N2 infrastructure CPU IP. We had covered the N2 and its HPC V1 sibling a couple of months ago – the jist of it is that the new generation core is the first Armv9 core from Arm and promises large 40% IPC gains in comparison to the current N1 core seen in Arm server CPUs such as the Amazon Graviton2 or Ampere Altra.

For Marvell, the performance improvements are even more significant as the company is switching over from the company’s previous in-house “TX2” CPU IP for the N2 core, promising a massive 3x higher single-threaded performance uplift. Late last year, Marvell had announced that it had stopped its own CPU IP in favour of Arm’s Neoverse cores, and today reiterated that the company is planning to stick to Arm’s roadmap for the foreseeable future, a large endorsement of Arm’s new IP which comes at bit of a contrast to other industry players such as Ampere or Qualcomm.

Important for DPU use-cases is the fact that this is a Armv9 CPU which also has SVE2 support, containing new important instructions that help data-processing and machine learning capabilities. This actually would be a large IP advantage over Nvidia’s BlueField3 DPU design that still “only” features Cortex-A78 cores which are Armv8.2+.

Marvell uses the full cache configuration options for their N2 implementations, meaning 64KB L1I and L1D caches, as well as the full 1MB of L2. The company’s integration into the SoC however continues to use their own internal mesh network solution – on a very high level this still looks similar in terms of basic specs, with 256bit datapaths in the mesh, and also a shared L3 containing 2MB cache slices, scaling up in number along with the core count.

In terms of switch integration and network throughput, Marvell integrated a 1 Tb/s switch with up to 16 x 50G MACs – it’s not be noted though that the capabilities here are going to vary a lot based on the actual SKU and chip design in the family.

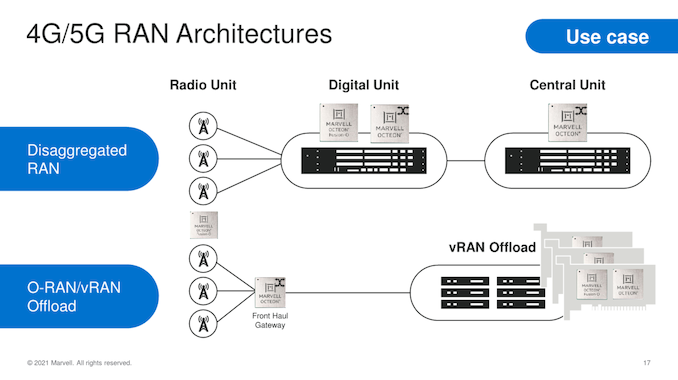

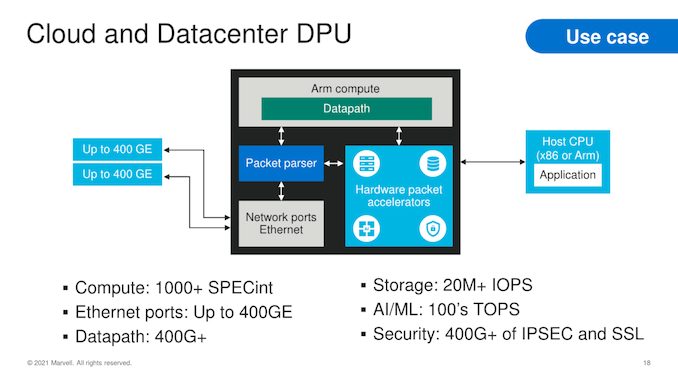

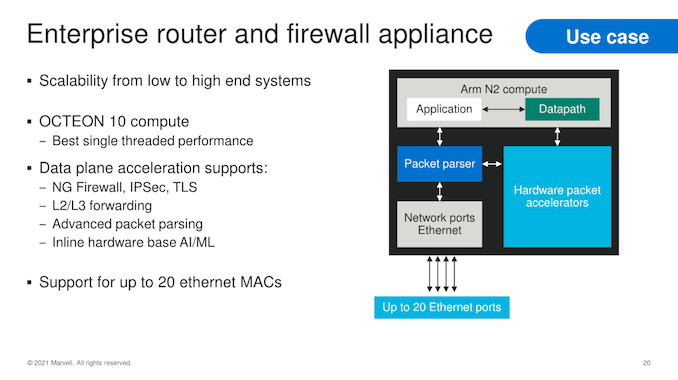

In terms of use-cases, the OCTEON 10 family covers a wide range of applications from the 4G/5G RAN Digital Units or Central Units, Front Haul Gateways or even vRAN Offload processors. In the cloud and datacentre, the solutions can offer a wide array of versatility in terms of compute and network throughput performance, while for enterprise use-cases, the family offers deeply integrated packet processing and security acceleration features.

The first OCTEON 10 product and samples will be based on the CN106XX design with 24 N2 cores and 2x 100GbE QSFP56 ports on a PCIe 5.0 form-factor, available for Q4.

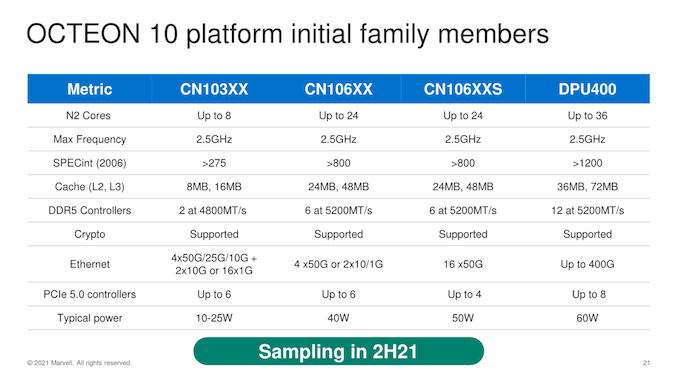

In terms of specifications, Marvell gives a breakdown of the various OCTEON 10 family designs:

Slide note: DDR5 controllers in this context refers to 40-bit channels (32+8bit ECC). Marvell also states that it still uses SPECint2006 due to its historical importance in regards to comparing to previous generation, and competitor solutions – it will publish 2017 estimates once the first silicon is ready.

The CN106XX is the first chip design of the OCTEON 10 family, taped out and expected to sample in the latter half of this year. Beyond this first chip, Marvell has 3 other OCTEON 10 designs in the form of the lower-end CN103XX with just 8 N2 cores and low TDPs of 10-25W, and two higher-end CN106XXS with improved network connectivity, and finally the DPU400 flagship with up to a massive 36 N2 cores and featuring the maximum amount of processing power and network connectivity throughput. What’s very exciting to see is that even with the largest implementations, the TDP only reaches 60W, which is far below the current generation CN98XX Octeon TX2 flagship implementation which lands in at 80-120W. These additional parts are yet to be taped out, and are planned to be sampled throughout 2022.

Marvell states that it’s been the industry leader in terms of DPU shipments, and is prevalent in all large datacentre deployments. This new Octeon 10 generation certainly seems extremely aggressive from a technology standpoint, featuring leading edge IP as well as manufacturing processes, which should give Marvell a notable advantage in terms of performance and power efficiency over the competition in the fast-evolving DPU market.

18 Comments

View All Comments

SarahKerrigan - Monday, June 28, 2021 - link

That looks like a really, really well-balanced accelerator.(I also appreciate that they didn't skimp on L3 like a lot of Neoverse designs have.)

Wilco1 - Monday, June 28, 2021 - link

The top model beats Graviton 2 on SPECINT due to having *twice* the performance per core. It also has twice as much cache and memory bandwidth per core. And all that at 60W...It's a monster and likely outperforms most servers it will be offloading!

SarahKerrigan - Monday, June 28, 2021 - link

At least for LLC, it's 4x the cache per core. Octeon10 is 2MB/core, Grav2 is 512KB/core.mode_13h - Monday, June 28, 2021 - link

For stateful packet processing, they really need as much cache as possible. The amount of context they can hold on chip can become a serious limiting factor. Because there's no SMT, the core is just sitting idle if you have to go off-chip for some connection-specific state.mode_13h - Monday, June 28, 2021 - link

...and the usual trick of masking it with hardware prefetchers won't work at all, because they can't know which connection the next packet belongs to.brucethemoose - Friday, July 2, 2021 - link

Isn't Marvell the one who made a 4 or 8-way SMT ARM core, then abandoned it, presumably because it was too niche?This seems like a perfect use case. A shame it had to go...

mode_13h - Saturday, July 3, 2021 - link

Seems that Cavium's Thunder X2 and X3 had SMT-4. And yes, they did get bought by Marvell.At that point, you could really reduce your OoO window and still probably get good utilization. Packet processing is one of those embarassingly parallel problems, so there should be no problem with workload scaling (or side-channel attacks, for that matter).

Oh well. Perhaps somebody else might have a go at it. Maybe we'll start to see some SMT RISC-V cores, especially now that Linux has "core scheduling" to better manage SMT sidechannel vulnerabilities.

Since this appears to be in a proprietary core, even Marvell could reverse course and drop in a SMT-based solution, at some point.

mode_13h - Monday, June 28, 2021 - link

> Nvidia’s BlueField3 DPU design that still “only” features Cortex-A78 coresI didn't believe this, given its projected launch date, but it turns out to be right in the GTC 2021 keynote slides!

https://images.anandtech.com/doci/16611/17056937.j...

eastcoast_pete - Tuesday, June 29, 2021 - link

Interesting units, especially for 5G base stations and networking. Notice how they emphasize "fanless" operation in the slides! Curious how those compare (if at all) with Intel's x86-based offerings with some ML thrown in? Also, how do these compare with whatever Huawei has or had before they were booted from TSMC's advanced nodes?mode_13h - Tuesday, June 29, 2021 - link

Should be: https://en.wikipedia.org/wiki/HiSilicon#Kunpeng_93...For more, see: https://fuse.wikichip.org/news/2274/huawei-expands...

I don't know if any of this stuff is (still) accurate.