A Broadwell Retrospective Review in 2020: Is eDRAM Still Worth It?

by Dr. Ian Cutress on November 2, 2020 11:00 AM EST

Gaming Tests: F1 2019

The F1 racing games from Codemasters have been popular benchmarks in the tech community, mostly for their ease of use and because they seem to take advantage of any area of a machine that might be better than another. The 2019 edition of the game features all 21 circuits on the calendar for that year, and includes a range of retro models and DLC focusing on the careers of Alain Prost and Ayrton Senna. Built on the EGO Engine 3.0, the game has been criticized, like most annual sports games, for not offering enough season-to-season graphical fidelity updates to make investing in the latest title worth it. However, the 2019 edition revamps the Career mode, with features such as in-season driver swaps coming into the mix. The quality of the graphics this time around is also superb, even at 4K low or 1080p Ultra.

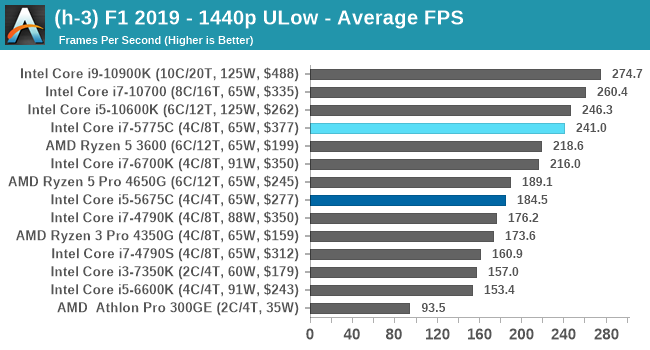

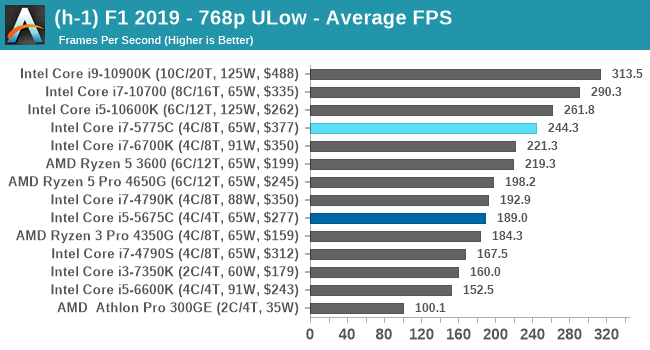

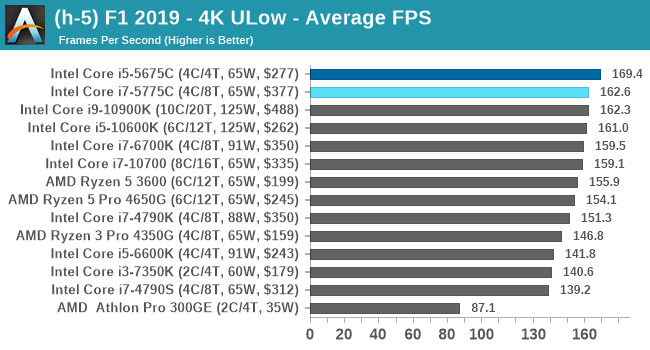

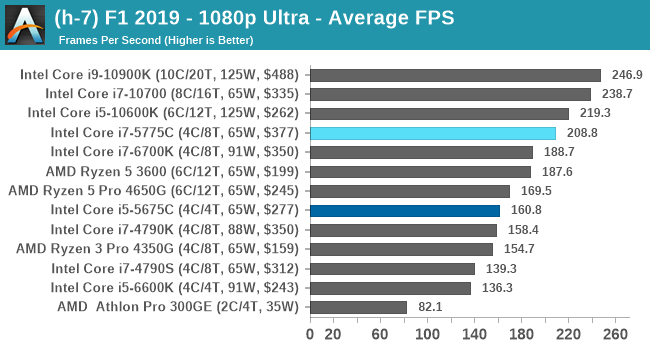

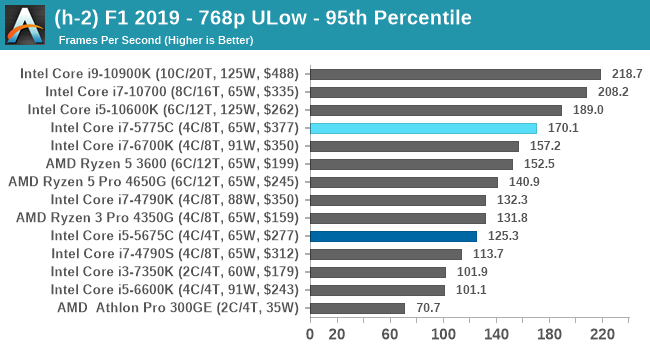

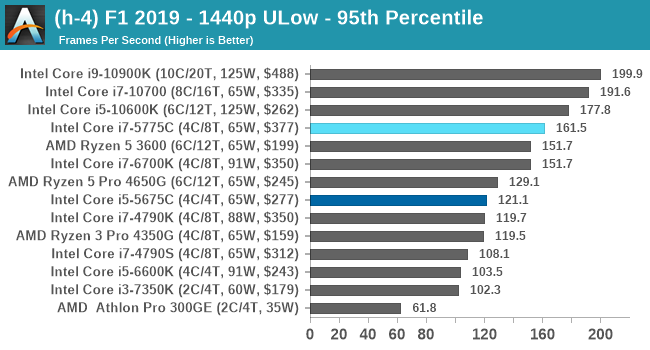

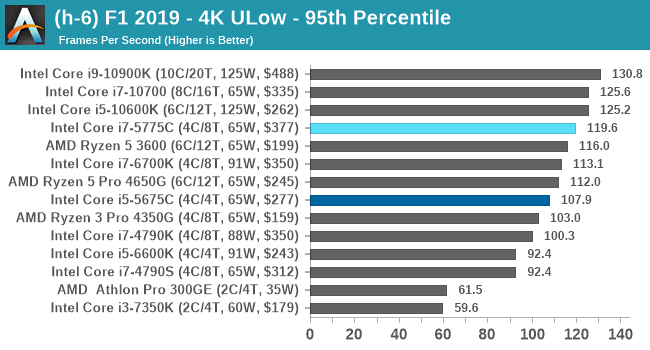

For our test, we put Alex Albon in the Red Bull in position #20, for a dry two-lap race around Austin. We test at the following settings:

- 768p Ultra Low, 1440p Ultra Low, 4K Ultra Low, 1080p Ultra

In terms of automation, F1 2019 has an in-game benchmark that can be called from the command line, and the output file has frame times. We repeat each resolution setting for a minimum of 10 minutes, taking the averages and percentiles.
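As a rough illustration of the "averages and percentiles" step, the sketch below reduces a list of frame times to the two figures reported in the table. It is not the actual analysis script used for this review: the function name `summarize_frame_times`, the assumption that frame times are in milliseconds, and the nearest-rank percentile method are all choices made for this example. Here the 95th percentile figure is taken to mean the frame rate that corresponds to the 95th percentile frame time, so 95% of frames are at least that fast.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average_fps, p95_fps) for a list of frame times in milliseconds.

    Hypothetical helper for illustration only; the units and the
    nearest-rank percentile method are assumptions, not the review's method.
    """
    if not frame_times_ms:
        raise ValueError("no frame times supplied")
    if any(t <= 0 for t in frame_times_ms):
        raise ValueError("frame times must be positive")

    count = len(frame_times_ms)

    # Average FPS is total frames divided by total elapsed time, which
    # weights long frames correctly (unlike averaging per-frame FPS values).
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = count / total_seconds

    # Nearest-rank 95th percentile of frame time, using integer arithmetic
    # so the rank is not affected by floating-point rounding.
    ordered = sorted(frame_times_ms)
    rank = (95 * count + 99) // 100  # ceil(0.95 * count)
    p95_frame_time_ms = ordered[rank - 1]

    # Convert the slow-frame threshold back to a frame rate.
    p95_fps = 1000.0 / p95_frame_time_ms
    return average_fps, p95_fps


if __name__ == "__main__":
    # Synthetic data: 90 frames at 10 ms and 10 frames at 20 ms.
    sample = [10.0] * 90 + [20.0] * 10
    avg, p95 = summarize_frame_times(sample)
    print(f"Average FPS: {avg:.1f}, 95th percentile FPS: {p95:.1f}")
```

Dividing total frames by total time, rather than averaging per-frame FPS values, stops a handful of very fast frames from inflating the average.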

| AnandTech | Low Res Low Qual | Medium Res Low Qual | High Res Low Qual | Medium Res Max Qual |
| --- | --- | --- | --- | --- |
| Average FPS |  |  |  |  |
| 95th Percentile |  |  |  |  |

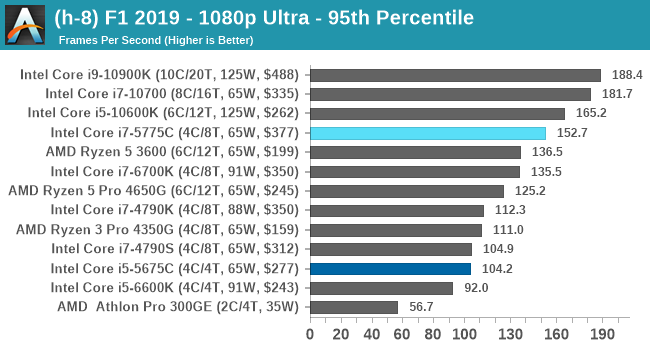

At our 4K Ultra Low settings, the Broadwell parts seem to rule the roost in average frame rates. For the other settings, we again see the Broadwell (BDW) i7 just behind the Comet Lake (CML) i5.

All of our benchmark results can also be found in our benchmark engine, Bench.

120 Comments

realbabilu - Monday, November 2, 2020

That larger cache may need a specifically optimized BLAS.

Kurosaki - Monday, November 2, 2020

Did you mean BIAS?

ballsystemlord - Tuesday, November 3, 2020

BLAS == Basic Linear Algebra Subprograms.

Kamen Rider Blade - Monday, November 2, 2020

I think there is merit to having Off-Die L4 cache.

Imagine the low latency and high bandwidth you can get with shoving some stacks of HBM2 or DDR-5, whichever is more affordable and can better use the bandwidth over whatever link you're providing.

nandnandnand - Monday, November 2, 2020

I'm assuming that Zen 4 will add at least 2-4 GB of L4 cache stacked on the I/O die.

ichaya - Monday, November 2, 2020

Waiting for this to happen... have been since TR1.

nandnandnand - Monday, November 2, 2020

Throw in an RDNA 3 chiplet (in Ryzen 6950X/6900X/whatever) for iGPU and machine learning, and things will get really interesting.

ichaya - Monday, November 2, 2020

Yep.

dotjaz - Saturday, November 7, 2020

That's definitely not happening. You are delusional if you think RDNA3 will appear as iGPU first.

At best we can hope the next I/O die to integrate full VCN/DCN with a few RDNA2 CUs.

dotjaz - Saturday, November 7, 2020

Also doubly delusional if you think RDNA3 is any good for ML. CDNA2 is designed for that.

Adding a powerful iGPU to Ryzen 9 serves literally no purpose. Nobody will be satisfied with that tiny performance. Guaranteed recipe for instant failure.

The only iGPU that would make sense is a mini iGPU in the I/O die for desktop/video decoding OR an iGPU coupled with a low end CPU for a complete entry level gaming SOC aka APU. Chiplet design almost makes no sense for APU as long as GloFo is in play.