Hot Chips 2020: Marvell Details ThunderX3 CPUs - Up to 60 Cores Per Die, 96 Dual-Die in 2021

by Andrei Frumusanu on August 17, 2020 4:30 PM EST- Posted in

- Servers

- CPUs

- Marvell

- Arm

- Enterprise

- Enterprise CPUs

- ThunderX3

The Triton CPU Core - Evolution From Vulcan

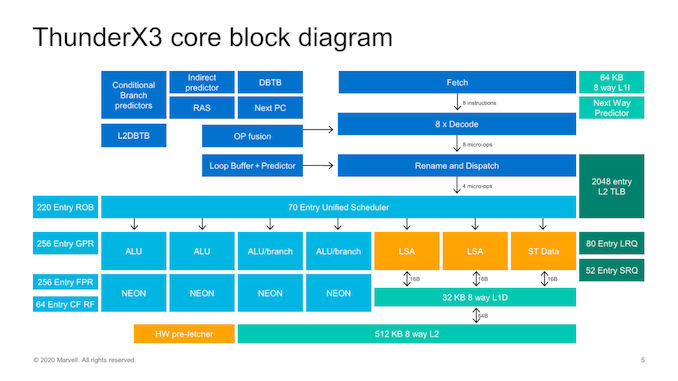

Moving on onto the core level, we see the first disclosures on Marvell’s new Triton CPU microarchitecture. The design is an evolution of the ThunderX2’s Vulcan cores with the company widening a lot of the aspects of the core, both in the front-end and on the back-end.

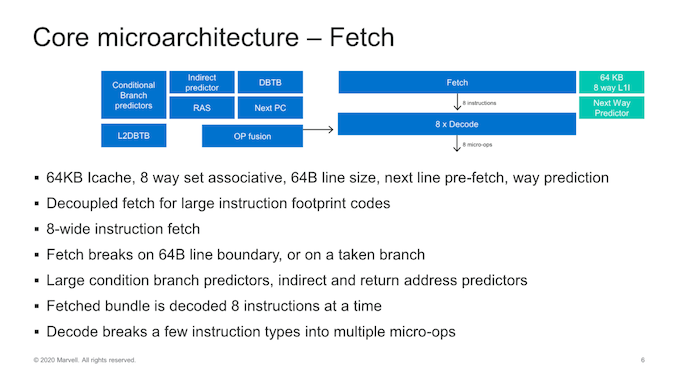

Starting off with the front-end side of the core, we see some very significant changes as we’ve almost seen a literal doubling of most structures and bandwidth in the core. The instruction cache has been doubled up from 32KB to 64KB, which now feeds into an 8-wife fetch unit, also double the previous generation.

Much like Arm’s recent microarchitectures, this is a new decoupled fetch unit that allows for better power savings. The decode unit matches the fetch bandwidth at 8 instructions wide – which actually along with the Power10 core from IBM now represents the widest decoders in the industry right now, which is quite surprising.

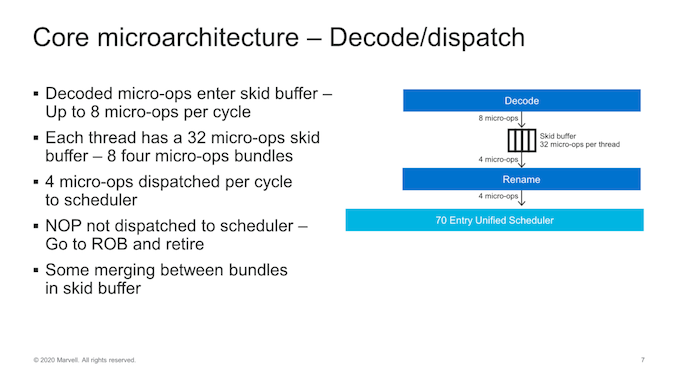

In the mid-core we see the decode unit feed into what Marvell calls a “Skid buffer”, which is essentially a loop buffer, which is segmented into 32 micro-ops per thread, further divided into eight four-wide micro-op bundles. It’s one of the rare structures in the core which is statically partitioned between threads, and it represents the boundary between the front-end and the mid-core of the microarchitecture.

The most interesting and confusing part of the Trition microarchitecture is at this part of the core, as even though the fetch and decode units of the core are 8-wide, micro-ops out of the Skid-buffer and into the rename unit and dispatched to the backend of the core only happens at 4 micro-ops per clock. So what seems to be happening here is that Marvell is taking advantage of a very wide front-end design not to actually feed a large back-end, but rather to better hide pipeline bubbles working in wider “bursts”.

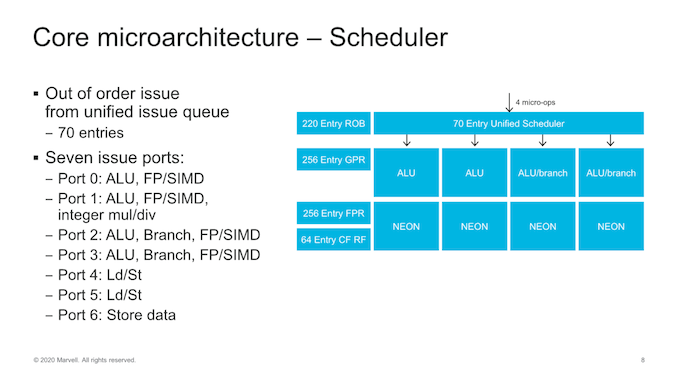

Dispatch into the backend of the core we see continued usage of a global unified scheduler that feeds into 7 execution ports. At the scheduler-level, we’ve seen a slight increase from 60 to 70 entries.

The out-of-order window of the core has increased slightly, such as the re-order buffer (ROB) growing from 180 to 220 entries.

On the execution ports, the big change has been the addition of a fourth execution pipeline capable of ALU instructions and a second branch port, meaning we’re seeing a 33% increase in simple integer ALU execution throughput and a doubling of the branch forwarding of the core. Alongside of these improvements, all four execution pipelines have been expanded with FP/SIMD capabilities which means there’s now a generational doubling of throughput for these instructions, making the Triton core one of the rare 4x128b machines out there.

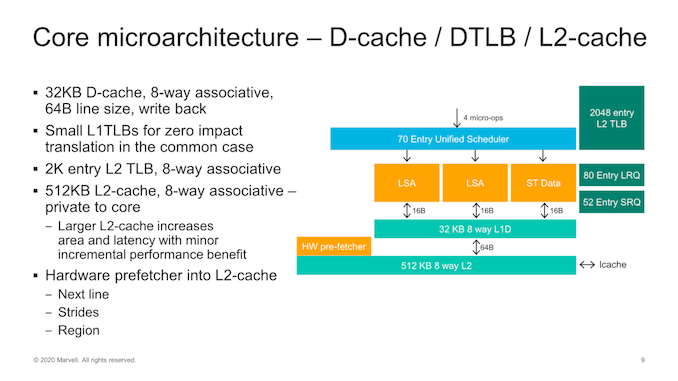

On the memory subsystem part of the core, improvements have been relatively small as we don’t seem to have major high-level changes of the microarchitecture. We still see two load-store units and a store data unit with bandwidths of 16 Bytes/cycle per unit feeding and fetching data from a 32KB L1 data cache. The load and store queues have been increased in their depth and have increased respectively from 64 to 80 entries for loads, and 36 to 48 entries for stores.

The core’s L2 has also doubled from 256KB to 512KB, but Marvell’s wording here on this change is interesting as they say it increases area and latency with only “minor incremental performance benefits”, which sounds quite disappointing in tone. We’ll see in the next slide this means 2.5%.

The hardware prefetchers are quite simplistic, with your traditional next-line, stride, and region-based designs pulling data into the L2.

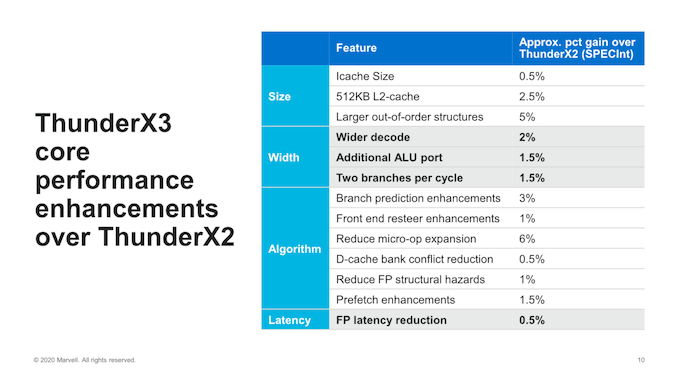

Overall, generational IPC improvements of the new core sum up to 30% in SPECint, and Marvell was generous enough to give us an overview of the new core’s features and how each is accounts for the total improvement:

On the structure side increases of things, the biggest improvements were due to the larger OoO increases in the mid-core which, although the increases weren’t all that big, represent a 5% IPC improvement. This seems a quite good trade-off versus some other doubling of structures such as the L1I and the L2 cache increases which only got a 0.5% and 2.5% benefit.

The front-end’s doubling and wider decode from 4 to 8 only accounted for only 2% improvement in performance which is extremely tame, but is likely bottlenecked given the narrow mid-core dispatch and comparatively narrow execution back-end.

The biggest improvement in IPC was due to reduced micro-op expansion from the decoder – Marvell here stated that they had been too aggressive in this regard on the ThunderX2 Vulcan cores in expanding instructions into multiple micro-ops, so they’ve reduced this significantly, and this probably alleviating the bottleneck on the mid-core and resulting into better back-end utilisation per actual instruction.

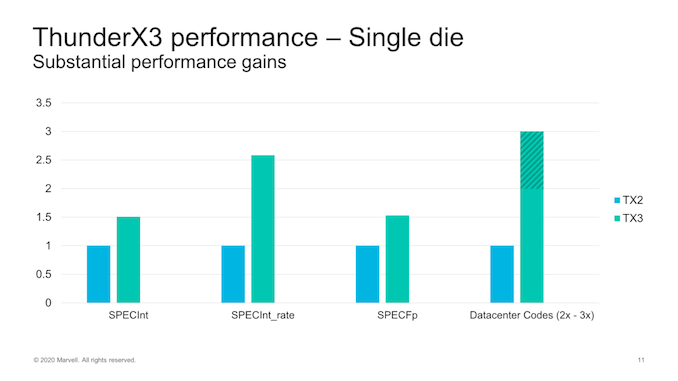

Generational performance improvements accounting for the IPC gains as well as frequency gains, we’re expected to see a 1.5x gain in SPECint. Given our historical numbers on the TX2, by these projections we should thus expect the TX3 to outperform the Graviton2 by around 10%.

SPECrate gains are naturally higher at around 2.5x the performance, thanks to the new design’s higher core count further amplifying the microarchitectural improvements.

27 Comments

View All Comments

Tomatotech - Monday, August 17, 2020 - link

SMT4 is interesting, 60% speedup from 5% area. Makes me think SMT8 might just be worth exploring in the next generation, even if it is only 30% speedup from 10% area.It’s been a while since I studied this, but saying it has 90MB L3 cache might be regarded as misleading. If you try stuffing your 50MB of code and data into cache, it’s going to fall over quite spectacularly. Each 4-core tile has only 6MB cache, so you have to keep your code and data to only 6MB or less if you want it to fit into cache. (Or fiddle around with splitting it between tiles). Better to say it has 15x6MB L3 cache (Still damn good) and leave it at that?

saratoga4 - Monday, August 17, 2020 - link

>Each 4-core tile has only 6MB cacheAccording to the slides linked above the L3 cache lines have no affinity for any specific core, so it really is a single 90MB L3, similar to how Intel's ring bus parts work.

Tomatotech - Monday, August 17, 2020 - link

You have to be careful about the marketing wording. What you said is what they want you to think - that any core can use any cache equally well - but a close reading indicates it could mean just 'any of the 4 cores in a single tile can use any of the 2x3MB caches associated with that tile'. Hence my use of 6MB.Even worse - a single core might not be able to use all of both on-tile caches at the same time, so the real max cache for a core might be as low as 3MB. This chip is designed to be a multi-thread monster with up to 240 threads per die, each thread accessing its own part of cache. Not to have a tiny number of threads using up all the cache. That's a different layout.

saratoga4 - Monday, August 17, 2020 - link

>but a close reading indicates it could mean just 'any of the 4 cores in a single tile can use any of the 2x3MB caches associated with that tile'.The slides say that individual tiles are striped, so I don't think your reading is correct.

Krysto - Tuesday, August 18, 2020 - link

Power10 is already doing SMT8.GreenReaper - Wednesday, August 19, 2020 - link

It talks about the increase in area, but not power usage. Moreover you risk effectively reducing single-threaded speed unless you upgrade every other bit (and probably even then). If you limit them further it starts to look more and more like a GPU.I think we need more evidence that people can use SMT4 effectively, as they have done eventually with Quad-Core and beyond, before it is increased further.

senttoschool - Tuesday, August 18, 2020 - link

Both AMD and Intel are in trouble. The x86 market is shrinking fast.AWS is deadset on transitioning to its own ARM server chips so they can reduce cost, build chips for their own needs, and have unique features. Google Cloud and Microsoft Azure will probably follow shortly in order to compete.

With Apple's transition to ARM, the x86 market shrank by 10% overnight. In addition, MacOS ARM will spur a renewed push for developers to optimize for ARM (Windows or Mac) on the laptop/desktop. This means Windows ARM will probably be an extremely viable option in the near future.

The x86 market isn't dead. But it's shrinking. AMD and Intel aren't just competing against each other, they're also competing with Apple, Qualcomm, Marvell, ARM, Nuvia, Ampere, Samsung, Huawei, Alibaba, etc.

Quantumz0d - Tuesday, August 18, 2020 - link

LOLARM is always stupid custom, Apple trash is the least bothered part for Apple themselves as their Mac share of revenue is under 10%, that's why Apple did the move because instead of paying Intel and getting caught in the cheap VRM trash news they would benefit from the new hybrid OS of Mac which is an abomination to desktop UX. Apple transition shranked x86 marketshare overnight ? hahah, like Apple said themselves it's going to be a 2 year period so the products are still there. By that time you think AMD and Intel both DC centric companies sit and lay eggs ? ARM is dogshit, it's always fucking custom the massive support of x86 in Linux space is not there for ARM so transitioning to that is not possible at this point of time, x86 already is a RISC underneath it, so your yapping of Intel and AMD in trouble ain't true. They sure have to make sure they are innovating, Intel is going Big Little for the mobile space, AMD patented the same and they will go the same, and with massive Windows ecosystem this ARM bullshit failed on RT and x86 to ARM translation is not for free, you get performance penalty. Apple pays top companies like Adobe to make their Software like first party IP, it's not a major market.

AT spec scores out of that graphs are meaningless. When the work done by Snapdragon processors is equivalent to what A series do, just because the OS feels snappy due to tight integration and closed binaries is not a measure but real life work performance.

All those companies, none of them make Datacenter processors except Marvell and with Apple being only consumer centric company what the fuck are you blabbing here ? Datacenter market is owned by x86 not ARM, and Qualcomm left after pouring billions in their Centriq prized custom ARM IP where Cloudflare was heralding just like how again CF is now doing it again with Altera. AWS is going Graviton because it's always Amazon's one of their own type in house stuff to save money and it's not going to beat AMD which is the king of the DC with EPYC 7742. Icelake SP is also coming and Intel already probably locked out vendors from moving to AMD by now forget ARM.

ARM is again, full custom. Qualcomm is the only one which properly respects the OSS by CAF and Binaries, Blobs etc on Android space. Samsung is a failure, Huawei is utter trash in that dept. Nuvia is vaporware until real product and that's only for DC market, not Smartphones or ultra portables, which is where lot of money is there to start up quick. And on Windows it's utter trash and same for Linux, only low level workloads. Name one ARM processor based machine which can run anything like x86 Wintel machines ?

HVAC - Tuesday, August 18, 2020 - link

Utter ... trash ...Greys - Wednesday, August 26, 2020 - link

I am agree with you!