New Uses for Smartphone AI: A Short Commentary on Recording History and Privacy

by Dr. Ian Cutress on September 6, 2019 7:20 AM EST - Posted in

- Smartphones

- AI

- Opinion

This opinion piece is a reaction to recent announcements.

Having just attended the Huawei keynote here at the IFA trade show, I saw a couple of new AI-enabled features presented on stage that made the hair on the back of my neck stand on end. Part of that is simply an impression of how quickly AI in hand-held devices is progressing, but the other part makes me think about how it could be misused.

Let me cover the two features.

"Real-Time Multi-Instance Segmentation"

Firstly, AI detection in photos is not new, and neither is identifying objects. But Huawei showed a use case where several people were playing musical instruments, and the smartphone camera could separate the people from the background, and from each other. This allowed the software to change the background, for example from an indoor scene to an outdoor scene. It also meant that individuals could be deleted, moved, or resized. Compare the title image to this one, where people are deleted and the background moved.

What does this mean? People can be removed from photos. Old lovers can be removed from those holiday photographs. Individuals can easily be removed from (or added to) the historical record. The software would automatically generate the background behind them (if it’s the original background), and people could even be resized. This worked not only on photographs but also on video. The image below shows one person increased in size, but the same edit could just as easily be applied to something significant.
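Huawei did not describe how its pipeline works, so the following is only a minimal sketch of the compositing step, assuming that an instance segmentation model has already produced one boolean mask per person and that a background plate is available (the original background, or the output of some inpainting step). The helper names `remove_instance` and `move_instance` are hypothetical, and the 4x4 frame is a toy example rather than real data.

```python
import numpy as np


def remove_instance(image, mask, background):
    """Return a copy of `image` with the pixels under `mask` taken from `background`."""
    out = image.copy()
    out[mask] = background[mask]
    return out


def move_instance(image, mask, background, dy, dx):
    """Cut the masked instance out, fill the hole from `background`,
    then paste the instance back shifted by (dy, dx), clipping at the frame edge."""
    out = remove_instance(image, mask, background)
    ys, xs = np.nonzero(mask)
    new_ys, new_xs = ys + dy, xs + dx
    h, w = mask.shape
    inside = (new_ys >= 0) & (new_ys < h) & (new_xs >= 0) & (new_xs < w)
    out[new_ys[inside], new_xs[inside]] = image[ys[inside], xs[inside]]
    return out


# Toy 4x4 RGB frame: a 2x2 "person" (value 255) in front of a flat background (value 10).
background = np.full((4, 4, 3), 10, dtype=np.uint8)
frame = background.copy()
person = np.zeros((4, 4), dtype=bool)
person[0:2, 0:2] = True
frame[person] = 255

removed = remove_instance(frame, person, background)          # person gone, background only
moved = move_instance(frame, person, background, dy=2, dx=2)  # person now in the bottom-right corner
```

Resizing would follow the same pattern, rescaling the cut-out before pasting it back. Once a mask and a background exist the edit itself is trivial; producing an accurate mask for each person and synthesizing a plausible background where none was captured is where the AI models would come in.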

Now, I know that these algorithms already exist in photo editing software on a PC, if you know how to use it. I also know that the demo Huawei showed on stage was more a demonstration of what AI on a smartphone can do, but I can imagine something similar coming to smartphones, running on the device itself, and designed to be as easy to use as possible. In future, how we interpret the actions of our past selves (or of others) may have to take into account how accessible, and how easy, it has become to modify images and video.

Detecting Heart Rate with Cameras

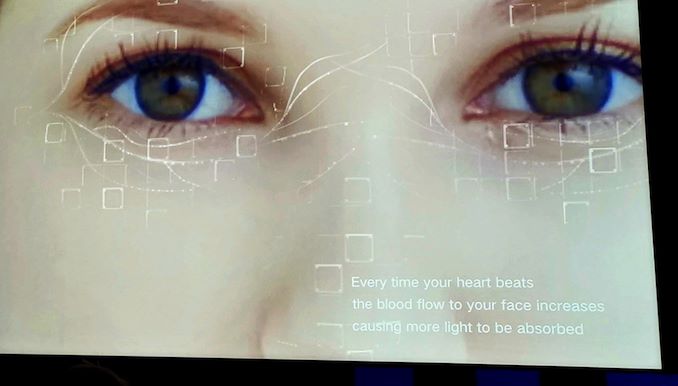

The second feature was related to health and AR. Using a pre-trained algorithm, Huawei showed a smartphone detecting your heart rate using only the front-facing camera (and presumably the rear-facing camera too). It does this by looking at small facial movements between video frames, working on the values it predicts for each pixel to build an overall picture.
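Huawei’s pre-trained model is not public, so this sketch only illustrates the final step that camera-based pulse measurement generally relies on. It assumes some per-frame trace has already been extracted from the face (for example, average motion or brightness of a face region), and takes the dominant frequency within a plausible heart-rate band as the pulse. The function name `estimate_bpm`, the 0.7 to 4.0 Hz band (42 to 240 bpm), and the synthetic 72 bpm test trace are all assumptions for illustration.

```python
import numpy as np


def estimate_bpm(signal, fps, lo_hz=0.7, hi_hz=4.0):
    """Estimate heart rate in beats per minute from a per-frame signal.

    The strongest frequency inside [lo_hz, hi_hz] is taken as the pulse rate.
    """
    x = np.asarray(signal, dtype=float)
    t = np.arange(x.size)
    # Remove slow drift (lighting changes, head movement) with a linear fit.
    slope, intercept = np.polyfit(t, x, 1)
    x = x - (slope * t + intercept)
    # Taper the ends of the window to reduce spectral leakage.
    x = x * np.hanning(x.size)
    spectrum = np.abs(np.fft.rfft(x))
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fps)
    band = (freqs >= lo_hz) & (freqs <= hi_hz)
    peak_hz = freqs[band][np.argmax(spectrum[band])]
    return 60.0 * peak_hz


# Synthetic trace: a 1.2 Hz (72 bpm) pulse plus linear drift and a little noise,
# sampled at 30 frames per second for 10 seconds.
fps = 30
t = np.arange(10 * fps) / fps
rng = np.random.default_rng(0)
trace = 0.5 * np.sin(2 * np.pi * 1.2 * t) + 0.02 * t + 0.05 * rng.standard_normal(t.size)
bpm = estimate_bpm(trace, fps)
```

With a 10-second window at 30 fps the FFT bins are 0.1 Hz apart, which is a resolution of 6 bpm; a real implementation would need longer windows or interpolation around the peak for finer readings, and far more robust signal extraction than a clean synthetic sine.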

Obviously, it isn’t meant to be used as a diagnostic tool (at least, I hope not). I could imagine similar technology being used with IP cameras in a home security system, perhaps, so that when it detects an elderly relative in distress, it can take the appropriate action. But it lends itself to abuse if you can use it on other people without their knowledge. Does that constitute an invasion of privacy? Does it work through the 10x zoom on some of these smartphones? I’m not sure I’m qualified to answer those questions.

A big part of me wants to see technology move forward, with development and progression from generation to generation. But seeing these two features today, there’s a tiny part of me that doesn’t sit right, unless the correct safeguards are in place: for example, edited images and videos carrying a signature marker, or only people pre-registered on a smartphone being able to have their heart rate measured. Hopefully my initial fears aren't as serious as they first appear.
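As a rough illustration of the "signature marker" idea, here is a minimal sketch using Python’s standard `hmac` and `hashlib` modules. It assumes a hypothetical secret key held by the phone (`DEVICE_KEY`) and a marker format invented for this example. Note that it swaps in an HMAC, which anyone verifying must share the secret key for; a real provenance scheme would more likely use public-key signatures so that anyone can verify without being able to forge.

```python
import hashlib
import hmac
import json

# Assumption: a per-device secret key; this value is purely hypothetical.
DEVICE_KEY = b"hypothetical-per-device-secret"


def make_marker(image_bytes, edited, key=DEVICE_KEY):
    """Record whether the image was edited, bound to its exact bytes by an HMAC tag."""
    meta = {"edited": edited, "sha256": hashlib.sha256(image_bytes).hexdigest()}
    payload = json.dumps(meta, sort_keys=True).encode()
    meta["tag"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return meta


def check_marker(image_bytes, marker, key=DEVICE_KEY):
    """Return True only if neither the image bytes nor the marker fields were altered."""
    meta = {k: v for k, v in marker.items() if k != "tag"}
    if meta.get("sha256") != hashlib.sha256(image_bytes).hexdigest():
        return False  # the pixels changed after the marker was written
    payload = json.dumps(meta, sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, marker.get("tag", ""))


photo = b"...placeholder for edited JPEG bytes..."
marker = make_marker(photo, edited=True)
ok = check_marker(photo, marker)                           # True: untouched since marking
tampered = check_marker(photo + b"x", marker)              # False: image bytes changed
forged = check_marker(photo, {**marker, "edited": False})  # False: "edited" flag flipped
```

The weak point of any such scheme is that it only helps if the marker survives: stripping the metadata entirely leaves an unmarked file, so viewers would have to treat a missing marker with suspicion rather than as proof of an untouched original.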

25 Comments


abufrejoval - Friday, September 6, 2019

I could not agree more! Where AI in a smartphone could be used to do really nice things (my personal favorite is to have Downton Abbey's Carson live in my smartphone and manage all those IoT things I own), the main actors in the market only want to use AI compute to offload their spying and will bending onto the devices you own and charge, while governments, domestic or foreign--and plenty of other crooks for that matter--want to use it to ensure you're behaving like a proper citizen and pay the dues they believe should belong to them.

I don't want Alexa nor a Bundestrojaner on my AI servant. I keep thinking that in old Rome a merchant selling servants that remain loyal to him and not their new master would have been accorded the most gruesome death imaginable: A clean sweep lion would have been considered completely inadequate!

And I fail to understand how any decent nation on Earth could permit a vendor to lock down a very personal computer and make it act against the vested interests of its owner.

Oxford Guy - Tuesday, September 10, 2019

"And I fail to understand how any decent nation on Earth could permit a vendor to lock down a very personal computer and make it act against the vested interests of its owner." You must be joking. See the Snowden "Happy Dance" slide.

It would be absolutely unimaginable for governments not to force corporations to embed spyware into products like CPUs and operating systems. When people can cheat they do, especially when they can pretend it's for the greater good.

r3loaded - Friday, September 6, 2019

It's Huawei, the hair on the back of your neck should be standing on end regardless. Both the company and its founder have very close ties to the Communist Party and the PLA; their innovations are undoubtedly being used in military or mass surveillance applications.

brucethemoose - Friday, September 6, 2019

That's not a big deal if you live outside China though. Ironically, buying "foreign made" is probably best wherever you live, as your local government doesn't have the jurisdiction to squeeze the smartphone manufacturer. As far as personal privacy is concerned, I'd be more worried about my employer buying data from Google or Facebook than Huawei or the PLA.

PeachNCream - Friday, September 6, 2019

I sort of agree with you on this. I'm a lot more concerned about what Facebook and Google are collecting without my consent. Google/Alphabet especially has its tendrils spread out all over the place via a plethora of deployed devices and services that make the company's data gathering a challenge to evade. Like take Anandtech for instance. It uses elements of Google's code provided via Google servers. Did anyone at one of these little, understaffed sites actually review the free candy they're getting from the windowless van Alphabet has parked down by the river?

sheh - Friday, September 6, 2019

It's not just Google and Facebook. This site also includes:

Twitter

Cloudfront

Parsely

Quantserve

Scorecardresearch

Diji1 - Friday, September 13, 2019

As far as I'm aware, Cloudfront has nothing to do with controlling its users via advertising and therefore shouldn't be on this list.

Diji1 - Friday, September 13, 2019

I would take you seriously except you're ignoring the much greater threat from your own Government while you concentrate on the boogieman that you learnt about from Government propaganda.

Carmen00 - Friday, September 6, 2019

Before newspapers and such, one would have to see something or have it communicated by a trusted party who had seen it. Then we grew to trust the written word, until the era of dominating yellow journalism, clickbait nonsense, and too-obvious propaganda. More recently, the safest way has been "pics or it didn't happen". And now we come to a situation where even if one sees the video or hears the voice, it still might not have happened. With advances like these, things have come full circle! Will the next generation have to be taught that unless one sees it oneself, or it is communicated by a trusted party, it cannot be trusted?

mkozakewich - Saturday, September 7, 2019

Trusted party is the worst. There were times where someone told me something, and I accepted it because they obviously thought it was true, but then it turned out they hadn't seen something correctly or missed some sarcasm when hearing what someone was saying. At least when I see a stupid Facebook headline with a doctored image I can say, "Oh, I know that's probably faked."