Innogrit Debuts With Four NVMe SSD Controllers

by Billy Tallis on August 1, 2019 8:30 AM EST

A new SSD controller designer is coming out of stealth mode today. Innogrit was founded in 2016 by storage industry veterans with the goal of developing storage technology to support AI and big data applications. We spoke with co-founder Dr. Zining Wu (formerly Marvell's CTO) about the company's planned product lineup, and he will be presenting more information next week in a keynote speech at Flash Memory Summit.

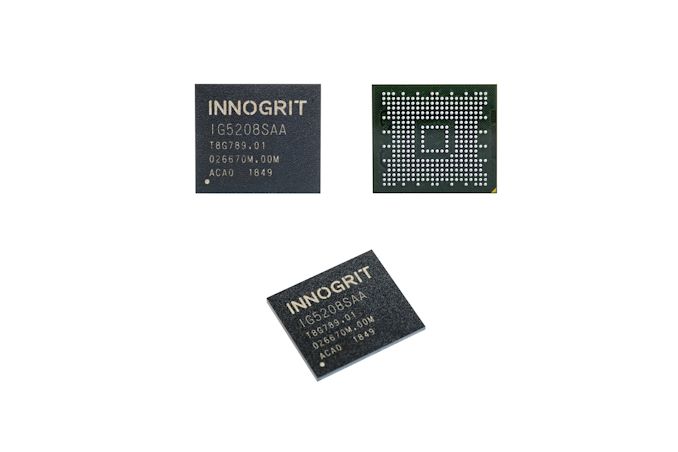

Innogrit's long-term goal is to go after the enterprise storage market, but they are starting small with a DRAMless client SSD controller, the IG5208 "Shasta". This is already in mass production with full turnkey reference SSD designs available. It will be followed by incrementally larger controllers with more advanced feature sets: Shasta+, Rainier and Tacoma. With each iteration, Innogrit is increasing performance, adding more features and improving their LDPC error correction engine.

Innogrit NVMe SSD Controller Roadmap

| Controller | Shasta | Shasta+ | Rainier | Tacoma |
|---|---|---|---|---|
| Model Number | IG5208 | IG5216 | IG5236 | IG5668 |
| Host Interface | PCIe 3 x2 | PCIe 3 x4 | PCIe 4 x4 | PCIe 4 x4 |
| Protocol | NVMe 1.3 | NVMe 1.3 | NVMe 1.4 | NVMe 1.4 |
| NAND Channels | 4 | 4 | 8 | 16 |
| Max Capacity | 2 TB | 2 TB | 16 TB | 32 TB |
| DRAM Support | No (HMB supported) | No (HMB supported) | DDR3/4, LPDDR3/4, 32/16-bit bus | DDR3/4, LPDDR3/4, 72-bit bus |
| Manufacturing Process | 28nm | 28nm | 16/12nm | 16/12nm |
| BGA Package Size | 10x9mm, 7x10mm | 7x11mm, 10x10mm | 15x15mm | 17x17mm |
| Sequential Read | 1750 MB/s | 3.2 GB/s | 7 GB/s | 7 GB/s |
| Sequential Write | 1500 MB/s | 2.5 GB/s | 6.1 GB/s | 6.1 GB/s |
| 4KB Random Read | 250k IOPS | 500k IOPS | 1M IOPS | 1.5M IOPS |
| 4KB Random Write | 200k IOPS | 350k IOPS | 800k IOPS | 1M IOPS |
| Market Segment | Client | Client | High-end Client, Datacenter | Datacenter, Enterprise |
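Random IOPS ratings are easier to compare with the sequential figures once they are converted into throughput. The short sketch below does that arithmetic for the random read row of the roadmap table, assuming that "4KB" means 4096-byte transfers and reporting decimal MB/s; the function and constant names are illustrative only.

```python
# Rated 4KB random-read IOPS, copied from the roadmap table.
RANDOM_READ_IOPS = {
    "Shasta (IG5208)": 250_000,
    "Shasta+ (IG5216)": 500_000,
    "Rainier (IG5236)": 1_000_000,
    "Tacoma (IG5668)": 1_500_000,
}

# Assumption: a "4KB" transfer is 4096 bytes.
BLOCK_BYTES = 4096


def iops_to_mb_per_s(iops: int, block_bytes: int = BLOCK_BYTES) -> float:
    """Convert an IOPS rating into throughput in decimal MB/s."""
    return iops * block_bytes / 1_000_000


if __name__ == "__main__":
    for name, iops in RANDOM_READ_IOPS.items():
        print(f"{name}: {iops_to_mb_per_s(iops):,.0f} MB/s")
```

Under that assumption, Tacoma's 1.5M IOPS works out to roughly 6.1 GB/s of 4KB reads, not far below its 7 GB/s sequential rating, while Shasta's 250k IOPS is about 1 GB/s.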

The Shasta and Shasta+ controllers are both primarily targeting the client SSD market, and they are designed as low-cost mainstream solutions. Shasta has just two PCIe 3 lanes while Shasta+ has four lanes and consequently higher performance, but otherwise they are quite similar. Both are 28nm designs and use the NVMe Host Memory Buffer feature rather than including DRAM controllers. Both controllers are small enough to be packaged inside single-chip BGA SSDs, and Innogrit's reference designs for Shasta-based SSDs include the standard 11.5x13mm and 16x20mm BGA SSD footprints and a CFX card design. The improved ECC capabilities of Shasta+ will make it a better choice for QLC-based SSDs, but both controllers support the full range of SLC through QLC from multiple manufacturers.
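A rough sizing exercise shows why a DRAMless controller leans on the Host Memory Buffer. The sketch below assumes a flat page-level logical-to-physical mapping table with one 4-byte entry per 4 KiB logical page (a common rule of thumb that works out to about 1 GB of map per TB of flash) and a hypothetical 64 MiB HMB allocation. Innogrit has not disclosed its FTL design or the HMB size its controllers request, so both numbers are assumptions.

```python
# Hypothetical sizing model for a page-level FTL mapping table.
# Assumptions (not Innogrit figures): one 4-byte entry per 4 KiB logical
# page, and a 64 MiB Host Memory Buffer granted by the host.
ENTRY_BYTES = 4
LOGICAL_PAGE_BYTES = 4096
HMB_BYTES = 64 * 1024 * 1024


def full_map_bytes(capacity_bytes: int) -> int:
    """Bytes needed to hold the entire logical-to-physical map in memory."""
    pages = capacity_bytes // LOGICAL_PAGE_BYTES
    return pages * ENTRY_BYTES


def lba_span_cached(hmb_bytes: int = HMB_BYTES) -> int:
    """Bytes of user data whose mappings fit in the HMB at one time."""
    entries = hmb_bytes // ENTRY_BYTES
    return entries * LOGICAL_PAGE_BYTES


if __name__ == "__main__":
    capacity = 2 * 10**12  # Shasta's 2 TB maximum capacity
    print(f"Full map for 2 TB: {full_map_bytes(capacity) / 1e9:.2f} GB")
    print(f"Data covered by a 64 MiB HMB: {lba_span_cached() / 2**30:.0f} GiB")
```

With these assumptions a 2 TB drive needs about 1.95 GB for its full map, while a 64 MiB HMB holds mappings for only about 64 GiB of the drive at once, so the controller presumably caches the hot part of the map in host memory and fetches the rest from flash as needed.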

Because Shasta and Shasta+ are stepping stones toward the enterprise and datacenter markets, they include support for some features not commonly found on client SSDs, such as an Open-Channel SSD operating mode. End-to-end data path protection is included, with ECC on all the controller's SRAM buffers and on data stored in the Host Memory Buffer. Power management appropriate for client and embedded use is supported, with Shasta peaking at 0.9W and supporting idle states at 55mW and less than 1mW, while Shasta+ will peak at about 1.35W. The NVMe Boot Partition feature is also supported for embedded systems that don't include a separate boot ROM device.
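Innogrit has not described how it protects data parked in the Host Memory Buffer, but the general idea of attaching a check value to a chunk before it leaves the controller and verifying it when it comes back can be sketched in a few lines. This sketch swaps in CRC-32 (Python's `zlib.crc32`) purely for illustration: a CRC detects corruption but cannot repair it, unlike the ECC the controller is said to use. The names `protect`, `verify` and `HmbIntegrityError` are hypothetical.

```python
import zlib


class HmbIntegrityError(Exception):
    """Raised when data read back from host memory fails its check."""


def protect(chunk: bytes) -> bytes:
    """Append a CRC-32 check value before the chunk is written to host memory."""
    crc = zlib.crc32(chunk)
    return chunk + crc.to_bytes(4, "little")


def verify(stored: bytes) -> bytes:
    """Strip and check the CRC-32 when the chunk is fetched back."""
    chunk, tag = stored[:-4], stored[-4:]
    if zlib.crc32(chunk) != int.from_bytes(tag, "little"):
        raise HmbIntegrityError("HMB data corrupted in host memory")
    return chunk


if __name__ == "__main__":
    stored = protect(b"mapping table segment")
    assert verify(stored) == b"mapping table segment"

    corrupted = bytearray(stored)
    corrupted[0] ^= 0x01  # flip one bit, as a host memory error might
    try:
        verify(bytes(corrupted))
    except HmbIntegrityError as err:
        print("detected:", err)
```

CRC-32 is guaranteed to catch any single flipped bit, which is why the corrupted copy above is rejected; a real controller would use a correcting code so that it could recover the data instead of just refusing it.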

Innogrit's Rainier controller is a significant generational advance over the Shasta family, moving up to the high-end client and entry-level datacenter markets. Rainier switches to one of TSMC's 16/12nm FinFET processes, which Innogrit (and most other controller designers) sees as necessary for PCIe gen4 support with reasonable power consumption. Rainier has 8 NAND channels that can run at up to 1200MT/s, fast enough for any currently available NAND. This allows for sequential read and write speeds of up to 7GB/s and 6.1GB/s respectively, more or less saturating the PCIe 4 x4 interface. Rainier adds enterprise-oriented features like multiple namespace support and SR-IOV virtualization, but client-oriented power management is still supported, with idle states at 50mW and below 2mW.
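The claim that 7GB/s more or less saturates PCIe 4 x4 can be checked with back-of-envelope arithmetic. The sketch below computes each host link's bandwidth after 128b/130b line encoding (it ignores packet and protocol overhead, which lowers the practical ceiling further) and Rainier's aggregate NAND interface bandwidth, assuming 8-bit-wide NAND channels that move one byte per transfer. The function names are illustrative.

```python
# PCIe 3.0 and 4.0 both use 128b/130b line encoding; per-lane signaling
# rates are 8 GT/s and 16 GT/s respectively.
GT_PER_S = {3: 8.0, 4: 16.0}
ENCODING_EFFICIENCY = 128 / 130


def pcie_link_gb_per_s(gen: int, lanes: int) -> float:
    """Link bandwidth in GB/s after line encoding, before protocol overhead."""
    return GT_PER_S[gen] * ENCODING_EFFICIENCY * lanes / 8  # 8 bits per byte


def nand_aggregate_gb_per_s(channels: int, mt_per_s: int) -> float:
    """Aggregate NAND interface bandwidth, assuming 8-bit-wide channels."""
    return channels * mt_per_s / 1000  # one byte per transfer


if __name__ == "__main__":
    for name, gen, lanes, seq_read in [
        ("Shasta", 3, 2, 1.75),
        ("Shasta+", 3, 4, 3.2),
        ("Rainier", 4, 4, 7.0),
    ]:
        link = pcie_link_gb_per_s(gen, lanes)
        print(f"{name}: link {link:.2f} GB/s, rated read {seq_read} GB/s "
              f"({seq_read / link:.0%} of link)")
    print(f"Rainier NAND side: {nand_aggregate_gb_per_s(8, 1200):.1f} GB/s")
```

By this estimate Rainier's 7GB/s is about 89% of the roughly 7.9GB/s PCIe 4 x4 link, while eight channels at 1200MT/s offer about 9.6GB/s on the flash side, so the host interface rather than the NAND bus sets the ceiling for sequential reads.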

The most powerful controller on Innogrit's roadmap is Tacoma, which builds on Rainier by doubling the NAND channel count to 16 (bringing the maximum supported capacity up to 32TB), widening the DRAM interface to 72 bits (64 data bits plus 8 ECC bits), and adding more high-end enterprise features. Sequential IO performance will be roughly the same as for Rainier but random IO gets a boost from the extra parallelism. The virtualization capabilities have been enhanced relative to Rainier and the NVMe Controller Memory Buffer feature is supported, which comes in handy for NVMe over Fabrics deployments. A special low-latency mode is introduced, which Innogrit will be demonstrating with Toshiba's XL-FLASH (their answer to Samsung's Z-NAND). Perhaps the most important feature of Tacoma is the addition of in-storage compute with a deep learning accelerator; more information about this will be shared next week during Innogrit's keynote presentation at Flash Memory Summit.
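A 72-bit memory interface carries 64 data bits plus 8 check bits per transfer, the same width used by conventional ECC DRAM. A common code for that width is an extended Hamming SECDED code, which corrects any single flipped bit and detects any two. The sketch below is a textbook (72,64) SECDED encoder and decoder for illustration only; Innogrit has not said which code Tacoma's memory controller uses, and hardware computes these parities with XOR trees rather than loops.

```python
"""Textbook extended Hamming SECDED code: 64 data bits -> 72-bit codeword."""

PARITY_POSITIONS = [1, 2, 4, 8, 16, 32, 64]
# Positions 1..71 that are not powers of two hold the 64 data bits.
DATA_POSITIONS = [p for p in range(1, 72) if p not in PARITY_POSITIONS]


def encode(data: int) -> int:
    """Encode a 64-bit integer into a 72-bit codeword (bit 0 = overall parity)."""
    if not 0 <= data < 1 << 64:
        raise ValueError("data must fit in 64 bits")
    word = 0
    for i, pos in enumerate(DATA_POSITIONS):
        if (data >> i) & 1:
            word |= 1 << pos
    # Parity bit p makes the count of set bits at positions with bit p set even.
    for p in PARITY_POSITIONS:
        parity = 0
        for pos in range(1, 72):
            if pos & p and (word >> pos) & 1:
                parity ^= 1
        word |= parity << p
    # Overall parity over bits 1..71, stored in bit 0, enables double-error detection.
    word |= bin(word).count("1") & 1
    return word


def decode(word: int) -> tuple[int, str]:
    """Return (data, status); status is 'ok', 'corrected' or 'uncorrectable'."""
    syndrome = 0
    for pos in range(1, 72):
        if (word >> pos) & 1:
            syndrome ^= pos  # XOR of set positions is 0 for a valid codeword
    overall = bin(word).count("1") & 1  # 1 means an odd number of flips
    if syndrome == 0 and overall == 0:
        status = "ok"
    elif overall == 1:
        word ^= 1 << syndrome  # single error at `syndrome` (0 = parity bit)
        status = "corrected"
    else:
        return 0, "uncorrectable"  # two flips: detected but not fixable
    data = 0
    for i, pos in enumerate(DATA_POSITIONS):
        if (word >> pos) & 1:
            data |= 1 << i
    return data, status


if __name__ == "__main__":
    cw = encode(0x0123_4567_89AB_CDEF)
    print(decode(cw))                          # clean read
    print(decode(cw ^ (1 << 37)))              # one flipped bit is corrected
    print(decode(cw ^ (1 << 5) ^ (1 << 50)))   # two flips are only detected
```

The 7 Hamming parity bits locate a single error and the eighth (overall parity) bit distinguishes one error from two, which is exactly why 64 data bits need 8 extra bits and the bus grows to 72.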

Innogrit's business model will be similar to most other independent SSD controller vendors, offering SSD vendors a range of options from a basic SDK for custom firmware up to full turnkey SSD designs. They have several design wins with the Shasta family controllers and are already sampling the Rainier and Tacoma controllers.

Source: Innogrit

5 Comments


29a - Thursday, August 1, 2019

> NVMe Host Memory Buffer

I wonder why OSs don't include something like this by default for all storage devices.

Billy Tallis - Thursday, August 1, 2019 - link

Graphics cards have had this kind of capability for a long time, but storage devices traditionally didn't need much working memory aside from buffers for user data, and putting those buffers in host memory doesn't accomplish anything. Flash-based SSDs are the first storage devices to need a non-trivial amount of scratch memory for their own internal metadata, so it's no surprise that the HMB feature originates with a storage protocol designed around SSDs rather than hard drives.

ksec - Thursday, August 1, 2019

When can we expect this? 7 GB/s Seq Read Write!

Santoval - Friday, August 2, 2019

Rainier and Tacoma are targeted at the HEDT and enterprise market, so (along with their 16+TB capacity) SSDs sporting them will cost an arm, a leg, a kidney and half a liver. These SSDs will not be in an M.2 format either, so even if you robbed a bank or inherited a small fortune from a rich uncle you had no idea existed (a wet dream, surely) you couldn't pair them with your brand new Ryzen 30xx CPU. You would need to plug them into a server, unless perhaps there was an adapter you could use. SSDs with Rainier might also be available in an M.2 version, but not SSDs with Tacoma. Tacoma is in a class of its own :)

ballsystemlord - Saturday, August 3, 2019

I think he meant, "When can we expect consumer drives to be able to handle 7GB/s Seq Read/Write?"

Ignoring the fact that even someone as crazy as me would not need that rate of sequential transfers (even an ISO image of Linux is 4GB!), and would rather have higher random I/O speed improvements, the answer is "probably in 4 years". That's assuming we get those speeds at all, because, like I said, we have no use for them.