Amazon AWS Offers Another AMD EPYC-Powered Instance: T3a

by Anton Shilov on May 1, 2019 1:00 PM EST

Amazon Web Services has further expanded its usage of AMD EPYC-based machines for its Elastic Compute Cloud (EC2) instances. Last week the company started to offer its new EPYC-powered T3a instances, which enable customers to balance their instance mix based on cost and the amount of throughput they require at a given moment.

AWS’s T3a instances offer burstable performance and are intended for workloads that have low sustained throughput needs, but experience temporary spikes in usage. Amazon says that users of T3a get an assured baseline amount of processing power and can scale it up “to full core performance” when they need more for as long as necessary.
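The article does not describe how the baseline and bursting interact. AWS documents its burstable instances in terms of CPU credits, and the Python sketch below models that idea as a simple credit bucket: credits accrue at a rate set by the baseline and are spent in proportion to actual utilization. It is a rough illustration, not AWS's actual accounting; the function name `simulate_credits` and the baseline, vCPU count, and cap values are illustrative assumptions rather than published T3a figures.

```python
def simulate_credits(utilization, baseline=0.20, vcpus=2, start=0.0, cap=576.0):
    """Track a CPU-credit balance hour by hour.

    utilization: average CPU utilization per vCPU for each hour (0.0 to 1.0).
    One credit is taken to mean one vCPU running at 100% for one minute.
    All default values are illustrative assumptions, not T3a specifications.
    """
    earn_per_hour = baseline * vcpus * 60   # credits earned every hour
    balance = start
    history = []
    for u in utilization:
        spent = u * vcpus * 60              # credits consumed this hour
        # The balance is capped on the way up; a negative balance stands for
        # bursting beyond the accrued credits (billed or throttled, depending
        # on how the instance is configured).
        balance = min(cap, balance + earn_per_hour - spent)
        history.append(round(balance, 2))
    return history


# Four quiet hours at 5% utilization, then two hours at full load.
history = simulate_credits([0.05] * 4 + [1.0] * 2)
print(history)  # [18.0, 36.0, 54.0, 72.0, -24.0, -120.0]
```

In this toy run the instance banks credits while idle and exhausts them partway through the first hour at full load, which is the point at which a sustained spike starts to cost extra or slow down.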

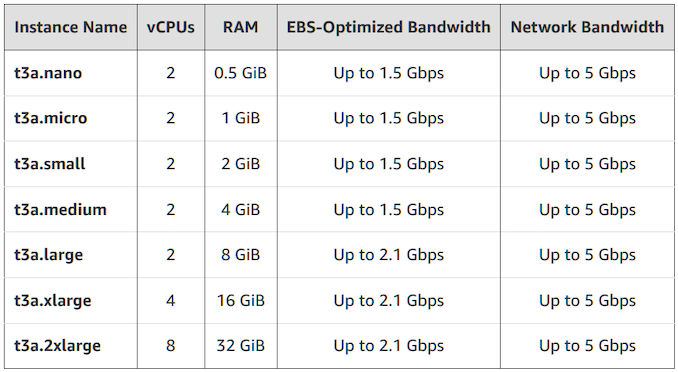

T3a instances are offered in seven sizes in the US East (N. Virginia), US West (Oregon), Europe (Ireland), US East (Ohio), and Asia Pacific (Singapore) Regions in On-Demand, Spot, and Reserved Instance form. The specifications look as follows:

This is Amazon’s third announcement of AMD EPYC-powered instances. Previously AWS started to offer M5a, R5a, M5ad, and R5ad instances based on AMD’s latest server processors.


Source: Amazon Web Services

18 Comments


Drumsticks - Wednesday, May 1, 2019 - link

Is there a cost comparison with a similar Intel based machine offered by AWS? Surely they're paying less for EPYC processors per core - is that translating in any way to consumers of AWS?

Marlin1975 - Wednesday, May 1, 2019 - link

Processor cost can be very small compared to the whole system. Memory usually costs more than the CPUs now, depending on the configuration.

nathanddrews - Wednesday, May 1, 2019 - link

I would also assume that AWS is still like 90% Intel-based, so the cost is probably equalized across the entire cloud. Like Marlin1975 said, bandwidth/memory are likely the primary cost driver.

dolphin2x - Thursday, May 2, 2019 - link

Not if the AMD offering leverages a "P" single socket SKU, compared to a "similar intel based machine". That's the sweet spot with EPYC stuff right now.

rahvin - Thursday, May 2, 2019 - link

That would depend entirely on whether AMD is giving tray discounts to Amazon and the other cloud providers to favor higher density.

Based on my understanding of cloud economics, the cloud companies are going to want to maximize density, so they are going to want the 2P systems only, as the rack and facility costs (racks, cooling, building, etc.) can be a huge factor in overall costs. Cloud providers are deeply concerned with total costs, including infrastructure costs, and they look at how much compute they get out of each square foot and kWh at the data center level. They also have the purchasing power to give them a strong negotiating position for tray costs.

Unless Intel is giving tray discounts to the cloud providers to hold off competition, AMD has a significant cost advantage right now for similar power/density requirements, though not as large an advantage as it could be because they're limited to 2P where Xeon can do 4P.

AMD's going to get even better cost per sq ft/kWh when Rome comes out. At that point a 2P (dual-CPU) 128-core Rome system would provide the equivalent processing to two 4P Platinum Xeon systems, which would mean almost a twofold increase in density and somewhere around half the power. I'd wager AMD's penetration into the cloud side will accelerate significantly when that happens if Intel doesn't fight back on costs.

Keep in mind Intel has a history of playing dirty, so they'll likely start offering significant tray discounts to level the playing field. That will be good for everyone, as those discounts should trickle down the stack as Intel loses market share to Rome.

Strong competition from AMD is good for everyone. CPU prices, especially on the server side, are way higher than historical prices due to the lack of competition. It boggles my mind that a Platinum-level Xeon can cost upwards of $12,000 and they are projecting as high as $16k for the top-end 10nm server chip when it lands.

PurpleTangent - Friday, May 3, 2019 - link

Great write-up.

One thing I'd like to add is that you're only taking into account 2P/4P configurations and not nodes per rack unit. Lots of data centers are moving towards 2U4N configurations, with 2P per node when dealing with high compute/RAM needs.

Similar to this product: https://www.servethehome.com/gigabyte-h261-z61-ser...

Honestly, it's insane that they can pack 256 cores worth of CPU into a single 2U server.
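The 256-core figure follows from simple multiplication if each socket holds a 32-core EPYC part. That core count is an assumption here, since the comment does not name a SKU. A minimal Python sketch of the arithmetic, with hypothetical helper names:

```python
def cores_per_chassis(nodes, sockets_per_node, cores_per_socket):
    """Total physical cores in one multi-node chassis."""
    return nodes * sockets_per_node * cores_per_socket


def cores_per_rack_unit(total_cores, rack_units):
    """Core density expressed per rack unit (U)."""
    return total_cores / rack_units


# 2U4N chassis, 2P per node, assumed 32 cores per socket.
total = cores_per_chassis(nodes=4, sockets_per_node=2, cores_per_socket=32)
print(total)                          # 256
print(cores_per_rack_unit(total, 2))  # 128.0
```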

wumpus - Tuesday, May 7, 2019 - link

"Keep in mind Intel has a history of playing dirty"

Many of Intel's dirty tricks involve subsidizing chips where AMD is competitive and raising prices where it is not. Right now, that is largely the i9 series and the Pentium/celery <4 core jobs. This will almost certainly get worse for Intel after the Zen 2 release.

Intel's other favorite dirty trick is demanding exclusive use of their chips in return for significant discounts. Right now it doesn't appear that they can supply the chips to anybody for such an exclusive agreement (maybe Apple gets enough). Also, they have already had to cough up a billion dollars to AMD (and AMD only took it because they needed the money quickly; they were relying on Bulldozer sales). I'd expect a second round would be even more expensive (AMD could wait for a jury to decide).

I'm not saying Intel will never be back to their old tricks, but don't expect them to have the most effective ones available until 2020-2021 (and don't be too surprised if Snowy/Sunny Cove leapfrogs them back over AMD).

wumpus - Tuesday, May 7, 2019 - link

Edit/postscript: One thing already mentioned about servers is the cost of the memory. Intel appears to have an advantage with exclusive use of 3D XPoint memory (in DDR4 configuration), but that appears to all but require custom programming of enterprise software, which has to be developed and tested to enterprise quality before AMD gets their hands on it. (That said, I've also been told that plenty of database work can be greatly improved by simply changing many memcopy commands to point to DDR-Optane.)

Remember that Micron and Intel split up on bad terms, and I doubt Micron is all that interested in holding back DDR4 3D XPoint from AMD (or ARM). They should have the right to make the stuff this year or the next.

DanNeely - Wednesday, May 1, 2019 - link

For Linux/US East (Ohio), hourly pricing appears to be about 10% less for T3a vs T3 (Intel). Other locations/software configurations may have different pricing.

https://aws.amazon.com/ec2/pricing/on-demand/
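To put a discount of roughly 10% in monthly terms, a small Python sketch follows. The two hourly rates are placeholders chosen to differ by 10%, not actual AWS prices, and the helper names are hypothetical; substitute the figures from the pricing page for a real comparison.

```python
HOURS_PER_MONTH = 730  # common approximation: 8,760 hours per year / 12


def percent_saving(reference_rate, candidate_rate):
    """Saving of candidate vs. reference, as a percentage of the reference."""
    return (reference_rate - candidate_rate) / reference_rate * 100


def monthly_saving(reference_rate, candidate_rate, hours=HOURS_PER_MONTH):
    """Absolute saving for an instance that runs the whole month."""
    return (reference_rate - candidate_rate) * hours


# Placeholder hourly rates in USD (assumptions, not real prices).
t3_rate = 0.0400
t3a_rate = 0.0360

print(round(percent_saving(t3_rate, t3a_rate), 1))   # 10.0
print(round(monthly_saving(t3_rate, t3a_rate), 2))   # 2.92
```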

kepstin - Wednesday, May 1, 2019 - link

Amazon's guidance is that the T3a instances have lower clocks than the T3 and don't offer the same max performance (but you need to benchmark your own use cases...), so with the price savings over the T3 instances, the T3a instances seem to work as intermediate steps between the existing T3 instance sizes.