The NVIDIA GPU Tech Conference 2019 Keynote Live Blog (Starts at 2pm PT/21:00 UTC)

by Ryan Smith on March 18, 2019 2:00 PM EST

04:43PM EDT - Alright, we're finally seated for the keynote

04:43PM EDT - Kicking off a very busy week for tech events in California, my first stop for the week is NVIDIA's annual GPU Technology Conference in San Jose.

04:44PM EDT - As always, CEO Jensen Huang will be kicking off the show proper with a 2-hour keynote, no doubt making some new product announcements and setting the pace for the company for the next year.

04:44PM EDT - The biggest question on everyone's minds is what NVIDIA plans to do for 7nm, as that process node is quickly maturing.

04:44PM EDT - Hopefully we'll find out the answer to that and more, so be sure to check in at 2pm Pacific to see what's next for NVIDIA.

04:47PM EDT - This year's keynote has seen a change of venue

04:47PM EDT - GTC itself is still at the San Jose Convention Center

04:48PM EDT - But the keynote is out at the nearby San Jose State University, in one of their auditoriums

04:49PM EDT - I haven't heard anything official, but with the show projected to draw 8000 attendees, NVIDIA may have reached the practical limit for the hall they've been using for the keynote

04:49PM EDT - At any rate, the Wi-Fi is a lot better. The lack of tables, less so

04:50PM EDT - The press is seated, and the attendees are filtering in

04:53PM EDT - NVIDIA for its part is coming off of an odd year. The company got hit hard by the crypto collapse, and while 2018 was still a profitable year, it didn't end as high-flying as it started

04:55PM EDT - On the datacenter side of matters, which is arguably NVIDIA's most lucrative business in terms of profit these days, Tesla V100 is turning 2, and the deep learning space is rife with competition

04:58PM EDT - But at the same time, it may be just a bit early for a big 7nm DL training GPU

04:59PM EDT - Meanwhile the consumer sector is still in the middle of the Turing launch

04:59PM EDT - And Tegra moves at its own pace

04:59PM EDT - So the floor is more or less wide open for what may or may not be announced

04:59PM EDT - The only constant in life is, after all, Jensen's leather jacket

05:00PM EDT - Normally these things start a few minutes late. This will probably be similar

05:02PM EDT - Meanwhile this keynote is officially scheduled to run for 2 hours. They have a tendency to go closer to 2:15 or 2:20, depending on how quickly the speakers talk

05:02PM EDT - This keynote is also a bit later in the day than in previous years. Normally it's at 10am, but in this case it starts at 2pm local time, or a nice, late 9pm (and later) for Europe

05:04PM EDT - Tangential to GTC, there are some other GPU and gaming-related announcements coming out this week, as GDC is going on next door in San Francisco

05:04PM EDT - And the lights have gone dark. Here we go

05:05PM EDT - Rolling a video. "I am AI"

05:05PM EDT - I feel like NVIDIA has done this theme before, though with different material

05:06PM EDT - The video is basically recapping all the cool things deep learning is being used for

05:07PM EDT - Also, I forgot to include my co-host for the afternoon. Billy Tallis is on photos. Thanks, Billy!

05:07PM EDT - Now on stage: Jensen Huang

05:08PM EDT - Thanking everyone for coming out to SJSU, since the old hall was so packed

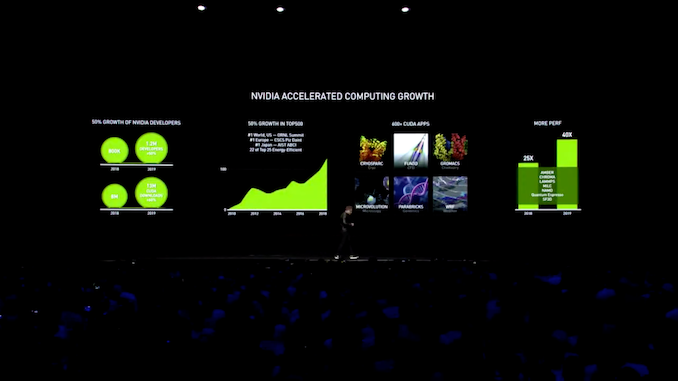

05:09PM EDT - Getting started right away, Jensen is recapping the growth of NVIDIA, its customers, and its technologies

05:09PM EDT - More developers, more customers, more supercomputers

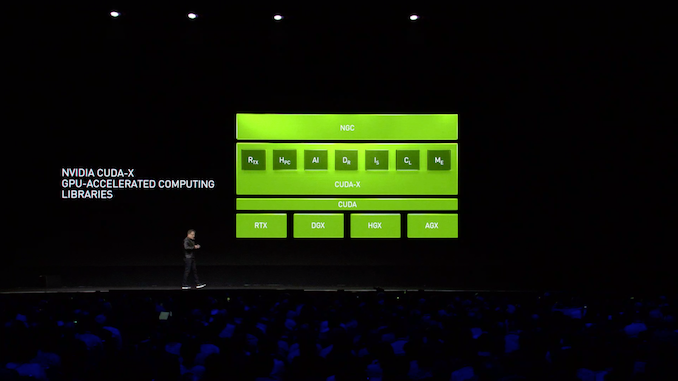

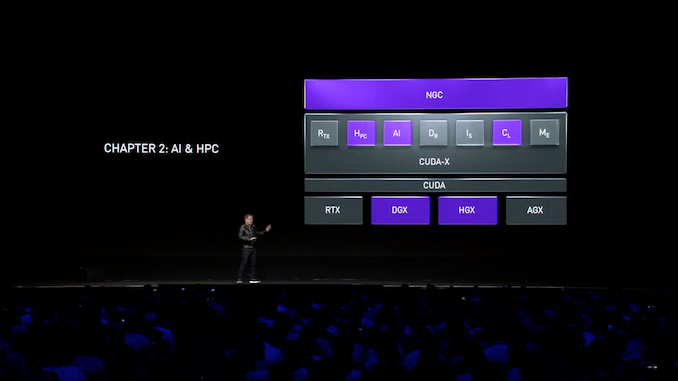

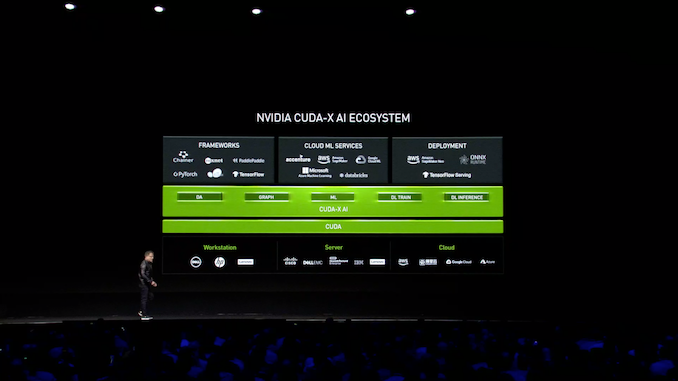

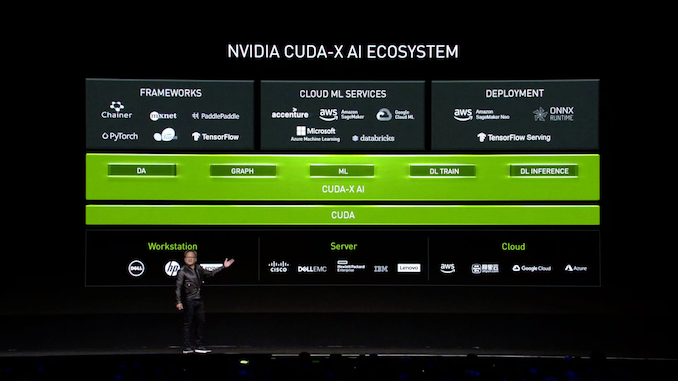

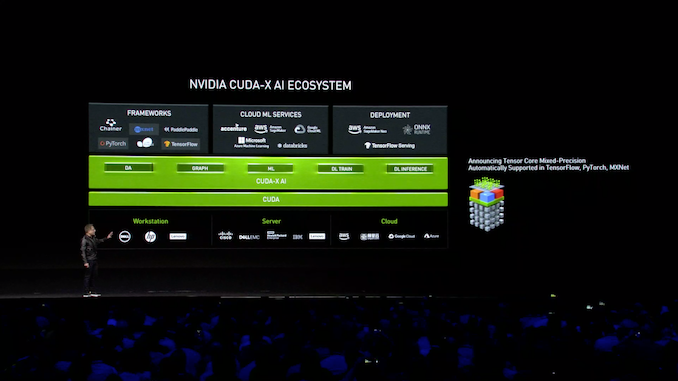

05:11PM EDT - Now on to the concept of the NVIDIA Stack

05:11PM EDT - All of NVIDIA's libraries are going to be bundled into a single brand: CUDA-X

05:12PM EDT - Not to be confused with CUDA 10, CUDA-X is just the collection of NVIDIA's GPU-accelerated computing libraries

05:12PM EDT - cuDNN, GameWorks, etc

05:13PM EDT - This is part of NVIDIA's ongoing efforts to make their software container-friendly, to make it more accessible to developers

05:13PM EDT - (We are being promised a pop quiz at the end)

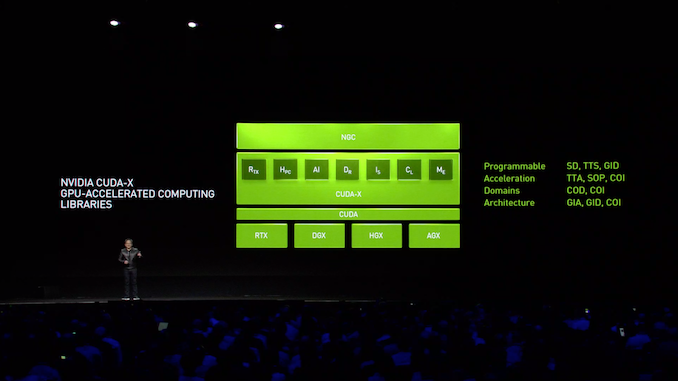

05:15PM EDT - Today's magic word: software-defined acceleration approach

05:16PM EDT - There is a certain irony in NVIDIA talking up high-level libraries at the same time as game programming is moving low-level

05:18PM EDT - Jensen is essentially arguing that ecosystems are key to making GPU computing successful: having these libraries, having so many CUDA-capable GPUs out there, etc

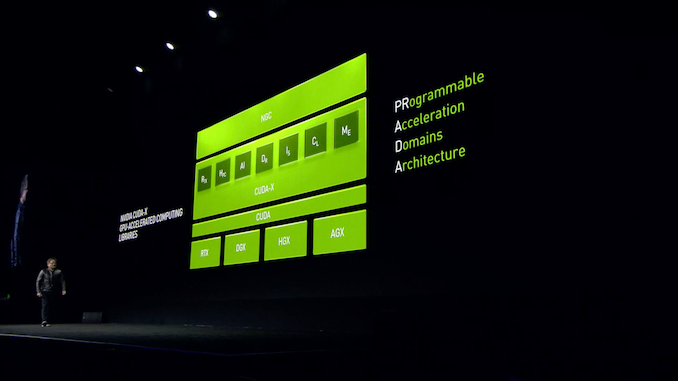

05:19PM EDT - Jensen's official word of the day: PRADA. Programmable Acceleration of multiple Domains with one Architecture

05:19PM EDT - (Does that make Jensen the devil?)

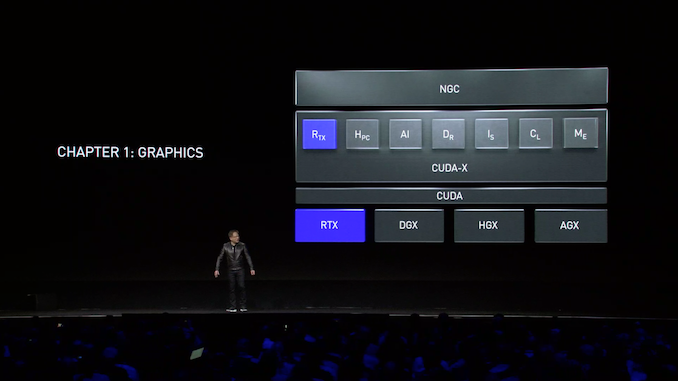

05:20PM EDT - 3 chapters for today. Starting with computer graphics

05:20PM EDT - Rolling a video

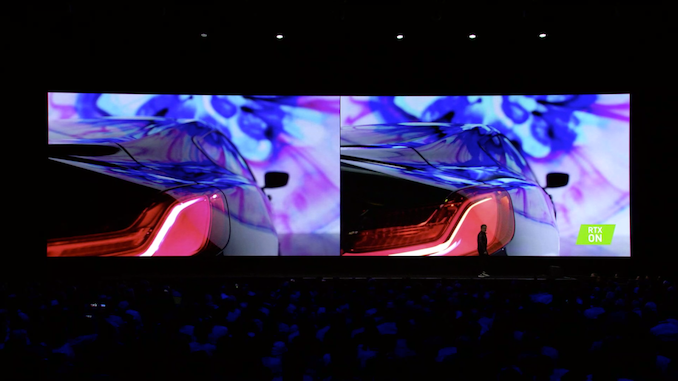

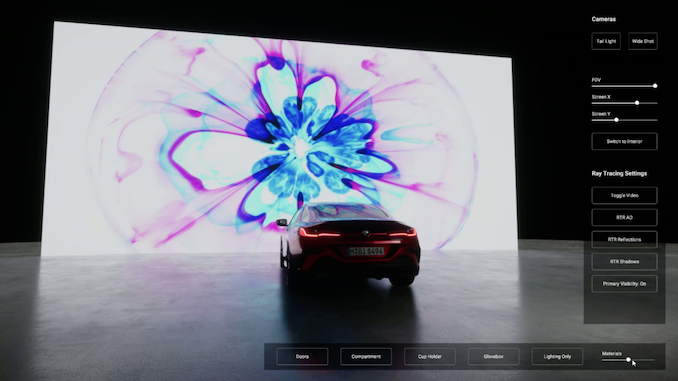

05:22PM EDT - A BMW car ad was just played

05:22PM EDT - Jensen is playing with the audience to see which parts are real and which parts are rendered

05:23PM EDT - Static shots look good. In-motion shots tend to betray the CGI

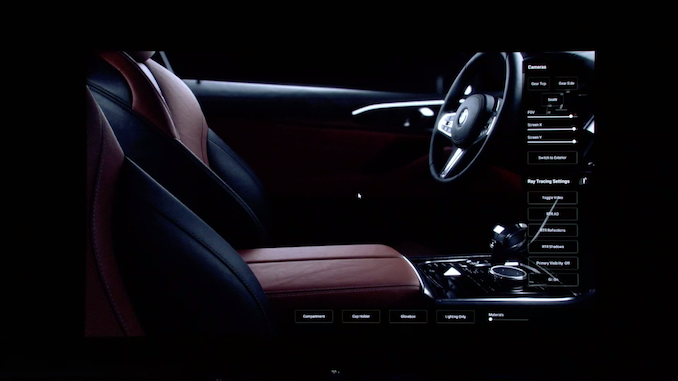

05:24PM EDT - Now showing parts of the video running on Unity in real time

05:26PM EDT - Real-time doesn't offer quite enough spatial resolution. But it still looks impressive

05:26PM EDT - Experimental Unity package is coming April 4th

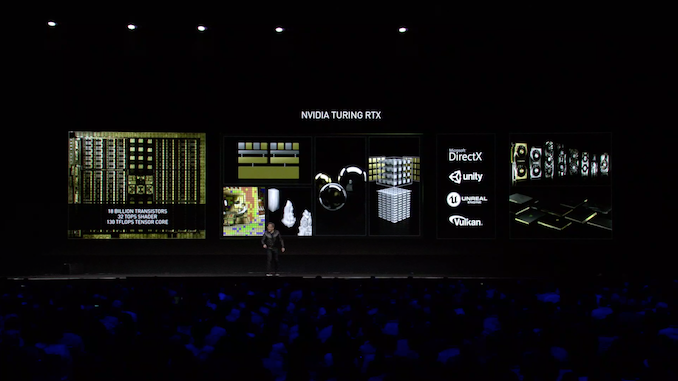

05:26PM EDT - Now recapping Turing architecture

05:27PM EDT - RT cores, tensor cores, dedicated INT32 ALUs, variable rate shading, etc

05:27PM EDT - "Render stuff"

05:30PM EDT - Also talking about the importance of game engines. The hardware is only as useful as the software's ability to use those new features

05:30PM EDT - Unreal Engine 4.22 will incorporate DXR support

05:31PM EDT - "This year we have more notebooks than ever"

05:31PM EDT - 40 new laptops announced thus far that include a Turing-based video adapter

05:32PM EDT - Rolling another video. A Nexon game using UE 4.22

05:33PM EDT - "Dragon Hound"

05:34PM EDT - All DXR/RTX accelerated, of course

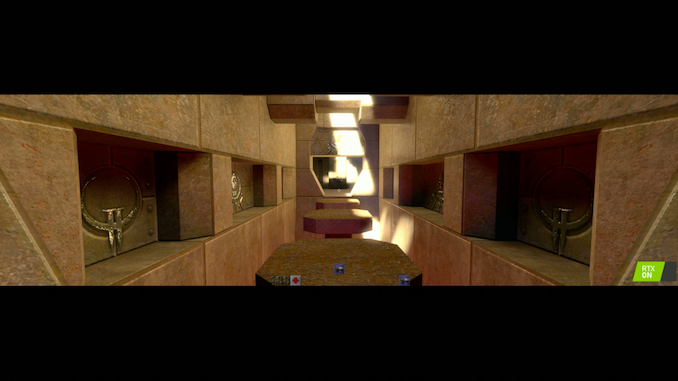

05:35PM EDT - Another video. This time of good ole' Quake 2

05:36PM EDT - And now a version using ray tracing

05:37PM EDT - Also implemented physically-based materials

05:39PM EDT - NVIDIA will be finishing it off and contributing it back to the Quake 2 engine source code

05:40PM EDT - (Intel has also been doing id Software-related ray tracing demos. But those involved ray tracing the entire game)

05:41PM EDT - Overall, DXR/RTX support is slated to come to a number of tools this year

05:41PM EDT - Over 80% of "leading" tool makers have adopted RTX

05:42PM EDT - Rolling another video

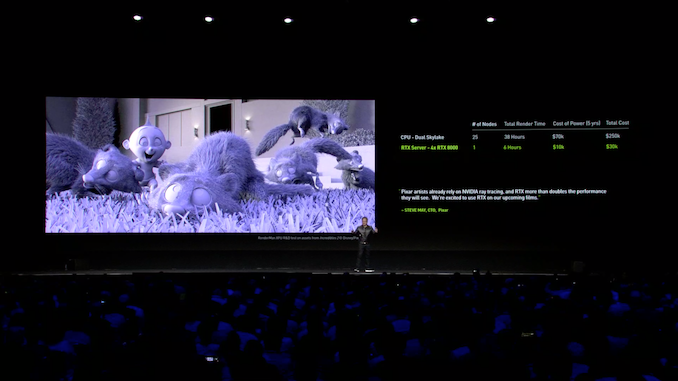

05:44PM EDT - Jensen is once again talking up the benefits of GPU rendering versus CPU rendering in the pro space

05:45PM EDT - Also touting the power savings (per unit of work)

05:47PM EDT - (My chair is ever so slightly shaking. And it's not me. Very small earthquake)

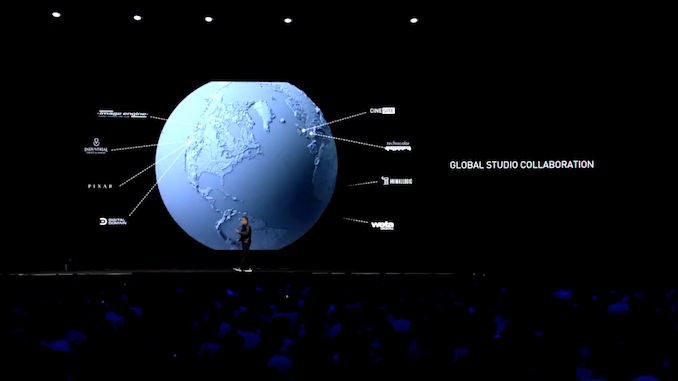

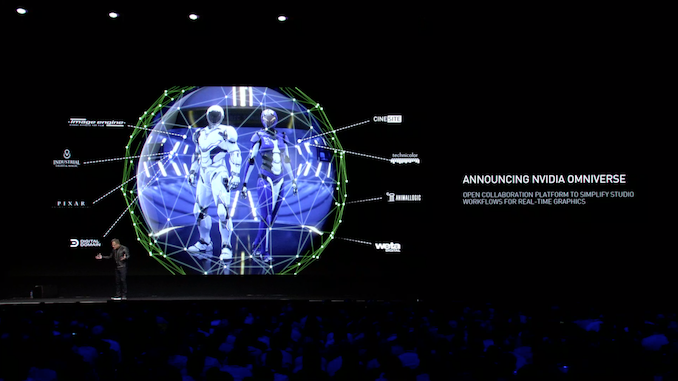

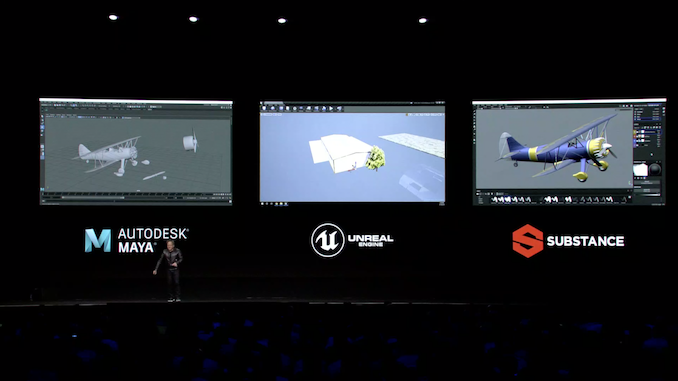

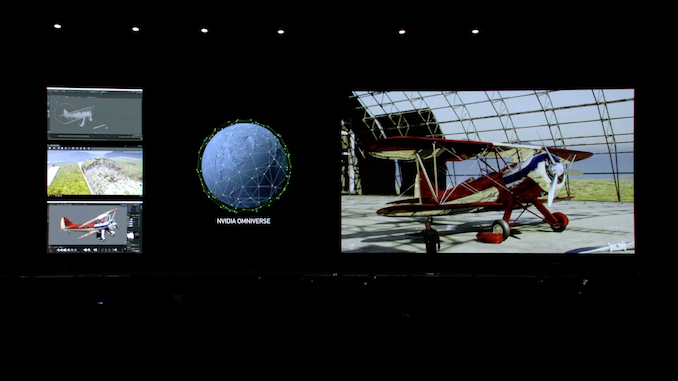

05:49PM EDT - Now tangential to graphics: allowing post-production studios to collaborate across the globe

05:49PM EDT - Announcing NVIDIA Omniverse

05:50PM EDT - An open collaboration platform for multiple artists working in a single workflow

05:52PM EDT - Supports tools from multiple vendors. Simultaneously

05:53PM EDT - So different workstations are all making changes to the geometry, textures, and background at the same time, with the final product rendered in real time

05:54PM EDT - NVIDIA is currently looking for interested customers for early access

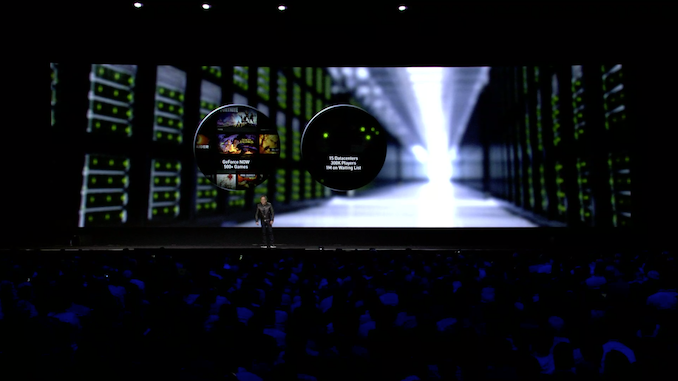

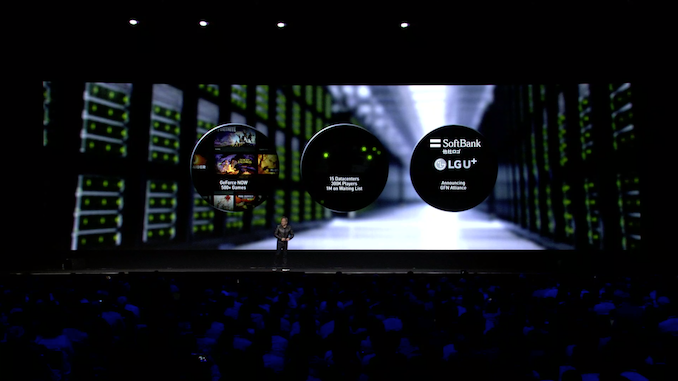

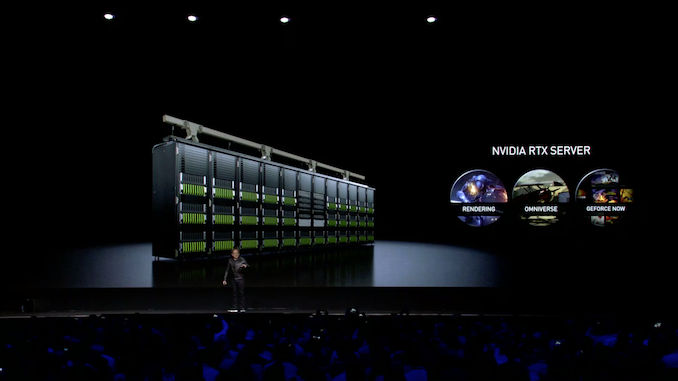

05:54PM EDT - Now on to GeForce NOW, NVIDIA's game streaming service

05:55PM EDT - Recapping the current features and design goals of GeForce NOW

05:56PM EDT - NVIDIA is basically selling a high performance server instance for remote gaming

05:58PM EDT - GeForce NOW Alliance: Creating a server architecture and software stack to supply to telcos to set up at the edge of their networks to provide game streaming services

05:58PM EDT - The first two partners: SoftBank Japan and LG U+ in South Korea

05:59PM EDT - Alliance partners provide server instances for GeForce NOW

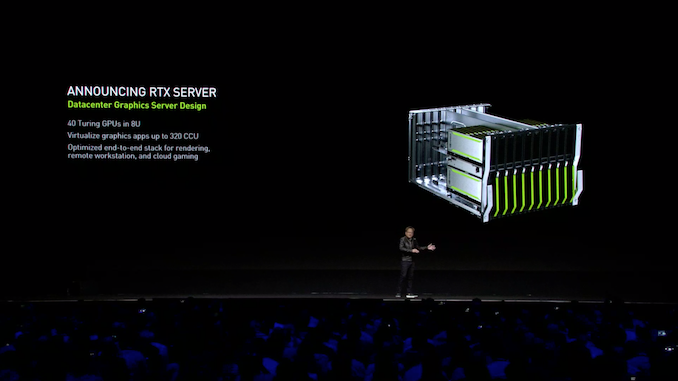

05:59PM EDT - The server NVIDIA will be using for this is the new RTX Server

05:59PM EDT - A datacenter-class graphics server

05:59PM EDT - 8U with 49 Turing GPUs

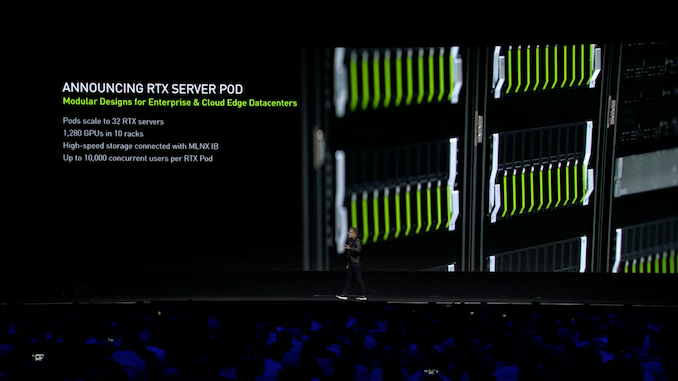

06:00PM EDT - It also comes in a bigger configuration, the RTX Server Pod, which scales up to 32 servers

06:00PM EDT - 1 pod can serve up to 10K users

06:00PM EDT - That's 40 GPUs per RTX Server, not 49, sorry about that

06:01PM EDT - Pods are interconnected using InfiniBand (Mellanox, of course)

06:03PM EDT - Rolling another NVIDIA video. I'm not quite sure what this has to do with anything

06:04PM EDT - It's another one of NVIDIA's exosuit videos

06:05PM EDT - A chance for NVIDIA's creative arts and engineering teams to do something fun, it looks like

06:05PM EDT - On to chapter 2: AI and HPC

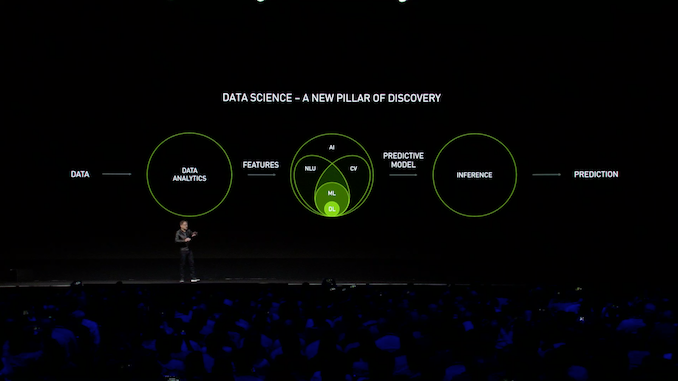

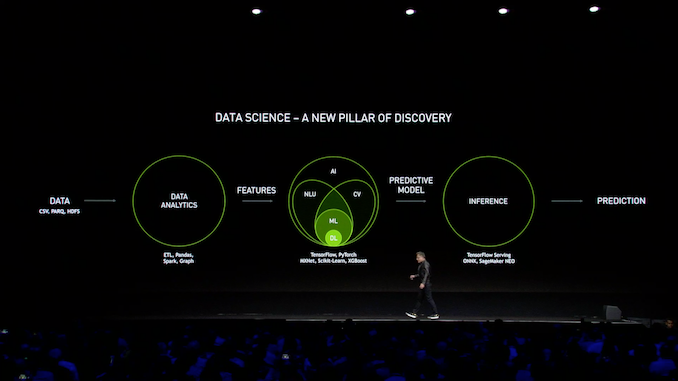

06:06PM EDT - "Data Science is the fastest-growing field of Computer Science today"

06:09PM EDT - All about learning from data and making predictions from it

06:09PM EDT - i.e. AI

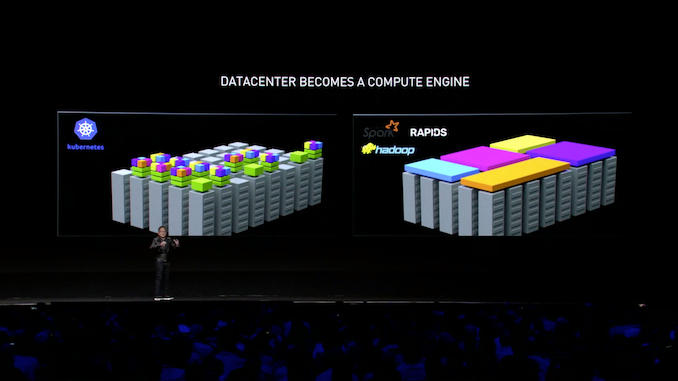

06:11PM EDT - Recapping the field of data science and all of the tools for machine learning, from Hadoop to Spark to TensorFlow

06:12PM EDT - And NVIDIA has libraries for all of these steps and tools

06:13PM EDT - Jensen notes that rattling off all those names is exhausting. He's not wrong

06:14PM EDT - Also recapping the current ecosystem and how hardware and software fit together

06:15PM EDT - Announcement: support for automatic mixed precision has been added to TensorFlow, PyTorch, and MXNet

06:16PM EDT - So a free speedup. "You do nothing"

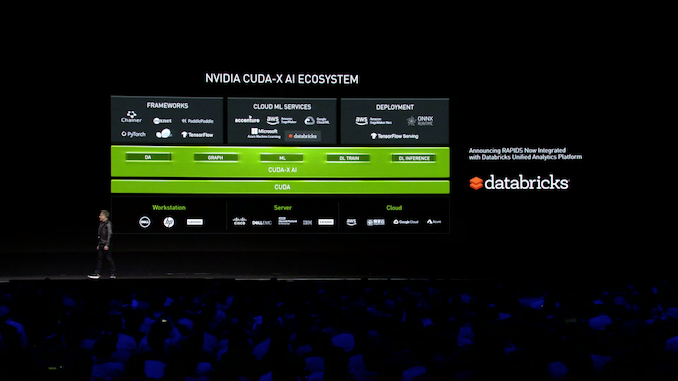

06:16PM EDT - NVIDIA RAPIDS is now integrated with Databricks' analytics platform

06:16PM EDT - Google Cloud and Microsoft Azure are also adding RAPIDS

06:17PM EDT - TensorRT has also been integrated into Microsoft's ONNX Runtime

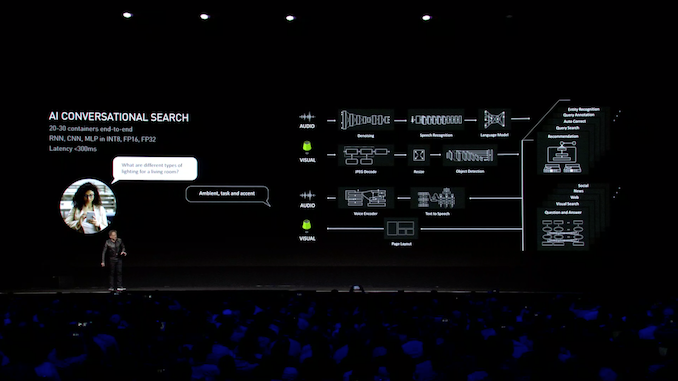

06:21PM EDT - The future is AI containers talking to other AI containers

06:22PM EDT - Showing off an example of the concept with AI conversational search

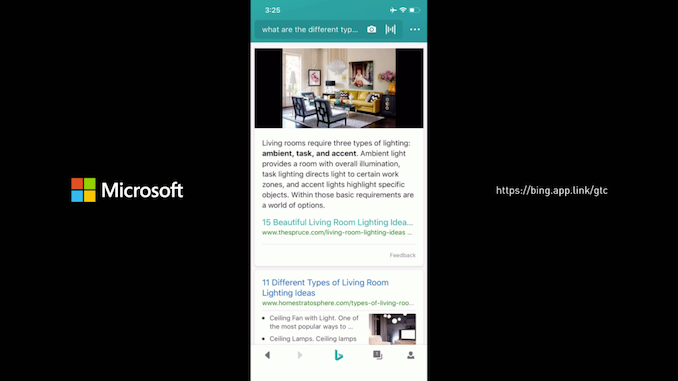

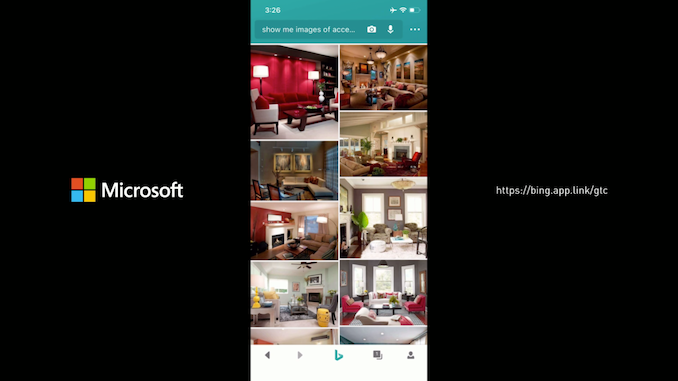

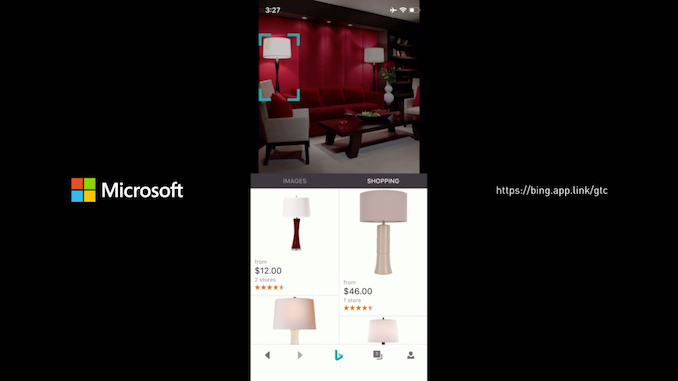

06:23PM EDT - Demo time with the Bing app

06:24PM EDT - Showing Bing doing voice recognition, and smartly searching for an answer

06:26PM EDT - "It's a very consistent experience"

06:28PM EDT - Demo end

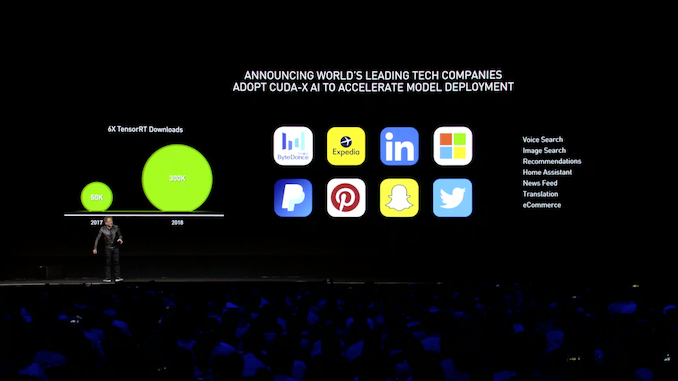

06:29PM EDT - Now on to talking about all of the services that are now using NVIDIA GPUs for acceleration, thanks to NVIDIA's libraries

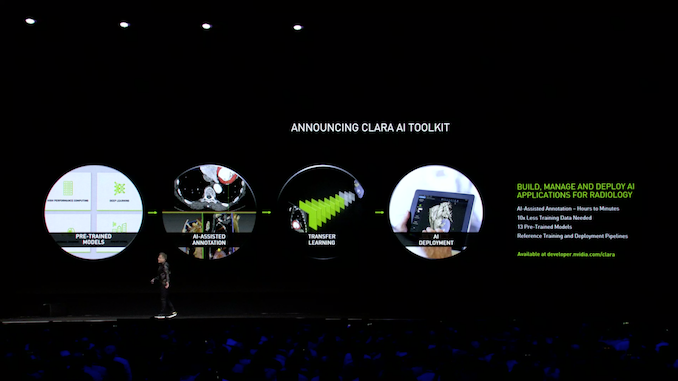

06:31PM EDT - NVIDIA is rolling out a new AI toolkit, which they are calling Clara

06:31PM EDT - The cornerstone of which is a series of pre-trained models

06:32PM EDT - NVIDIA’s Clara is an open, scalable computing platform that enables developers to build and deploy medical imaging applications into hybrid (embedded, on-premise, or cloud) computing environments to create intelligent instruments and automated healthcare workflows.

06:34PM EDT - Talking about more use cases for ML and all the talks at the show this week about the subject

06:35PM EDT - Demo time again

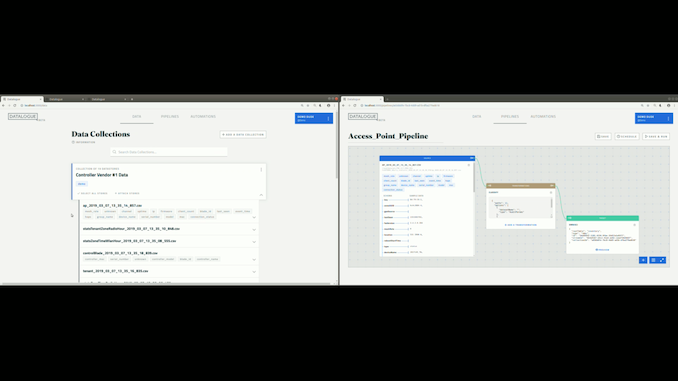

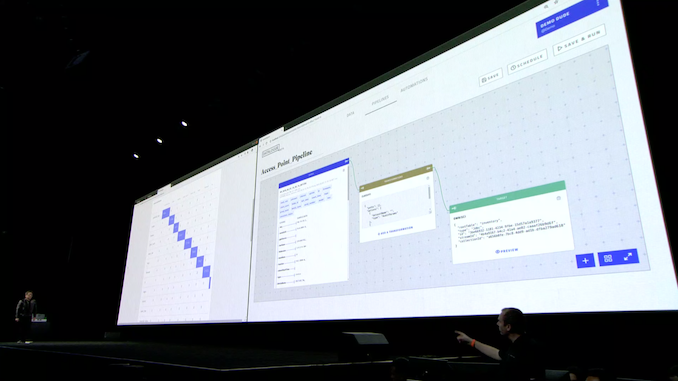

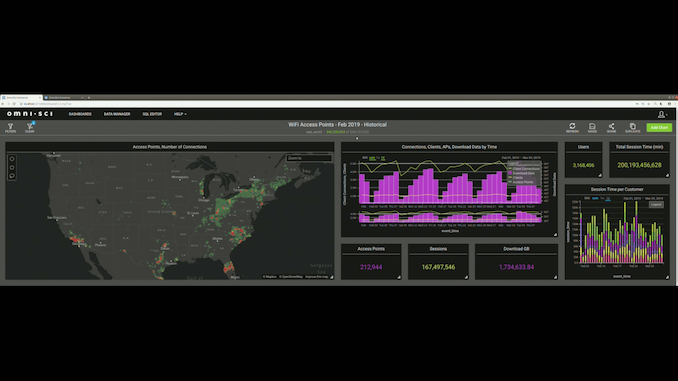

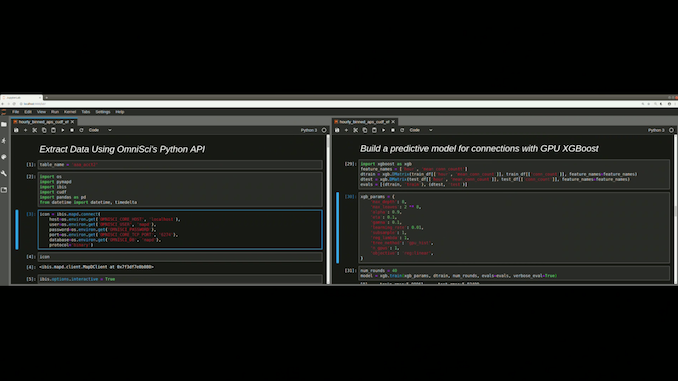

06:36PM EDT - Using Datalogue's tools to figure out where best to put Wi-Fi access points

06:38PM EDT - Datalogue's tools use AI to transform raw data into something more useful

06:40PM EDT - "it would take days to do this by hand"

06:41PM EDT - This is a genuinely interesting demo. But it's just about impossible to explain what's going on via live blog

06:44PM EDT - (we're over 1.5 hours in, and we're still on Chapter 2)

06:45PM EDT - And we've reached the final goal: a timeline of predicted Wi-Fi access point usage across Ohio

06:47PM EDT - And we've reached the end of that demo

06:48PM EDT - NVIDIA's Deep Learning Institute taught 100K data scientists last year alone

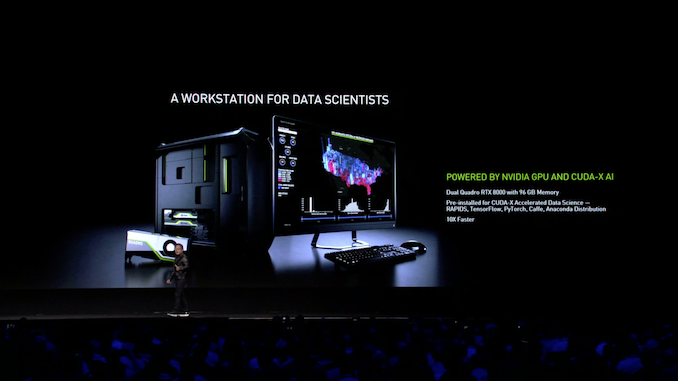

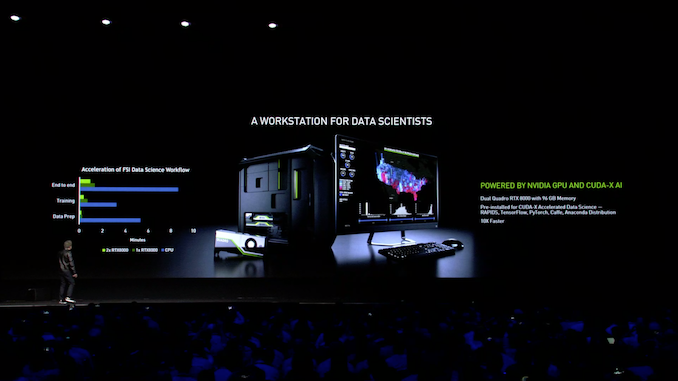

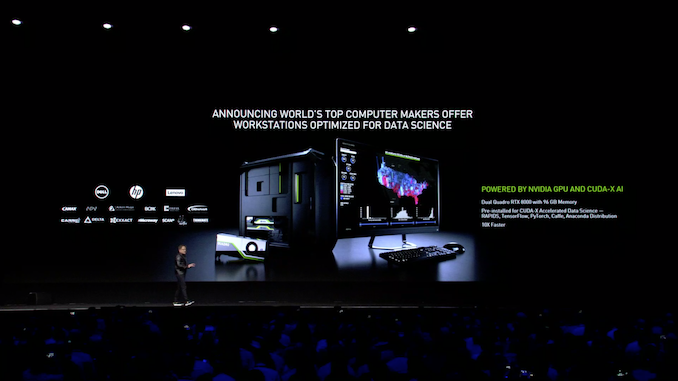

06:49PM EDT - So NVIDIA has decided that data scientists need a customized workstation

06:50PM EDT - The new workstations are a joint project with OEMs. High-performance compute and high-speed I/O, incorporating 1 or more Quadro RTX 6000/8000 video cards

06:50PM EDT - Officially NVIDIA calls this a "Data Science Workstation"

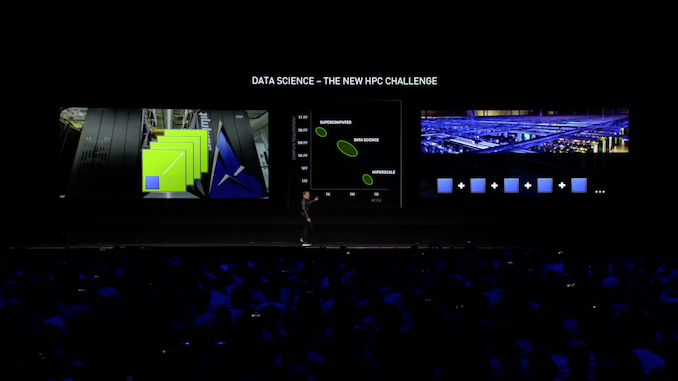

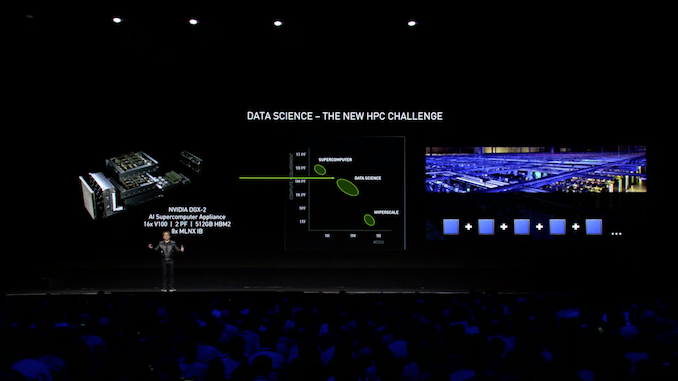

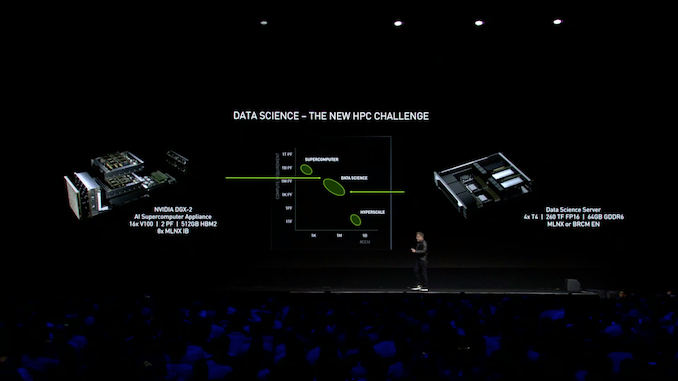

06:51PM EDT - "Data science is the new HPC"

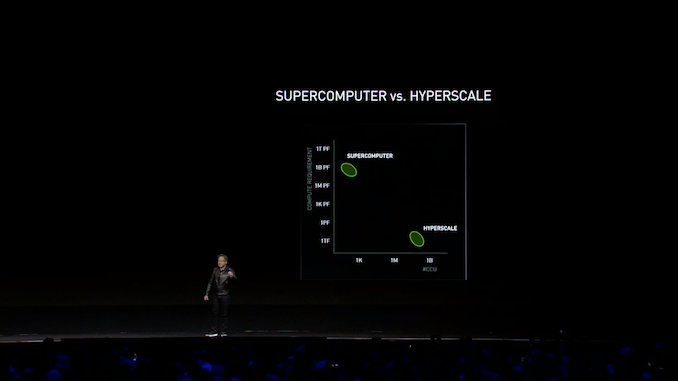

06:52PM EDT - Now on to explaining the difference between supercomputers and hyperscale clusters

06:52PM EDT - Supercomputers do a small number of tasks well

06:52PM EDT - Hyperscale clusters are all about capacity. Doing many small jobs

06:53PM EDT - Scale-up vs. Scale-out

06:54PM EDT - These require different system/cluster architectures

06:56PM EDT - Data science is in the middle. The tasks are heavier than hyperscale tasks and there are fewer of them. But it's still wider than a supercomputer

06:56PM EDT - And this is where NVIDIA's DGX-2 appliance fits in right now

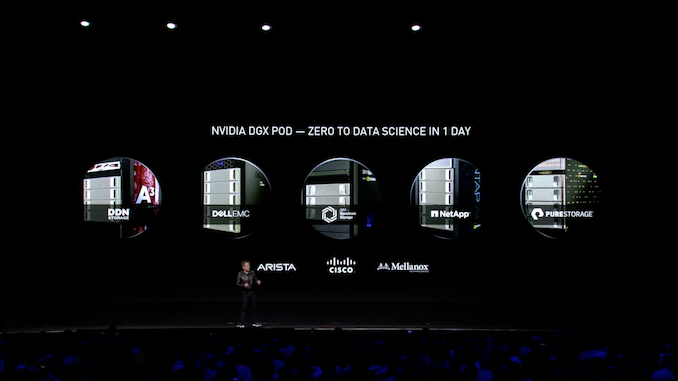

06:57PM EDT - But NVIDIA is not done. They are announcing a new Data Science Server initiative

06:57PM EDT - What do you do when you need inference instead of training? You fill a box with Tesla T4 (TU104) accelerators

07:02PM EDT - Jensen is now talking about why NVIDIA acquired Mellanox, and how they see networking as becoming part of the compute infrastructure of datacenters

07:02PM EDT - Now on stage: Eyal Waldman, CEO of Mellanox

07:06PM EDT - NVIDIA and Mellanox will be working hard together in the future to improve datacenter compute

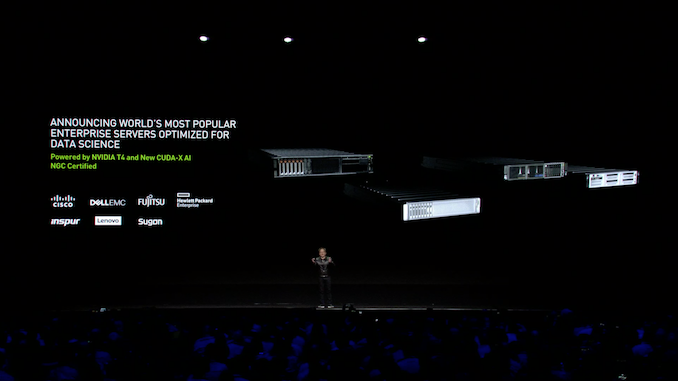

07:09PM EDT - Not a new product announcement, per se, but NVIDIA's Tesla T4 accelerators are going to start showing up in a significant number of enterprise servers

07:09PM EDT - Fully certified, of course

07:09PM EDT - Previously Tesla T4s were being gobbled up by cloud providers like Google

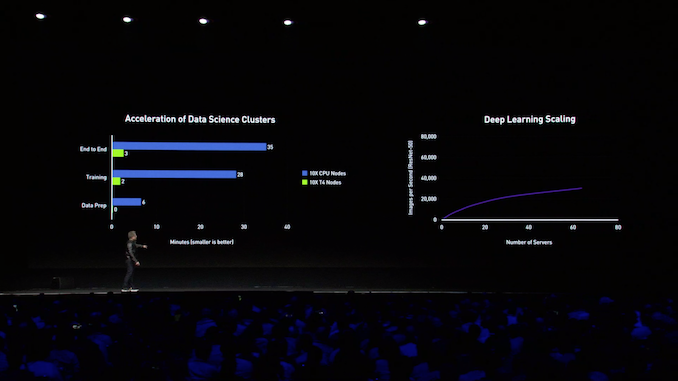

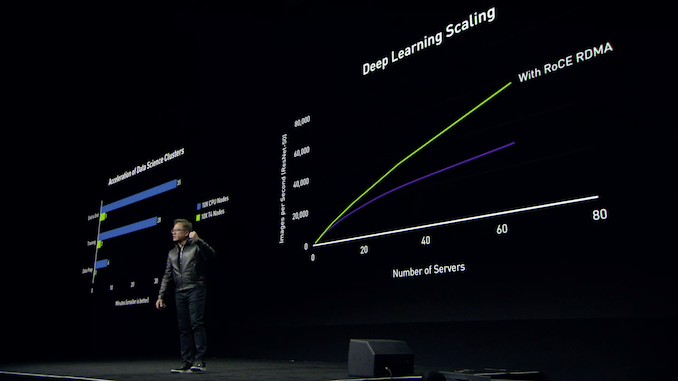

07:11PM EDT - Once more reiterating how GPUs are better than CPUs

07:11PM EDT - Especially when a job would otherwise need a large number of CPU nodes (too much cross-communication)

07:12PM EDT - Now on stage: Amazon's VP of Compute Services, Matt Garman

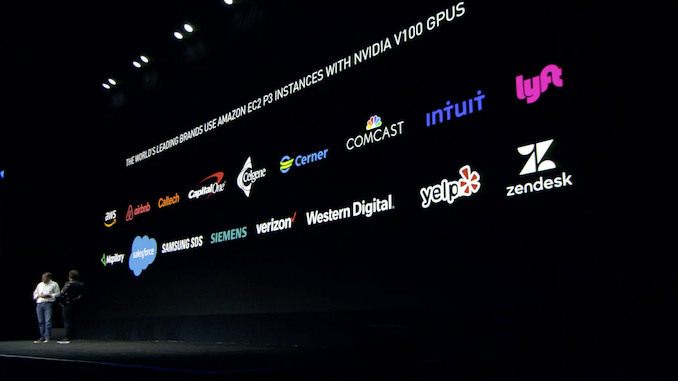

07:13PM EDT - Recapping Amazon's history of using NVIDIA accelerators for new compute instances

07:15PM EDT - Garman is talking up the benefits of renting server time through AWS. Including the ability to rent a large amount of it for a short period of time for testing

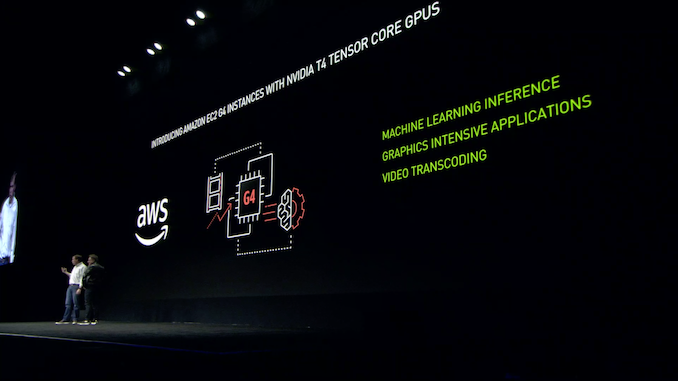

07:18PM EDT - Amazon is launching a new instance, the G4 instance, which runs Tesla T4 accelerators

07:19PM EDT - 2 hours and 15 minutes in, and we're now on Chapter 3

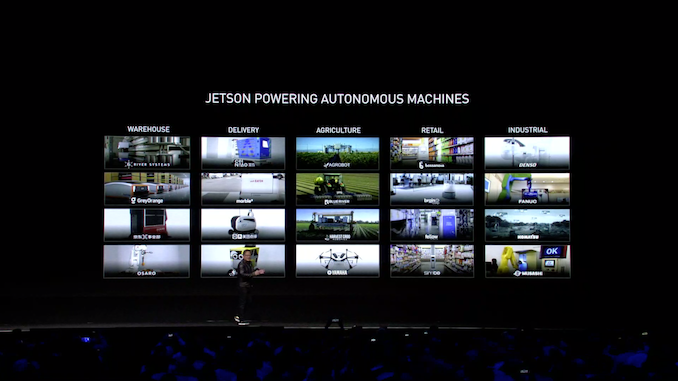

07:19PM EDT - Chapter 3: Robotics

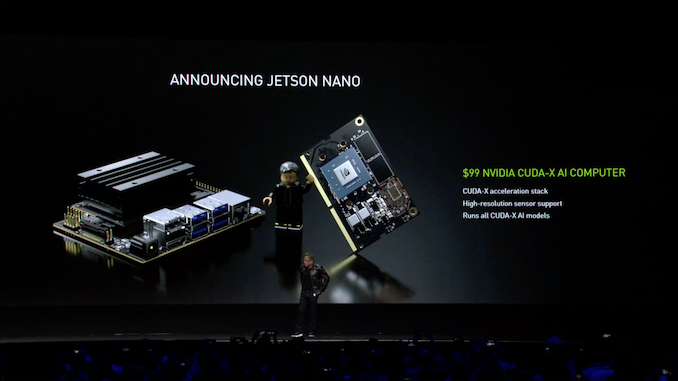

07:19PM EDT - As we're after 4pm PT, the Jetson Nano has already been announced via press release

07:20PM EDT - It's a small, cheap, Tegra X1-based Jetson compute module

07:21PM EDT - The module itself is little more than an SoC, RAM, NAND, and VRMs

07:21PM EDT - $99 for the dev kit

07:21PM EDT - Looks like NVIDIA is going after the Raspberry Pi market

07:22PM EDT - At least, anyone who needs more power than what's found in the sub-$50 segment of it

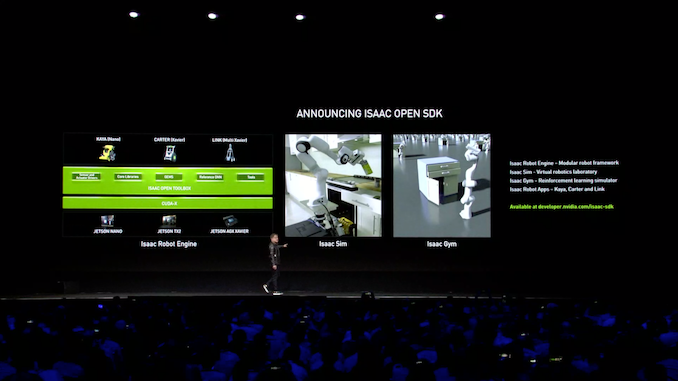

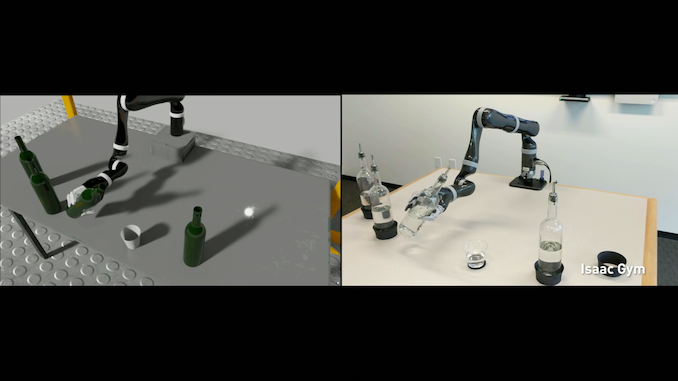

07:22PM EDT - NVIDIA is also releasing their Isaac robotics software as its own SDK

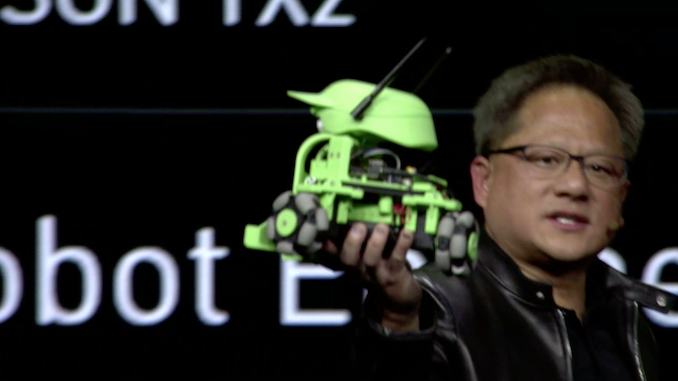

07:25PM EDT - Also showing off a small, simple robot powered by the Jetson Nano called Kaya

07:25PM EDT - (NVIDIA is getting amazing mileage out of that 20nm SoC, especially given how poorly 20nm behaves)

07:26PM EDT - Rolling a video on robotics

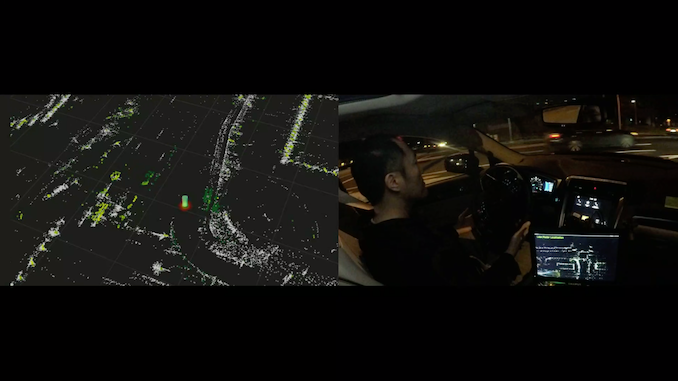

07:26PM EDT - Now on to self-driving cars

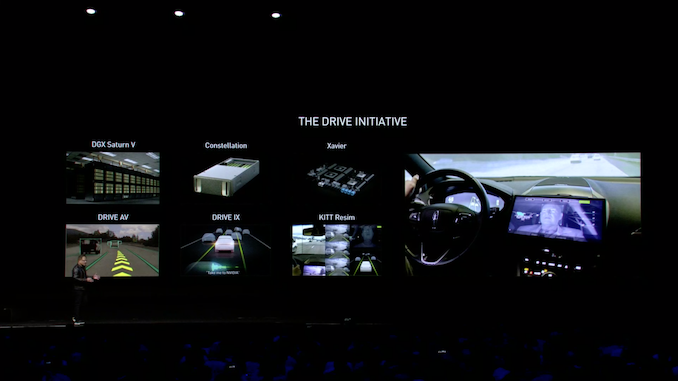

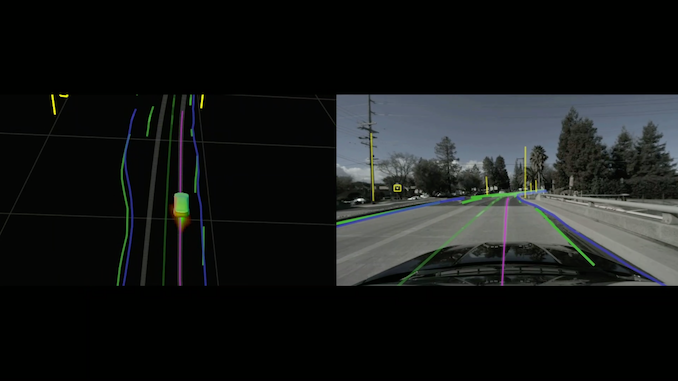

07:29PM EDT - Recapping all of NVIDIA's various self-driving car technologies and products

07:29PM EDT - "The future of autonomous systems has to be software-defined"

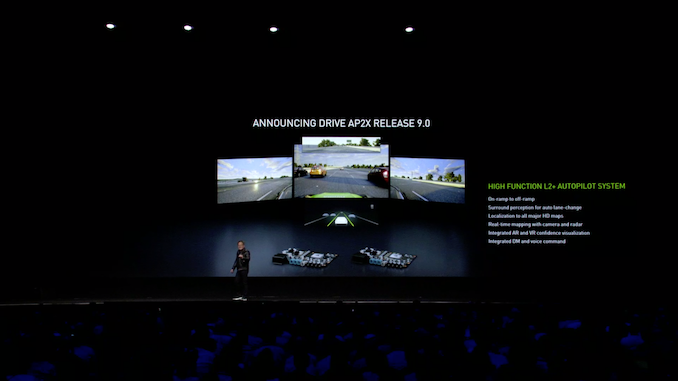

07:30PM EDT - Announcing release 9 of DRIVE AP2X

07:30PM EDT - NVIDIA's Level 2 autopiloting system

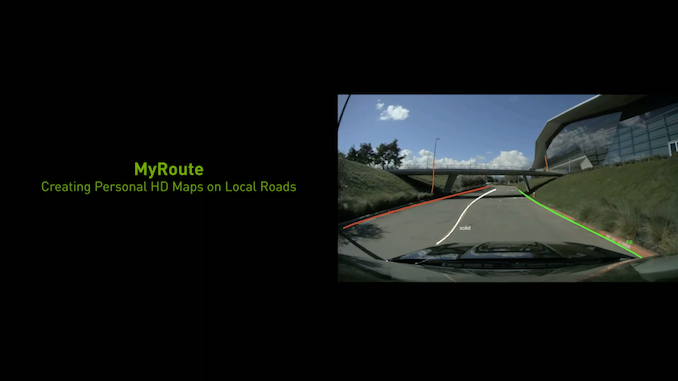

07:31PM EDT - Rolling a video on DRIVE AP2X

07:31PM EDT - (Jensen's crew just had a mic mix-up)

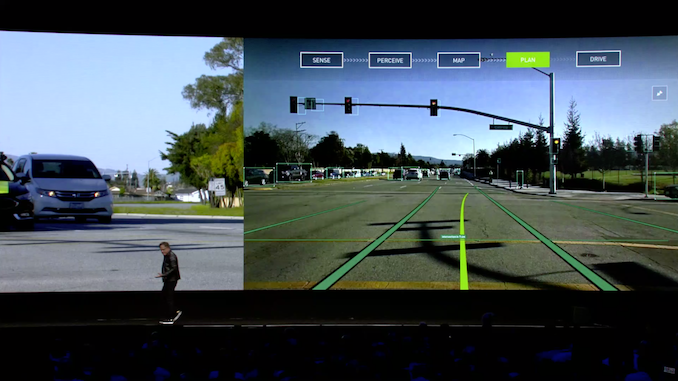

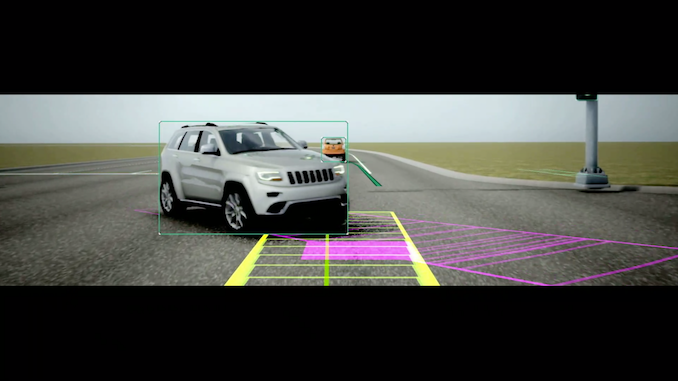

07:33PM EDT - Showing how the Drive system works and how it records a map of the world

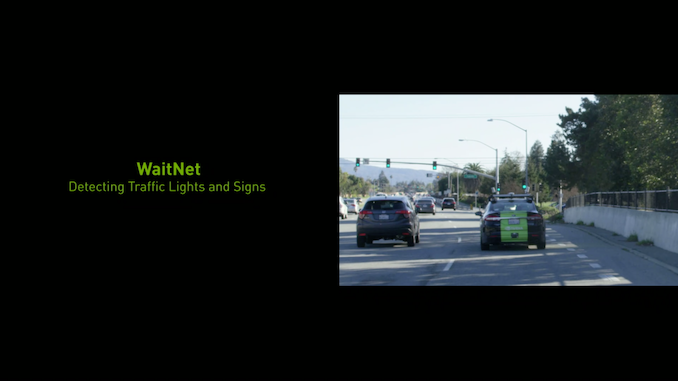

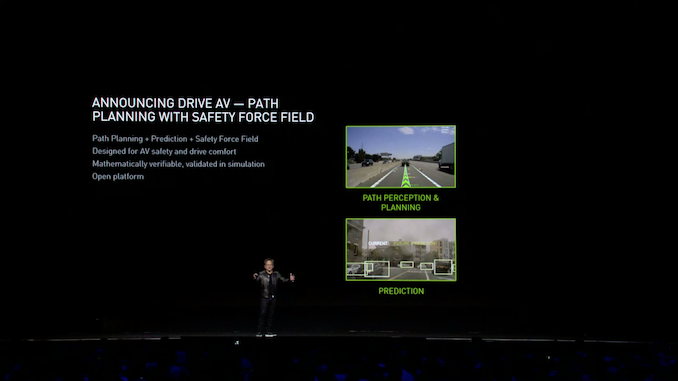

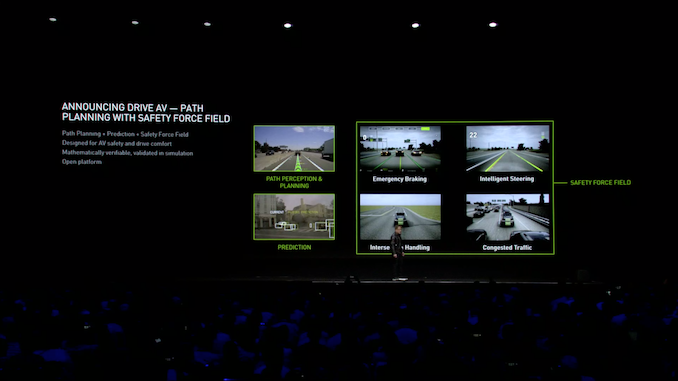

07:33PM EDT - Another DRIVE announcement: DRIVE AV

07:34PM EDT - DRIVE AV is a path planning system that also incorporates a prediction system to predict what other drivers will do and where they'll be

07:35PM EDT - Third pillar of DRIVE AV is called "safety force field". A computational algorithm for avoiding collisions

07:35PM EDT - "Mathematically verifiable"

07:37PM EDT - Steer and brake to avoid collisions with other drivers who are hazards. A Drive system should not be able to cause a collision

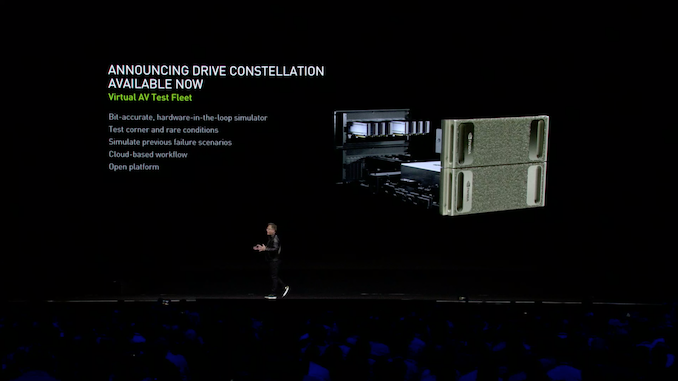

07:38PM EDT - Meanwhile Drive Constellation, NVIDIA's autonomous car virtual world simulator, is now available to the wider automotive industry

07:40PM EDT - Demoing the Constellation system

07:42PM EDT - Now showing a video of using Constellation for re-simulation of previous, real-world drives

07:43PM EDT - Self-driving is one of the world's greatest challenges

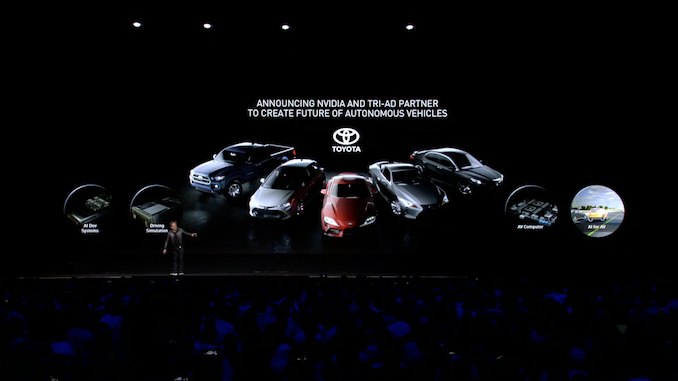

07:43PM EDT - Another announcement: Toyota is partnering with NVIDIA end-to-end for their autonomous vehicle development

07:44PM EDT - And we've reached the end. Jensen is now recapping (after 2 hours and 41 minutes)

07:45PM EDT - Graphics, data science, and robotics

07:46PM EDT - And that's a wrap

36 Comments

f4tali - Monday, March 18, 2019

Looking forward to more "Leather Jacket" articles :)

f4tali - Monday, March 18, 2019

Maybe about Prada Leather Jackets ;)

FreckledTrout - Monday, March 18, 2019

Thanks for doing this Ryan. I really don't want to listen to 2 hours of Jensen but am curious about the plans.

mode_13h - Monday, March 18, 2019

My sentiments, exactly.

mode_13h - Monday, March 18, 2019

BTW, I got a good chuckle from the "Turing complete?" tagline.

FreckledTrout - Monday, March 18, 2019

NVIDIA Omniverse... I think they have been watching to much Riddick and talk of the underverse.

D. Lister - Tuesday, March 19, 2019

The term "omniverse" is not new. https://en.wikipedia.org/wiki/Omniverse

FreckledTrout - Tuesday, March 19, 2019

LOL I understand but its a fictional thing. Just struck me funny.

DillholeMcRib - Monday, March 18, 2019

You deleted my comment. Left wingers run this site.

Ryan Smith - Monday, March 18, 2019

I deleted your comment because it had absolutely nothing to do with the keynote. Do try to stay on topic, please.