The AMD Radeon RX 590 Review, feat. XFX & PowerColor: Polaris Returns (Again)

by Nate Oh on November 15, 2018 9:00 AM EST

Final Fantasy XV (DX11)

Upon arriving on PC earlier this year, Final Fantasy XV: Windows Edition was given a graphical overhaul as it was ported over from console, the fruits of Square Enix's successful partnership with NVIDIA, with hardly any hint of the troubles during Final Fantasy XV's original production and development.

In preparation for the launch, Square Enix opted to release a standalone benchmark that they have since updated. Using the Final Fantasy XV standalone benchmark gives us a lengthy standardized sequence to capture with OCAT. Upon release, the standalone benchmark received criticism for performance issues and general bugginess, as well as confusing graphical presets and performance measurement by 'score'. In its original iteration, the graphical settings could not be adjusted, leaving users with presets that were tied to resolution and to hidden settings such as GameWorks features.

Since then, Square Enix has patched the benchmark with custom graphics settings and bugfixes, making it much more accurate in profiling in-game performance and graphical options, though the 'score' measurement remains. For our testing, we enable or adjust settings to the highest except for NVIDIA-specific features and 'Model LOD', the latter of which is left at standard. Final Fantasy XV also supports HDR, and it will support DLSS at some later date.

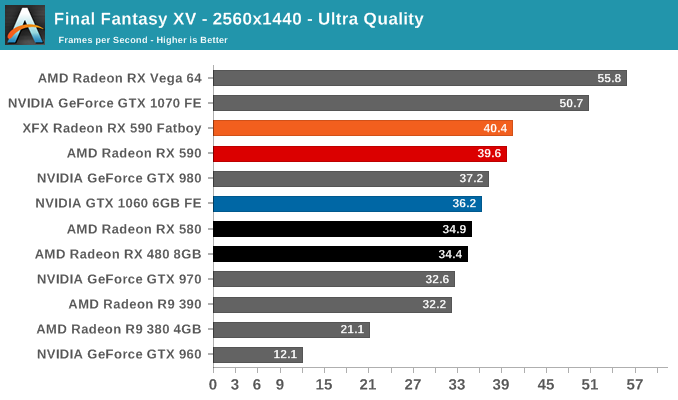

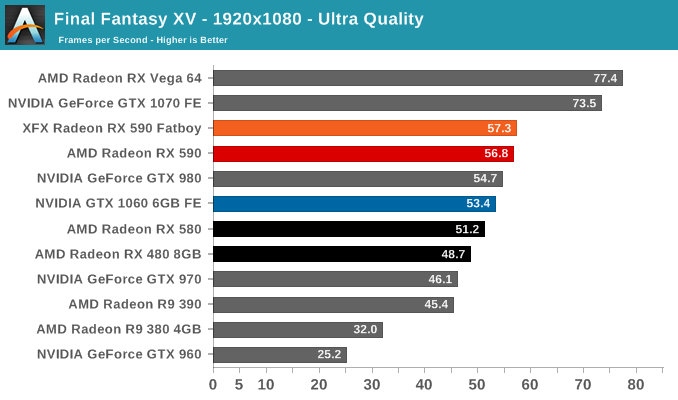

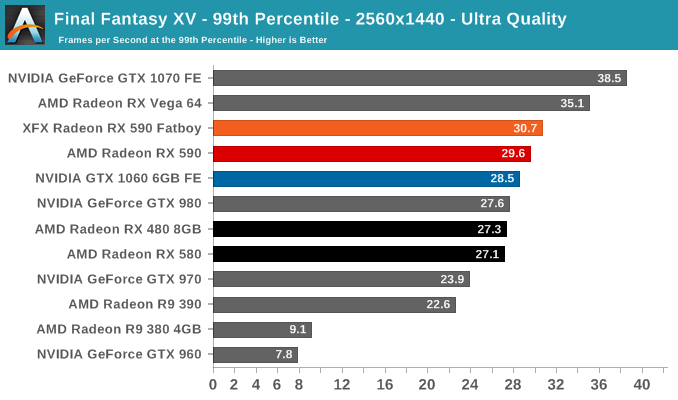

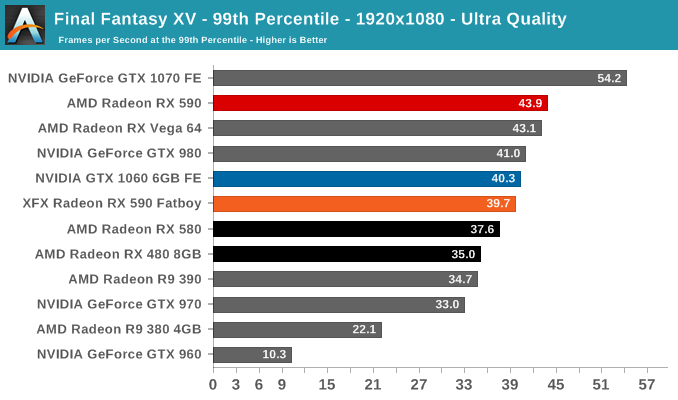

For Final Fantasy XV, the older R9 380 and GTX 960 simply can't keep up with the demands and are essentially unplayable, with particularly low 99th percentiles. VRAM wouldn't be the sole issue, though FFXV does use high resolution textures, as the GTX 980 (4GB) performs up to par. NVIDIA hardware tends to perform well on FFXV, but as with Ashes: Escalation, the RX 590's extra performance permits it to claim victory, reference-to-reference, which the RX 580 was unable to do here.
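For readers curious how an average frame rate and a 99th percentile figure relate to the captured frame times, here is a rough sketch of the arithmetic. It is not the tooling used for this review: it assumes the per-frame times have already been extracted from the capture as a plain list of milliseconds, and the function name `summarize_frametimes` and the nearest-rank percentile method are illustrative choices.

```python
def summarize_frametimes(frametimes_ms):
    """Return (average FPS, 99th-percentile FPS) for frame times given in ms.

    Hypothetical helper for illustration; nearest-rank percentile is an
    assumption, and other tools may interpolate instead.
    """
    n = len(frametimes_ms)
    if n == 0:
        raise ValueError("need at least one frame time")

    # Average FPS: number of frames divided by total elapsed seconds.
    total_seconds = sum(frametimes_ms) / 1000.0
    avg_fps = n / total_seconds

    # Nearest-rank 99th percentile of frame time: the smallest value that
    # at least 99% of frames are at or below. Integer math avoids float
    # rounding in the rank calculation (this is ceil(0.99 * n)).
    ordered = sorted(frametimes_ms)
    rank = (99 * n + 99) // 100
    p99_ms = ordered[rank - 1]

    # Convert the slow-frame time back into a frame rate.
    return avg_fps, 1000.0 / p99_ms


# Made-up data: 98 smooth frames at 20 ms plus two 100 ms stutters.
sample = [20.0] * 98 + [100.0] * 2
avg_fps, p99_fps = summarize_frametimes(sample)
print(f"average: {avg_fps:.1f} FPS, 99th percentile: {p99_fps:.1f} FPS")
```

With this made-up sample the average stays above 45 FPS while the 99th percentile drops to 10 FPS, which is why a card can post a tolerable average and still feel unplayable when its 99th percentiles are low.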

136 Comments

El Sama - Thursday, November 15, 2018 - link

To be honest I believe that the GTX 1070/Vega 56 is not that far away in price and should be considered as the minimum investment for a gamer in 2019.

Dragonstongue - Thursday, November 15, 2018 - link

over $600 for a single GPU V56, no thank you..even this 590 is likely to be ~440 or so in CAD, screw that noise.

minimum for a gamer with deep pockets, maybe, but that is like the price of good cpu and motherboard (such as Ryzen 2700)

Cooe - Thursday, November 15, 2018 - link

Lol it's not really the rest of the world's fault the Canadian Dollar absolutely freaking sucks right now. Or AMD's for that matter.

Hrel - Thursday, November 15, 2018 - link

Man, I still have a hard 200 dollar cap on any single component. Kinda insane to imagine doubling that!

I also don't give a shit about 3d, virtual anything or resolutions beyond 1080p. I mean ffs the human eye can't even tell the difference between 4k and 1080, why is ANYONE willing to pay for that?!

In any case, 150 is my budget for my next GPU. Considering how old 1080p is that should be plenty.

igavus - Friday, November 16, 2018 - link

4k and 1080p look pretty different. No offence, but if you can't tell the difference, perhaps it's time to schedule a visit with an optometrist? Nevermind 4K, the rest of the world will look a lot better also if your eyes are okay :)

Great_Scott - Friday, November 16, 2018 - link

My eyes are fine. The sole advantage of 4K is not needing to run AA. That's about it.

Anyone buying a card just so they can push a solid framerate on a 4K monitor is throwing money in the trash. Doubly so if they aren't 4->1 interpolating to play at 1K on a 4K monitor they needed for work (not gaming, since you don't need to game at 4K in the first place).

StevoLincolnite - Friday, November 16, 2018 - link

There is a big difference between 1080P and 4k... But that is entirely depending on how large the display is and how far you sit away from said display.

Otherwise known as "Perceived Pixels Per Inch".

With that in mind... I would opt for a 1440P panel with a higher refresh rate than 4k every day of the week.

wumpus - Saturday, November 17, 2018 - link

Depends on the monitor. I'd agree with you when people claim "the sweet spot of 4k monitors is 28 inches". Maybe the price is good, but my old eyes will never see it. I'm wondering if a 40" 4k TV will make more sense (the dot pitch will be lower than my 1080P, but I'd still likely notice lack of AA).

Gaming (once you step up to the high end GPUs) should be more immersive, but the 2d benefits are probably bigger.

Targon - Saturday, November 17, 2018 - link

There are people who notice the differences, and those who do not. Back in the days of CRT monitors, most people would notice flicker with a 60Hz monitor, but wouldn't notice with 72Hz. I always found that 85Hz produced less eye strain.

There is a huge difference between 1080p and 2160p in terms of quality, but many games are so focused on action that the developers don't bother putting in the effort to provide good quality textures in the first place. It isn't just about not needing AA as much as about a higher pixel density and quality with 4k. For non-gaming, being able to fit twice as much on the screen really helps.

PeachNCream - Friday, November 16, 2018 - link

I reached diminishing returns at 1366x768. The increase to 1080p offered an improvement in image quality mainly by reducing jagged lines, but it wasn't anything to get astonished about. Agreed that the difference between 1080p and 4K is marginal on smaller screens and certainly not worth the added demand on graphics power to push the additional pixels.