HighPoint Releases the SSD7102: A Bootable Quad M.2 PCIe x16 NVMe SSD RAID Card

by Ian Cutress on October 24, 2018 8:00 AM EST

In the upper echelons of commercial workhorses, having access to copious amounts of local NVMe storage is more of a requirement than ‘something nice to have’. Solutions in this space have ranged from custom FPGAs to software breakout boxes, and more recently a number of motherboard vendors have brought PCIe x16 quad M.2 cards to market. The only downside is that these cards rely on processor bifurcation, i.e. the ability of the processor to drive multiple devices from a single PCIe x16 slot. HighPoint has got around that limitation.

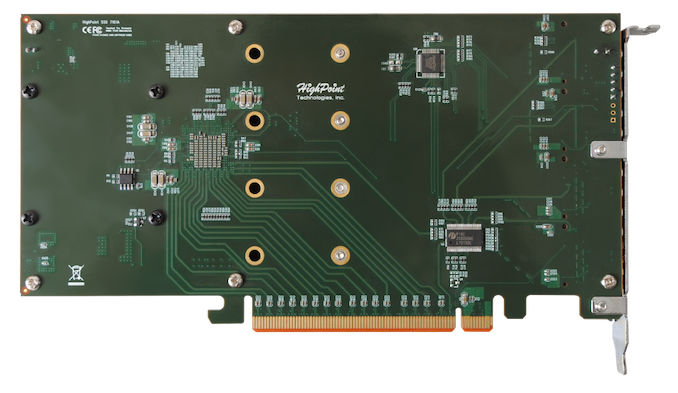

Getting four NVMe M.2 drives into a single PCIe x16 slot sounds fairly easy. There are 16 lanes in the slot, and each drive can take up to four lanes, so what is all the fuss? The problem arises from the CPU side of the equation: that PCIe slot connects directly to one PCIe x16 root complex on the chip, and depending on the configuration it may only be expecting one device to be connected to it. The minute you put four devices in, it might not know what to do. In order to get this to work, you need a single device to act as a communication facilitator between the drives and the CPU. This is where PCIe switches come in. Some motherboards already use these to split a PCIe x16 complex into x8/x8 when different cards are inserted. For a more demanding job, like bootable NVMe, HighPoint uses a bigger (and more expensive) switch.
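The lane arithmetic above can be put into a few lines of Python. This is only a sketch: the article does not state the PCIe generation, so the PCIe 3.0 figures (8 GT/s per lane, 128b/130b encoding) are an assumption, and the function names are made up for illustration. The numbers are raw link rates before protocol overhead, not measured throughput.

```python
# Assumption (not stated in the article): PCIe 3.0 signalling,
# i.e. 8 GT/s per lane with 128b/130b line encoding.
GIGATRANSFERS_PER_SECOND = 8.0
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gbs(lanes: int) -> float:
    """Raw one-direction bandwidth of a link in GB/s, before protocol overhead."""
    per_lane = GIGATRANSFERS_PER_SECOND * ENCODING_EFFICIENCY / 8  # bits -> bytes
    return lanes * per_lane


def drives_fed_at_full_width(slot_lanes: int, lanes_per_drive: int = 4) -> int:
    """How many drives a slot can feed if every drive gets its full lane count."""
    return slot_lanes // lanes_per_drive


# Four x4 drives exactly fill an x16 slot.
print(drives_fed_at_full_width(16))

# Behind a switch the drives share whatever uplink the host slot provides,
# so a narrower uplink lowers the aggregate ceiling.
total_drive_bandwidth = 4 * link_bandwidth_gbs(4)
for uplink_lanes in (16, 8, 4):
    ceiling = min(link_bandwidth_gbs(uplink_lanes), total_drive_bandwidth)
    print(f"x{uplink_lanes} uplink: aggregate ceiling {ceiling:.2f} GB/s")
```

On paper the lanes add up; the difficulty described above is about how the root complex enumerates four devices, which this arithmetic does not capture.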

The best way to get around that limitation is to use a PCIe switch, and in this case HighPoint is using a PLX8747 chip with custom firmware for booting. This switch is not cheap (not since Avago took the helm of the company and increased the pricing by several multiples), but it does allow for a configurable interface between the CPU and the drives that works in all scenarios. Up until today, HighPoint already had a device on the market for this, the SSD7101-A, which enabled four M.2 NVMe drives to connect to the machine. What makes the SSD7102 different is that the firmware inside the PLX chip has been changed, and it now allows for booting from a RAID of NVMe drives.

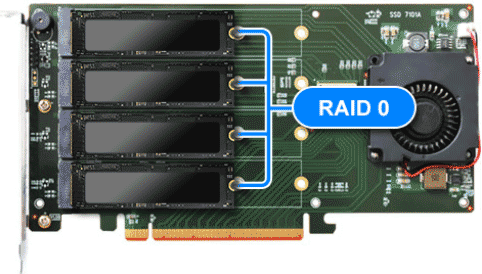

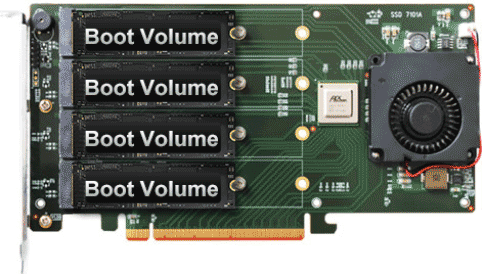

The SSD7102 supports booting with RAID 0 across all four drives, with RAID 1 across pairs of drives, or booting from a single drive in a JBOD configuration. Each drive in the JBOD can be configured to be a boot drive, allowing for multiple OS installs across the different drives. The SSD7102 supports any M.2 NVMe drives from any vendor, although for RAID setups it is advised that identical drives are used.
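To make the three modes concrete, here is a minimal sketch of the usual capacity arithmetic for each. It is generic RAID math, not HighPoint's firmware logic; the function name `usable_capacity_gb` and the drive sizes are hypothetical. It also shows one common reason identical drives are recommended: a stripe is typically limited by its smallest member.

```python
def usable_capacity_gb(mode: str, sizes_gb: list[int]) -> int:
    """Usable capacity under generic RAID rules (illustrative, not vendor-specific)."""
    if mode == "raid0":
        # Striping: every member contributes only as much as the smallest drive.
        return len(sizes_gb) * min(sizes_gb)
    if mode == "raid1":
        # Mirroring across a pair: capacity of the smaller drive.
        if len(sizes_gb) != 2:
            raise ValueError("this sketch models RAID 1 as a pair of drives")
        return min(sizes_gb)
    if mode == "jbod":
        # Independent drives: nothing is shared, nothing is lost.
        return sum(sizes_gb)
    raise ValueError(f"unknown mode: {mode}")


matched = [1000, 1000, 1000, 1000]    # hypothetical drive sizes in GB
mismatched = [500, 1000, 1000, 1000]

print(usable_capacity_gb("raid0", matched))
print(2 * usable_capacity_gb("raid1", matched[:2]))   # two mirrored pairs
print(usable_capacity_gb("jbod", matched))
print(usable_capacity_gb("raid0", mismatched))        # smallest drive sets the stripe size
```

With the mismatched set, the stripe exposes only 2000 GB of the 3500 GB installed, which is the kind of loss matched drives avoid.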

The card is a single-slot device with a heatsink and a 50mm blower fan to keep every drive cool. Drives up to the full 22110 standard are supported, and HighPoint says that the card is supported under Windows 10, Server 2012 R2 (or later), Linux Kernel 3.3 (or later) and macOS 10.13 (or later). Management of the drives after installation occurs through a browser-based tool, or through a custom API for deployments that want to do their own management, and rebuilding arrays is automatic with auto-resume features. MTBF is set at just under 1M hours, with a typical power draw (minus drives) of 8W. HighPoint states that both AMD and Intel are supported, and given the presence of the PCIe switch, I suspect the card would also ‘work’ in PCIe x8 or x4 modes too.

The PCIe card is due out in November, either direct from HighPoint or through reseller/distribution partners. It is expected to have an MSRP of $399, the same as the current SSD7101-A which does not have the RAID bootable option.

23 Comments

Vorl - Wednesday, October 24, 2018 - link

This could be an amazing card. I wonder what the overhead will be, and how close it can get to the speed of 4 M.2 NVME 4x drives. Those drives are amazingly fast to begin with, and if you can raid 4 of them in a raid 0 (since the SSD failure rate is also amazingly low) they could offer truly unbelievable performance levels.

I look forward to when others will come out with cards like this. It will help bring the price down some.

edzieba - Wednesday, October 24, 2018 - link

"It will help bring the price down some."

With the PLX chip on board by necessity, these are going to be priced between "obscenely expensive" and "my company is paying for this".

Unless you're speccing a maxed-out money-no-object workstation, it will likely be cheaper to buy a CPU that supports PCIe Bifurcation (pretty much any Intel CPU in the last 3/4 generations) and motherboard to go with it than to buy this card alone.

Duncan Macdonald - Wednesday, October 24, 2018 - link

If you have a suitable CPU and motherboard then the much cheaper ASRock Ultra Quad M.2 Card is a better option (needs a CPU with 16 available PCIe lanes and the ability to subdivide to 4x4 - eg an AMD Threadripper or EPYC system).

Ian Cutress - Wednesday, October 24, 2018 - link

This is the thing - HighPoint will support this for years. If you need consistency over a large scale deployment, then the vendor based quad M.2 cards have too many limitations.

imaheadcase - Wednesday, October 24, 2018 - link

Except price. :P

Flunk - Wednesday, October 24, 2018 - link

Not really a concern for professional use. Downtime often costs a lot more than $400/unit. For home use, yeah. But Highpoint doesn't exactly target the home user with any of their products.

rtho782 - Wednesday, October 24, 2018 - link

I remember back in the x38 days, loads of motherboards had PLX chips. Now they are so expensive as to be incredibly rare.

What is stopping a competitor stepping in here? Is there a patent? If so, when does it run out?

DigitalFreak - Wednesday, October 24, 2018 - link

There are one or two competitors that have released products recently. I don't recall the names, but I suspect they're just too new to be used in products like this yet.

mpbello - Wednesday, October 24, 2018 - link

Marvell also recently announced a PCIe switch.

Billy Tallis - Wednesday, October 24, 2018 - link

It's not exactly a PCIe switch; the 88NR2241 is meant specifically for NVMe and includes RAID and virtualization features that you don't get from a plain PCIe switch. But since it only has an x8 uplink, it isn't a true replacement for things like the PLX switch HighPoint uses. It will be interesting to see whether/when Marvell expands the product line.