ASRock at CES 2018: Ultra Quad M.2 PCIe Card

by Ian Cutress on January 16, 2018 9:00 AM EST

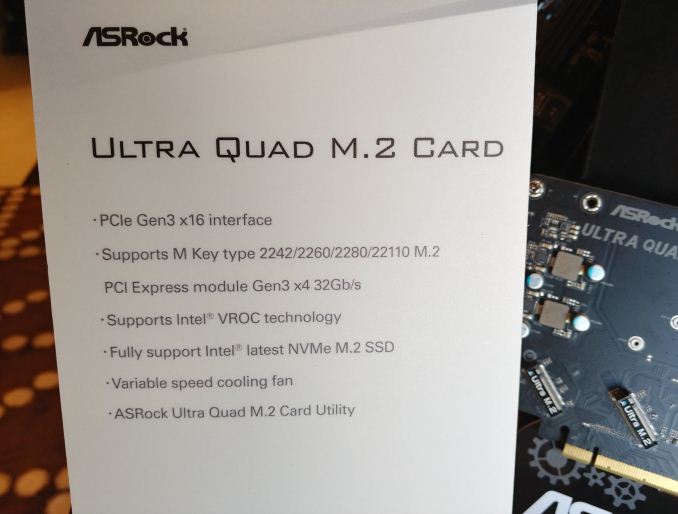

LAS VEGAS, NV – Sometimes, one M.2 PCIe drive is not enough. Some motherboards come with three M.2 slots for NVMe SSDs, but even that might not be enough. To get more, users need add-in cards, which typically come in single, dual, or quad arrangements. At present, only one company offers a consumer-focused quad M.2 card, and now ASRock is joining that market.

There is nothing complex about an M.2 PCIe add-in card: the PCIe lanes on the card's edge connector go directly to the drives. As long as there is sufficient power and sufficient cooling, there is not much more to it. ASRock's card positions the M.2 drives at a 45-degree angle, provides a PCIe 6-pin connector for power, uses its metallic shroud as an additional heatsink, and adds a fan that pushes air out through the exhaust. The fan is variable speed and reacts to a thermal sensor, so it speeds up when the case is warm. If you want overkill M.2 cooling, this is it.
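Because the lanes run straight to the drives with no switch chip in between, a passive quad card like this relies on the motherboard splitting (bifurcating) one x16 slot into four x4 links, one per drive. The arithmetic of that split can be sketched in Python. This is a minimal sketch that assumes PCIe 3.0 signalling (8 GT/s per lane, 128b/130b encoding) and an x4/x4/x4/x4 split; the function names are hypothetical, and the figures are theoretical link limits in one direction, not measured drive performance.

```python
# Minimal sketch (assumes PCIe 3.0): theoretical bandwidth when an x16 slot
# is bifurcated into four x4 links, one per M.2 drive.

GT_PER_S = 8.0          # PCIe 3.0 signalling rate per lane, in GT/s
ENCODING = 128 / 130    # PCIe 3.0 128b/130b line-encoding efficiency


def lane_bandwidth_gb_s(gt_per_s: float = GT_PER_S, encoding: float = ENCODING) -> float:
    """Usable bandwidth of one lane in GB/s, one direction, before protocol overhead."""
    return gt_per_s * encoding / 8  # 8 bits per byte


def bifurcate(slot_lanes: int, link_width: int) -> list[int]:
    """Split a slot into equal-width links, e.g. x16 -> [4, 4, 4, 4]."""
    if slot_lanes % link_width != 0:
        raise ValueError("slot width must be a multiple of the link width")
    return [link_width] * (slot_lanes // link_width)


links = bifurcate(16, 4)                     # one x4 link per M.2 drive
total = sum(links) * lane_bandwidth_gb_s()   # all 16 lanes combined

for i, width in enumerate(links, start=1):
    print(f"M.2 slot {i}: x{width}, ~{width * lane_bandwidth_gb_s():.2f} GB/s")
print(f"Aggregate: ~{total:.2f} GB/s")
```

Each drive gets roughly 3.94 GB/s of link bandwidth and the card tops out around 15.75 GB/s in total; real drive throughput will be lower once PCIe protocol overhead is included.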

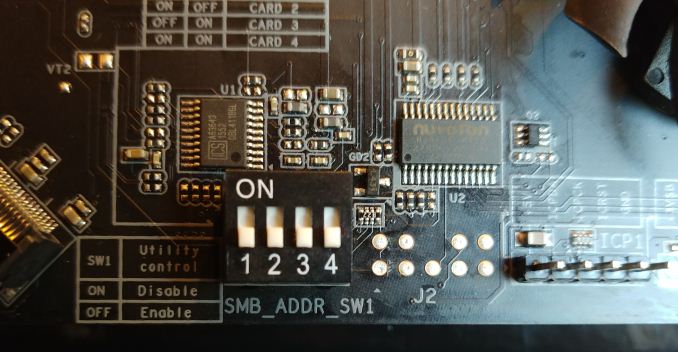

ASRock has also added a feature for anyone who wants to install more than one of these Ultra Quad M.2 PCIe Cards in a system. A bank of four DIP switches on the PCB lets ASRock's software and the system determine which card is the first Quad M.2 PCIe card, which is the second, and so on. Without it, drive enumeration might not be guaranteed in certain situations.
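ASRock has not published how the switches are read, so the following is only a hypothetical Python sketch of the general idea: a manually set ID gives each card a fixed position, independent of the order in which the system happens to discover the cards. It assumes the four switches form a 4-bit card ID (switch 1 as the least significant bit), and the `QuadM2Card` class, `found_in_slot` field, and `enumerate_drives` function are illustrative names, not ASRock's software.

```python
# Hypothetical sketch: ASRock has not documented the switch encoding.
# Assumption: the four DIP switches form a 4-bit card ID (switch 1 = bit 0),
# and software orders cards, then their four M.2 slots, by that ID.

from dataclasses import dataclass


@dataclass
class QuadM2Card:
    found_in_slot: str                            # hypothetical motherboard slot label
    dip_switches: tuple[bool, bool, bool, bool]   # switches 1..4, True = ON

    @property
    def card_id(self) -> int:
        # Each ON switch contributes its bit to the ID.
        return sum(1 << bit for bit, on in enumerate(self.dip_switches) if on)


def enumerate_drives(cards: list[QuadM2Card]) -> list[tuple[int, int, str]]:
    """Return stable (card_id, m2_slot, found_in_slot) labels, independent of discovery order."""
    ids = [c.card_id for c in cards]
    if len(set(ids)) != len(ids):
        raise ValueError("two cards share the same DIP switch setting")
    ordered = sorted(cards, key=lambda c: c.card_id)
    return [(c.card_id, slot, c.found_in_slot) for c in ordered for slot in range(1, 5)]


# Two cards discovered in "reverse" order still enumerate by their switch IDs.
cards = [
    QuadM2Card("PCIE4", (True, False, False, False)),   # card ID 1
    QuadM2Card("PCIE1", (False, False, False, False)),  # card ID 0
]
for label in enumerate_drives(cards):
    print(label)
```

The design point is that the ordering key comes from a setting the user controls, so adding or moving hardware does not silently reshuffle which drive is which.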

ASRock lists the add-in card as supporting Intel's VROC technology, although VROC requires both Intel PCIe SSDs and the VROC module in order to function. ASRock also stated that the card supports AMD systems without any similar requirements.

We suspect that ASRock will release the card sometime within Q1. Price is still to be determined.

10 Comments

edzieba - Tuesday, January 16, 2018

Oh no, the terrible return of manual IRQ conflict resolution!

Lord of the Bored - Wednesday, January 17, 2018

Funny, my first thought was "DIP switches... how nostalgic."

bronan - Thursday, May 16, 2019

These switches are needed if you install more cards :D If you have 4 PCIe slots, you can add 3 x 4 NVMe drives. What would happen if you put these in a RAID-0 setup? :D I would not even try to boot from it, but it would be interesting to see how fast it would run.

However, I am not sure how many motherboards really have more than 2 or 3 REAL PCIe x16 slots. As far as I know, most are limited to 1 or 2 real x16 slots, and the rest are often shared or limited. I have looked at many boards but found only a few with 2 real x16 slots.

bronan - Thursday, May 16, 2019

lol, this really could be an issue if you have too many devices enabled in the machine. We are supposed to be able to run them all, and people claim we no longer have this issue, but if you do not have the latest hardware it actually still exists.

I never believed that a Gigabyte Z170X-Gaming 7 motherboard would give issues, but it actually did. With 2 NICs, 3 NVMe SSDs, 4 SSDs for storage, and the list goes on, it eventually produced stutter, sound issues, and some artifacts on my screen. I kept switching and resetting the BIOS until it finally ran a bit more stably. But I am fairly sure the problem still exists.

rhysiam - Tuesday, January 16, 2018

Does this require anything specific of the system to work? I thought certain chipsets explicitly do *not* allow splitting the CPU PCIe lanes across multiple devices. That's why B250 boards (for example) cannot split the 16 CPU PCIe lanes across multiple graphics cards. Isn't this similar? Or is there a PCIe switch on the board? Would that have a tangible latency impact? Just a few questions!

supremelaw - Thursday, May 10, 2018

The motherboard BIOS needs to support bifurcation. In the ASRock UEFI, it shows up as the "4x4" option; in the ASUS UEFI, it shows up as the "x4/x4/x4/x4" option. These AICs do NOT need a PLX chip, as long as the motherboard can split an x16 PCIe slot into four x4 PCIe 3.0 links, hence 4 x NVMe SSDs. In practice, idle CPU cores (of which are are now many) can now do the job formerly done by dedicated Input-Output Processors on hardware RAID cards. For those who want to do a fresh install of Windows 10 on a RAID-0 array of 4 x NVMe SSDs, the ASRock approach is easy: follow the directions you can request from their Tech Support group. When we requested them, ASRock replied with those directions the next day. The Intel approach currently requires a VROC "dongle", and some reports have claimed that VROC requires Intel SSDs. Because we are currently busy designing a system with the ASRock X399M motherboard, we are not thoroughly familiar with all of the details of Intel's implementation. Hope this helps.

supremelaw - Thursday, May 10, 2018

EDIT: "(of which are are now many)" should be "(of which there are now many)". Sorry for the typo.

bronan - Thursday, May 16, 2019

Intel has made the drivers Intel-SSD-only and quickly removed the old driver before I could get my hands on it. So far, I am pretty sure they are never going to allow other brands to use the driver.

Maybe some hardware driver guru will be able to make it work, but my guess is it's gonna stay the way it is now.