Microsoft Details Windows Machine Learning for Gaming

by Ryan Smith on March 19, 2018 1:00 PM EST - Posted in

- GPUs

- Gaming

- Microsoft

- DirectX 12

In a day filled with all sorts of game development-related API and framework news, Microsoft also has an AI-related announcement for the day. Parallel to today’s DirectX Raytracing announcement – but not strictly a DirectX technology – Microsoft is also announcing that they will be pursuing the use of machine learning in both game development and gameplay through their recently revealed Windows Machine Learning framework (WinML).

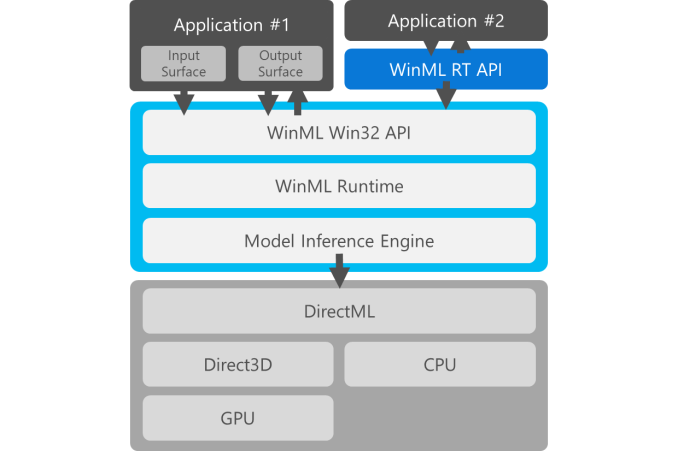

Announced earlier this month, WinML is a rather comprehensive runtime framework for neural networks on Windows 10. Utilizing the industry-standard ONNX ML model format, WinML will be able to interface with pre-trained models from Caffe2, TensorFlow, Microsoft’s own CNTK, and other machine learning frameworks. In turn, the DirectML execution layer is able to run on top of DX12 GPUs as a compute task, using the supplied models for neural network inferencing.
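To make "neural network inferencing" a bit more concrete: a trained model is essentially a fixed set of weights, and running it is a chain of multiply-accumulate operations over those weights. The sketch below is a minimal pure-Python illustration of fully connected layers with a ReLU activation, the sort of arithmetic a runtime like DirectML would dispatch to the GPU as compute work. It is not the WinML API; the function names and toy weights are hypothetical.

```python
from typing import List, Tuple

Layer = Tuple[List[List[float]], List[float]]  # (weight matrix, bias vector)

def dense_relu(x: List[float], weights: List[List[float]], bias: List[float]) -> List[float]:
    """One fully connected layer followed by ReLU: y = max(0, W.x + b)."""
    out = []
    for row, b in zip(weights, bias):
        # Dot product of one weight row with the input vector, plus the bias term
        z = sum(w * xi for w, xi in zip(row, x)) + b
        # ReLU clamps negative pre-activations to zero
        out.append(max(0.0, z))
    return out

def run_model(x: List[float], layers: List[Layer]) -> List[float]:
    """Feed the input through each (weights, bias) layer in order."""
    for weights, bias in layers:
        x = dense_relu(x, weights, bias)
    return x

# Hypothetical two-layer model with hand-picked weights
model = [
    ([[1.0, -1.0], [0.5, 2.0]], [0.0, 1.0]),
    ([[1.0, 1.0]], [0.5]),
]
print(run_model([2.0, 3.0], model))  # [8.5]
```

Every output element depends only on the inputs and one row of weights, which is why this kind of work maps well onto thousands of GPU threads.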

The initial WinML announcement was a little ambiguous, and while today’s game-focused announcement has a more specific point to it, it’s still somewhat light on details. This is mostly because Microsoft is still putting out feelers to get an idea of what developers would be interested in doing with machine learning functionality. We’re still in the early days of machine learning for more dedicated tasks, never mind game development and gameplay where this is all brand-new, so there aren’t tried-and-true use cases to point to.

On the development front, Microsoft is pitching WinML as a means to speed up asset creation, letting machine learning models shoulder part of the workload rather than requiring an artist to develop an asset from start to finish. Meanwhile on the gameplay front, the company is talking about the possibilities of using machine learning to develop better AIs for games, including AIs that learn from the player, or even just AIs that act more like humans. None of this is new to games, as adaptive AIs were around long before modern machine learning, but it’s part of a broader effort to figure out what to do with this disruptive technology.

ML Super Sampling (left) and bilinear upsampling (right)

One interesting use case that Microsoft points out, and one that does seem closer to making it to market, is using machine learning for content-aware image upscaling. NVIDIA was showing this off last year at GTC as their super resolution technology, and while it’s ultimately a bit of a hack, it’s an impressive one. At the same time, similar concepts are already used in games in the form of temporal reprojection, so if super resolution could be made to run in a reasonable period of time (no longer than around 2x the time it takes to generate a frame), then I could easily see a trend of developers rendering a game at sub-native resolutions and then upscaling it with super resolution, particularly to improve gaming performance at 4K. Or, to take Microsoft’s more conservative example, such scaling methods could be used to improve the quality of textures and other assets in real-time.
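The bilinear baseline in the comparison image is simple to state: each output pixel is a distance-weighted blend of the four nearest source pixels, which is why it smears edges rather than reconstructing detail. A minimal pure-Python sketch follows, assuming a grayscale image stored as nested lists and a corner-aligned coordinate mapping (GPU texture samplers typically use a pixel-center convention instead). For scale, rendering at 1920x1080 and upscaling to 3840x2160 means shading 2,073,600 pixels instead of 8,294,400, a quarter of the work, so any upscaling pass has to cost less than the shading it saves.

```python
from typing import List

Image = List[List[float]]  # grayscale image as rows of pixel intensities

def bilinear_upscale(src: Image, out_h: int, out_w: int) -> Image:
    """Resize a grayscale image with bilinear interpolation (corner-aligned mapping)."""
    in_h, in_w = len(src), len(src[0])
    out = []
    for y in range(out_h):
        # Map the output row back to a fractional source row
        sy = y * (in_h - 1) / (out_h - 1) if out_h > 1 else 0.0
        y0 = int(sy)
        y1 = min(y0 + 1, in_h - 1)
        fy = sy - y0
        row = []
        for x in range(out_w):
            # Map the output column back to a fractional source column
            sx = x * (in_w - 1) / (out_w - 1) if out_w > 1 else 0.0
            x0 = int(sx)
            x1 = min(x0 + 1, in_w - 1)
            fx = sx - x0
            # Blend horizontally on the two neighbouring source rows, then vertically
            top = src[y0][x0] * (1 - fx) + src[y0][x1] * fx
            bottom = src[y1][x0] * (1 - fx) + src[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out

print(bilinear_upscale([[0.0, 10.0], [20.0, 30.0]], 3, 3))
# [[0.0, 5.0, 10.0], [10.0, 15.0, 20.0], [20.0, 25.0, 30.0]]
```

New pixels can only ever be averages of existing ones. A learned upscaler instead predicts plausible detail from patterns seen in training data, which is where the sharper result on the left of the comparison comes from.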

Moving on, while today’s announcement from Microsoft doesn’t introduce any further technologies, it does offer a bit more detail into the technological underpinnings of WinML. In particular, while the preview release of WinML is FP32-based, the final release will also support FP16 operations. The latter point is of great importance, as not only do recent GPUs implement fast FP16 modes, but NVIDIA’s recent Volta architecture went one step further and included dedicated tensor cores, which are designed to work with FP16 inputs.
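The trade-off behind FP16 is easy to see numerically, as is the reason Volta’s tensor cores can accumulate FP16 products in FP32. Python’s standard `struct` module rounds a value to IEEE half precision with its `"e"` format code, so the sketch below (plain Python, not WinML code; the helper names are hypothetical) shows that FP16 halves storage, stores 0.1 only approximately, and that a long running sum kept in FP16 stops growing once the gap between representable values exceeds the number being added.

```python
import struct

def to_fp16(value: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", value))[0]

def to_fp32(value: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", value))[0]

def accumulate(values, rounder):
    """Sum values, rounding the running total to the given precision after each add."""
    total = 0.0
    for v in values:
        total = rounder(total + v)
    return total

# FP16 halves storage: 2 bytes per value instead of 4
print(struct.calcsize("<e"), struct.calcsize("<f"))  # 2 4

# FP16 keeps only 10 explicit mantissa bits, so 0.1 is stored approximately
x = to_fp16(0.1)
print(x)  # 0.0999755859375

inputs = [x] * 10_000
# In FP16, values at 256 and above are spaced 0.25 apart, so adding ~0.1 rounds away
print(accumulate(inputs, to_fp16))  # 256.0
# An FP32 accumulator stays close to the exact sum, 999.755859375
print(accumulate(inputs, to_fp32))
```

The inputs themselves survive FP16 just fine; it is the long reduction that needs the extra precision, which is why mixed-precision hardware keeps the multiplies narrow and the accumulator wide.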

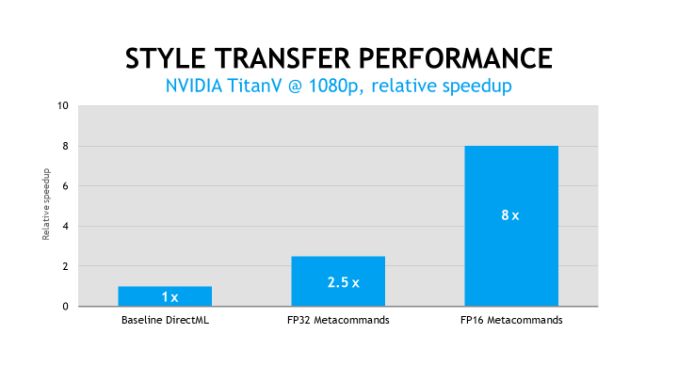

Another notable development here is that while WinML generates HLSL code as a baseline mode, hardware vendors will also have the option of writing DirectML metacommands for WinML to use. The idea behind these highly optimized commands is that hardware vendors can write commands that take full advantage of their hardware, including dedicated hardware like tensor cores, in order to further speed up WinML model inferencing beyond what’s possible with a naïve HLSL program. Working with NVIDIA, Microsoft is already showing off an 8x performance improvement over baseline DirectML performance by using FP16 metacommands.
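The baseline-plus-override arrangement described above amounts to a per-operator lookup: use a vendor’s optimized implementation when one exists, otherwise fall back to the generic path. The sketch below models only that selection logic in plain Python. `OperatorTable`, `register_vendor`, and the operator names are hypothetical and are not the DirectML metacommand interface; real metacommands are GPU implementations written by hardware vendors for their own chips.

```python
from typing import Callable, Dict, List

Kernel = Callable[[List[float], List[float]], List[float]]

def baseline_add(a: List[float], b: List[float]) -> List[float]:
    """Generic element-wise add, standing in for the generated HLSL baseline."""
    return [x + y for x, y in zip(a, b)]

class OperatorTable:
    """Hypothetical per-operator dispatch: vendor override first, baseline otherwise."""

    def __init__(self) -> None:
        self._baseline: Dict[str, Kernel] = {}
        self._vendor: Dict[str, Kernel] = {}

    def register_baseline(self, op: str, kernel: Kernel) -> None:
        self._baseline[op] = kernel

    def register_vendor(self, op: str, kernel: Kernel) -> None:
        # A hardware vendor supplies an optimized implementation for one operator
        self._vendor[op] = kernel

    def resolve(self, op: str) -> Kernel:
        # Prefer the vendor kernel when one exists; fall back to the baseline
        if op in self._vendor:
            return self._vendor[op]
        return self._baseline[op]

def vendor_add(a: List[float], b: List[float]) -> List[float]:
    # Placeholder for a tuned kernel; it must match the baseline's results
    return [x + y for x, y in zip(a, b)]

table = OperatorTable()
table.register_baseline("Add", baseline_add)
table.register_baseline("Mul", lambda a, b: [x * y for x, y in zip(a, b)])
table.register_vendor("Add", vendor_add)

print(table.resolve("Add") is vendor_add)  # True
print(table.resolve("Mul")([2.0], [3.0]))  # [6.0]
```

Keeping a baseline registered for every operator means a model still runs on hardware whose vendor has supplied no overrides, just more slowly.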

Ultimately as with today’s DirectX Raytracing announcement, Microsoft’s WinML gaming announcement is about priming developers to use the technology and to collect feedback on it ahead of its final release. More so than even DirectX Raytracing, this feels like a technology where no one is sure where it’s going to lead. So while DXR has a pretty straightforward path for adoption, it will be interesting to see what developers do with machine learning given that they’re largely starting with a blank slate. To that end, Microsoft has a couple of WinML-related presentations scheduled for this week at GDC, which hopefully should shed a bit more light on developer interest.

Source: Microsoft

6 Comments


willis936 - Monday, March 19, 2018

Are these the same neural network edge detection algorithms made/used by the scholar and hobbyist spaces?

Ryan Smith - Monday, March 19, 2018

It can be. WinML supports models from several different frameworks.

CheapSushi - Tuesday, March 20, 2018

While we're at it, MS, please bring back DirectSound3D for true sound hardware acceleration. :( This is when soundcards truly died (with EAX). I miss when audio wasn't a second (well, third-class) citizen and there was more to it than just fidelity (which is why people get bogged down in DAC/AMP discussions). Soundscapes used to have a real-time ray-tracing-like implementation too, with materials having different properties for sound refraction, bounce, absorption, etc. Only a few AAA titles really try to bring out this soundscape aspect now for fuller immersion, since most sound is just "good enough / close enough" emulation and fairly basic in most game engines now.

WatcherCK - Wednesday, March 21, 2018

Once game titles make more use of DirectML, are we going to see a resurgence in dual-GPU gaming setups, but instead of both GPUs being used for rendering, have one for render (GPU major) and one for compute (GPU minor), as in you add a cheaper 1050/560 GPU just for compute? Or can existing GPUs pipeline graphics and compute requests transparently... ?

Hah, I'm not an expert obviously, but I just grew up adding boards into PCs to fulfill a specific function :)

BigMamaInHouse - Wednesday, January 16, 2019

So Radeon7 is gonna support this, and also Vega offers 2X FP16 performance + Fury/Polaris offer 1:1 FP16, while NV Pascal offers only 1:64 FP16 performance. Looks like Vega and old AMD GPUs are gonna get even better in 2019 with DirectML, while on NV only the Turing cards are gonna be relevant!

https://www.overclock3d.net/news/gpu_displays/amd_...
