Unlimited 5 Year Endurance: The 100TB SSD from Nimbus Data

by Anton Shilov on March 19, 2018 10:45 AM EST

Posted in: SSDs, Storage, NAND, Enterprise SSDs, Nimbus Data, DC100

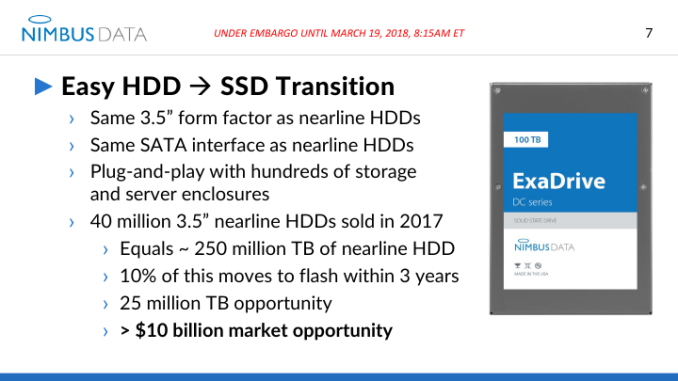

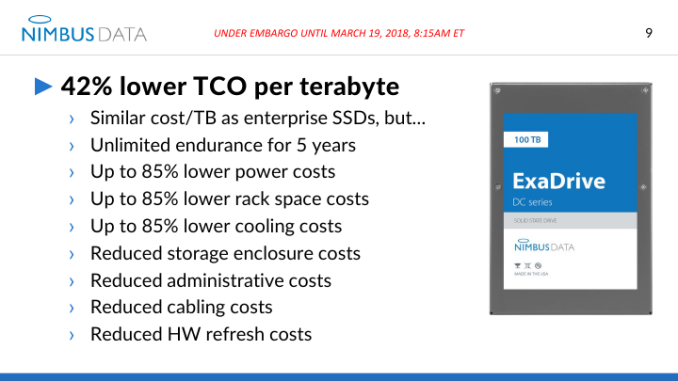

Nimbus Data on Monday introduced its new lineup of ultra-high capacity SSDs designed to compete against nearline HDDs in data centers. The ExaDrive DC drives use proprietary controllers and NAND flash in custom packaging to offer up to 100 TB of flash memory capacity in a standard 3.5-inch package. The SSDs use the SATA 6 Gbps interface and are rated for 'unlimited' endurance.

The Nimbus ExaDrive DC lineup will consist of two models featuring 50 TB and 100 TB capacities, a 3.5-inch form-factor, and a SATA 6 Gbps interface. Over time the manufacturer expects to release DC-series SSDs with a SAS interface, but it is unclear when exactly such drives will be available. When it comes to performance, the Nimbus DC SSDs are rated for up to 500 MB/s sequential read/write speeds as well as up to 100K read/write random IOPS, in line with most SATA-based SSDs in this space. As for power consumption, the ExaDrive DC100 consumes 10 W in idle mode and up to 14 W in operating mode.

The ExaDrive DC-series SSDs are based on Nimbus’ proprietary architecture, featuring four custom NAND controllers and a management processor. The drives use 3D MLC NAND flash memory made by SK Hynix in proprietary packaging. Nimbus does not disclose the ECC mechanism supported by the controllers, but keeping in mind that we are dealing with a 3D MLC-based device, it does not need a very strong ECC for maximum endurance.

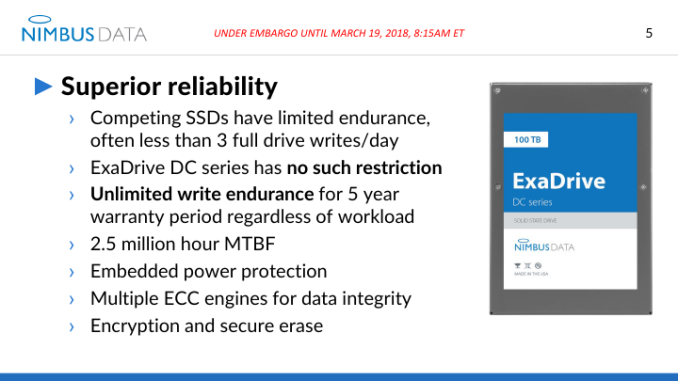

Speaking of endurance, it is worth noting that the 100 TB drive comes with an unlimited write endurance guarantee for the full five-year warranty period. This is not particularly surprising: at 500 MB/s it is impossible to write more than 43.2 TB of data in 24 hours, which equates to about 43% of the 100 TB drive's capacity. For those wondering, writing at that speed nonstop for the entire five-year warranty period comes to ~79 PB.
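The arithmetic behind the "unlimited" rating can be checked with a short sketch. The function names below are illustrative only, decimal units are assumed (1 TB = 10^12 bytes), and the count of full-drive writes ignores write amplification, which Nimbus does not disclose.

```python
SECONDS_PER_DAY = 24 * 60 * 60
MB = 10**6   # decimal megabyte, as used in drive ratings
TB = 10**12  # decimal terabyte
PB = 10**15  # decimal petabyte


def daily_write_ceiling_tb(write_mb_per_s: float) -> float:
    """Most data (in TB) that can be written in 24 hours at a sustained rate."""
    return write_mb_per_s * MB * SECONDS_PER_DAY / TB


def drive_writes_per_day(write_mb_per_s: float, capacity_tb: float) -> float:
    """Upper bound on DWPD imposed purely by the interface speed."""
    return daily_write_ceiling_tb(write_mb_per_s) / capacity_tb


def lifetime_writes_pb(write_mb_per_s: float, years: float) -> float:
    """Total data (in PB) written at full speed for the given number of 365-day years."""
    total_tb = daily_write_ceiling_tb(write_mb_per_s) * 365 * years
    return total_tb * TB / PB


def full_drive_writes(write_mb_per_s: float, capacity_tb: float, years: float) -> float:
    """Full-drive writes over the period, assuming a write amplification of 1 (an assumption)."""
    return drive_writes_per_day(write_mb_per_s, capacity_tb) * 365 * years


if __name__ == "__main__":
    for capacity_tb in (50, 100):
        print(
            f"{capacity_tb} TB model: "
            f"{daily_write_ceiling_tb(500):.1f} TB/day, "
            f"{drive_writes_per_day(500, capacity_tb):.3f} DWPD, "
            f"{lifetime_writes_pb(500, 5):.2f} PB over five years, "
            f"{full_drive_writes(500, capacity_tb, 5):.0f} full-drive writes"
        )
```

Because the ceiling is set by the 500 MB/s interface rather than by the flash, the 50 TB model would face twice the drive writes per day of the 100 TB model under the same worst-case load.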

The drives support end-to-end CRC as well, with UBER specified as <1 bit per 10^17 bits read.
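To put the UBER figure in perspective, the sketch below converts it into the amount of data read per expected uncorrectable bit error. It treats the specified bound as an average rate, which is a simplification, and the function names are illustrative rather than anything from Nimbus. Since the spec is "less than" one bit per 10^17, the results are lower bounds.

```python
TB = 10**12  # decimal terabyte
MB = 10**6   # decimal megabyte
SECONDS_PER_DAY = 24 * 60 * 60


def bytes_read_per_expected_error(uber_exponent: int = 17) -> float:
    """Bytes read, on average, per uncorrectable bit error at 1 error per 10^exponent bits."""
    return 10**uber_exponent / 8  # convert bits to bytes


def full_reads_per_expected_error(capacity_tb: float, uber_exponent: int = 17) -> float:
    """How many complete passes over the drive that amount of data corresponds to."""
    return bytes_read_per_expected_error(uber_exponent) / (capacity_tb * TB)


def days_of_reading_per_expected_error(read_mb_per_s: float, uber_exponent: int = 17) -> float:
    """Days of nonstop sequential reading needed to read that amount of data."""
    seconds = bytes_read_per_expected_error(uber_exponent) / (read_mb_per_s * MB)
    return seconds / SECONDS_PER_DAY


if __name__ == "__main__":
    print(f"Data per expected error: {bytes_read_per_expected_error() / 10**15:.1f} PB")
    print(f"Full reads of a 100 TB drive: {full_reads_per_expected_error(100):.0f}")
    print(f"Days of reading at 500 MB/s: {days_of_reading_per_expected_error(500):.0f}")
```

Under these assumptions, one uncorrectable bit error would be expected no more often than once per 12.5 PB read, or about 125 complete reads of the 100 TB model.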

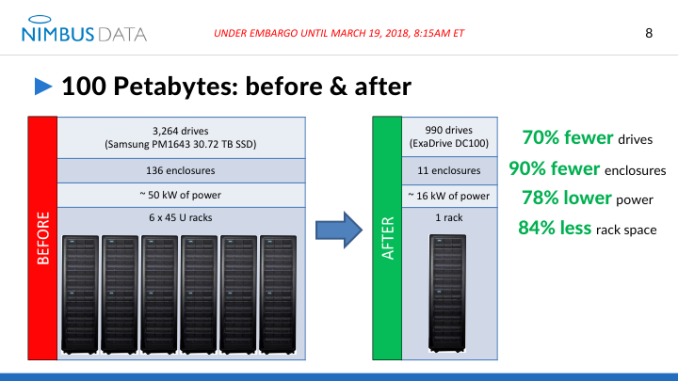

The Nimbus ExaDrive DC SSDs come in an industry-standard 3.5-inch form-factor and are compatible with numerous SATA backplanes capable of supporting drives with at least 14 W power consumption. In particular, Nimbus Data says that there are "at least" three vendors of 4U90 chassis with proper thermal and power characteristics to support ExaDrive DC drives and the company has already qualified them. Keeping in mind that not all ultra-high density enclosures can support 14 W drives because of power and cooling requirements, the ExaDrive DCs are not drop-in compatible with every application that uses 3.5-inch hard drives today. So, if a datacenter operator wants to replace some of its high capacity HDDs with ultra-high capacity SSDs and maximize its storage density per square meter, it will need to use appropriate enclosures. In fact, Nimbus plans to announce high-density reference designs with enclosure partners in the coming weeks, but the company does not elaborate.
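A rough sketch of what a fully populated enclosure would look like follows. It assumes "4U90" means 90 drive bays in 4U of rack space, uses the DC100 power figures quoted in this article, and counts only the drives themselves (no fans, expanders, or power supply losses). The function name and dictionary keys are made up for illustration.

```python
def chassis_totals(bays: int, capacity_tb: float, idle_w: float, active_w: float) -> dict:
    """Aggregate raw capacity and drive-only power for a fully populated chassis."""
    return {
        "raw_capacity_pb": bays * capacity_tb / 1000,  # decimal PB
        "idle_power_w": bays * idle_w,
        "active_power_w": bays * active_w,
    }


if __name__ == "__main__":
    # Assumption: 90 bays, all filled with 100 TB drives rated 10 W idle / 14 W operating.
    totals = chassis_totals(bays=90, capacity_tb=100, idle_w=10, active_w=14)
    print(f"Raw capacity: {totals['raw_capacity_pb']:.1f} PB in 4U")
    print(f"Drive power:  {totals['idle_power_w']:.0f} W idle, "
          f"{totals['active_power_w']:.0f} W operating")
```

Under those assumptions, a single enclosure would hold 9 PB of raw flash while its drives alone draw roughly 0.9 kW at idle and 1.26 kW under load, which illustrates why enclosure power and cooling budgets matter here.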

Nimbus Data ExaDrive DC Specifications

| Specification | 50 TB and 100 TB models |
|---|---|
| Form Factor | 3.5" |
| Interface | SATA 6 Gbps |
| Controller | Four proprietary controller ASICs featuring a management processor |
| NAND | SK Hynix 3D MLC in proprietary packaging |
| Sequential Read | 500 MB/s |
| Sequential Write | 500 MB/s (= 43 TB/day) |
| Random Read (4 KB) IOPS | 100,000 |
| Random Write (4 KB) IOPS | 100,000 |
| Power (Idle) | 10 W |
| Power (Operating) | 14 W |
| ECC | unknown |
| Endurance | Unlimited DWPD for over five years (limited by performance) |
| Uncorrectable Bit Error Rate | <1 bit per 10^17 bits read |
| End-to-End Data Protection | Yes |
| Warranty | Five years |
| Power Loss Protection | Yes |
| Additional Information | Link |

According to Nimbus, 40 million 3.5-inch nearline HDDs with an aggregate capacity of 250 million TBs were shipped in 2017. The company believes that 10% of newly sold nearline capacity will move to NAND flash within three years, which represents massive opportunities for high capacity SSDs like the ExaDrive DC.

Nimbus has already begun sampling its DC-series SSDs with select customers. Commercial shipments are expected to begin this summer. Since Nimbus not only sells drives directly to its more than 200 clients but also makes them available through partners like Viking or Smart, we expect other brands to offer products similar to the ExaDrive DC in the future. So far no formal announcements have been made.

The manufacturer does not disclose pricing of the ExaDrive DC50 and DC100 SSDs, saying only that it will be competitive. Keep in mind that since NAND flash pricing tends to fluctuate, only time will tell what the actual MSRPs of the ExaDrive SSDs will be when they ship this summer.

Related Reading

- Viking Ships UHC-Silo SSDs: 25 - 50 TB Capacity, Custom eMLC, SAS, $0.4 per GB

- Samsung’s PM1633a Now Available: $10k for 15 TB, $6k for 7 TB

- NGD Launches Catalina: a 24 TB PCIe 3.0 x4 SSD with 3D TLC NAND

- Seagate Introduces 10GB/s PCIe SSD And 60TB SAS SSD

- Samsung’s SSD 850 EVO 4 TB Now Available from Major Retailers

Source: Nimbus Data

25 Comments


iter - Monday, March 19, 2018 - link

Does it self-brick after 5 years?

Huge capacity is nice, but it also increases components, therefore decreasing reliability. Most of the SSD drives that fail actually don't run out of PE cycles.

It might make sense if you are somewhere where server space is unreasonably expensive, but for most use cases it will be better to utilize a number of smaller drives instead, that will decrease density, but will increase performance, reliability and data availability.

100 tb is a lot of data to lose, and a lot of data to restore in case of a failure until you get the replacement drive populated and ready for service.

iter - Monday, March 19, 2018 - link

365 * 5 * ~2.2 DWPD comes to over 4000 PE cycles. That might be pushing it when it comes to contemporary high density mlc nand, which is far less durable than it used to be back when it was made on larger process node.

Usage patterns are also very important. A fifo pattern might do well to extend media life as close to the theoretical maximum. But imagine a situation where a substantial amount of the data is permanently stored and those blocks of memory are essentially out of circulation, the drive will now have less memory to service them promised 43 tb of writes per day, increasing wear on the blocks that are in circulation.

That can actually be mitigated by moving stale data around, which will also be beneficial to avoid running into issues with data retention, although this will increase complexity and possibly decrease performance. But without that scheme implemented, wearing the drive out using the promised "unlimited endurance" is actually quite possible.

Ian Cutress - Monday, March 19, 2018 - link

43 TB/day is 0.43 DWPD.

0.43 * 365 * 5 = 785 PE cycles

iter - Monday, March 19, 2018 - link

My bad, I was thinking DPDW instead of DWPD, that makes it significantly more feasible.

FunBunny2 - Monday, March 19, 2018 - link

"That might be pushing it when it comes to contemporary high density mlc nand, which is far less durable than it used to be back when it was made on larger process node."

that would be true, if it were true. 3D NAND is built, typically, on 40-50nm nodes, far, far larger than contemporary node size.

ಬುಲ್ವಿಂಕಲ್ ಜೆ ಮೂಸ್ - Monday, March 19, 2018 - link

"But imagine a situation where a substantial amount of the data is permanently stored"

----------

Flash is not "permanent"

Who wants to trust 100TB of data to a temporary storage medium?

....and even if it was permanent, I would demand a write protection switch to prevent extortionware from encrypting all my files or other malware from permanently deleting my files

This is not the time to rely on temporary storage for this much data

chaos215bar2 - Monday, March 19, 2018 - link

1) Nothing is permanent. That's what your backups are for.

2) If you're going around switching hardware write protection switches on your drives, you're not the target audience here. You clearly aren't managing enough data. If it's a software switch, does it really matter whether it's part of the drive or the OS? Either can potentially be changed by an attacker, and again, that's what your backups are for.

3) To the OP, there's no reason an SSD can't cycle "permanently" stored data onto new blocks occasionally to aid in wear leveling, even if it isn't actually modified.

phoenix_rizzen - Tuesday, March 20, 2018 - link

"365 * 5 * ~2.2 DWPD comes to over 4000 PE cycles. That might be pushing it when it comes to contemporary high density mlc nand, which is far less durable than it used to be back when it was made on larger process node."

Reading the article would be helpful. These are using 3D NAND, which tends to be made on much larger processes (45nm or larger) than planar NAND. IOW, 3D NAND actually has *better* durability than planar NAND.

ಬುಲ್ವಿಂಕಲ್ ಜೆ ಮೂಸ್ - Monday, March 19, 2018 - link

If I were to move a Billion small files to this drive, would it slow down and choke after a few hundred MB?

How many years would it take to fill it up when using small file sizes?

Wouldn't a SATA drive of this size only be useful for huge files?

How long can it move tiny files before it slows to a crawl

How long before it hits 50% of initial speed?

20% ?

10% ?

0% ?

iter - Monday, March 19, 2018 - link

Max file count depends on the file system. It will probably not slow down significantly, as it is already capped kinda slow, it doesn't even fully saturate sata, which is most likely intentional. And given the capacity, its internal components probably operate at a tiny fraction of their peak performance, so it will likely have plenty of load headroom before it comes to performance degradation.