The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Final Thoughts: Do or Do Not - There is no Try

In this review we’ve covered several important topics surrounding CPUs with large numbers of cores: power, frequency, and the need to feed the beast. Running a CPU is like the inverse of a diet – you need to put all the data in to get any data out. The more pie that can be fed in, the better the utilization of what you have under the hood.

AMD and Intel take different approaches to this. We have a multi-die solution compared to a monolithic solution. We have core complexes and Infinity Fabric compared to a MoDe-X based mesh. We have non-uniform memory access compared to uniform memory access. Both are pushing hard on frequency and both are battling against power consumption. AMD supports ECC and more PCIe lanes, while Intel provides a more complete chipset and specialist AVX-512 instructions. Both are competing in the high-end prosumer and workstation markets, promoting high-throughput multi-tasking scenarios as the key to unlocking the potential of their processors.

The Battle

| | | Cores/Threads | Base/Turbo (GHz) | XFR/TB (MHz) | L3 | DRAM 1DPC | PCIe | TDP | Cost (8/10) |
|---|---|---|---|---|---|---|---|---|---|
| AMD | TR 1950X | 16/32 | 3.4/4.0 | +200 | 32 MB | 4x2666 | 60 | 180W | $999 |
| Intel | i9-7900X | 10/20 | 3.3/4.3 | +200 | 13.75 MB | 4x2666 | 44 | 140W | $980 |
| Intel | i7-6950X | 10/20 | 3.0/3.5 | +500 | 25 MB | 4x2400 | 40 | 140W | $1499 |
| AMD | TR 1920X | 12/24 | 3.5/4.0 | +200 | 32 MB | 4x2666 | 60 | 180W | $799 |
| Intel | i7-7820X | 8/16 | 3.6/4.3 | +200 | 11 MB | 4x2666 | 28 | 140W | $593 |

What most users will see on the specification sheet is this: compared to the Core i9-7900X, the AMD Ryzen Threadripper 1950X has 6 more cores, 16 more PCIe lanes, and ECC support for roughly the same price. Compared to the upcoming sixteen-core Core i9-7960X, the Threadripper 1950X still has 16 more PCIe lanes and ECC support, but is now substantially cheaper.

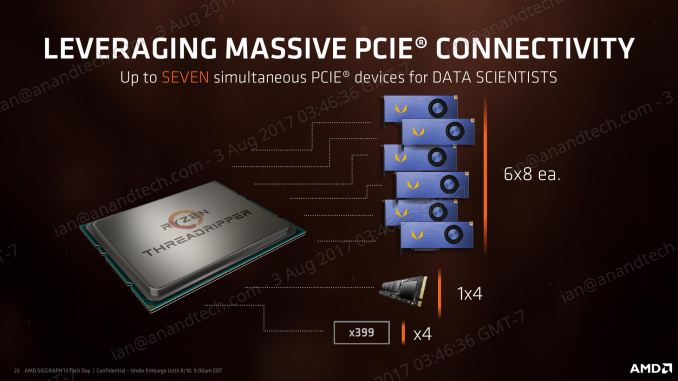

On the side of the 1920X, users will again see more cores, ECC support, and over double the number of PCIe lanes compared to the Core i7-7820X, for a difference of about $200. Simply put, if there is hardware that needs PCIe lanes, AMD has the solution.
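The spec-sheet arithmetic in the two comparisons above can be checked with a short sketch. The figures are copied from the table; the `Chip` class and `compare` helper are illustrative names for this example only, and the upcoming Core i9-7960X is left out because the table gives no numbers for it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chip:
    name: str
    cores: int
    pcie_lanes: int
    price_usd: int


# Figures copied from "The Battle" table (prices as of 8/10).
CHIPS = {
    "TR 1950X": Chip("TR 1950X", 16, 60, 999),
    "i9-7900X": Chip("i9-7900X", 10, 44, 980),
    "TR 1920X": Chip("TR 1920X", 12, 60, 799),
    "i7-7820X": Chip("i7-7820X", 8, 28, 593),
}


def compare(a: Chip, b: Chip) -> dict:
    """Return how chip `a` differs from chip `b` on the headline specs."""
    return {
        "extra_cores": a.cores - b.cores,
        "extra_pcie_lanes": a.pcie_lanes - b.pcie_lanes,
        "price_difference_usd": a.price_usd - b.price_usd,
        "pcie_lane_ratio": a.pcie_lanes / b.pcie_lanes,
    }


if __name__ == "__main__":
    for amd, intel in (("TR 1950X", "i9-7900X"), ("TR 1920X", "i7-7820X")):
        print(amd, "vs", intel, compare(CHIPS[amd], CHIPS[intel]))
```

On these list prices the 1950X costs $19 more than the i9-7900X, and the 1920X costs $206 more than the i7-7820X while offering a little over twice the PCIe lanes.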

In our performance benchmarks, there are multiple angles to describe the results we have collected. AMD is still behind when it comes to raw IPC, but plays competitively in frequency. Intel still wins the single-threaded tasks, especially those that rely on DRAM latency. AMD pulls ahead by a large amount when anything needs serious threads, and most of the time the memory arrangement is not as much of an Achilles heel as might be portrayed. If a user has a workload that scales, AMD is bringing the cores to help it scale as wide as possible.

Despite Threadripper's design arguably being better tuned to highly threaded workstation-like workloads, the fact that it still has high clocks compared to Ryzen 7 means that gaming is going to be a big part of the equation too. In its default Creator Mode, Threadripper’s gaming performance is middling at best: very few games can use all those threads and the variable DRAM latency means that the cores are sometimes metaphorically tripping over themselves trying to talk to each other and predict when work will be done. To solve this, AMD is offering Game Mode, which cuts the number of cores and focuses memory allocations to the DRAM nearest to the core (at the expense of peak DRAM bandwidth). This has the biggest effect on minimum frame rates rather than average frame rates, and affects 1080p more than 4K, which is perhaps the opposite end of the spectrum to what a top-level enthusiast would be gaming on. In some games, Game Mode makes no difference, while in others it can open up new possibilities. We have a full article on Game Mode here.

If I were to turn around and say that Threadripper CPUs were not pure gaming CPUs, it would annoy a fair lick of the tech audience. The data is there – it’s not the best gaming CPU. But AMD would spin it like this: it allows the user to game, to stream, to watch and to process all at the same time.

You need a lot to do in order to fill 16 cores to the max, and for those that do, it’s a potential winner. For anyone that needs hardcore throughput such as transcoding, decoding, or rendering in Blender, Cinema 4D or a ray-tracer, it’s a great CPU to have. For multi-GPU or multi-storage aficionados, or the part of the crowd that wants to cram six PCIe 3.0 x8 FPGAs into a system, AMD has you covered.

Otherwise, as awesome as having 16 cores in a consumer processor is – and for that matter as awesome as the whole Threadripper name is in a 90s hardcore technology kind of way – Threadripper's threads are something of a mixed blessing in consumer workloads. A few well-known workloads can fully saturate the chip – video encoding being the best example – and a number of others can't meaningfully get above a few threads. Some of this has been due to the fact that for the last 8 years, the bread-and-butter of high-end consumer processors has been Intel's quad-core chips. But more than that, pesky Amdahl's Law is never too far away as core counts increase.
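To put a number on Amdahl's Law, here is a minimal sketch of the standard formula, speedup = 1 / ((1 - p) + p / n), where p is the fraction of a workload that can run in parallel and n is the core count. The parallel fractions used below are illustrative assumptions, not measurements from this review.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup over one core for a workload with the given parallel fraction."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Hypothetical workloads: half parallel, 95% parallel, fully parallel.
    for p in (0.50, 0.95, 1.00):
        s8 = amdahl_speedup(p, 8)
        s16 = amdahl_speedup(p, 16)
        print(f"p={p:.2f}: 8 cores -> {s8:.2f}x, 16 cores -> {s16:.2f}x")
```

Under these assumptions, a workload that is only half parallel gains almost nothing from going from 8 to 16 cores (about 1.78x versus 1.88x), while a 95% parallel one still falls well short of 16x. This ideal model ignores memory latency and clock speed differences, so real results can be worse.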

The wildcard factor here – and perhaps the area where AMD is treading the most new ground – is in the non-uniform allocation of the cores. NUMA has never been a consumer concern until now, so AMD gets to face the teething issues of that introduction head on. Having multiple modes is a very smart choice, especially since there's a good bit of software out there that isn't fully NUMA-aware, but can fill the CPU if NUMA is taken out of the equation and the CPU is treated as a truly monolithic device. Less enjoyable however is the fact that switching modes requires a reboot; you can have your cake and eat it too thanks to mode switching, but it's a very high friction activity. In the long-term, NUMA-aware code would negate the need for local vs distributed if the code would pin to the lowest latency memory automatically. But in lieu of that, AMD has created the next best thing, as even in an ideal world NUMA is not without its programming challenges, and consequently it's unlikely that every program in the future will pin its own memory correctly.
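As a rough illustration of what it means for software to place itself on one NUMA node, here is a minimal Linux-only sketch. It assumes the kernel exposes each node's CPU list under `/sys/devices/system/node/`, and the helper names `parse_cpulist` and `pin_to_node` are hypothetical. It only restricts which CPUs the process runs on; under Linux's default local allocation policy, memory the process touches afterwards will normally come from that node, but explicit memory binding (for example via libnuma or `numactl`) is not shown.

```python
import os


def parse_cpulist(text: str) -> set:
    """Parse a Linux cpulist string such as "0-7,16-23" into a set of CPU ids."""
    cpus = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            first, last = part.split("-", 1)
            cpus.update(range(int(first), int(last) + 1))
        else:
            cpus.add(int(part))
    return cpus


def pin_to_node(node: int) -> set:
    """Restrict the calling process to the CPUs of one NUMA node (Linux only)."""
    path = f"/sys/devices/system/node/node{node}/cpulist"
    with open(path) as f:
        cpus = parse_cpulist(f.read())
    os.sched_setaffinity(0, cpus)  # pid 0 means "the calling process"
    return cpus


if __name__ == "__main__":
    # Hypothetical cpulist for one die; the real layout depends on the system.
    print(sorted(parse_cpulist("0-7,16-23")))
```

Calling `pin_to_node(0)` at startup would keep the process on the first node's cores. Even in this small form it shows why the article expects NUMA handling to stay a burden on developers: the program has to discover the topology and choose a node itself.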

In that respect, a NUMA-style CPU is currently a bit of a liability in the consumer space, as it's very good for certain extreme workloads but not as well balanced as a single Ryzen. Costs aside, this means that Threadripper isn't always a meaningful performance upgrade over Ryzen. And this isn't a catch unique to AMD – for the longest time, Intel's HEDT products have required choosing between core counts and top-tier single-threaded performance – but the product calculus has become even more complex with Threadripper. There are trade-offs to scaling a CPU to so many cores, and Threadripper bears those costs. So for the consumer market it's primarily aimed at, it's more important than ever to consider your planned workloads. Do you need faster Handbrake encoding or smoother gameplay? Can you throw enough threads at Threadripper to keep the beast occupied, or do you only occasionally need more than Ryzen 7's existing 8 cores?

AMD has promised that the socket will live for at least two generations, so Threadripper 2000-series when it comes along should drop straight in after a BIOS update. What makes it interesting is that with the size of the socket and the silicon configuration, AMD could easily make those two ‘dead’ silicon packages into ‘real’ silicon packages, and offer 32 cores. (Although those extra cores would always be polling at far memory speeds).

This is the Core Wars. A point goes to the first chip that calculates the Kessel run in under twelve parsecs.

347 Comments


Zoeff - Thursday, August 10, 2017 - link

Yeeeees! Thanks for the review! I was hoping there'd be an embargo lift at this hour. :D

Zingam - Sunday, August 13, 2017 - link

The best CPUs for MineSweeper in 2017 in a single article!!!!

NikosD - Monday, August 14, 2017 - link

Anandtech is simply wrong regarding Game mode or "Legacy Compatibility Mode" as you prefer to call it and make jokes about it.

It seems that you don't know what ALL other reviewers say that Game mode doesn't set SMT off, but it disables one die.

So, Threadripper doesn't become a 16C/16T CPU after enabling Game mode as you say, but a 8C/16T CPU like ALL other reviewers say.

Go read Tom's Hardware which says that Game mode executes "bcdedit /set numproc XX" in order to cut 8 cores and shrink the CPU to one die (8C/16T) but because that's a software restriction the memory and PCIe controller of the second die is still alive, giving Quad Channel memory support and full 60+4 PCIe lanes even in Game mode.

And you thought you are smart and funny regarding your Game mode comments...

monglerbongler - Tuesday, July 10, 2018 - link

real renderers buy epyc or xeon. Either they have the money because its corporate money, they have the money because it comes from plebs paying someone comission/subscription money, or they have the money because they are plebs buying pre-built workstations.

craptasticlemon - Wednesday, September 13, 2017 - link

Here's the real Threadripper review:

AMD thrashes Intel i9 in every possible way, smushes it's puny ass into the dirt, and dances on the grave for the coup de gras. It is very entertaining to watch the paid Intel lackeys here try to paper over what is clearly a superior product. Keep up with the gaming scores guys, like anyone is buying this for gaming. I for one am looking forward to those delicious 40% faster render times, for the same price as the Intel space heater.

alysdexia - Thursday, April 18, 2019 - link

its, shit-head

swifter

Dr. Swag - Thursday, August 10, 2017 - link

In paragraph two you say Ryzen 3 has double the threads of i3, I think you mean to say double the cores :)

IanHagen - Thursday, August 10, 2017 - link

Not trying to nitpick or imply anything but... There is a logical reason for Threadripper getting five pages of gaming performance review and Skylake-X not even appearing on the charts more than a month after it was reviewed?

Ian Cutress - Thursday, August 10, 2017 - link

Bottom of page one.

IanHagen - Thursday, August 10, 2017 - link

With all due respect Mr. Cutress, "circumstances beyond our control" and "odd BIOS/firmware gaming results" didn't prevent anyone from bashing Ryzen for its gaming performance on its debut.