Project Tango Demoed with Qualcomm at SIGGRAPH 2016

by Joshua Ho on July 27, 2016 7:00 AM EST

Project Tango is probably not new to anyone reading this, as we’ve discussed it before, but over the past few years Google has been hard at work turning positional tracking and localization into a consumer-ready application. While there was an early tablet available with an Nvidia Tegra SoC inside, it had a number of issues with both hardware and software. As the Tegra SoC was not really designed for the workloads that Project Tango puts on a mobile device, much of the work was done on the GPU and CPU, with offloading to dedicated coprocessors such as STMicroelectronics’ Cortex-M3 MCUs for sensor hub and timestamp functionality, computer vision accelerators like a Movidius VPU, and other chips that ultimately increased BOM cost and board area requirements.

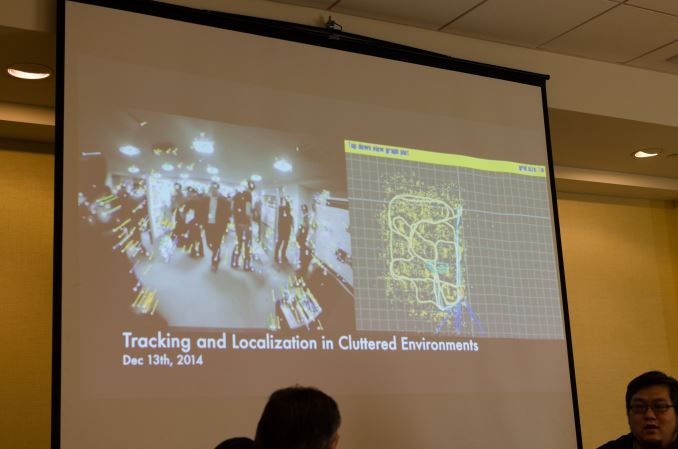

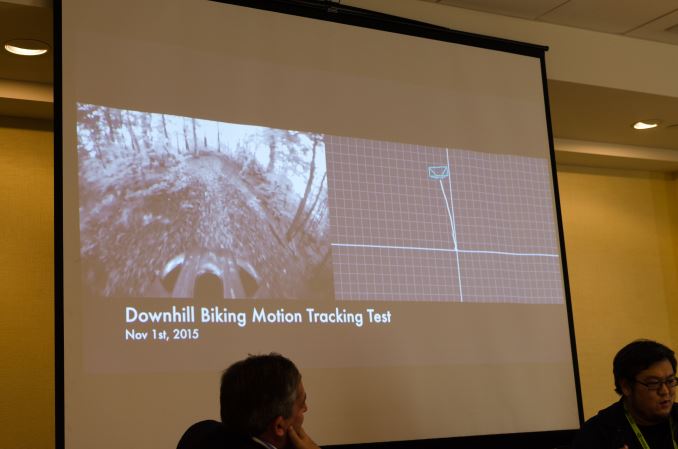

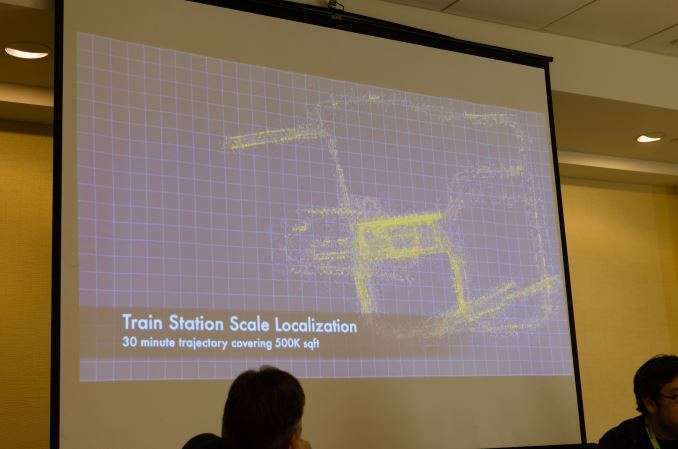

At SIGGRAPH today Google recapped some of the algorithmic progress we saw at Google I/O, particularly the polish on Tango’s sensor fusion, feature tracking, modeling, texturing, and motion tracking. Anyone who has researched how well smartphones can act as inertial navigation devices will probably know that it’s basically impossible to avoid massive integration error, which means the device needs constant location updates from an outside source to avoid drifting.
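To make the integration-error point concrete, here is a minimal Python sketch (not anything Google showed) that double-integrates a stationary accelerometer whose only output is a constant bias. The bias of 0.01 m/s² (about 1 mg) and the 100 Hz sample rate are hypothetical values chosen for illustration; the point is that position error grows with the square of time, roughly ½·b·t².

```python
def bias_position_error(bias_mps2: float, dt_s: float, steps: int) -> float:
    """Double-integrate a stationary accelerometer whose only signal is a
    constant bias, returning the resulting position error in meters."""
    velocity = 0.0  # m/s
    position = 0.0  # m
    for _ in range(steps):
        accel = bias_mps2             # true acceleration is zero; the bias is all we measure
        velocity += accel * dt_s      # first integration: bias becomes velocity error
        position += velocity * dt_s   # second integration: velocity error becomes position error
    return position


if __name__ == "__main__":
    bias = 0.01  # m/s^2, roughly 1 mg; hypothetical value for illustration
    dt = 0.01    # 100 Hz sample rate; also an assumption
    for seconds in (1, 10, 60):
        err = bias_position_error(bias, dt, round(seconds / dt))
        closed_form = 0.5 * bias * seconds ** 2
        print(f"{seconds:>3} s: {err:8.3f} m (0.5*b*t^2 = {closed_form:.3f} m)")
```

With these assumed numbers the error is only a few millimeters after one second but about 18 m after one minute, which is why a purely inertial phone needs an external reference to stay on track.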

With Tango, the strategy for avoiding this problem works at multiple levels. At a high level, sensor fusion combines camera data and inertial data to cancel out noise from both systems. On the camera side, feature tracking on the cameras combined with depth sensing on the depth-sensing camera helps the device visualize its environment for both mapping and augmented reality applications. The combination of a traditional camera and a fisheye camera also allows for a sort of distortion correction and additional sanity checks on depth using parallax, although if you’ve ever tried a dual-lens solution on a phone you can probably guess that this distance figure isn’t accurate enough to rely on completely. These are hard engineering problems, so only recently have we actually seen programs that can do all of these things reliably. Google disclosed that without local anchor points stored in memory, the system drifts by about 1 meter for every 100 meters traversed (roughly 1%), so if you never return to previously mapped areas the device will eventually accumulate a non-trivial amount of error. However, if you do return to a previously mapped area, Tango’s algorithms can reset the location tracking and eliminate the accumulated error.
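Google did not detail Tango’s fusion algorithm here, so the following is only a generic illustration of the idea: a one-dimensional Kalman filter that dead-reckons with a deliberately biased inertial velocity and corrects with a camera-derived position fix whenever one is available. All names, noise variances, and the 5% velocity bias are hypothetical, and a real visual-inertial system estimates full 3D pose rather than a single coordinate.

```python
from typing import Optional, Tuple


def kalman_step(x: float, p: float, imu_velocity: float, dt: float,
                q: float, z: Optional[float], r: float) -> Tuple[float, float]:
    """One predict/update cycle of a scalar Kalman filter.

    x, p: current position estimate (m) and its variance (m^2)
    imu_velocity: velocity from the inertial side (m/s), possibly biased
    q: process noise variance added per step (m^2)
    z, r: camera-derived position fix (m) and its variance; z=None means no fix
    """
    # Predict: dead-reckon forward; uncertainty grows every step.
    x_pred = x + imu_velocity * dt
    p_pred = p + q
    if z is None:
        return x_pred, p_pred
    # Update: weight the camera fix by how uncertain the prediction has become.
    k = p_pred / (p_pred + r)
    return x_pred + k * (z - x_pred), (1.0 - k) * p_pred


def simulate(steps: int, use_camera: bool) -> Tuple[float, float, float]:
    """Return (true position, estimated position, estimate variance)."""
    true_v, imu_v, dt = 1.0, 1.05, 0.1  # hypothetical: IMU velocity reads 5% high
    q, r = 0.01, 0.04                   # hypothetical noise variances
    x, p, true_x = 0.0, 0.0, 0.0
    for _ in range(steps):
        true_x += true_v * dt
        z = true_x if use_camera else None  # idealized, noise-free camera fix
        x, p = kalman_step(x, p, imu_v, dt, q, z, r)
    return true_x, x, p


if __name__ == "__main__":
    for use_camera in (False, True):
        true_x, est_x, var = simulate(100, use_camera)
        print(f"camera={use_camera}: error {abs(est_x - true_x):.3f} m, variance {var:.3f} m^2")
```

Without camera fixes, the estimate drifts by the full 0.5 m bias over 10 m of travel and its variance grows with every step; with fixes, both the error and the variance settle at small steady-state values. Loosely, relocalizing against a previously mapped area plays a similar role to the camera fix here: an external reference that bounds accumulated drift.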

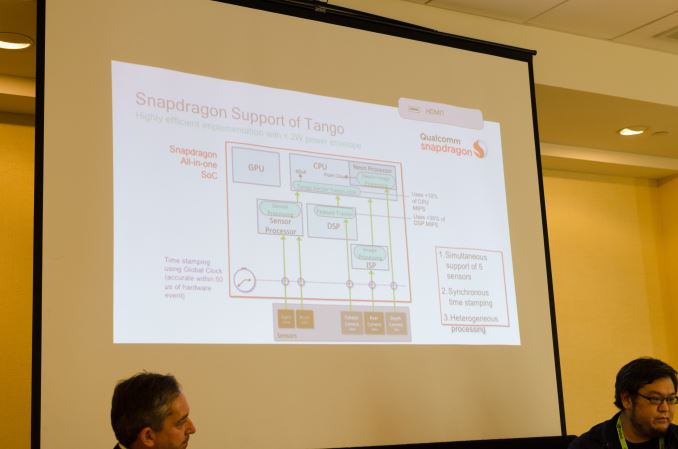

With the Lenovo Phab 2 Pro, Tango is finally coming to fruition in a consumer-facing way, and Google has integrated Tango APIs into Android for the Nougat release this fall. Of course, software is only one part of the equation: it’s going to be very difficult to justify supporting Tango capabilities if doing so requires all of the previously mentioned coprocessors in addition to the depth-sensing and fisheye camera sensors.

In order to enable Tango without cutting into battery size or general power efficiency, Qualcomm has been working with Google to run the Tango API entirely on the Snapdragon SoC rather than on dedicated coprocessors. Snapdragon SoCs generally have a global synchronous clock, but Tango pushes it to its full extent by using this clock across multiple sensors to enable the previously mentioned sensor fusion. In addition, processing is done on the Snapdragon 652 or 820’s ISP and Hexagon DSP, as well as the integrated sensor hub with its low power island. The end result is that enabling the Tango APIs requires no processing on the GPU and relatively minimal processing on the CPU, so Tango-enabled applications can run without hitting thermal limits, leaving headroom for more advanced applications built on the Tango APIs. Qualcomm claimed that Tango uses less than 10% of CPU cycles on the S652 and S820, and less than 35% of DSP cycles. In further discussion, Qualcomm noted that using the Hexagon Vector Extensions would further cut down on CPU usage, and that much of the current CPU usage was on the NEON vector units.
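Qualcomm did not say how the shared clock is consumed downstream, but one common reason a single timebase matters for sensor fusion is that IMU samples can be interpolated to the exact instant a camera frame was captured. The following minimal sketch assumes both sensors are stamped in nanoseconds by the same clock; the function name, the sample values, and the 1 kHz gyro rate are hypothetical.

```python
from bisect import bisect_left
from typing import List, Tuple


def sample_at(t_ns: int, samples: List[Tuple[int, float]]) -> float:
    """Linearly interpolate a sensor channel at time t_ns.

    `samples` is a time-sorted list of (timestamp_ns, value) pairs stamped by
    the SAME clock as t_ns; with independent clocks, an unknown offset would
    shift every interpolated value.
    """
    times = [t for t, _ in samples]
    i = bisect_left(times, t_ns)
    if i == 0:             # at or before the first sample: clamp
        return samples[0][1]
    if i == len(samples):  # after the last sample: clamp
        return samples[-1][1]
    (t0, v0), (t1, v1) = samples[i - 1], samples[i]
    frac = (t_ns - t0) / (t1 - t0)  # t1 > t0 is guaranteed by bisect_left
    return v0 + frac * (v1 - v0)


if __name__ == "__main__":
    # Hypothetical 1 kHz gyro readings (rad/s) and a camera frame stamped at 2.5 ms.
    gyro = [(0, 0.0), (1_000_000, 0.1), (2_000_000, 0.2), (3_000_000, 0.4)]
    frame_ts = 2_500_000
    print(f"gyro rate at frame: {sample_at(frame_ts, gyro):.3f} rad/s")
```

If the camera and IMU had separate clocks with an unknown offset, the same interpolation would silently read the gyro at the wrong instant, which is the kind of error a global synchronous clock avoids.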

To show how all of this translates in practice, Qualcomm showed off the Lenovo Phab 2 Pro with some preloaded demo apps, like a home improvement application from Lowe's that supports size measurements and live previews of appliances in the home with a fairly high level of detail. The quality of the augmented reality visualization is actually shockingly good: the device can differentiate between walls and the floor, so you can’t just stick random things in random places, and object placement is stable enough that there’s none of the strange floatiness that often accompanies augmented reality. Objects are redrawn fast enough that camera motion results in seamless, fluid motion of virtual objects, and in general I found it difficult to see any real issues in execution.

While Project Tango still seemed to have some bugs to iron out and some features and polish to add, it looks like the ecosystem has now progressed to the point where Tango API features are basically ready for consumers. Environment tracking for true six-degree-of-freedom movement surely has implications for mobile VR headsets as well, and given that only two extra cameras are needed to enable Tango API features, it shouldn’t be that difficult for high-end devices to integrate them, although due to the size of these sensors Tango may be targeted more at phablets than at regular smartphones.

13 Comments

K_Space - Wednesday, July 27, 2016

Given that the military/intelligence sector typically has a significant lead over the consumer sector, I wonder how long the NSA and others have had access to these or better functions. Out of interest, is there an Apple equivalent to this?

JoeyJoJo123 - Wednesday, July 27, 2016

I think you're a bit confused here or perhaps misworded your post. No, they don't have a significant lead; the contractors that military/intelligence government organizations hire have a significant lead, and due to contractual agreements, the government then owns these designs. It wouldn't be fair to say that the general military sector has a significant lead when a typical marine in training on some naval base in Alaska might just be using a government laptop that's 6 years old, and only because that's all the government had to offer.

The reason I make this distinction is that it's easy for a reader to read your comment and believe that the government is this high-tech body, far outside the reach of normal people. In reality, the vast majority of government workers are still using HP and Dell workstations half a decade old or older, including first responders like 911 and fire departments.

tl;dr

government is incredibly large, slow, and low tech.

companies that want to get contracted by the government (think Boeing, etc) are super high tech.

Big difference.

BrokenCrayons - Wednesday, July 27, 2016

Large institutions, corporations included, are behind the cutting edge when it comes to their own infrastructure and information technology. While those companies are indeed developing advanced devices that do amazing things, their employees usually perform daily work on relatively old hardware supported by older network plumbing and dated server farms. The corporate world faces the same problems government institutions deal with, and some companies may actually be on longer hardware life cycles than their government counterparts because they are profit-driven.

tuxRoller - Wednesday, July 27, 2016

Well said. That mirrors my experience.

K_Space - Thursday, July 28, 2016

Nicely worded, and that is what I meant! As a public sector worker I can tell you we're the last people to shift up a gear, and only if we have to. A great example was the move from XP to 7, about 18 months ago now I think, simply because MS no longer patched the OS.

Dribble - Wednesday, July 27, 2016

MS HoloLens already does this, so it's not new in the consumer space either.

extide - Wednesday, July 27, 2016

Not quite the same though...

name99 - Wednesday, July 27, 2016

What exactly do you imagine it is that HoloLens "already does"? The point of Tango is not AR or user gestures; it is the use of computer vision to place one's 3D location in space without traditional positioning technologies like GPS.

Regardless of whether this is a worthwhile idea as a business project (as opposed to a research project, since Bluetooth beacons or other technologies like projected IR grids may make the point moot before it's really perfected), as far as I know this is not part of the Kinect/HoloLens suite of capabilities.

There is a second point, which is that Google will presumably situate this within a larger framework of Indoor Maps. No one has a useful indoor mapping story right now. Apple talked about it for iOS 8 but shipped nothing that year or the next (and hasn't mentioned it for iOS 10), and Google has shipped nothing either, except that both support a few very large indoor areas like malls through traditional location technologies. Given MS' general inability to actually execute over the past few years, it seems unlikely that MS will ship a viable version of such a large product before Apple and Google.

Michael Bay - Thursday, July 28, 2016

>desperate crapple shilling
>obligatory antims bullshit

I knew it was you before checking the nickname, dude. ^_^

ats - Thursday, July 28, 2016

To put this in perspective, the military has hardware capable of traveling 13,000 km with an error of under 200 m without using GPS, and other hardware that can travel 12,000 km with an error of under 90 m in a GPS-denied environment. Both of these, of course, are ICBMs/SLBMs. ICBMs/SLBMs tend to have the most advanced and, in some regards, the most complicated inertial navigation systems in the world. None of the ICBMs have even a fraction of the computing power of a modern smartphone, but what they do have is incredibly precise measuring instruments. In addition, they've been using images to counter error for decades (that's what astro-inertial guidance is: you look at star positions and their movement to help calibrate out error).