The AMD Radeon R9 Fury Review, Feat. Sapphire & ASUS

by Ryan Smith on July 10, 2015 9:00 AM EST

Meet The ASUS STRIX R9 Fury

Our second card of the day is ASUS’s STRIX R9 Fury, which arrived just in time for the article cutoff. Unlike Sapphire, ASUS is releasing just a single card, the STRIX-R9FURY-DC3-4G-GAMING.

Radeon R9 Fury Launch Cards

| | ASUS STRIX R9 Fury | Sapphire Tri-X R9 Fury | Sapphire Tri-X R9 Fury OC |
|---|---|---|---|
| Boost Clock | 1000MHz / 1020MHz (OC) | 1000MHz | 1040MHz |
| Memory Clock | 1Gbps HBM | 1Gbps HBM | 1Gbps HBM |
| VRAM | 4GB | 4GB | 4GB |
| Maximum ASIC Power | 216W | 300W | 300W |
| Length | 12" | 12" | 12" |
| Width | Double Slot | Double Slot | Double Slot |
| Cooler Type | Open Air | Open Air | Open Air |
| Launch Date | 07/14/15 | 07/14/15 | 07/14/15 |
| Price | $579 | $549 | $569 |

With only a single card, ASUS has decided to split the difference between reference and OC cards and offer one card with both features. Out of the box the STRIX is a reference clocked card, with a GPU clockspeed of 1000MHz and memory rate of 1Gbps. However ASUS also officially supports an OC mode, which when accessed through their GPU Tweak II software bumps up the clockspeed by 20MHz to 1020MHz. The gesture is appreciated, but with OC mode offering sub-2% performance gains there’s not much to say about performance; with such a small overclock the gains are pretty trivial in the long run. Otherwise at stock the card should see performance similar to Sapphire’s reference clocked R9 Fury card.
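To put the size of that overclock in perspective, here is a minimal sketch of the arithmetic using the boost clocks from the launch card table. The helper name `clock_uplift_percent` is ours, purely for illustration, and a clockspeed increase is only an upper bound on what a game is likely to gain, not a measured result.

```python
def clock_uplift_percent(base_mhz: float, oc_mhz: float) -> float:
    """Return the relative clockspeed increase as a percentage."""
    return (oc_mhz - base_mhz) / base_mhz * 100.0


# Boost clocks taken from the launch card table in this review.
asus_oc = clock_uplift_percent(1000, 1020)      # ASUS STRIX OC mode
sapphire_oc = clock_uplift_percent(1000, 1040)  # Sapphire Tri-X OC

print(f"ASUS STRIX OC mode: +{asus_oc:.1f}% clockspeed")
print(f"Sapphire Tri-X OC:  +{sapphire_oc:.1f}% clockspeed")
```

Since real-world scaling is rarely perfectly linear with clockspeed, a 2% clock bump translating into sub-2% performance gains is what one would expect.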

Diving right into matters, for their R9 Fury card ASUS has opted to go with a fully custom design, pairing up a custom PCB with one of the company’s well-known DirectCU III coolers. The PCB itself is quite large, measuring 10.6” long and extending a further 0.6” above the top of the I/O bracket. Unfortunately we’re not able to get a clear shot of the PCB since we need to maintain the card in working order, but judging from the design ASUS has clearly overbuilt it for greater purposes. There are voltage monitoring points at the front of the card and unpopulated positions that look to be for switches. Consequently I wouldn’t be all that surprised if we saw this PCB used in a higher end card in the future.

Moving on, since this is a custom PCB ASUS has outfitted the card with their own power delivery system. ASUS is using a 12 phase design here, backed by the company’s Super Alloy Power II discrete components. With their components and their “auto-extreme” build process ASUS is looking to make the argument that the STRIX is a higher quality card, and while we’re not discounting those claims they’re more or less impossible to verify, especially compared to the significant quality of AMD’s own reference design.

Meanwhile it comes as a bit of a surprise that even with such a high phase count, ASUS’s default power limits are set relatively low. We’re told that the card’s default ASIC power limit is just 216W, and our testing largely concurs with this. The overall board TBP is still going to be close to AMD’s 275W value, but this means that ASUS has clamped down on the bulk of the card’s TDP headroom by default. The card has enough headroom to sustain 1000MHz in all of our games (which is what really matters), but because of the lower power limit FurMark runs at a significantly lower frequency than it does on any R9 Fury series card built on AMD’s PCB. For the same reason ASUS also bumps up the power limit by 10% when in OC mode, to make sure there’s enough headroom for the higher clockspeeds. Ultimately this doesn’t have a performance impact that we can find, and outside of FurMark it’s unlikely to save any power, but given what Fiji is capable of with respect to both performance and power consumption, this is an interesting design choice on ASUS’s part.
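As a rough illustration of what those limits work out to, the sketch below runs the numbers. It assumes the 10% OC mode bump applies to the 216W ASIC limit (we have not confirmed the exact base ASUS uses), and it takes the 300W figure for cards on AMD’s PCB from the launch card table; the constant names are ours.

```python
# Figures from the review; the variable names are illustrative only.
DEFAULT_ASIC_LIMIT_W = 216.0    # ASUS STRIX default ASIC power limit
OC_MODE_BUMP = 0.10             # +10% power limit in OC mode
REFERENCE_ASIC_LIMIT_W = 300.0  # Sapphire cards built on AMD's PCB

# Assumption: the 10% bump is applied to the ASIC power limit itself.
oc_limit_w = round(DEFAULT_ASIC_LIMIT_W * (1 + OC_MODE_BUMP), 1)

# How much of the reference ASIC limit ASUS allows by default.
share_of_reference = DEFAULT_ASIC_LIMIT_W / REFERENCE_ASIC_LIMIT_W * 100

print(f"OC mode ASIC limit: {oc_limit_w}W")
print(f"Default limit vs. reference: {share_of_reference:.0f}%")
```

Under that assumption the OC mode limit still lands well short of the 300W that the AMD-PCB cards are allowed, which is consistent with FurMark being the only place the difference shows up.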

PCB aside, let’s cover the rest of the card. While the PCB is only 10.6” long, ASUS’s DirectCU III cooler is larger yet, slightly overhanging the PCB and extending the total length of the card to 12”. Here ASUS uses a collection of stiffeners, screws, and a backplate to reinforce the card and support the bulky heatsink, giving the resulting card a very sturdy design. In a first for any design we’ve seen thus far, the backplate is actually larger than the card, running the full 12” to match up with the heatsink, and like the Sapphire backplate includes a hole immediately behind the Fiji GPU to allow the many capacitors to better cool. Meanwhile builders with large hands and/or tiny cases will want to make note of the card’s additional height; while the card will fit most cases fine, you may want a magnetic screwdriver to secure the I/O bracket screws, as the additional height doesn’t leave much room for fingers.

For the STRIX ASUS is using one of the company’s triple-fan DirectCU III coolers. Starting at the top of the card with the fans, ASUS calls the fans on this design their “wing-blade” fans. Measuring 90mm in diameter, ASUS tells us that this fan design has been optimized to increase the amount of air pressure on the edge of the fans.

Meanwhile the STRIX also implements ASUS’s variation of zero fan speed idle technology, which the company calls 0dB Fan technology. ASUS was one of the first companies to implement zero fan speed idling, and the STRIX series has become well known for this feature; the STRIX R9 Fury is no exception. Thanks to the card’s large heatsink ASUS is able to power down the fans entirely while the card is near or at idle, allowing the card to be virtually silent under those scenarios. In our testing this STRIX card has its fans kick in at 55C and shut off again at 46C.

ASUS STRIX R9 Fury Zero Fan Idle Points

| | GPU Temperature | Fan Speed |
|---|---|---|
| Turn On | 55C | 28% |
| Turn Off | 46C | 25% |
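The gap between the turn on and turn off points is a classic hysteresis band, which keeps the fans from rapidly cycling when the GPU hovers around a single threshold. The sketch below models that behavior with the temperatures we measured; it is not ASUS’s firmware logic, the class name `ZeroFanIdle` is hypothetical, and treating the thresholds as inclusive is our assumption.

```python
from dataclasses import dataclass


@dataclass
class ZeroFanIdle:
    """Toy model of zero fan idle with a hysteresis band."""
    turn_on_c: float = 55.0   # measured turn on point
    turn_off_c: float = 46.0  # measured turn off point
    fans_on: bool = False

    def update(self, gpu_temp_c: float) -> bool:
        """Feed in a temperature sample and return whether the fans spin."""
        if not self.fans_on and gpu_temp_c >= self.turn_on_c:
            self.fans_on = True
        elif self.fans_on and gpu_temp_c <= self.turn_off_c:
            self.fans_on = False
        # Between the two thresholds the previous state is kept.
        return self.fans_on


controller = ZeroFanIdle()
trace = [40, 50, 55, 60, 50, 47, 46, 50]  # made-up temperature samples
states = [controller.update(t) for t in trace]

for temp, on in zip(trace, states):
    print(f"{temp}C -> fans {'on' if on else 'off'}")
```

Note how the same 50C reading leaves the fans off while the card is warming up but on while it is cooling down; that state dependence is the whole point of having two thresholds.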

As for the DirectCU III heatsink on the STRIX, as one would expect ASUS has gone with a large and very powerful heatsink to cool the Fiji GPU underneath. The aluminum heatsink runs just shy of the full length of the card and features 5 different copper heatpipes, the largest of the two coming in at 10mm in diameter. The heatpipes in turn make almost direct contact with the GPU and HBM, with ASUS having installed a thin heatspreader of sorts to compensate for the uneven nature of the GPU and HBM stacks.

In terms of cooling performance AMD’s Catalyst Control Center reports that ASUS has capped the card at 39% fan speed, though in our experience the card actually tops out at 44%. At this level the card will typically reach 44% by the time it hits 70C, at which point temperatures will rise a bit more before the card reaches homeostasis. We’ve yet to see the card need to ramp past 44%, though if the temperature were to exceed the temperature target we expect that the fans would start to ramp up further. Without overclocking the highest temperature measured was 78C for FurMark, while Crysis 3 topped out at a cooler 71C.

Moving on, ASUS has also adorned the STRIX with a few cosmetic adjustments of their own. The top of the card features a backlit STRIX logo, which pulsates when the card is turned on. And like some prior ASUS cards, there are LEDs next to each of the PCIe power sockets to indicate whether there is a full connection. On that note, with the DirectCU III heatsink extending past the PCIe sockets, ASUS has once again flipped the sockets so that the tabs face the rear of the card, making it easier to plug and unplug the card even with the large heatsink.

Since this is an ASUS custom PCB, it also means that ASUS has been able to work in their own Display I/O configuration. Unlike the AMD reference PCB, for their custom PCB ASUS has retained a DL-DVI-D port, giving the card a total of 3x DisplayPorts, 1x HDMI port, and 1x DL-DVI-D port. So buyers with DL-DVI monitors not wanting to purchase adapters will want to pay special attention to ASUS’s card.

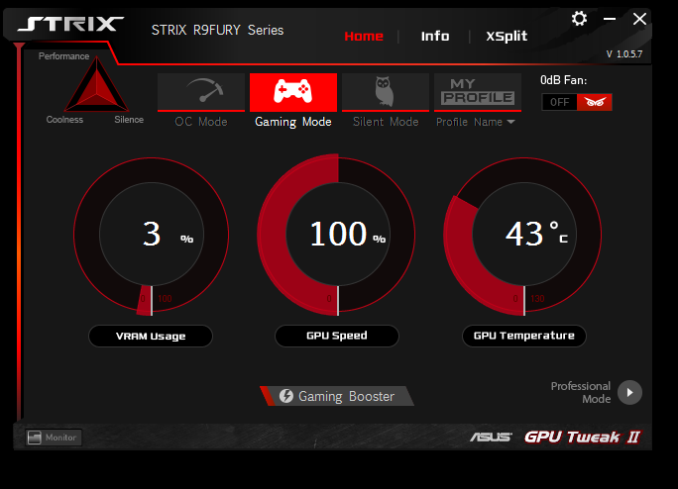

Finally, on the software front, the STRIX includes the latest iteration of ASUS’s GPU Tweak software, which is now called GPU Tweak II. Since the last time we took a look at GPU Tweak the software has undergone a significant UI overhaul, with ASUS giving it more distinct basic and professional modes. It’s through GPU Tweak II that the card’s OC mode can be accessed, which bumps up the card’s clockspeed to 1020MHz. Meanwhile the other basic overclocking and monitoring functions one would expect from a good overclocking software package are present; GPU Tweak II allows control over clockspeeds, fan speeds, and power targets, while also monitoring all of these features and more.

GPU Tweak II also includes a built-in copy of the XSplit game broadcasting software, along with a 1 year premium license. Finally, perhaps the oddest feature of GPU Tweak II is the software’s Gaming Booster feature, which is ASUS’s system optimization utility. Gaming Booster can adjust the system visual effects, system services, and perform memory defragmentation. To be frank, ASUS seems like they were struggling to come up with something to differentiate GPU Tweak II here; messing with system services is a bad idea, and system memory defragmentation is rarely necessary given the nature and abilities of Random Access Memory.

Wrapping things up, the ASUS STRIX R9 Fury will be the most expensive of the R9 Fury launch cards. ASUS is charging a $30 premium for the card, putting the MSRP at $579.

288 Comments


akamateau - Tuesday, July 14, 2015 - link

Radeon 290x is 33% faster than 980 Ti with DX12 and Mantle. It is equal to Titan X.

http://wccftech.com/amd-r9-290x-fast-titan-dx12-en...

Sefem - Wednesday, July 15, 2015 - link

You should stop reading wccftech.com this site is full of sh1t! you made also an error because they are comparing 290x to 980 and not the Ti!

Asd :D I'm still laughing... those moron cited PCper's numbers as fps, they probably made the assumption since are 2 digit numbers but that's because PCper show numbers in million!!! look at that http://www.pcper.com/files/imagecache/article_max_...

wccftech.com also compare the 290x on Mantle with the 980 on DX12, probably for an apple to apple comparison ;), the fun continue if you read this Futuremark's note on this particular benchmark, that essentially says something pretty obvious, number of draw calls don't reflect actual performance and thus shouldn't be used to compare GPU's

http://a.disquscdn.com/uploads/mediaembed/images/1...

Finally I think there's something wrong with PC world's results since NVIDIA should deliver more draw calls than AMD on DX11.

FlushedBubblyJock - Wednesday, July 15, 2015 - link

They told us Fury X was 20% and more faster, they lied then, too.

Now amd fanboys need DX12 as a lying tool.

Failure requires lying, for fanboys.

Drumsticks - Friday, July 10, 2015 - link

Man auto correct plus an early morning post is hard. I meant "do you expect more optimized drivers to cause the Fury to leap further ahead of the 980, or the Fury X to catch up to the 980 Ti" haha. My bad.

My first initial impression on that assessment would be yes, but I'm not an expert so I was wondering how many people would like to weigh in.

Samus - Friday, July 10, 2015 - link

Fuji has a lot more room for driver improvement and optimization than maxwell, which is quite well optimized by now. I'd expect the fury x to tie the 980ti in the near future, especially in dx12 games. But nvidia will probably have their new architecture ready by then.

FlushedBubblyJock - Wednesday, July 15, 2015 - link

So, Nvidia is faster, and has been for many months, and still is faster, but a year or two into the future when amd finally has dxq12 drivers and there are actually one or two Dx12 games,why then, amd will have a card....

MY GOD HOW PATHETIC. I mean it sounded so good, you massaging their incompetence and utter loss.

evolucion8 - Friday, July 17, 2015 - link

Your continuous AMD bashing is more pathetic. Check the performance numbers of the GTX 680 when it was launched and check where it stands now? Do the same thing with the GTX 780 and then with the GTX 970, then talk.

CiccioB - Monday, July 13, 2015 - link

That is another confirmation that AMD GCN doesn't scale well. That problem was already seen with Hawaii, but also Tahiti showed it's inefficiency with respect to smaller GPUs like Pitcairn.

Nvidia GPUs scales almost linearly with respect to the resources integrated into the chip.

This has been a problem for AMD up to now, but it would be worse with new PP, as if no changes to solve this are introduced, nvidia could enlarge its gap with respect to AMD performances when they both can more than double the number of resources on the same die area.

Sdriver - Wednesday, July 15, 2015 - link

This resources reduction just means that AMD performance bottleneck is somewhere else in card. We have to see that this kind of reduction is not made to purposely slow down a card but to reduce costs or to utilize chips which didn't pass all tests to become a X model. AMD is know to do that since their weird but very functional 3 cores Phenons. Also this means if they can work better on the real bottleneck, they will be able to make a stronger card with much less resources, who remembers the HD 4770?...

akamateau - Tuesday, July 14, 2015 - link

@ Ryan Smith

This review is actually a BIG LIE.

ANAND is hiding the DX12 results that show 390x outperforming GTX 980Ti by 33%+, Fury outperforming 980ti by almost 50% and Titan X by almost 20%.

Figures do not lie. BUT LIARS FIGURE.

Draw calls are the best metric we have right now to compare AMD Radeon to nVidia ON A LEVEL PLAYING FIELD.

You can not render and object before you draw it!

I dare you to run the 3dMark API Overhead Feature Tests on Fury show how Mantle and DX12 turns nVidia siliocn into RUBBISH.

Radeon 290x CRUSHES 980Ti by 33% and is just a bit better than Titan X.

www dot eteknix.com/amd-r9-290x-goes-head-to-head-with-titan-x-with-dx12/

"AMD R9 290X As Fast As Titan X in DX12 Enabled 3DMark – 33% Faster Than GTX 980"

www dot wccftech dot com/amd-r9-290x-fast-titan-dx12-enabled-3dmark-33-faster-gtx-980/