The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

Starting with voltages, at least for the time being we have nothing to report for the R9 Fury X. AMD is either not exposing voltages in their drivers, or our existing tools (e.g. MSI Afterburner) do not know how to read the data, so we cannot see any voltage information at this time.

Radeon R9 Fury X Average Clockspeeds

| Game | R9 Fury X |
|---|---|
| Max Boost Clock | 1050MHz |
| Battlefield 4 | 1050MHz |
| Crysis 3 | 1050MHz |
| Mordor | 1050MHz |
| Civilization: BE | 1050MHz |
| Dragon Age | 1050MHz |
| Talos Principle | 1050MHz |
| Far Cry 4 | 1050MHz |
| Total War: Attila | 1050MHz |
| GRID Autosport | 1050MHz |
| Grand Theft Auto V | 1050MHz |
| FurMark | 985MHz |

Jumping straight to average clockspeeds then, with an oversized cooler and a great deal of power headroom, the R9 Fury X has no trouble hitting and sustaining its 1050MHz boost clockspeed throughout every second of our benchmark runs. The card was designed to be the pinnacle of Fiji cards, and ensuring it always runs at a high clockspeed is one of the elements in doing so. The lack of throttling means there’s really little to talk about here, but it sure gets results.

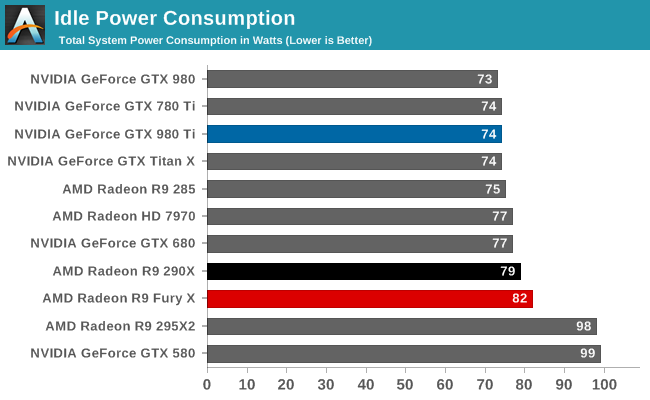

Idle power does not start things off especially well for the R9 Fury X, though it’s not too poor either. The 82W at the wall is a distinct increase over NVIDIA’s latest cards, and even the R9 290X. On the other hand the R9 Fury X has to run a CLLC rather than simple fans. Further complicating matters is the fact that the card idles at 300MHz for the core, but the memory doesn’t idle at all. HBM is meant to have rather low power consumption under load versus GDDR5, but one wonders just how that compares at idle.

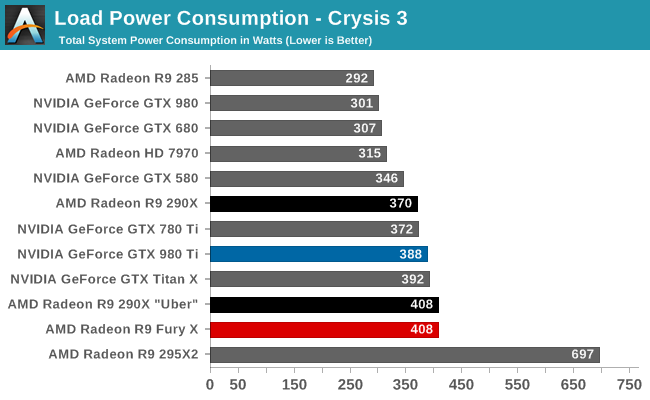

Switching to load power consumption, we go first with Crysis 3, our gaming load test. Earlier in this article we discussed all of the steps AMD took to rein in power consumption, and the payoff is seen here. Equipped with an R9 Fury X, our system pulls 408W at the wall, a significant amount of power, but only 20W at the wall more than the same system with a GTX 980 Ti. Given that the R9 Fury X’s framerates trail the GTX 980 Ti here, this puts AMD’s overall energy efficiency in a less-than-ideal spot, but it’s not poor either, especially compared to the R9 290X. Power consumption has essentially stayed put while performance has gone up 35%+.

On a side note, as we mentioned in our architectural breakdown, the amount of power this card draws will depend on its temperature. 408W at the wall at 65C is only 388W at the wall at 40C, as current leakage scales with GPU temperature. Ultimately the R9 Fury X will trend towards 65C, but it means that early readings can be a bit misleading.
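As a back-of-the-envelope illustration of those two readings, the short sketch below turns them into a delta, an average slope, and a percentage. It only restates the numbers quoted above; the variable and function names are ours, and the straight-line slope is an assumption made for illustration, not a model of how leakage actually scales between the two temperatures.

```python
# Whole-system wall power readings quoted in the text (not the card alone).
WARM_TEMP_C, WARM_POWER_W = 65, 408
COOL_TEMP_C, COOL_POWER_W = 40, 388


def average_slope_w_per_c(t_hot, p_hot, t_cold, p_cold):
    """Average change in wall power per degree C between two readings.

    This is a straight-line average between two points (an assumption),
    not a description of the real leakage curve.
    """
    return (p_hot - p_cold) / (t_hot - t_cold)


delta_w = WARM_POWER_W - COOL_POWER_W
slope = average_slope_w_per_c(WARM_TEMP_C, WARM_POWER_W, COOL_TEMP_C, COOL_POWER_W)
percent_increase = 100 * delta_w / COOL_POWER_W

print(f"Extra wall power at {WARM_TEMP_C}C: {delta_w} W")
print(f"Average slope: {slope:.1f} W per degree C")
print(f"Increase over the {COOL_TEMP_C}C reading: {percent_increase:.1f}%")
```

In other words, a cold-start reading can understate steady-state wall power by roughly 5%, which is why we wait for the card to reach equilibrium before recording results.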

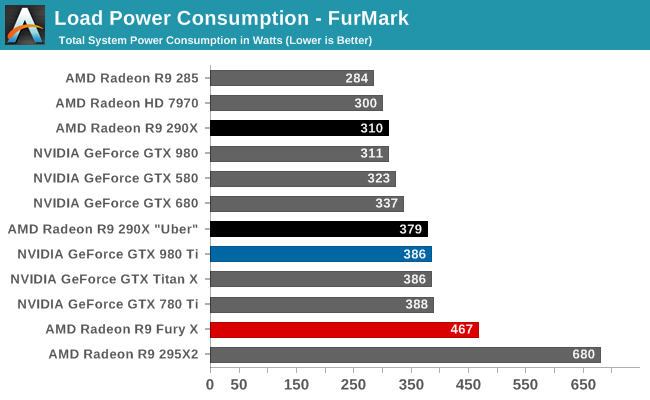

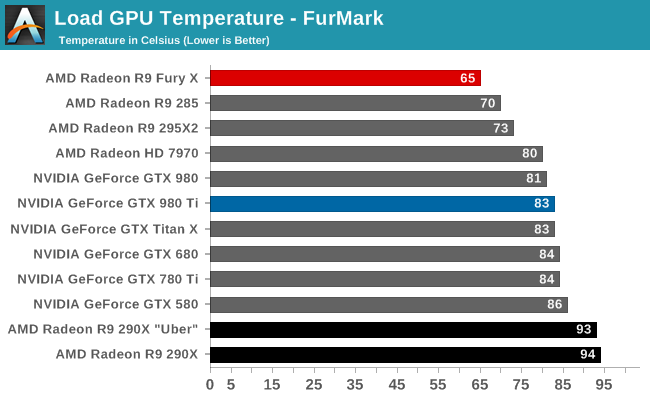

As for FurMark, what we find is that power consumption at the wall is much higher, which, far from being a problem for AMD, proves that the R9 Fury X has much greater thermal and electrical limits than the R9 290X, or the NVIDIA competition for that matter. AMD ultimately does throttle the R9 Fury X here, at around 985MHz, but the card easily draws quite a bit of power and dissipates quite a bit of heat in the process. If the card had a gaming scenario that called for greater power consumption – say BIOS modded overclocking – then these results paint a favorable picture.

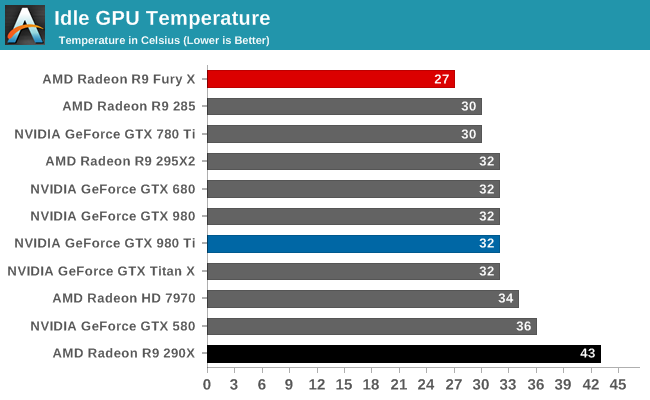

Moving on to temperatures, the R9 Fury X starts off looking very good. Even at minimum speeds the pump and radiator lead to the Fiji GPU idling at just 27C, cooler than anything else on this chart. More impressive still is the R9 290X comparison, where the R9 Fury X is some 15C cooler, and this is just at idle.

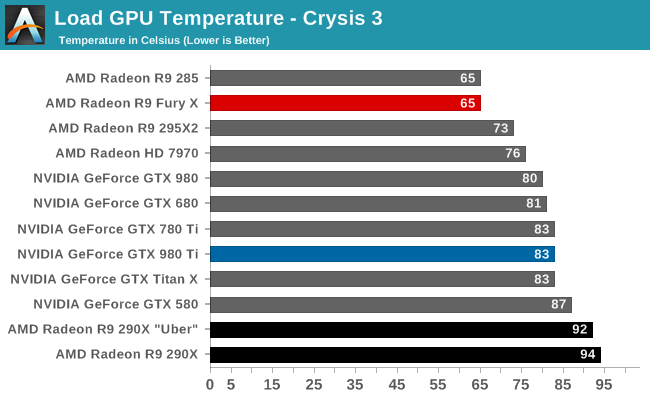

Loading up a game, after a good 10 minutes or so the R9 Fury X finally reaches its equilibrium temperature of 65C. Though the default target GPU temperature is 75C, it’s at 65C that the card finally begins to ramp up the fan in order to increase cooling performance. The end result is that the card reaches equilibrium at this point and in our experience should not exceed this temperature.

Compared to the NVIDIA cards, this is an 18C advantage in AMD’s favor. GPU temperatures are not everything – ultimately it’s fan speed and noise we’re more interested in – but for AMD, GPU temperatures are an important component of controlling GPU power consumption. By keeping the Fiji GPU at 65C AMD is able to keep leakage power down, and therefore energy efficiency up. R9 Fury X would undoubtedly fare worse in this respect if it got much warmer.

Finally, it’s once again remarkable to compare the R9 Fury X to the R9 290X. With the former AMD has gone cool to keep power down, whereas with the latter AMD went hot to improve cooling efficiency. As a result the R9 Fury X is some 29C cooler than the R9 290X. One can only imagine what that has done for leakage.

The situation is more or less the same under FurMark. The NVIDIA cards are set to cap at 83C, and the R9 Fury X is set to cap at 65C. This is regardless of whether it’s a game or a power virus like FurMark.

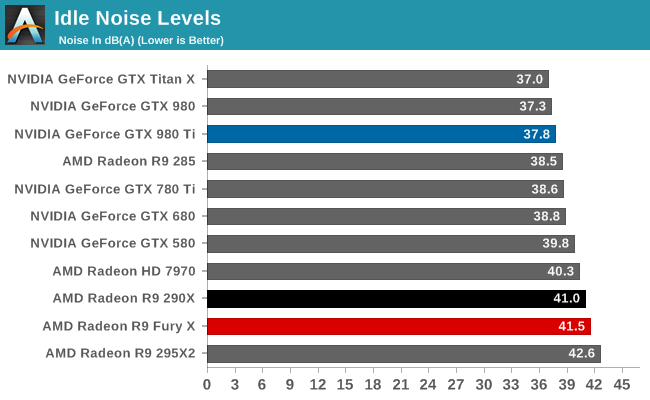

Last but not least, we have noise. Starting with idle noise, as we mentioned in our look at the build quality of the R9 Fury X, the card’s cooler is effective under load, but a bit of a liability at idle. The use of a pump brings with it pump noise, and this drives up idle noise levels by around 4dB. 41.5dB is not too terrible for a closed case, and it’s not an insufferable noise, but HTPC users will want to be wary. This if anything makes a good argument for looking forward to the R9 Nano.

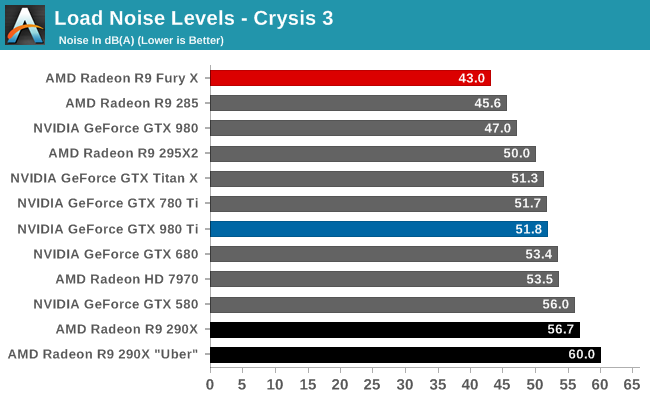

Because the R9 Fury X starts out a bit loud due to pump noise, the actual noise increase under load is absolutely minuscule. The card tops out at 19% fan speed, 4% (or about 100 RPM) over its default fan speed of 15%. As a result we measure an amazing 43dB under load. For a high performance video card. For a high performance card within spitting distance of NVIDIA’s flagship and one of the best air cooled video cards of all time.

These results admittedly were not unexpected – one need only look at the R9 295X2 to get an idea of what a CLLC could do for noise – but they are nonetheless extremely impressive. Most midrange cards are louder than this despite offering a fraction of the R9 Fury X’s gaming performance, which puts the R9 Fury X at a whole new level for load noise from a high performance video card.

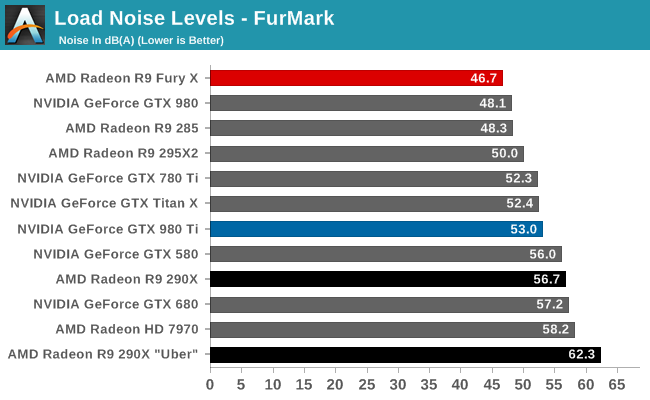

The trend continues under FurMark. The fan speed ramps up quite a bit further here thanks to the immense load from FurMark, but the R9 Fury X still perseveres. 46.7 dB(A) is once again better than a number of mid-range video cards, never mind the other high-end cards in this roundup. The R9 Fury X is dissipating 330W of heat and yet it’s quieter than the GTX 980 at half that heat, and around 6 dB(A) quieter than the 250W GM200 cards.
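To put a gap like 6 dB(A) in perspective, it helps to remember that decibels are logarithmic. The sketch below applies the standard conversions from a level difference to a sound power ratio and a sound pressure ratio. The function names are purely illustrative, and a measured dB(A) gap is not the same thing as perceived loudness, so treat the output as a rough guide rather than a statement about how the cards sound.

```python
def sound_power_ratio(delta_db):
    """Ratio of sound power corresponding to a level difference in dB."""
    return 10 ** (delta_db / 10)


def sound_pressure_ratio(delta_db):
    """Ratio of sound pressure corresponding to a level difference in dB."""
    return 10 ** (delta_db / 20)


# Approximate FurMark gap between the R9 Fury X and the GM200 cards, per the text.
FURMARK_GAP_DB = 6.0

power = sound_power_ratio(FURMARK_GAP_DB)
pressure = sound_pressure_ratio(FURMARK_GAP_DB)

print(f"{FURMARK_GAP_DB} dB gap: about {power:.2f}x the sound power")
print(f"{FURMARK_GAP_DB} dB gap: about {pressure:.2f}x the sound pressure")
```

By this measure the GM200 cards are putting out roughly four times the sound power of the R9 Fury X under FurMark, or about twice the sound pressure.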

There really aren’t enough nice things I can say about the R9 Fury X’s cooler. AMD took the complaints about the R9 290 series to heart, and produced something that wasn’t just better than their previous attempt, but a complete inverse of their earlier strategy. The end result is that the R9 Fury X is very nearly whisper quiet under gaming, and only a bit louder under even the worst case scenario. This is a remarkable change, and one that ears everywhere will appreciate.

That said, the mediocre idle noise showing will undoubtedly dog the R9 Fury X in some situations. For most cases it will not be an issue, but it does close some doors on ultra-quiet setups. The R9 Fury X in that respect is merely very, very quiet.

458 Comments


chizow - Sunday, July 5, 2015 - link

@piiman - I guess we'll see soon enough, I'm confident it won't make any difference given GPU prices have gone up and up anyways. If anything we may see price stabilization as we've seen in the CPU industry.

medi03 - Sunday, July 5, 2015 - link

Another portion of bulshit from nVidia troll. AMD never ever had more than 25% of CPU share. Doom to Intel, my ass.

Even in Prescott times Intell was selling more CPUs and for higher price.

chizow - Monday, July 6, 2015 - link

@medi03 AMD was up to 30% a few times and they did certainly have performance leadership at the time of K8 but of course they wanted to charge anyone for the privilege. Higher price? No, $450 for entry level Athlon 64, much more than what they charged in the past and certainly much more than Intel was charging at the time going up to $1500 on the high end with their FX chips.

Samus - Monday, July 6, 2015 - link

Best interest? Broken up for scraps? You do realize how important AMD is to people who are Intel\NVidia fans right? Without AMD, Intel and NVidia are unchallenged, and we'll be back to paying $250 for a low-end video card and $300 for a mid-range CPU. There would be no GTX 750's or Pentium G3258's in the <$100 tier.

chizow - Monday, July 6, 2015 - link

@Samus, they're irrelevant in the CPU market and have been for years, and yet amazingly, prices are as low as ever since Intel began dominating AMD in performance when they launched Core 2. Since then I've upgraded 5x and have not paid more than $300 for a high-end Intel CPU. How does this happen without competition from AMD as you claim? Oh right, because Intel is still competing with itself and needs to provide enough improvement in order to entice me to buy another one of their products and "upgrade". The exact same thing will happen in the GPU sector, with or without AMD. Not worried at all, in fact I'm looking forward to the day a company with deep pockets buys out AMD and reinvigorates their products, I may actually have a reason to buy AMD (or whatever it is called after being bought out) again!

Iketh - Monday, July 6, 2015 - link

you overestimate the human drive... if another isn't pushing us, we will get lazy and that's not an argument... what we'll do instead to make people upgrade is release products in steps planned out much further into the future that are even smaller steps than how intel is releasing now

silverblue - Friday, July 3, 2015 - link

I think this chart shows a better view of who was the underdog and when: http://i59.tinypic.com/5uk3e9.jpg

ATi were ahead for the 9xxx series, and that's it. Moreover, NVIDIA's chipset struggles with Intel were in 2009 and settled in early 2011, something that would've benefitted NVIDIA far more than Intel's settlement with AMD as it would've done far less damage to NVIDIA's financials over a much shorter period of time.

The lack of higher end APUs hasn't helped, nor has the issue with actually trying to get a GPU onto a CPU die in the first place. Remember that when Intel tried it with Clarkdale/Arrandale, the graphics and IMC were 45nm, sitting alongside everything else which was 32nm.

chizow - Friday, July 3, 2015 - link

I think you have to look at a bigger sample than that, riding on the 9000 series momentum, AMD was competitive for years with a near 50/50 share through the X800/X1900 series. And then G80/R600 happened and they never really recovered. There was a minor blip with Cypress vs. Fermi where AMD got close again but Nvidia quickly righted things with GF106 and GF110 (GTX 570/580).

Scali - Tuesday, July 7, 2015 - link

nVidia wasn't the underdog in terms of technology. nVidia was the choice of gamers. ATi was big because they had been around since the early days of CGA and Hercules, and had lots of OEM contracts. In terms of technology and performance, ATi was always struggling to keep up with nVidia, and they didn't reach parity until the Radeon 8500/9700-era, even though nVidia was the newcomer and ATi had been active in the PC market since the mid-80s.

Frenetic Pony - Thursday, July 2, 2015 - link

Well done analysis, though the kick in the head was Bulldozer and it's utter failure. Core 2 wasn't really AMD's downfall so much as Core/Sandy Bridge, which came at the exact wrong time for the utter failure of Bulldozer. This combined with AMD's dismal failure to market its graphics card has cost them billions. Even this article calls the 290x problematic, a card that offered the same performance as the original Titan at a fraction of the price. Based on empirical data the 290/x should have been almost continuously sold until the introduction of Nvidia's Maxwell architecture. Instead people continued to buy the much less performant per dollar Nvidia cards and/or waited for "the good GPU company" to put out their new architecture. AMD's performance in marketing has been utterly appalling at the same time Nvidia's has been extremely tight. Whether that will, or even can, change next year remains to be seen.