The AMD Radeon R9 Fury X Review: Aiming For the Top

by Ryan Smith on July 2, 2015 11:15 AM EST

The State of Mantle, The Drivers, & The Test

Before diving into our long-awaited benchmark results, I wanted to quickly touch upon the state of Mantle now that AMD has given us a bit more insight into what’s going on.

With the Vulkan project having inherited and extended Mantle, AMD's external development of Mantle is at an end. AMD has already told us in the past that they are essentially taking it back in-house, and will be using it as a platform for testing future API developments. Externally, then, AMD has thrown all of their weight behind Vulkan and DirectX 12, telling developers that future games should use those APIs and not Mantle.

In the meantime there is the question of what happens to existing Mantle games. So far there are about half a dozen games that support the API, and for these games Mantle is the only low-level API available. Should Mantle disappear, these games would no longer be able to render at such a low level.

The situation, then, is that in discussing the R9 Fury X's Mantle performance results, AMD has confirmed that while they are not outright dropping Mantle support, they have ceased all further Mantle optimization. Of particular note, the Mantle driver has not been optimized at all for GCN 1.2, which includes not just the R9 Fury X, but the R9 285, R9 380, and the Carrizo APU as well. Mantle titles will probably still work on these products (though for the record, we can’t get Civilization: Beyond Earth to play nicely with the R9 285 via Mantle), but performance is another matter. Mantle is essentially deprecated at this point, and while AMD isn’t going out of their way to break backwards compatibility, they aren’t going to put resources into helping it either. The experiment that is Mantle has come to an end.

This will in turn impact our testing somewhat. For our 2015 benchmark suite we began using low-level APIs when available, which in the current game suite covers Battlefield 4, Dragon Age: Inquisition, and Civilization: Beyond Earth; we were not counting on AMD to cease optimizing Mantle quite so soon. As a result we’re in the uncomfortable position of having to backtrack on our policies somewhat in order to avoid basing our recommendations on nonsensical settings.

Starting with this review we’re going to use low-level APIs when they are available and when using them makes sense for performance. That means we won’t use Mantle in cases where performance has clearly regressed due to a lack of optimizations, but will use it for games where it still works as expected (which essentially comes down to Civ: BE). Ultimately everything will move to Vulkan and DirectX 12, but in the meantime we will need to be more selective about where we use Mantle.

The Drivers

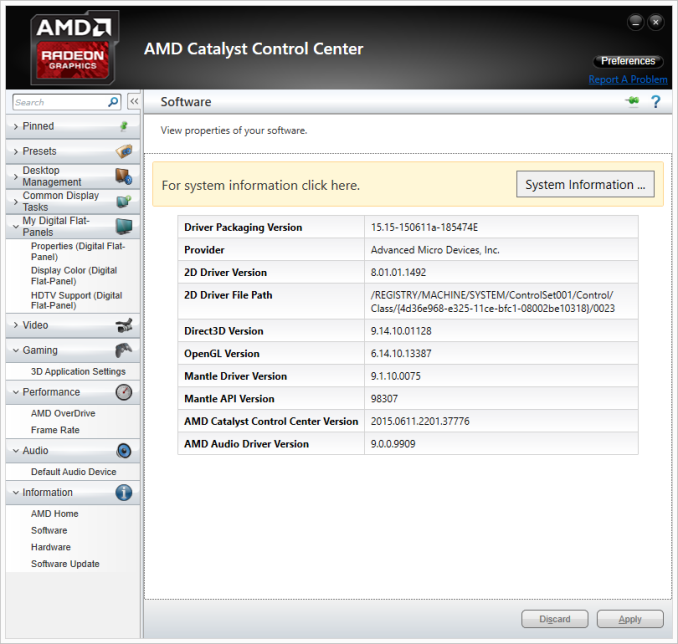

For the launch of the 300/Fury series, AMD has taken an unexpected direction with their drivers. The launch driver for these parts is Catalyst 15.15, AMD’s next major driver branch, which includes everything from Fiji support to WDDM 2.0 support. However, in launching these parts AMD has bifurcated their drivers: the new cards get Catalyst 15.15, while the old cards get Catalyst 15.6 (driver version 14.502).

Eventually AMD will bring all of these cards back onto a single driver branch in a later release, after they have done more extensive QA against their older cards. In the meantime it’s possible to use a modified version of Catalyst 15.15 to enable support for some of these older cards, but Windows does not get along well with unsigned drivers, and doing so introduces other potential issues. Considering that these new drivers do include performance improvements for existing cards, we are not especially happy with the current situation. Existing Radeon owners are essentially having performance held back from them, if only temporarily. Small tomes could be written on AMD’s driver situation (they clearly don’t have the resources to do everything they’d like to at once), but this is perhaps the most difficult situation they’ve put Radeon owners in yet.

The Test

Finally, let’s talk testing. For our benchmarking we have used AMD’s Catalyst 15.15 beta drivers for the R9 Fury X, and their Catalyst 15.5 beta drivers for all other AMD cards. Meanwhile for NVIDIA cards we are on release 352.90.

From a build standpoint we’d like to remind everyone that installing a GPU radiator in our closed-case test bed does require reconfiguring the test bed slightly: a 120mm rear exhaust fan must be removed to make room for the GPU radiator.

| Component | Configuration |
|---|---|
| CPU | Intel Core i7-4960X @ 4.2GHz |
| Motherboard | ASRock Fatal1ty X79 Professional |
| Power Supply | Corsair AX1200i |
| Hard Disk | Samsung SSD 840 EVO (750GB) |
| Memory | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case | NZXT Phantom 630 Windowed Edition |
| Monitor | Asus PQ321 |
| Video Cards | AMD Radeon R9 Fury X, AMD Radeon R9 295X2, AMD Radeon R9 290X, AMD Radeon R9 285, AMD Radeon HD 7970, NVIDIA GeForce GTX Titan X, NVIDIA GeForce GTX 980 Ti, NVIDIA GeForce GTX 980, NVIDIA GeForce GTX 780 Ti, NVIDIA GeForce GTX 680, NVIDIA GeForce GTX 580 |
| Video Drivers | NVIDIA Release 352.90 Beta; AMD Catalyst 15.5 Beta (all other AMD cards); AMD Catalyst 15.15 Beta (R9 Fury X) |
| OS | Windows 8.1 Pro |

458 Comments


looncraz - Friday, July 3, 2015

We don't yet know how the Fury X will overclock with unlocked voltages.

SLI is almost as unreliable as CF; ever peruse the forums? That, and quite often you can get profiles from the wild wired web well before the companies release their support, especially on AMD's side.

chizow - Friday, July 3, 2015

@looncraz We do know Fury X is an exceptionally poor overclocker at stock and already uses more power than the competition. Whose fault is it that we don't have proper overclocking capabilities when AMD was the one who publicly claimed this card was an "Overclocker's Dream?" Maybe they meant you could Overclock it, in your Dreams?

SLI is not as unreliable as CF; Nvidia actually offers timely updates on Day 1 and works with the developers to implement SLI support. In cases where there isn't a Day 1 profile, SLI has always provided more granular control over SLI profile bits vs. AMD's black box approach of a loadable binary, or wholesale game profile copies (which can break other things, like AA compatibility bits).

silverblue - Friday, July 3, 2015

No, he did actually mention the 980 Ti's excellent overclocking ability. Conversely, at no point did he mention Fury X's overclocking ability, presumably because there isn't any.

Refuge - Friday, July 3, 2015

He does mention it, and does say that it isn't really possible until they get modified BIOSes with unlocked voltages.

e36Jeff - Thursday, July 2, 2015

First off, it's 81W, not 120W (467-386). Second, unless you are running FurMark as your screen saver, it's pretty irrelevant. It merely serves to demonstrate the maximum amount of power the GPU is allowed to use (and given that the 980 Ti's is 1W less than in gaming, it indicates it is being artificially limited because it knows it's running FurMark). The important power number is the in-game power usage, where the gap is 20W.

Ryan Smith - Thursday, July 2, 2015

There is no "artificial" limiting on the GTX 980 Ti in FurMark. The card has a 250W limit, and it tends to hit it in both games and FurMark. Unlike AMD with the R9 Fury X, NVIDIA did not build a bunch of thermal/electrical headroom into the reference design.

kn00tcn - Thursday, July 2, 2015

because furmark is normal usage right!? hbm magically lowers the gpu core's power right!? wtf is wrong with you

nandnandnand - Thursday, July 2, 2015

AMD's Fury X has failed. 980 Ti is simply better.

In 2016 NVIDIA will ship GPUs with HBM version 2.0, which will have greater bandwidth and capacity than these HBM cards. AMD will be truly dead.

looncraz - Friday, July 3, 2015

You do realize HBM was designed by AMD with Hynix, right? That is why AMD got first dibs.

Want to see that kind of innovation again in the future? You best hope AMD sticks around, because they're the only ones innovating at all.

nVidia is like Apple, they're good at making pretty looking products and throwing the best of what others created into making it work well, then they throw their software into the mix and call it a premium product.

Intel hasn't innovated on the CPU front since the advent of the Pentium 4. Core-series CPUs are derived from the Pentium M, which was derived from the Pentium Pro.

Kutark - Friday, July 3, 2015

Man, you are pegging the hipster meter BIG TIME. Get serious. "Intel hasn't innovated on the CPU front since the advent of the Pentium 4..." That has to be THE dumbest shit I've read in a long time.

Say what you will about nvidia, but Maxwell is a pristinely engineered chip.

While I agree with you that AMD sticking around is good, you can't be pissed at nvidia if they become a monopoly because AMD just can't resist buying tickets on the fail train...