AMD Dives Deep On High Bandwidth Memory - What Will HBM Bring AMD?

by Ryan Smith on May 19, 2015 8:40 AM EST

The Net Benefits of HBM

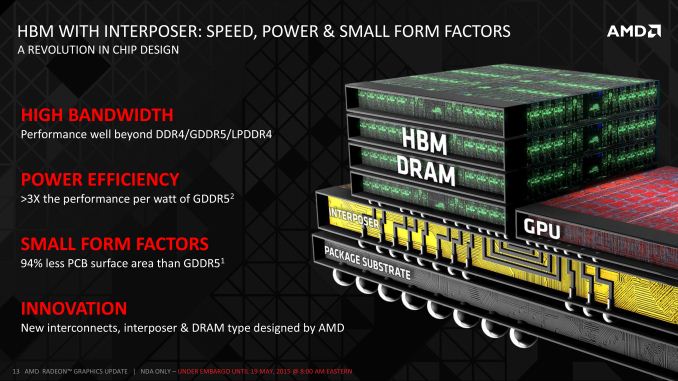

Now that we’ve had a chance to talk about how HBM is constructed and the technical hurdles in building it, we can finally get to the subject of the performance and design benefits of HBM. HBM is of course first and foremost about further increasing memory bandwidth, but the combination of stacked DRAM and lower power consumption also opens up some additional possibilities that could not be pursued with GDDR5.

We’ll start with the bandwidth capabilities of HBM. The amount of bandwidth ultimately depends on the number of stacks in use along with the clockspeed of those stacks. HBM uses a DDR signaling interface, and while AMD is not disclosing final product specifications at this time, they have given us enough information to begin to build a complete picture.

| GPU Memory Math | AMD Radeon R9 290X | NVIDIA GeForce GTX Titan X | Theoretical 4-Stack HBM1 |
|---|---|---|---|
| Total Capacity | 4GB | 12GB | 4GB |
| Bandwidth Per Pin | 5Gbps | 7Gbps | 1Gbps |
| Number of Chips/Stacks | 16 | 24 | 4 |
| Bandwidth Per Chip/Stack | 20GB/sec | 14GB/sec | 128GB/sec |
| Effective Bus Width | 512-bit | 384-bit | 4096-bit |
| Total Bandwidth | 320GB/sec | 336GB/sec | 512GB/sec |
| Estimated DRAM Power Consumption | 30W | 31.5W | 14.6W |

The first generation of HBM that AMD is using allows each stack to be clocked at up to 500MHz, which with DDR signaling works out to 1Gbps per pin. For a 1024-bit stack this means a single stack can deliver up to 128GB/sec (1024b * 1Gbps / 8b) of memory bandwidth. HBM in turn allows from 2 to 8 stacks to be used, with each stack carrying 1GB of DRAM. AMD’s example diagrams so far (along with NVIDIA’s Pascal test vehicle) have all been drawn with 4 stacks, in which case we’d be looking at 512GB/sec of memory bandwidth. This of course is quite a bit more than the 320GB/sec of memory bandwidth for the R9 290X or 336GB/sec for NVIDIA’s GTX Titan X, working out to a 52-60% increase in memory bandwidth.
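The bandwidth arithmetic above can be reproduced with a short Python sketch. The function names here are our own, and the figures are the theoretical ones quoted in this article (500MHz, 1024-bit stacks, 4 stacks), not final product specifications.

```python
def stack_bandwidth_gbs(clock_mhz: float, bus_width_bits: int) -> float:
    """Peak bandwidth of one stack in GB/sec, assuming DDR signaling."""
    # DDR transfers data twice per clock: 500MHz -> 1Gbps per pin.
    gbps_per_pin = clock_mhz * 2 / 1000
    # Multiply by the number of pins, then convert bits to bytes.
    return gbps_per_pin * bus_width_bits / 8


def percent_increase(new: float, old: float) -> float:
    """Relative increase of `new` over `old`, in percent."""
    return (new / old - 1) * 100


hbm_stack = stack_bandwidth_gbs(clock_mhz=500, bus_width_bits=1024)
hbm_total = hbm_stack * 4  # the 4-stack layout shown in AMD's diagrams

vs_titan_x = percent_increase(hbm_total, 336)  # GTX Titan X: 336GB/sec
vs_290x = percent_increase(hbm_total, 320)     # R9 290X: 320GB/sec

print(f"Per stack: {hbm_stack:.0f}GB/sec, 4 stacks: {hbm_total:.0f}GB/sec")
print(f"Increase: {vs_titan_x:.0f}% over Titan X, {vs_290x:.0f}% over 290X")
```

Changing the stack count or clockspeed in the call shows how quickly the total scales, which is why the final number depends so heavily on specifications AMD has not yet disclosed.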

At the same time this also calls into question memory capacity – four 1GB stacks is only 4GB of VRAM – though AMD seems to be saving that matter for the final product introduction later this quarter. Launching a new, high-end GPU with 4GB could be a big problem for AMD, but we'll see just what they have up their sleeves in due time.

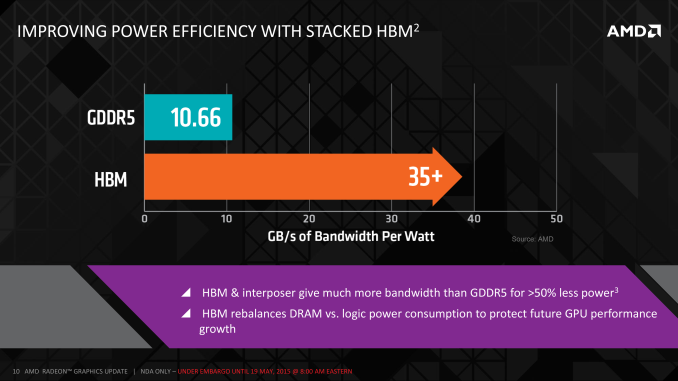

What’s perhaps more interesting is what happens to DRAM energy consumption with HBM. As we mentioned before, R9 290X spends 15-20% of its 250W power budget on DRAM, or roughly 38-50W of power on an absolute basis. Meanwhile by AMD’s own reckoning, GDDR5 is good for 10.66GB/sec of bandwidth per watt of power, which for the 290X’s 320GB/sec works out to 30W+ via that calculation. HBM on the other hand delivers better than 35GB/sec of bandwidth per watt, an immediate 3x gain in energy efficiency.

Of course AMD is then investing some of those gains back into delivering more memory bandwidth, so it’s not as simple as saying that memory power consumption has been cut by 70%. Rather given our earlier bandwidth estimate of 512GB/sec of memory bandwidth for a 4 stack configuration, we would be looking at about 15W of power consumption for a 512GB/sec HBM solution, versus 30W+ for a 320GB/sec GDDR5 solution. The end result then points to DRAM power consumption being closer to halved, with AMD saving 15-20W of power.
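To make the power math concrete, here is a minimal Python sketch using AMD's bandwidth-per-watt figures. It treats "better than 35GB/sec per watt" as exactly 35, which is our assumption and makes the HBM result an upper bound on power; the variable and function names are ours as well.

```python
GDDR5_GBS_PER_WATT = 10.66  # AMD's figure for GDDR5
HBM_GBS_PER_WATT = 35.0     # assumption: AMD says "better than 35"


def dram_power_watts(bandwidth_gbs: float, gbs_per_watt: float) -> float:
    """Estimated DRAM power for a given bandwidth and efficiency."""
    return bandwidth_gbs / gbs_per_watt


gddr5_power = dram_power_watts(320, GDDR5_GBS_PER_WATT)  # R9 290X
hbm_power = dram_power_watts(512, HBM_GBS_PER_WATT)      # 4-stack HBM

# At equal bandwidth the cut is set purely by the efficiency ratio;
# this is where the "70%" figure comes from.
equal_bw_cut = 1 - GDDR5_GBS_PER_WATT / HBM_GBS_PER_WATT

# With the extra bandwidth factored in, the cut is closer to half.
actual_cut = 1 - hbm_power / gddr5_power
savings = gddr5_power - hbm_power

print(f"GDDR5: {gddr5_power:.1f}W, HBM: {hbm_power:.1f}W")
print(f"Cut at equal bandwidth: {equal_bw_cut:.0%}")
print(f"Cut at 512GB/sec vs 320GB/sec: {actual_cut:.0%} ({savings:.1f}W)")
```

The calculated savings land at the low end of the 15-20W range because the 30W GDDR5 figure is itself a floor; the 290X's measured 38-50W DRAM budget would push the savings higher.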

What’s the real-world advantage of a 15-20W reduction in DRAM power consumption? Besides being able to invest that in reducing overall video card power consumption, the other option is to invest it in increasing clockspeeds. With PowerTune putting a hard limit on power consumption, a larger GPU power budget would allow AMD to increase clockspeeds and/or run at the maximum GPU clockspeed more often, improving performance by a currently indeterminable amount. Now as fair warning here, higher GPU clockspeeds typically require higher voltages, which in turn leads to a rapid increase in GPU power consumption. So although having additional power headroom does help the GPU, it may not be good for quite as much of a clockspeed increase as one might hope.

Meanwhile the performance increase from the additional memory bandwidth is equally nebulous until AMD’s new product is announced and benchmarked. As a rule of thumb GPUs are virtually always memory bandwidth bottlenecked – they are after all high-throughput processors capable of trillions of calculations per second working with only hundreds of billions of bytes of bandwidth – so there is no doubt that the higher memory bandwidths of HBM will improve performance. However memory bandwidth increases currently don’t lead to 1:1 performance increases even on AMD’s current cards, and it’s unlikely to be any different on future products.

Throwing an extra wrinkle into matters, any new AMD product would be based on GCN 1.2 or newer, which introduced AMD’s latest generation of color compression technology. The net result is that on identical workloads, memory bandwidth pressure is going down exactly at the same time as memory bandwidth availability is going up. AMD will end up gaining a ton of effective memory bandwidth – something that will be very handy for high resolutions – but it also makes it impossible to predict what the final performance impact might be. Still, it will be interesting to see what AMD can do with a 2x+ increase in effective memory bandwidth for graphics workloads.

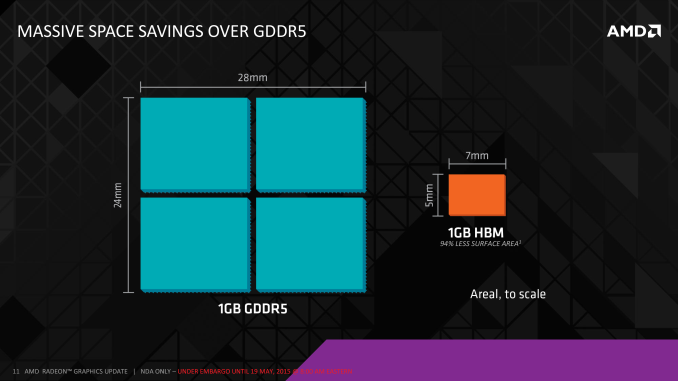

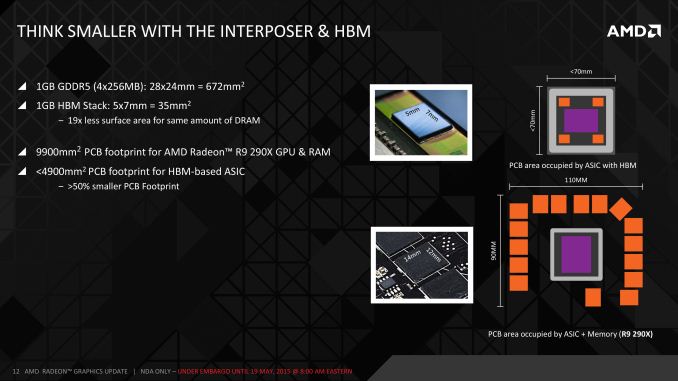

The final major benefit AMD is looking at taking advantage of with HBM – and at this point they’re not even being subtle about it – is new form factors made possible by the denser designs HBM enables. With the large GDDR5 memory chips replaced with much narrower HBM stacks, AMD is telling us that the resulting ASIC + RAM setups can be much smaller.

How much smaller? Well 1GB of GDDR5, composed of 2Gbit modules (the standard module size for R9 290X) would take up 672mm2, versus just 35mm2 for the same 1GB of DRAM as an HBM stack. Even if we refactor this calculation for 4Gbit modules – the largest modules used in currently shipping video cards – then we still end up with 336mm2 versus 35mm2, which is still a savings of 89% for 1GB of DRAM. Ultimately the HBM stack itself is composed of multiple DRAM dies, so there’s still quite a bit of silicon in play, however its 2D footprint is reduced significantly thanks to stacking.
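The footprint comparison can be sketched the same way. The per-package area below is derived from the article's own numbers (672mm2 for the four 2Gbit modules that make up 1GB), and we assume a 4Gbit module occupies the same package footprint, which is what the 336mm2 figure implies. The names are our own.

```python
GDDR5_PACKAGE_MM2 = 672 / 4  # derived: four 2Gbit modules take up 672mm2
HBM_STACK_MM2 = 35           # one 1GB HBM stack
GBIT_PER_GB = 8


def gddr5_area_mm2(capacity_gb: int, module_gbit: int) -> float:
    """Board area taken by the GDDR5 modules needed for `capacity_gb`."""
    modules = capacity_gb * GBIT_PER_GB // module_gbit
    return modules * GDDR5_PACKAGE_MM2


def savings_percent(old_mm2: float, new_mm2: float) -> float:
    """Area saved by going from `old_mm2` to `new_mm2`, in percent."""
    return (1 - new_mm2 / old_mm2) * 100


area_2gbit = gddr5_area_mm2(capacity_gb=1, module_gbit=2)
area_4gbit = gddr5_area_mm2(capacity_gb=1, module_gbit=4)

for label, area in (("2Gbit", area_2gbit), ("4Gbit", area_4gbit)):
    saved = savings_percent(area, HBM_STACK_MM2)
    print(f"{label} modules: {area:.0f}mm2 vs {HBM_STACK_MM2}mm2 ({saved:.1f}% smaller)")
```

Note that this only compares 2D footprint; as mentioned above, the stack still contains multiple DRAM dies, so the total silicon area is not reduced by the same amount.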

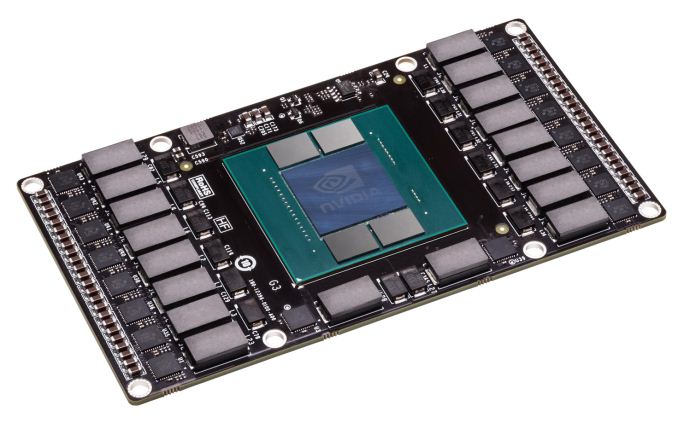

By AMD’s own estimate, a single HBM-equipped GPU package would be less than 70mm X 70mm (4900mm2), versus 110mm X 90mm (9900mm2) for R9 290X. Throw in additional space savings from the fact that HBM stacks don’t require quite as complex power delivery circuitry, and the card space savings could be significant. By our reckoning the total card size will still be fairly big – all of those VRMs and connectors need to go somewhere – but there is potential for significant savings. What AMD intends to do with those savings remains to be seen, but with apologies to AMD on this one, NVIDIA has already shown off their Pascal test vehicle for their mezzanine connector design, and it goes without saying that such a form factor opens up some very interesting possibilities.

With apologies to AMD: NVIDIA’s Pascal Test Vehicle, An Example Of A Smaller, Non-Traditional Video Card Design

Finally, aftermarket enthusiasts may or may not enjoy one final benefit from the use of HBM. Because the DRAM and GPU are now on the same package, AMD is going to be capping the package with an integrated heat spreader (IHS) to compensate for any differences in height between the HBM stacks and GPU die, to protect the HBM stacks, and to supply the HBM stacks with sufficient cooling. High-end GPU dies have been bare for some time now, so an IHS brings with it the same kind of protection for the die that IHSs brought to CPUs. At the same time however this means it’s no longer possible to make direct contact with the GPU, so extreme overclockers may come away disappointed. We’ll have to see what the shipping products are like and whether in those cases it’s viable to remove the IHS.

Closing Thoughts

Bringing this deep dive to a close, as the first GPU manufacturer to be shipping an HBM solution – in fact AMD expects to be the only vendor to ship an HBM1 solution – AMD has set into motion some very aggressive product goals thanks to the gains from HBM. Until we know more about AMD’s forthcoming video card I find it prudent to keep expectations in check here, as HBM is just one piece of the complete puzzle that is a GPU. But at the same time let’s be clear here: HBM is the future memory technology of GPUs, there is potential for significant performance increases thanks to the massive increase in memory bandwidth it offers, and for roughly the next year AMD is going to be the only GPU vendor offering this technology.

AMD for their part is looking to take as much advantage of their lead as they can, both at the technical level and the consumer level. At the technical level AMD has said very little about performance so far, so we’ll have to wait and see just what their new product brings. But AMD is being far more open about their plans to exploit the size advantage of HBM, so we should expect to see some non-traditional designs for high-end GPUs. Meanwhile at the consumer level, expect to see HBM enter the technology lexicon as the latest buzzword for high-performance products – almost certainly to be stamped on video card boxes just as GDDR5 has been for years – as AMD looks to let everyone know about their advantage.

Meanwhile shifting gears towards the long term, high-end GPUs are just the first of what AMD expects to be a wider rollout for HBM. Though AMD is not committing to any other products at this time, as production ramps up and costs come down, HBM is expected to become financially viable in a wider range of products, including lower-end GPUs, HPC products (e.g. FirePro S and AMD’s forthcoming HPC APU), high-end communications gear, and of course AMD’s mainstream consumer APUs. As lower-margin products, consumer APUs will likely be among the farthest off; however, in the long run they may very well be the most interesting use case for HBM, as APUs are among the most bandwidth-starved graphics products out there. But before we get too far ahead of ourselves, let’s see what AMD is able to do with HBM on their high-end video cards later this quarter.

163 Comments


chizow - Tuesday, May 19, 2015 - link

Nice article Ryan, I think this gets back to some of the general tech deep dives that a lot of people miss on AT, rather than the obligatory item reviews that I know you guys have to put out as well. Always interesting to read about new and upcoming technology, thanks for the read!

This part I think however, on last page needs to be clarified, as it is REALLY important to stay consistent in terminology now that GPU socket and PCB topology is changing:

"By AMD’s own estimate, a single HBM-equipped GPU package would be less than 70mm X 70mm (4900mm2), versus 110mm X 90mm (9900mm2) for R9 290X."

Even by AMD's own slide, that is *PCB area* occupied by either the HBM GPU package, or the GPU + GDDR5 modules. Calling everything a "package" doesn't really fit here and just confuses the issue if we keep the term Package intact, meaning GPU substrate sitting on PCB.

extide - Tuesday, May 19, 2015 - link

No, package is the correct term, as it is a single complete item that attaches to the PCB, including the GPU, RAM, interposer, etc all in one piece. It is not much different than the MCM (Multi Chip Modules) that many manufacturers (Intel for example) have used in the past. Since the memory is all on the package, the PCB area used is the same size as the package itself, in this case.

chizow - Tuesday, May 19, 2015 - link

I agree the HBM package terminology is correct, but I'm not referring to that. I'm referring to the reference that the 290X package size is 110mm x 90mm for R9 290X. That's not very clear, because they are counting the *PCB AREA* on the 290X and using it synonymously with Package.

It would be more clearly stated if it read something like:

"By AMD’s own estimate, the PCB area occupied by a single HBM-equipped GPU package would be less than 70mm X 70mm (4900mm2), versus 110mm X 90mm (9900mm2) PCB area for R9 290X that includes the GPU package and GDDR5 modules."

gamerk2 - Tuesday, May 19, 2015 - link

How much does the production of the Interposer cost? It's obviously going to eat into AMDs margins, which would imply that unless they sell more product, their profits will actually decline. Likewise, I wonder if that extra cost is going to squeeze them on the low end, where they currently have an advantage.

extide - Tuesday, May 19, 2015 - link

Probably on the order of $10/GPU -- not a ton, but enough to be a significant item on the BoM.

DanNeely - Tuesday, May 19, 2015 - link

I doubt gen 1 HBM will show up on budget cards; and wouldn't hold my breath on gen 2 or 3 either. For the 4xx generation, they're only putting it on the 490 family. 460-480 are going to remain at GDDR5. HBM will presumably kill off GDDR5 for midlevel cards over the next few years; but unless it becomes as cheap as DDR4 it's not going to be a factor on budget GPUs.

SunLord - Tuesday, May 19, 2015 - link

I wonder how this will impact dual gpu cards

Kevin G - Tuesday, May 19, 2015 - link

It'd actually make the board design simpler as the difficult part, the memory traces, are now all in the interposer. The challenge for a dual GPU designs shifts toward power and cooling.

With the board area savings, they could conceptually do a triple GPU card. The problem wouldn't be the designs of such a card but actually getting enough power. Of course they could go out of the PCIe spec and go towards a 525W design for such a triple GPU beast.

testbug00 - Tuesday, May 19, 2015 - link

Naw, they would go for a full water cooled 725W card XD (1W under the electric limits of PCI + 8 + 8 pin iirc) trollololoolololol.

don't think anyone would do a triple GPU card for consumers. The scaling is still pretty bad beyond 2 iirc.

Kevin G - Tuesday, May 19, 2015 - link

The wonderful thing about having all the GPUs on a single board is that incorporating a private high speed bus between chips becomes possible to improve scaling. AMD attempted this before with the 4870X2: http://www.anandtech.com/show/2584/3

However, it was never really utilized as it was disabled via drivers.

Alternatively, multiple GPU dies and memory could just be placed onto the same the interposer. Having a fast and wide bus between GPU dies would then become trivial. Power consumption and more importantly power density, would not be so trivial.