AMD's Radeon HD 4870 X2 - Testing the Multi-GPU Waters

by Anand Lal Shimpi & Derek Wilson on August 12, 2008 12:00 AM EST - Posted in GPUs

These Aren't the Sideports You're Looking For

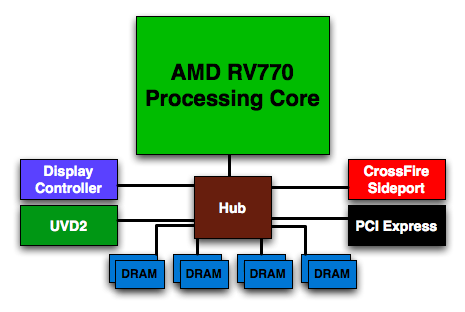

Remember this diagram from the Radeon HD 4850/4870 review?

I do. It was one of the last block diagrams I drew for that article, and I did it at the very last minute and wasn't really happy with the final outcome. But it was necessary because of that little red box labeled CrossFire Sideport.

AMD made a huge deal out of making sure we knew about the CrossFire Sideport, promising that it meant something special for single-card, multi-GPU configurations. It also made sense that AMD would do something like this. After all, the whole point of AMD's small-die strategy is to exploit the benefits of pairing multiple small GPUs. It's supposed to be more efficient than designing a single large GPU, and if you're going to build your entire GPU strategy around it, you had better design your chips from the start to be used in multi-GPU environments - even more so than your competitors.

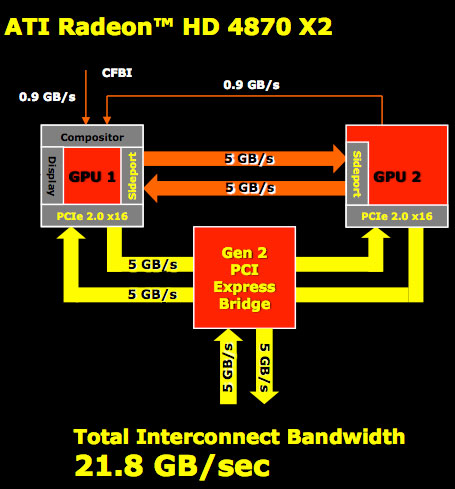

AMD wouldn't tell us much initially about the CrossFire Sideport other than that it meant some very special things for CrossFire performance. We were intrigued, but before we could ever get excited, AMD let us know that its beloved Sideport didn't work. Here's how it would work if it were enabled:

The CrossFire Sideport is simply another high bandwidth link between the GPUs. Data can be sent between them via a PCIe switch on the board, or via the Sideport. The two aren't mutually exclusive; using the Sideport doubles the amount of GPU-to-GPU bandwidth on a single Radeon HD 4870 X2. So why disable it?

According to AMD, the performance impact is negligible: average frame rates don't see a gain, though every now and then you'll see a boost in minimum frame rates. There's also an issue where power consumption could go up enough that you'd run out of power on the two PCIe power connectors on the board. Board manufacturers also have to lay out the additional lanes on the graphics card connecting the two GPUs, which does increase board costs (although ever so slightly).

AMD decided that since there's virtually no performance increase, yet there is an increase in power consumption and board costs, it would make more sense to leave the feature disabled.

The reference 4870 X2 design includes hardware support for the CrossFire Sideport, in case AMD ever wants to enable it via a software update. However, there's no requirement that the GPU-to-GPU connection be included on partner designs. My concern is that in an effort to reduce costs we'll see some X2s ship without the Sideport traces laid out on the PCB, and then if AMD happens to enable the feature in its drivers later on, some X2 users will be left out in the cold.

I pushed AMD for a firm commitment on how it was going to handle future support for Sideport and honestly, right now, it's looking like the feature will never get enabled. AMD should have never mentioned that it ever existed, especially if there was a good chance that it wouldn't be enabled. AMD (or more specifically ATI) does have a history of making a big deal of GPU features that never get used (Truform anyone?), so it's not too unexpected but still annoying.

The lack of anything special on the 4870 X2 to make the two GPUs work better together is bothersome. You would expect a company that has built its GPU philosophy on going after the high end market with multi-GPU configurations to have done something more than NVIDIA when it comes to actually shipping a multi-GPU card. AMD insists that a unified frame buffer is coming; it just needs to make economic sense first. The concern here is that NVIDIA could just as easily adopt AMD's small-die strategy going forward if AMD isn't investing more R&D dollars into enabling multi-GPU specific features than NVIDIA.

The lack of CrossFire Sideport support or any other AMD-only multi-GPU specific features reaffirms what we said in our Radeon HD 4800 launch article: AMD and NVIDIA don't really have different GPU strategies, they simply target different markets with their baseline GPU designs. NVIDIA aims at the $400 - $600 market while AMD shoots for the $200 - $300 market. And both companies have similar multi-GPU strategies; AMD simply needs to rely on its own more.

93 Comments


ajbird - Friday, September 12, 2008 - link

I am not sure I agree with this: "Along with such a title comes a general requirement: if you're dropping over $500 on a graphics card on a somewhat regular basis, you had better have a good monitor - one of many 30" displays comes to mind. Without a monitor that can handle 2560x1600, especially with 2x 4870 X2 cards in CrossFire, all that hard earned money spent on graphics hardware is just wasted."

I have just built a new rig and will not be building anything else for a long time to come. I want something that will play new games now at 1680x1050 with all the eye candy switched on and will still be able to play games in 3 years time at a decent level of IQ. I paid £330 for this card and think it was worth every penny.

I have always splashed out on top end cards (I build a new PC about every 3-4 years) and have always been happy with their life span.

4g63 - Monday, August 18, 2008 - link

Cars and cards are two completely different things. I think that the guy [author] was just humoring you with the comment about energy costs. Why is this even an issue? If you want to burn a few hundred extra watts on your gaming rig there is no logical reason not to. If you want to help this place with energy, support nuke power. Nuke power is clean, safe, plentiful and reliable. Oil prices have soared because of skeptics who have bought into an unsubstantiated construct of social panic. One guy was basically drawing conclusions between the amount of kilowatt hours on his power meter [due to the use of a gaming rig!] to a conglomeration of natural phenomena. Come on. Think for Yourself and Research the Truth if you are going to preach about how we should give up our right to play games as fast and as clearly as we possibly can! God Bless Capitalism.

Zak - Thursday, August 21, 2008 - link

I'd like to see 9800GX2 scores too for comparison. I have been quite disappointed with it: sudden performance dropoff above 1680x1050 due to low bandwidth and only 512MB of memory, I guess, and extremely hot. I'm thinking about getting this new AMD/ATI card as the GeForce 200 series is a joke.

Z.

X1REME - Friday, August 15, 2008 - link

I used to look 2 this site 2 C what is good 2 buy & what not 2 buy. last time I purchased the ASUS P5B Deluxe/WiFi-AP AiLifestyle Series - motherboard recommended by this site. I have looked at many reviews but have not come across one 2 this date, which recommends somfin from AMD, I don't know why, maybe they just have not made anything good enough or maybe every1 expects more from AMD (and that's a good thing). so if som1 can show me personally a review that favours AMD outright as with Intel and nVidia all the time would be a start.

StormEffect - Friday, August 15, 2008 - link

Through the past couple of years I've become more and more of an AMD/ATI fanboy, even though I've tried to stop. Yet I bought a Macbook Pro (By Apple, with Intel and Nvidia parts). All of my desktops are primarily Intel and Nvidia.

But somehow the underdog status has me totally charmed, and this new 4000 series has added to the affection I have for AMD/ATI. I even recently built a new desktop with a 780G chipset and an 8750 tricore Phenom (no GPU except the integrated HD3200, which rocks).

I REALLY <3 AMD/ATI right now. So I am QUITE surprised that everyone here seems so adamant that there is some serious bias in this article. It states the facts, this card is as fast as it gets, but it isn't perfect. So what's wrong with that? When you are at the top you deserve to be looked at critically.

Is everyone just oversensitive at this point? I believe in being nice to others, but does the writer of this review have to drool over this card to validate it? I LOVE AMD/ATI. If there is bias here I'd be freaking out and getting out the picket signs, but I have reread it and I STILL don't see where you are all coming from.

When they reviewed the GTX280 they used words with possibly unkind connotations to describe the massive core, does that make them Nvidia haters too?

I don't see it, will someone point it out to me?

Mr Roboto - Saturday, August 16, 2008 - link

I generally agree with what you're saying except the stopgap garbage 9800GX2 card was not looked at nearly as critically when it was reviewed and the card was at its EOL after only 3 months from the release date. Also Nvidia did not add anything new to that card when compared to the 7950GX2 from 3 years ago. Why does the already obsolete 9800GX2 get a pass?

It's right on the money to state "Hey let's get it going from a hardware level with these single slot dual GPU cards" instead of relying on software profiles that waste money by having to have a dedicated team of programmers working on it to get any significant improvement in performance. Not just the time and money aspect but like Anand said it's not going to last and was supposed to be a temporary thing that has now been the only way either side has shown any progress. But Nvidia is doing the same thing. The bias is noticeable but I have gotten used to it in the last few years since ATI has been out of the game. Nvidia's aggressive "marketing" if you want to call it that, has corrupted nearly all of the major online hardware publications' points of view IMO. AMD is definitely the underdog but with a different sort of negative twist.

far327 - Friday, August 15, 2008 - link

http://phoenix.craigslist.org/evl/sys/790600009.ht... Don't miss out on a steal.

far327 - Friday, August 15, 2008 - link

I'm sorry boys & girls, but this is where my maturity and passion for gaming collide... It is just getting too damned excessive to be able to play a PC game at an HD resolution. I think my PC gaming days are done until Nvidia & AMD decide to work on better cooling methods and lower power consumption. Doesn't anyone here realize the world is in the middle of an energy crisis that is causing food and energy prices to soar??? Video cards today are like muscle cars from the 70's. I am running a E8400 with two 8800 GT Akimbo 1024mb in Sli off a 550watt PSU. I refuse to invest into a market that is more or less careless towards the environment. Waiting on green solutions!!!

Ezareth - Friday, August 15, 2008 - link

That is fine with us. The rest of us who can think for ourselves will continue to advance while you revert back to the stone age. "Green" is a marketing ploy, much like "Organic" food, and Global Warming etc. If we need more electricity you and your kind need to give up your opposition to nuclear power. We have enough uranium in the US to power all of our electricity needs for the next few centuries.

Not everyone buys into that but computers and graphics cards will continue to consume more and more electricity until some technology breakthrough comes through that doesn't involve the use of transistors (like IBM spintronics research).

If you are so concerned about being "green" go live in the woods somewhere, and let the rest of us enjoy our advanced lifestyles.

far327 - Friday, August 15, 2008 - link

It must be nice to completely ignore reality. I suppose you think $5.00 per gallon of gas is just fine too? Nuclear power can't fix that bro. Nor can nuclear power fix that flood that hit the Mississippi or California's massive wild fires, or Katrina. The recent surge in China's economy has allowed 1.8 billion people to drive automobiles. Think that might have a slight effect on our atmosphere? The population of the USA increases by 400,000 yearly. Think of all the consumption done by each person every single day! And our population continues to increase. The difference between you and me is that when you look outside, you see a tree. When I look outside I see a forest. The world is bigger than your computer screen.