AMD Dives Deep On High Bandwidth Memory - What Will HBM Bring AMD?

by Ryan Smith on May 19, 2015 8:40 AM EST

HBM: Wide & Slow Makes It Fast

Given the challenges faced in pushing GDDR5 and similar memory technologies even further, development of high bandwidth memory technology has in the last decade shifted back towards wider, slower interfaces. As serial interfaces reach their limits, parallel interfaces become an increasingly viable alternative. And although they bring with them their own challenges – there’s a reason serial interfaces have been dominant most recently, after all – the ramp up of challenges in further improving serial interfaces has coincided with the development of technologies that make parallel interfaces easier to implement. As a result the pendulum has swung back to parallel interfaces and HBM.

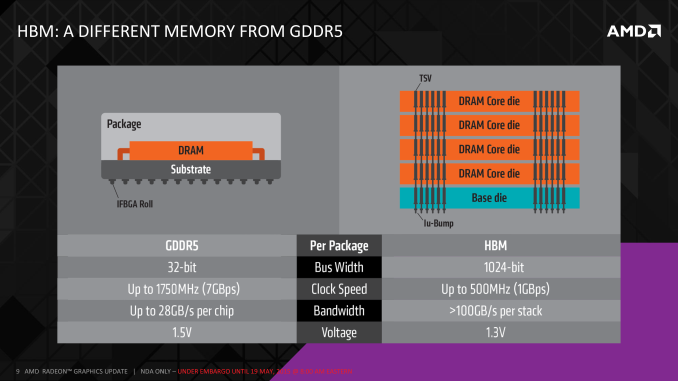

HBM in a nutshell takes the wide & slow paradigm to its fullest. Rather than building an array of high speed chips around an ASIC to deliver 7Gbps+ per pin over a 256/384/512-bit memory bus, HBM at its most basic level involves turning memory clockspeeds way down – to just 1Gbps per pin – but in exchange making the memory bus much wider. How wide? That depends on the implementation and generation of the specification, but the examples AMD has been showcasing so far have involved 4 HBM devices (stacks), each featuring a 1024-bit wide memory bus, combining for a massive 4096-bit memory bus. It may not be clocked high, but when it’s that wide, it doesn’t need to be.
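To put rough numbers on the wide-and-slow trade-off, the sketch below computes theoretical peak bandwidth from bus width and per-pin data rate, using only the figures quoted above. The function name `peak_bandwidth_gbs` is made up for this illustration, and the calculation assumes every pin carries data at the full quoted rate, with no protocol or refresh overhead, so treat the results as upper bounds rather than measured throughput.

```python
def peak_bandwidth_gbs(bus_width_bits: int, gbps_per_pin: float) -> float:
    """Theoretical peak bandwidth in GB/s.

    Assumption: every pin of the bus moves data at the full per-pin
    rate, so bandwidth = width * rate / 8 (bits per byte).
    """
    return bus_width_bits * gbps_per_pin / 8


# GDDR5 at the 7 Gbps/pin rate from the text, across the bus widths it lists.
gddr5 = {width: peak_bandwidth_gbs(width, 7.0) for width in (256, 384, 512)}

# HBM as showcased: 1 Gbps/pin, 1024 bits per stack, 4 stacks.
hbm_per_stack = peak_bandwidth_gbs(1024, 1.0)
hbm_total = 4 * hbm_per_stack

for width, bandwidth in gddr5.items():
    print(f"GDDR5 {width}-bit @ 7 Gbps/pin: {bandwidth:.0f} GB/s")
print(f"HBM 1 stack (1024-bit) @ 1 Gbps/pin: {hbm_per_stack:.0f} GB/s")
print(f"HBM 4 stacks (4096-bit) @ 1 Gbps/pin: {hbm_total:.0f} GB/s")
```

Under these assumptions the 4096-bit HBM configuration comes out ahead of even a 512-bit GDDR5 bus run at 7Gbps per pin, despite each pin running at one seventh of the speed.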

Of course while extra memory bandwidth is nice, the far more interesting part is how HBM delivers this. Although the idea of a wide, slow parallel bus is easy enough to comprehend on paper, implementing it is a whole other matter. A 4096-bit memory bus involves thousands of traces, far more than GDDR5, all of which must be carefully constructed in order to make HBM work. As a result there are a couple of fundamental technologies that are seeing their big (though not necessarily initial) introduction with HBM.

The first and most fundamental matter is how to efficiently route a 4096-bit memory bus. Even the best surface mounting BGA technologies have their limits, and as it is Hawaii was pushing things with its 512-bit GDDR5 memory bus. An even wider bus only makes that harder, inviting issues both with routing such a wide bus on a PCB or chip substrate, and with using BGA to connect a chip to those traces.

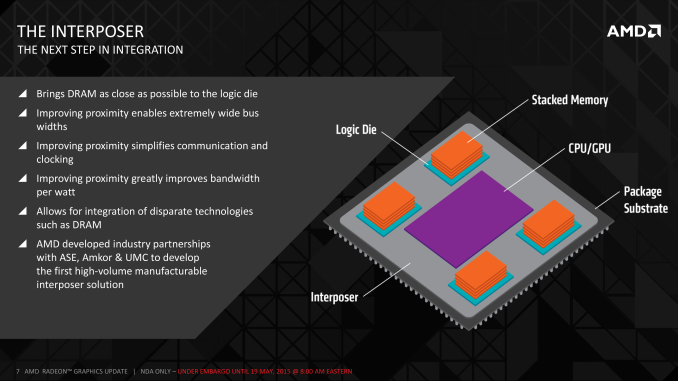

The first part of the solution was to develop something capable of greater density routing, and that something was the silicon interposer. The interposer in its broadest terms is a partially fabbed silicon chip that, instead of being developed into a full ASIC packed with logic, is only developed as far as having metal layers, in order to route signals and power among devices. The interposer in turn works because it exploits some of the core advantages of modern photolithographic processes, allowing for very fine paths to be created that would otherwise not be possible/practical on traditional PCBs and substrates.

Using a silicon interposer solves some of the fundamental problems with HBM, and it provides some ancillary benefits as well. Along with solving the obvious routing issue, the interposer allows for DRAM to be placed very close to an ASIC, but without being placed on top of it (à la package-on-package), which is impractical for high TDP devices like GPUs. Placing DRAM so close to the ASIC avoids the drawbacks of long memory paths: the shorter paths are both simpler to construct and require less power. It also benefits integration, as similar to PoP technology, you can have more of the device’s functionality located on the same package as the ASIC, reducing the number of devices that need to be placed off-package and routed to the ASIC.

Of course the interposer does come with a drawback as well, and that’s cost. While AMD is not talking about costs in great detail – this is a technology deep dive, not an analyst meeting – the fact that the interposer is essentially a very large, partially developed silicon chip means that it’s relatively expensive to produce, especially compared to the very low costs of PCBs and traditional substrates. Mitigating this is the fact that interposers don’t need to go through the most complex and expensive phases of photolithography – the actual front-end lithography – so the cost is only the silicon wafer itself, along with the work required to create the metal layers, with the final interposer only being some 100 microns thick. Furthermore this doesn’t require cutting-edge fabs – old, fully amortized 65nm equipment works quite well – which further keeps the costs down. The end result is that the interposer is still a significant cost, but it is not as bad as it initially seems. This ultimately is why HBM will first be introduced on high margin products like high-end video cards before potentially making its way down to cheaper devices like APUs.

Meanwhile AMD and their vendors will over the long run also benefit from volume production. The first interposers are being produced on retooled 65nm lithographic lines, however once volume production scales up, it will become economical to develop interposer-only lines that are cheaper to operate since they don’t need the ability to offer full lithography as well. Where that cut-off will be is not quite clear at this time, though it sounds like it will happen sooner rather than later.

Looking at the broader picture, in the grand scheme of things the interposer becomes a new layer on a complete chip, sitting between the traditional substrate and any DRAM/ASICs mounted on top of it. Microbumps will connect the DRAM and ASICs to the interposer, and the interposer will then be connected to the substrate, before finally the substrate is connected to its partner PCB. PCB mounting itself will become a bit easier in the process, as there’s no longer a need to route memory traces through the substrate, which means the only remaining connections are data (PCIe bus, etc) and power for the ASIC and DRAM. All the complex routing is essentially localized to occurring at the interposer layer.

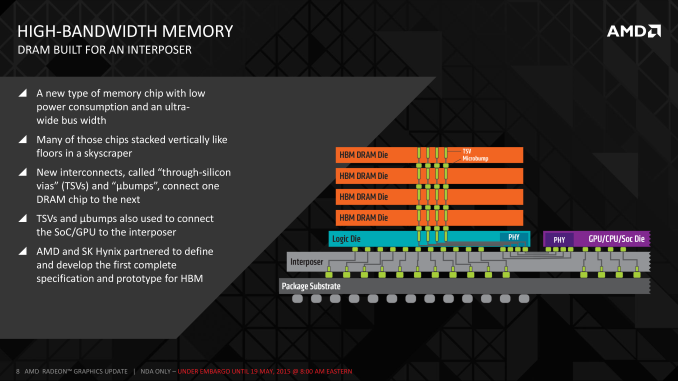

Moving on, the other major technological breakthrough here is the creation of through-silicon vias (TSVs). With the interposer to enable the routing of a dense memory bus, the other issue to solve was the creation of dense memory. The solution to this was to stack multiple memory dies together into a single device/stack, in order to create the single 1024-bit stack, and TSVs are in turn what make this possible.

The reason for stacking DRAM is pretty straightforward: it makes production easier by reducing the DRAM to fewer discrete devices, not to mention it saves space. The challenge here is that you can’t have traditional surface mount connections since the DRAM is stacked, and traditional edge connections (as used in PoP) are neither dense enough nor do they scale well to the kind of stacks HBM would require.

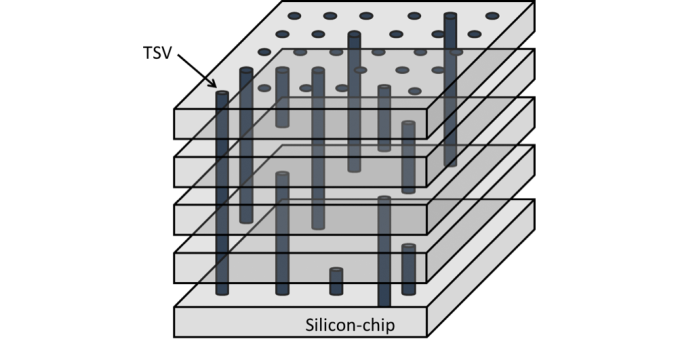

TSVs. Image Courtesy The International Center for Materials Nanoarchitectonics

As a result a means was needed to route DRAM connections through the lower layers of the stack, and this problem was solved with TSVs. Whereas regular vias offer the ability to connect two layers together, TSVs extend this principle by running the vias straight through silicon devices in order to connect layers farther out. The end result is something vaguely akin to DRAM dies surface mounted on top of each other via microbumps, but with the ability to communicate through the layers. From a manufacturing standpoint, between the silicon interposer and TSVs, TSVs are the more difficult technology to master as it essentially combines all the challenges of DRAM fabbing with the challenges of stacking those DRAM dies on top of each other.

Having developed the means to stack DRAM, the final component of an HBM stack is a logic die that lies on the bottom of the stack. Similarly outfitted with TSVs, the logic die is responsible for actually operating the DRAM dies above it, and then handling the operation of the HBM bus between the stack and the ASIC. This actually ends up being a rather interesting development since the net result is more logic added despite the simplicity of the HBM bus, but at the same time thanks to TSVs and the interposer, it’s easier than ever to add that logic.

163 Comments

chizow - Tuesday, May 19, 2015 - link

Nice article Ryan, I think this gets back to some of the general tech deep dives that a lot of people miss on AT, rather than the obligatory item reviews that I know you guys have to put out as well. Always interesting to read about new and upcoming technology, thanks for the read!

This part I think however, on last page needs to be clarified, as it is REALLY important to stay consistent in terminology now that GPU socket and PCB topology is changing:

"By AMD’s own estimate, a single HBM-equipped GPU package would be less than 70mm X 70mm (4900mm2), versus 110mm X 90mm (9900mm2) for R9 290X."

Even by AMD's own slide, that is *PCB area* occupied by either the HBM GPU package, or the GPU + GDDR5 modules. Calling everything a "package" doesn't really fit here and just confuses the issue if we keep the term Package intact, meaning GPU substrate sitting on PCB.

extide - Tuesday, May 19, 2015 - link

No, package is the correct term, as it is a single complete item that attaches to the PCB, including the GPU, RAM, interposer, etc all in one piece. It is not much different than the MCM (Multi Chip Modules) that many manufacturers (Intel for example) have used in the past. Since the memory is all on the package, the PCB area used is the same size as the package itself, in this case.

chizow - Tuesday, May 19, 2015 - link

I agree the HBM package terminology is correct, but I'm not referring to that. I'm referring to the reference that the 290X package size is 110mm x 90mm for R9 290X. That's not very clear, because they are counting the *PCB AREA* on the 290X and using it synonymously with Package.

It would be more clearly stated if it read something like:

"By AMD’s own estimate, the PCB area occupied by a single HBM-equipped GPU package would be less than 70mm X 70mm (4900mm2), versus 110mm X 90mm (9900mm2) PCB area for R9 290X that includes the GPU package and GDDR5 modules."

gamerk2 - Tuesday, May 19, 2015 - link

How much does the production of the Interposer cost? It's obviously going to eat into AMDs margins, which would imply that unless they sell more product, their profits will actually decline. Likewise, I wonder if that extra cost is going to squeeze them on the low end, where they currently have an advantage.

extide - Tuesday, May 19, 2015 - link

Probably on the order of $10/GPU -- not a ton, but enough to be a significant item on the BoM.

DanNeely - Tuesday, May 19, 2015 - link

I doubt gen 1 HBM will show up on budget cards; and wouldn't hold my breath on gen 2 or 3 either. For the 4xx generation, they're only putting it on the 490 family. 460-480 are going to remain at GDDR5. HBM will presumably kill off GDDR5 for midlevel cards over the next few years; but unless it becomes as cheap as DDR4 it's not going to be a factor on budget GPUs.

SunLord - Tuesday, May 19, 2015 - link

I wonder how this will impact dual gpu cards

Kevin G - Tuesday, May 19, 2015 - link

It'd actually make the board design simpler as difficult part, the memory traces, are now all in the interposer. The challenge for a dual GPU designs shifts toward power and cooling.

With the board area savings, they could conceptually do a triple GPU card. The problem wouldn't be the designs of such a card but actually getting enough power. Of course they could go out of the PCIe spec and go towards a 525W design for such a triple GPU beast.

testbug00 - Tuesday, May 19, 2015 - link

Naw, they would go for a full water cooled 725W card XD (1W under the electric limits of PCI + 8 + 8 pin iirc) trollololoolololol.

don't think anyone would do a triple GPU card for consumers. The scaling is still pretty bad beyond 2 iirc.

Kevin G - Tuesday, May 19, 2015 - link

The wonderful thing about having all the GPUs on a single board is that incorporating a private high speed bus between chips becomes possible to improve scaling. AMD attempted this before with the 4870X2: http://www.anandtech.com/show/2584/3

However, it was never really utilized as it was disabled via drivers.

Alternatively, multiple GPU dies and memory could just be placed onto the same interposer. Having a fast and wide bus between GPU dies would then become trivial. Power consumption and more importantly power density, would not be so trivial.