Intel & Cray Land Contract for 2 Dept. of Energy Supercomputers

by Ryan Smith on April 9, 2015 5:30 PM EST

Late last year the United States Department of Energy kicked off the awards phase of their CORAL supercomputer upgrade project, which would see three of the DoE’s biggest national laboratories receive new supercomputers for their ongoing research work. The first two supercomputers, Summit and Sierra, were awarded to the IBM/NVIDIA duo for Oak Ridge National Laboratory and Lawrence Livermore National Laboratory respectively. Following up on that, the final part of the CORAL program is being awarded today, with Intel and Cray receiving orders to build 2 new supercomputers for Argonne National Laboratory.

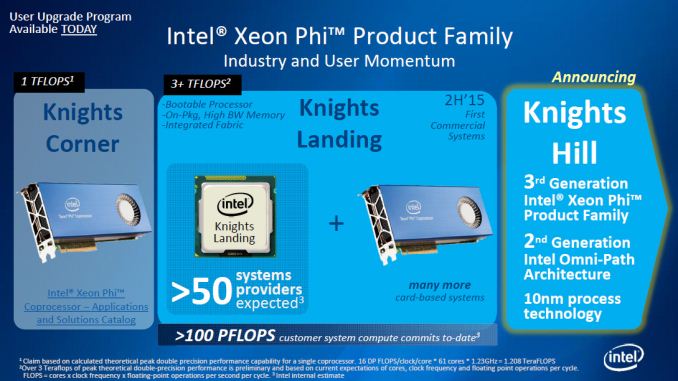

The flagship of these two computers is Aurora, a next-generation Cray “Shasta” supercomputer that is scheduled for delivery in 2018. Designed to deliver 180 PetaFLOPS of peak compute performance, Aurora will be heavily leveraging Intel’s suite of HPC technologies. Primarily powered by a future version of Intel’s Xeon Phi accelerators – likely the 10nm-fabbed Knights Hill – Aurora will be combining the Xeon Phi with Intel’s Xeon CPUs (Update: Intel has clarified that the Xeons are for management purposes only), an unnamed Intel developed non-volatile memory solution, and Intel’s high-speed and silicon photonics-driven Omni-Path interconnect technology. Going forward, Intel is calling this future setup their HPC scalable system framework.

At 180 PFLOPS of performance, Aurora will be in the running for what will be the world’s fastest supercomputer. Whether it actually takes the crown will depend on where exactly ORNL’s Summit supercomputer ends up – it’s spec’d for between 150 PFLOPS and 300 PFLOPS – with Aurora exceeding the minimum bounds of that estimate. All told this makes Aurora 18 times faster than its predecessor, the 10 PFLOPS Mira supercomputer. Meanwhile Aurora’s peak power consumption of 13MW is also 2.7 times Mira’s, which works out to an overall increase in energy efficiency of 6.67x.

| US Department of Energy CORAL Supercomputers | ||||||

| Aurora | Theta | Summit | Sierra | |||

| CPU Architecture | Intel Xeon (Management Only) |

Intel Xeon (Management Only) |

IBM POWER9 | IBM POWER9 | ||

| Accelerator Architecture | Intel Xeon Phi (Knights Hill?) | Intel Xeon Phi (Knights Landing) | NVIDIA Volta | NVIDIA Volta | ||

| Performance (RPEAK) | 180 PFLOPS | 8.5 PFLOPS | 150 - 300 PFLOPS | 100+ PFLOPS | ||

| Power Consumption | 13MW | 1.7MW | ~10MW | N/A | ||

| Nodes | N/A | N/A | 3,400 | N/A | ||

| Laboratory | Argonne | Argonne | Oak Ridge | Lawrence Livermore | ||

| Vendor | Intel + Cray | Intel + Cray | IBM | IBM | ||

The second of the supercomputers is Theta, which is a much smaller scale system intended for early production system for Argonne, and is scheduled for delivery in 2016. Theta is essentially a one-generation sooner supercomputer for further development, based around a Cray XC design and integrating Intel Xeon processors along with Knights Landing Xeon Phi processors. Theta in turn will be much smaller than Aurora, and is scheduled to deliver a peak performance of 8.5 PFLOPS while consuming 1.7MW of power.

The combined value of the contract for the two systems is over $200 million, the bulk of which is for the Aurora supercomputer. Interestingly the prime contractor for these machines is not builder Cray, but rather Intel, with Cray serving as a sub-contractor for system integration and manufacturing. According to Intel this is the first time in nearly two decades that they have been awarded the prime contractor role in a supercomputer, their last venture being ASCI Red in 1996. Aurora in turn marks the latest in a number of Xeon Phi supercomputer design wins for Intel, joining existing Intel wins such as the Cori and Trinity supercomputers. Meanwhile for partner Cray this is also the first design win for their Shasta family of designs.

Finally, Argonne and Intel have released a bit of information on what Aurora will be used for. Among fields/tasks planned for research on Aurora are: battery and solar panel improvements, wind turbine design and placement, improving engine noise & efficiency, and biofuel research, including more effective disease control for biofuel crops.

Source: Intel

35 Comments

View All Comments

SarahKerrigan - Friday, April 10, 2015 - link

NVlink is also memory-coherent with the host - no translation required, unlike PCIe. This results in *very* large latency reductions when talking to the attached accelerators.trsohmers - Friday, April 10, 2015 - link

You seem to be confusing GB (Gigabyte) and Gigabit. PCIe Gen 4 is 1969MB/s (1.9GB/s) per lane, with max of 16 lanes per interface... giving you almost 32GB/s. NVLINK is also 16 lanes, but gives a full interface speed of 80GB/s, or 5GB/s per lane.Intel's "competitor" is their socket-socket Quick Path Interconnect interface, which is a parallel bus (unlike PCIe and NVLINK, which are serial) and has speed ranging from 32GB/s to ~80 GB/s in chips today. Unlike Kevin G saying in the comments section here, Intel QPI is *not* used for internal chip communication, it is used for the uncore, motherboard, and socket-socket communication.

iAPX - Thursday, April 16, 2015 - link

This is *NOT* about bandwidth, it's all about latency, nVidia plan to launch an incredible offer with a really low latency to enable efficient communication between GPU on a server, stay tuned ;)tuxRoller - Friday, April 10, 2015 - link

Wow, are you that much of a fanboy? I gave credit to both.Power9 should be pretty awesome, despite being on a larger node than intel.

A single chip supports almost 100 threads, and these threads are a good deal heavier than those than make up a wave (also much finer grained control).

Lastly, capi and nvlink will allow for an HSA-type workload.

For embarrasingly parallel tasks pascal will be awesome, and for more dynamic workloads it'll be far better than maxwell.

extide - Monday, April 13, 2015 - link

Where are you reading about thread counts on POWER9 ? -- POWER8 supports 96 threads in a single socket (for the big version) -- so POWER9 may end up being even more.It's difficult to compare those big nasty power chips to xeons though as they typically run in much higher TDP ranges.

Jalek99 - Thursday, April 9, 2015 - link

There'll be many in the government wanting to use it for decryption of the 14 years of data they've gathered on everyone, foreign and domestic. Once the communications are intercepted and stored, the data-mining begins. Everything a person does becomes charted on a graph, financial transactions or travel or anything. Thus, as data like bookstore receipts, bank statements, and commuter toll records flow in, the NSA is able to paint a more and more detailed picture of someone’s life.Have fun with convincing people like Hatch, McCain, and Graham that what Nixon did, using such information for political purposes, shouldn't be accomodated.

redviper - Thursday, April 9, 2015 - link

There will be no one reasonable who would possibly use Aurora or Summit for decryption tasks. For one thing these machines haven't been co-designed with that in mind. These machines aren't on classified networks and it is pretty likely that the NSA has something better suited than either of these giant machines. Plus anyone logged into either machine can see what else is in the queue. This is just stupid paranoia and this kind of crap undermines very real issues with the surveillance state.Jalek99 - Thursday, April 9, 2015 - link

It's largely quoted text from the Wired article that came a year before Snowden, that many similarly dismissed as baseless paranoia.redviper - Friday, April 10, 2015 - link

You sound like an anti-vaxxer, unable to digest information that you don't agree with.tuxRoller - Friday, April 10, 2015 - link

Logic fail.Just because one very specific conspiracy theory is true doesn't make the rest any more or less likely.