DDR4 Haswell-E Scaling Review: 2133 to 3200 with G.Skill, Corsair, ADATA and Crucial

by Ian Cutress on February 5, 2015 10:10 AM EST

Conclusions on Haswell-E DDR4 Scaling

When we first start testing for a piece, it is very important to keep an open mind and not presuppose any end results. Ideally we would go double blind, but in the tech review industry that is not always possible. Our DDR3 testing showed that, outside of integrated graphics, there are only a few edge cases where upgrading to faster memory makes sense, but that avoiding the trap of slow base memory can have an overall impact on the system, as long as XMP is enabled of course.

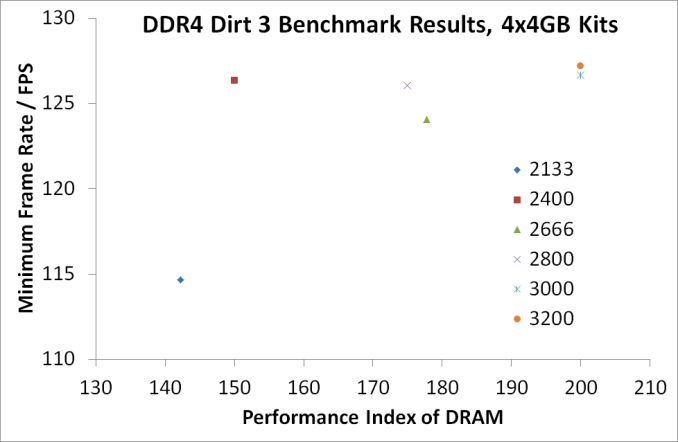

Because Haswell-E does not have any form of integrated graphics, the results today are fairly muted. In some ways they mirror the results we saw on DDR3, but are more indicative of the faster frequency memory at hand.

For the most part, the base advice is: aim for DDR4-2400 CL15 or better.

DDR4-2133 CL15, which has a performance index of 142, comes out anywhere from 3% to 10% slower than the rest of the field in a few benchmarks. Cases in point include video conversion (Handbrake at 4K60), fluid dynamics, complex web code and minimum frame rates in certain games.
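As a rough illustration of the performance index quoted above, the sketch below assumes the index is simply the rated data rate divided by the CAS latency, which reproduces the 142 figure for DDR4-2133 CL15. The function name and the short list of kits are illustrative only and are not taken from our test scripts.

```python
def performance_index(data_rate: int, cas_latency: int) -> float:
    """Assumed definition: rated data rate (e.g. 2133) divided by CAS latency."""
    return data_rate / cas_latency


# Only the two configurations named in the text are used here.
kits = [(2133, 15), (2400, 15)]

for rate, cl in kits:
    pi = performance_index(rate, cl)
    print(f"DDR4-{rate} CL{cl}: performance index = {pi:.0f}")
# DDR4-2133 CL15: performance index = 142
# DDR4-2400 CL15: performance index = 160
```

Under this assumed definition, a kit scores higher either by raising its data rate or by tightening its CAS latency, which is why the base advice pairs a frequency with a CL figure.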

For professional users, we saw a number of benefits moving to the higher memory ranges, although the performance gains were only very minor. Cinebench R15 gained 2%, 7-zip gained 2% and our fluid dynamics Linux benchmark was up 4.3%. The only benchmark where 2800+ memory made a truly significant difference was Redis, a scalable in-memory key-value database. Only users with specific needs would need to consider this.

There is one other group of individuals where super-high frequency memory on Haswell-E makes sense – the sub-zero overclockers. For these people, relying on the best synthetic test results can mean the difference between #5 and #20 in the world rankings. The only issue here is that these individuals or teams are often seeded the best memory already. This relegates high end memory sales to system integrators who can sell it at a premium.

Personally, DDR4 offers three elements of interest. First is the design: finding good looking memory to match a system that you might want to show off can be a critical element when looking at components. Second is density, and given that Haswell-E currently supports four memory channels at two modules per channel, if we get a whiff of 16GB modules it could be a boon for high memory capacity prosumers. The third element is integrated graphics, where faster memory can greatly improve performance. Unfortunately we will have to wait for the industry to catch up on that one.
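To put a number on the density point, here is a minimal sketch of the arithmetic, using the four channels and two modules per channel stated above. The 8GB figure is only an assumed point of comparison, and the names are hypothetical.

```python
CHANNELS = 4             # Haswell-E memory channels (from the text)
MODULES_PER_CHANNEL = 2  # modules per channel (from the text)


def max_capacity_gb(module_size_gb: int) -> int:
    """Total capacity with every slot filled by modules of the given size."""
    return CHANNELS * MODULES_PER_CHANNEL * module_size_gb


print(max_capacity_gb(8))   # 64  (assumed comparison point)
print(max_capacity_gb(16))  # 128 (the 16GB modules discussed above)
```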

At this point in time, our DDR4 testing is not yet complete. Over the next couple of weeks, we will be reviewing these memory kits individually, comparing results, pricing, styling and overclockability for what it is worth. Our recent array of DDR4-3400 news from Corsair and G.Skill has also got some of the memory manufacturers interested in seeing even higher performance kits on the test bed, so we are looking forward to that. I also need to contact Mushkin and Kingston and see if those CL12/CL13 memory kits could pose a threat to the status quo.

Edit: Mushkin actually emailed me this morning about getting some product for review.

We have a couple of updates for our testing suite in mind as well, particularly for the gaming element, and are waiting for new SSDs and GPUs to arrive before switching some of our game tests over to something more recent, perhaps at a higher resolution as well. When that happens, we will post some more numbers to digest.

120 Comments


jabber - Friday, February 6, 2015 - link

Well I've added into my T5400 workstation USB3.0, eSATA, 7870 GPU, SSHD and SSD. I haven't added SATA III as it's way too costly for a decent card, plus even though I can only push 260MBps from a SSD, with 0.1ms access times I really can't notice in real world. The main chunk of the machine only cost around £200 to put together.

Striker579 - Friday, February 6, 2015 - link

omg those retro color mb's....good times

Wardrop - Saturday, February 7, 2015 - link

Wow, how did you accidentally insert your motherboard model in the middle of the word "provide"? Quite an impressive typo, lol

msroadkill612 - Saturday, September 2, 2017 - link

To be the devil's advocate, many say there are few downsides for most using 8 lanes vs 16 lanes for the gpu. If an nvme ssd means reducing to 8 lanes for the gpu to free some lanes, I would be tempted.

FlushedBubblyJock - Sunday, February 15, 2015 - link

Core 2 is getting weak - right click and open task manager then see how often your quad is maxed at 100% usage (you can minimize and check the green rectangle by the clock for percent used).

That's how to check it - if it's hammered it's time to sell it and move up. You might be quite surprised what a large jump it is to Sandy Bridge.

blanarahul - Thursday, February 5, 2015 - link

TOTALLY OFF TOPIC but this is how Samsung's current SSD lineup should be:

850: 120 GB, 250 GB TLC with TurboWrite

850 Pro: 128 GB, 256 GB MLC

850 EVO: 500/512 GB, 1000/1024 GB TLC w/o TurboWrite

Because:

a) 500 GB and 1000 GB 850 EVOs don't get any speed benefit from TurboWrite.

b) 512/1024 GB PRO has only 10 MB/s sequential read, 2K IOPS and 12/24 GB capacity advantage over 500/1000 GB EVO. Sequential write speed, advertised endurance, random write speed, features etc. are identical between them.

c) Remove TurboWrite from 850 EVO and you get a capacity boost because you are no longer running TLC NAND in SLC mode.

Cygni - Thursday, February 5, 2015 - link

Considering what little performance impact these memory standards have had lately, DDR2 is essentially just as useful and relevant as the latest stuff... with the added advantage of the fact that you already own it.

FlushedBubblyJock - Sunday, February 15, 2015 - link

If you screw around long enough on core 2 boards with slight and various cpu OC's with differing FSB's and resulting memory divisors and timings with mechanical drives present, you can sometimes produce an enormous performance increase and reduce boot times massively - the key seems to have been a differing sound in the speedy access of the mechanical hard drive - though it often coincided with memory access time but not always.

I assumed and still do assume it is an anomaly in the exchanges on the various buses where cpu, ram, harddrive, and the north and south bridges timings just happen to all jibe together - so no subsystem is delayed waiting for some other overlap to "re-access".

I've had it happen dozens of times on many differing systems but never could figure out any formula and it was always just luck goofing with cpu and memory speed in the bios.

I'm not certain if it works with ssd's on core 2's (socket 775 let's say) - though I assume it very well could but the hard drive access sound would no longer be a clue.

retrospooty - Thursday, February 5, 2015 - link

I love reviews like this... I will link it and keep it for every time some newb doof insists that high bandwidth RAM is important. We saw almost no improvement going from DDR400 cas2 to DDR3-1600 CAS10 now the same to DDR4 3000+ CAS freegin 80 LOL

menting - Thursday, February 5, 2015 - link

depends on usage. for applications that require high total bandwidth, new generations of memory will be better, but for applications that require short latency, there won't be much improvement due to physical restraints of light speed