NVIDIA Tegra X1 Preview & Architecture Analysis

by Joshua Ho & Ryan Smith on January 5, 2015 1:00 AM EST

Posted in: SoCs, Arm, Project Denver, Mobile, 20nm, GPUs, Tablets, NVIDIA, Cortex A57, Tegra X1

GPU Performance Benchmarks

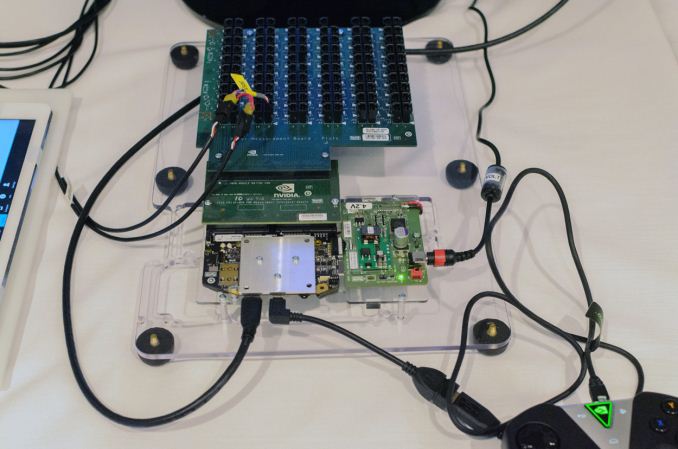

As part of today’s announcement of the Tegra X1, NVIDIA also gave us a short opportunity to benchmark the X1 reference platform under controlled circumstances. In this case NVIDIA had several reference platforms plugged in and running, pre-loaded with various benchmark applications. The reference platforms themselves had a simple heatspreader mounted on them, intended to replicate the ~5W heat dissipation capabilities of a tablet.

The purpose of this demonstration was two-fold: first, to show that X1 was up and running and capable of NVIDIA’s promised features, and second, to showcase the strong GPU performance of the platform. NVIDIA also had an iPad Air 2 on hand for power testing, running Apple’s latest and greatest SoC, the A8X. NVIDIA has made it clear that they consider Apple the SoC manufacturer to beat right now, as A8X’s PowerVR GX6850 GPU is the fastest among currently shipping SoCs.

It goes without saying that the results should be taken with an appropriate grain of salt until we can get Tegra X1 back to our labs. However, we saw all of the testing first-hand, and as best we can tell NVIDIA’s tests were sincere.

NVIDIA Tegra X1 Controlled Benchmarks

| Benchmark | A8X (AT) | K1 (AT) | X1 (NV) |
| --- | --- | --- | --- |
| BaseMark X 1.1 Dunes (Offscreen) | 40.2fps | 36.3fps | 56.9fps |
| 3DMark 1.2 Unlimited (Graphics Score) | 31781 | 36688 | 58448 |
| GFXBench 3.0 Manhattan 1080p (Offscreen) | 32.6fps | 31.7fps | 63.6fps |

For benchmarking, NVIDIA had BaseMark X 1.1, 3DMark Unlimited 1.2 and GFXBench 3.0 up and running. Our X1 numbers come from the benchmarks we ran as part of NVIDIA’s controlled test, while the A8X and K1 numbers come from our Mobile Bench.

NVIDIA’s stated goal with X1 is to (roughly) double K1’s GPU performance, and while these controlled benchmarks for the most part don’t make it quite that far, X1 is still a significant improvement over K1. NVIDIA does meet their goal under Manhattan, where performance is almost exactly doubled, while 3DMark and BaseMark X increased by roughly 59% and 57% respectively.
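For readers who want to recheck the scaling figures, the following is a minimal sketch that recomputes the X1-over-K1 gains from the table. The scores are copied from the table; the helper name `percent_gain` and the dictionary layout are our own illustrative choices, not part of any benchmark tool.

```python
# Scores copied from the benchmark table: (K1, X1) for each test.
# K1 numbers come from Mobile Bench, X1 numbers from NVIDIA's controlled test.
results = {
    "BaseMark X 1.1 Dunes": (36.3, 56.9),            # fps
    "3DMark 1.2 Unlimited": (36688, 58448),          # graphics score
    "GFXBench 3.0 Manhattan 1080p": (31.7, 63.6),    # fps
}


def percent_gain(old, new):
    """Percentage improvement of `new` over `old` (hypothetical helper)."""
    return (new / old - 1.0) * 100.0


# Gain of X1 over K1 for every benchmark in the table.
gains = {name: percent_gain(k1, x1) for name, (k1, x1) in results.items()}

for name, gain in gains.items():
    print(f"{name}: X1 is {gain:.1f}% faster than K1")
```

A gain of 100% corresponds to the doubling NVIDIA is targeting, which only Manhattan reaches in this data set.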

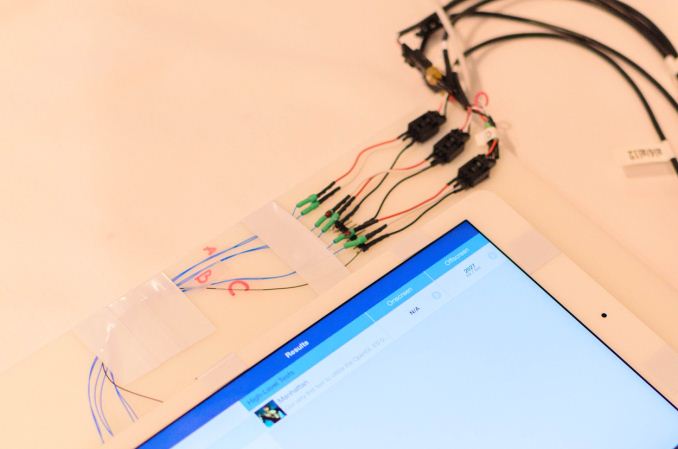

Finally, for power testing NVIDIA had an X1 reference platform and an iPad Air 2 rigged to measure the power consumption from the devices’ respective GPU power rails. The purpose of this test was to showcase that, thanks to its energy optimizations, X1 is capable of delivering the same GPU performance as the A8X GPU while drawing significantly less power; in other words, that X1’s GPU is more efficient than A8X’s GX6850. To be clear, these are just GPU power measurements and not total platform power measurements, so this won’t account for CPU differences (e.g. A57 versus Enhanced Cyclone) or the power impact of LPDDR4.

Top: Tegra X1 Reference Platform. Bottom: iPad Air 2

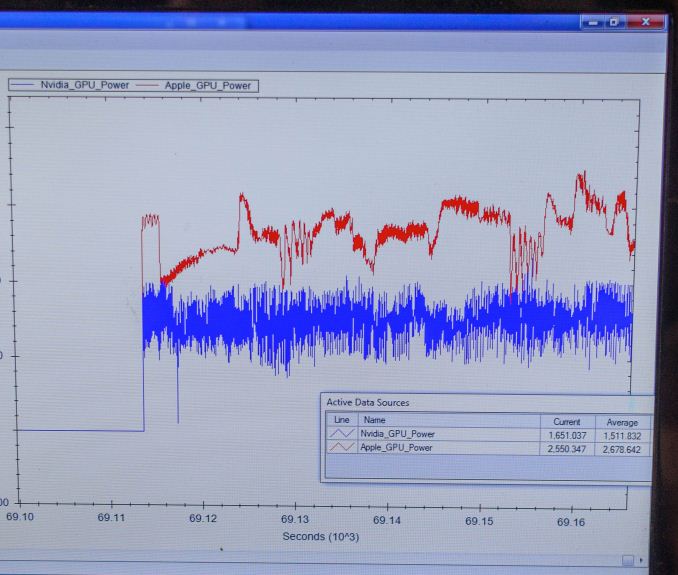

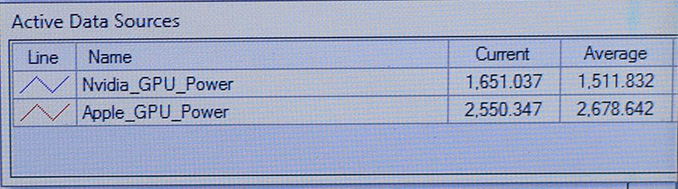

For power testing, NVIDIA ran Manhattan 1080p (offscreen) with X1’s GPU underclocked to match the performance of the A8X at roughly 33fps. The accompanying screenshots show the average power consumption (in watts) for the X1 and A8X respectively.

NVIDIA’s tools show the X1’s GPU averages 1.51W over the run of Manhattan. Meanwhile the A8X’s GPU averages 2.67W, over a watt more for otherwise equal performance. This test is especially notable since both SoCs are manufactured on the same TSMC 20nm SoC process, which means the gap cannot be attributed to a process advantage and instead reflects the energy efficiency of the two GPU implementations.
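To put the two power figures on a common footing, here is a rough sketch that converts them into frames per watt and energy per frame. It assumes both GPUs were held at exactly 33fps, whereas the test only matched them at roughly that rate, and it covers GPU-rail power only. The variable and function names are illustrative.

```python
# Figures quoted in the article: GPU-rail power only, from NVIDIA's tools.
# ASSUMPTION: both GPUs ran at exactly 33 fps; the test matched them "roughly".
MATCHED_FPS = 33.0
X1_WATTS = 1.51
A8X_WATTS = 2.67


def fps_per_watt(fps, watts):
    """Frames rendered per second for each watt drawn."""
    return fps / watts


def joules_per_frame(fps, watts):
    """Energy spent on one frame (watts are joules per second)."""
    return watts / fps


x1_efficiency = fps_per_watt(MATCHED_FPS, X1_WATTS)
a8x_efficiency = fps_per_watt(MATCHED_FPS, A8X_WATTS)

power_gap = A8X_WATTS - X1_WATTS      # extra watts drawn by the A8X GPU
power_ratio = A8X_WATTS / X1_WATTS    # A8X GPU power relative to X1

print(f"X1:  {x1_efficiency:.1f} fps/W, "
      f"{joules_per_frame(MATCHED_FPS, X1_WATTS) * 1000:.0f} mJ/frame")
print(f"A8X: {a8x_efficiency:.1f} fps/W, "
      f"{joules_per_frame(MATCHED_FPS, A8X_WATTS) * 1000:.0f} mJ/frame")
print(f"A8X GPU draws {power_gap:.2f} W more ({power_ratio:.2f}x the X1 GPU)")
```

Because the frame rate is the same on both sides, the efficiency ratio reduces to the ratio of the two power figures, so the result is not sensitive to the exact frame rate assumed.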

There are a number of other variables we’ll ultimately need to take into account here, including clockspeeds, relative die area of the GPU, and total platform power consumption. But assuming NVIDIA’s numbers hold up in final devices, X1’s GPU is looking very good out of the gate – at least when tuned for power over performance.

194 Comments


chizow - Monday, January 5, 2015 - link

Nvidia is only catching up on process node, because what they've shown is when comparing apples to apples:

1) They have a much faster custom 64-bit CPU (A8X needed 50% more CPU to edge Denver K1)

2) They have a much faster GPU architecture (A8X also needed 50% more GPU cores to edge Denver K1, but get destroyed by Tegra X1 on the same 20nm node).

As we can see, once it is an even playing field at 20nm, A8X isn't going to be competitive.

GC2:CS - Monday, January 5, 2015 - link

They just postponed their "much faster custom 64-bit CPU" in favor of off the shelf design and compared to A8X is much higher clocked.

A8X has just 33% more "cores" than K1 and again the GXA6850 GPU is probably miles under the ~1GHz clockspeed that nvidia targets.

And what's wrong with using a wider CPU/GPU ?

And yeah Tegra X1 is up to 2x faster than A8X, but considering it also runs at the same power as K1, it is not a lot more efficient.

chizow - Monday, January 5, 2015 - link

How do you get only 33% for A8X? A8 = 2 core, Denver K1 = 2 core, A8X = 3 core. 1/2 = 50% increase.

Same for A8X over A8. GPU cores went from 4 to 6, again, 2/4 = 50% increase. Total transistors went from 2Bn to 3Bn, again 50% increase.

In summary, Apple fully leveraged 20nm advantage to match Denver K1 GPU and edge in CPU (still losing in single-core) using a brute-force 50% increase in transistors and functional units.

Obviously they won't be able to pull the same rabbit out of the hat unless they go to FinFET early, which is certainly possible, but then again, it's not really a magic trick when you pay a hefty premium for early access to the best node is it?

Bottom line is Nvidia is doing more on the same process node as Apple, simple as that, and that's nothing to be ashamed of from an engineering standpoint.

GC2:CS - Monday, January 5, 2015 - link

A8X got 8 GPU clusters. And I still can't get your idea, you think that A8X is worse because it's brute force ~ 50% faster ? Yeah it is brute force, but I don't know how can you perceive that as a bad thing.

They will certainly try to push finfet and rather hard I think.

And how can you say that nvidia is doing more on the same node while boasting how apple is the one who is doing more and how it's bad just above ?

chizow - Monday, January 5, 2015 - link

Wow A8X is 8 clusters and doesn't even offer a 100% increase over A8? Even worse than I thought, I guess I missed that update at some point over the holiday season.

The point is that in order to match the "disappointing" Denver K1, Apple had to basically redouble their efforts to produce a massive 3Bn transistor SoC while fully leveraging 20nm. You do understand that's really not much of an accomplishment when you are on a more advanced process node right?

Sure Apple may push FinFET hard, but from everything I've read, FinFET will be more widely available for ramp compared to the problematic 20nm, which was always limited capacity outside of the premium allocation Apple pushed for (since they obviously needed it to distinguish their otherwise unremarkable SoCs).

It should be obvious why I am saying Nvidia is doing more on the same process node, because when you compare apple to Apples, Nvidia's chip on the 28nm node is more than competitive with the 20nm Apple chips, and when both are on 20nm, it's going to be no contest in Nvidia's favor.

Logical conclusion = Nvidia is doing more on the same process node, ie. outperforming their competition when the playing field is leveled.

lucam - Tuesday, January 6, 2015 - link

Chizow the more I read and the more I laugh. You compare clusters with cores they have different technologies and you still state this crap. Maybe would be better to compare how much both of them are capable in terms of GFLOPS at same frequency? This is what counts. Regarding your absurd discussion of processing node, since the Nvidia chip is so efficient, I look forward to see it in smartphones.

aenews - Saturday, January 24, 2015 - link

The A8X isn't on any phones either. In fact, they left it out of both iPhones AND the iPad Mini.

And take in mind, even the Qualcomm Snapdragon 805 had few design wins... only the Kindle Fire HDX for tablets. They scored two major phones (Nexus 6 and Note 4) but the other manufacturers haven't used it.

squngy - Monday, January 5, 2015 - link

He did not say it is worse, his whole point is that Apple most likely will not be able to do the same thing again.

tipoo - Tuesday, May 17, 2016 - link

Core counts are irrelevant across GPU architectures, they're just different ways of doing something.

If someone gets to the same power draw, performance, and die size with 100 cores as someone else does with 10, what does it matter?

Jumangi - Monday, January 5, 2015 - link

Uh the A8 is an actual product that exists and wait for it you can actually BUY a product with it in there. This is another mobile paper launch by Nvidia with the consumer having no idea when or where it will actually be. The only thing real enthusiasts should care about is the companies that can actually deliver parts people can actually use. Nvidia still has a loooong ways to go in that department. Paper specs mean shit.